AI model development process mainly comprises five stages: model design, feature engineering, model training, model validation, and model fusion.

Model design

In the model design phase, product managers need to consider whether the model should be built for the current business, whether the team has the capability to build it, how to define the target variable, what data sources to use, and how to obtain data samples, including whether to use random sampling or stratified sampling.

The most important tasks in this phase are defining the model's target variable and selecting the data samples. Different target variables determine the model's application scenarios and expected business outcomes. The model is trained on the selected samples, so sample selection determines the final model performance. In other words, samples form the foundation of the model and should be chosen according to the model objective and the actual business scenario.

Feature engineering

Model building can be understood as extracting features from sample data that well describe the data, then using those features to build a model that predicts unknown data effectively. Feature engineering is a critical part of model construction. Good feature selection can directly improve model performance and reduce implementation complexity.

Too many or too few features or data can affect model fitting, causing overfitting or underfitting. With high-quality features, a model can achieve good performance even if its parameters are not optimal, reducing time spent tuning parameters and lowering implementation complexity.

Data and features determine the upper bound of machine learning, while models and algorithms only approximate that bound. Algorithm engineers typically spend about 60% of the total model development time on feature engineering.

Feature engineering is the process of converting inputs into numerical representations such as vectors, matrices, or tensors. For example, a person's credit status can be represented using features like age, education, income, and number of credit cards. Converting these attributes into numerical features and using them to judge creditworthiness is an instance of feature engineering.

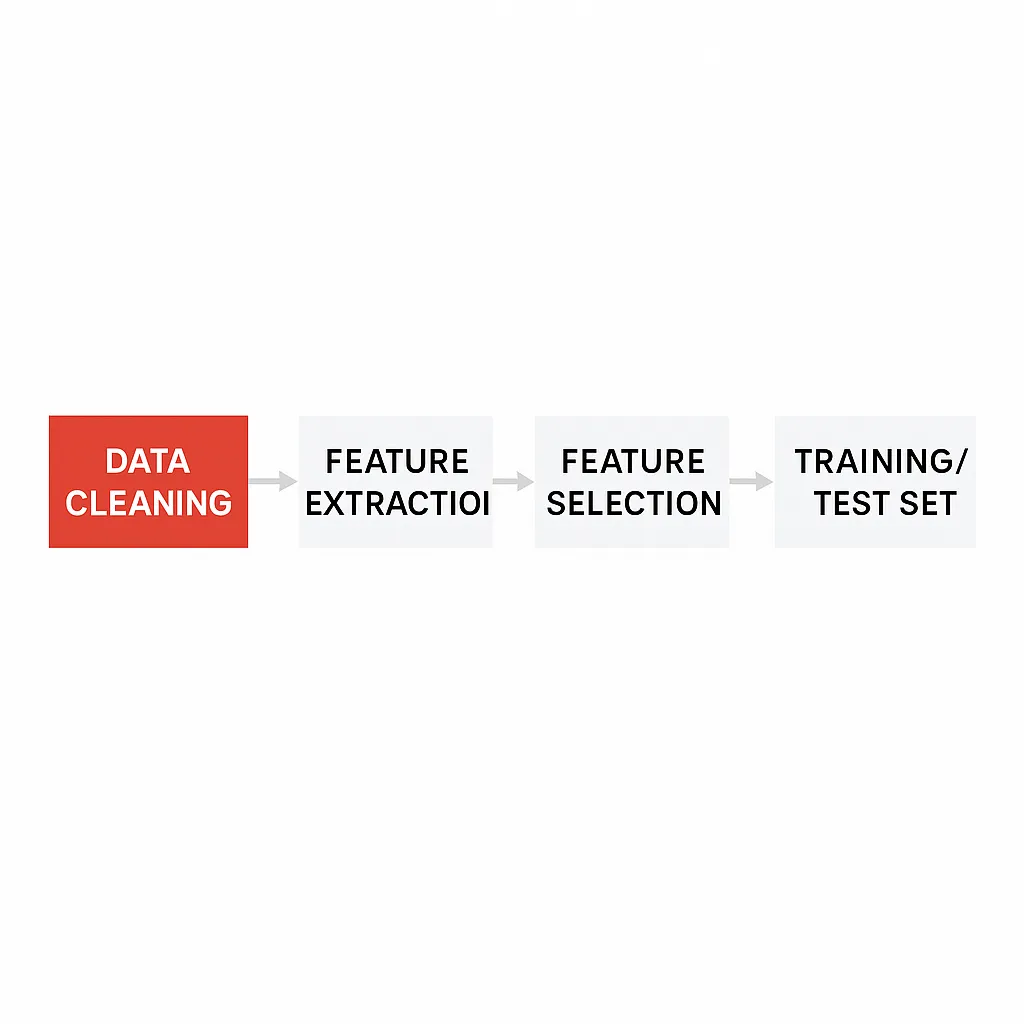

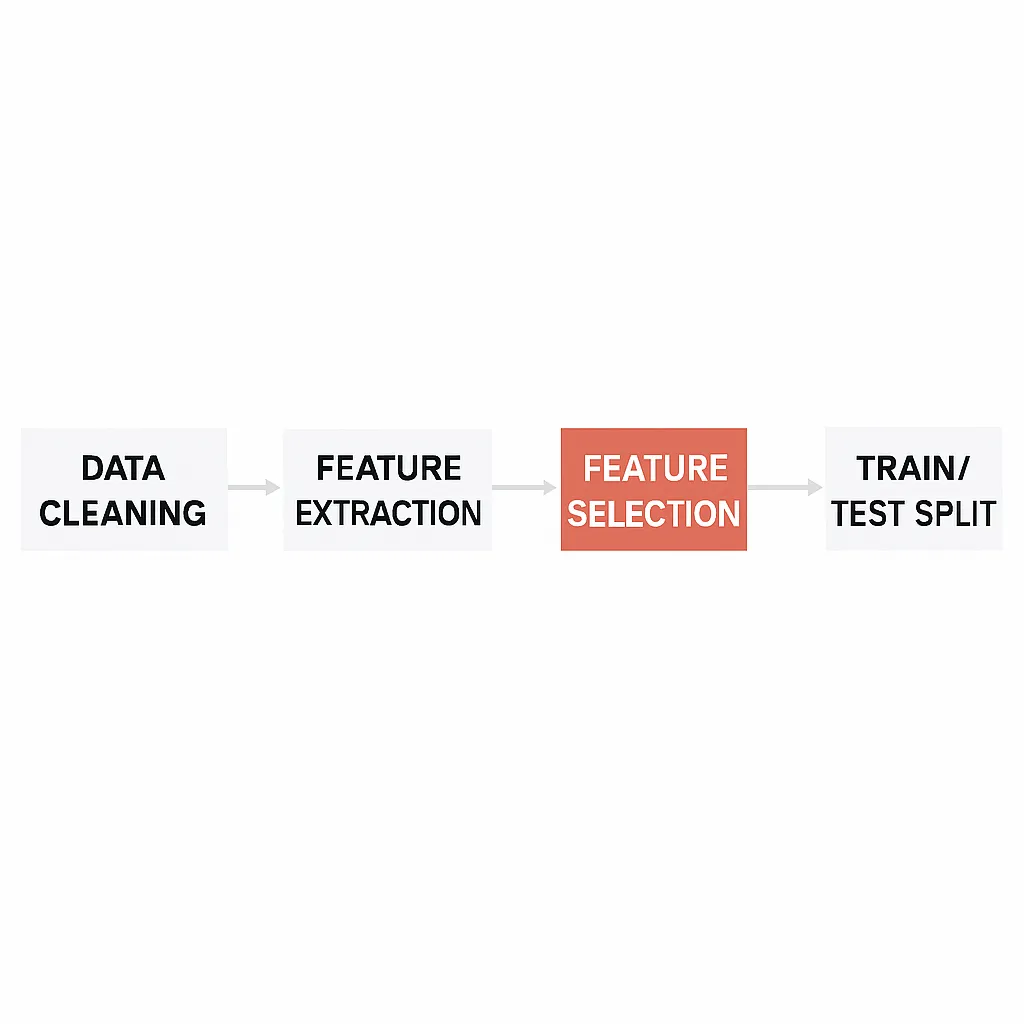

The typical feature engineering workflow is: data cleaning, feature extraction, feature selection, and finally generating the training and test sets.

1. Data cleaning

At the start of feature engineering, algorithm engineers usually inspect data characteristics via data visualization, checking distributions, presence of outliers, and whether features follow assumed distributions. Then they perform data cleaning to address issues such as missing values, outliers, class imbalance, and inconsistent units.

Missing data is the most common problem. Engineers can handle it by removing missing entries or imputing values. For numerical anomalies, they may correct or remove outliers; if the objective is to detect anomalies, those values should be retained and labeled. For class imbalance, which can lead to overfitting or underfitting, appropriate resampling or weighting strategies should be applied. For inconsistent units, normalization or standardization is typically used to make units uniform.

2. Feature extraction

Extracted features generally fall into four common types: numerical features, categorical or descriptive features, unstructured data, and network relational data.

Numerical features: These features are often abundant and can be retrieved directly from a data warehouse. Aggregation functions such as total count, mean, and ratios between current and historical averages can describe behavior effectively.

Categorical or descriptive features: These features have low ordinal relationship and represent categories. They are typically encoded into binary or one-hot representations, e.g., has_house [0, 1], has_car [0, 1].

Unstructured data (text features): Unstructured data often appears in UGC (user generated content). For example, churn prediction may use user comments, which are unstructured text. Typical processing involves text cleaning and feature extraction to derive attributes that reflect user behavior.

Network relational data: While the first three types describe individuals, relational data describes a person's connections with others. Feature extraction here involves mining relationship strength in complex networks, such as family, classmates, or friends.

3. Feature selection

Feature selection involves filtering out unimportant features and keeping important ones. Engineers evaluate candidate features using coverage, IV, and other metrics, then apply thresholds based on those metrics and experience. Finally, they check feature stability and remove unstable features.

4. Training and test sets

Before formal training, data must be split into training and test sets. The training set is used to fit the model, while the test set is used to validate model performance.

Model training

Model training iteratively fits, validates, and tunes the model to reach an optimal solution. A key concept is the decision boundary, which determines whether an algorithm is linear or nonlinear.

Decision boundaries are typically lines or curves, and their complexity is related to model capacity. Generally, the steeper or more complex the decision boundary, the higher the accuracy on the training set, but a highly complex boundary can make predictions on unseen data unstable.

The goal of model training is to find a balance between fitting ability and generalization ability. Fitting ability measures how well the model performs on known data, while generalization ability measures performance on unseen data. The optimal solution is the set of model parameters that best balances these two aspects, producing a decision boundary with good fit and generalization. Algorithm engineers commonly use cross-validation to find these optimal parameters.

Model validation

Model validation evaluates model performance on held-out data using performance and stability metrics.

Model performance refers to predictive accuracy. Evaluation differs by task: classification and regression.

Classification evaluation: Classification assigns instances to categories, such as distinguishing whether a user is a "good" or "bad" user in risk management, or detecting whether an image contains a face. Common metrics include recall, F1 score, KS, and AUC.

Regression evaluation: Regression predicts continuous values, such as real estate or stock prices. Common metrics include variance and MSE.

Product managers should know which metrics are available and what ranges are reasonable for their business context. While acceptable ranges vary across applications, it is important to identify clearly unreasonable values.

Model stability: Stability can be assessed using PSI. If a model's PSI > 0.2, its stability is considered poor and the delivery should be revisited.

Model fusion

Model fusion trains multiple models and combines them via ensemble techniques to improve accuracy. In short, combining multiple models can improve overall performance.

Model deployment

Typically, algorithm and engineering teams are separate, so models are often deployed as independent services exposing an HTTP API for engineering teams to call. This decouples work and dependencies. Simple machine learning models are commonly deployed using Flask, while deep learning models are often deployed using TensorFlow Serving.