Multi-Level Map Construction for Dynamic Scenes

Introduction

Localization and map construction in visual SLAM face major challenges in dynamic scenes. Many works have proposed effective localization solutions, but fewer address long-term consistent map construction in dynamic environments. This paper presents a multi-level mapping system designed for dynamic scenes. The system uses YOLOX to obtain semantic information, multi-object tracking to compensate for missed detections, DBSCAN clustering and depth information to refine detection bounding boxes, and then extracts static point clouds to build dense point cloud maps and octree maps. We propose a plane mapping method tailored to dynamic scenes covering plane extraction, filtering, data association, and fusion optimization to create a plane map. We also introduce an object mapping method for dynamic scenes including object parameterization, data association, and update optimization. Extensive experiments on public datasets and real scenes validate the accuracy and robustness of the multi-level maps built by the proposed methods. Finally, by using the constructed object map for dynamic object tracking, we demonstrate practical application potential.

Main Contributions

- Filtered point clouds based on corrected object detection to build clean point cloud maps and octree maps that contain only static elements.

- Proposed a method to construct plane maps in dynamic scenes to capture static environmental structure.

- Proposed an object mapping method for dynamic scenes, enabling SLAM to support higher-level tasks such as robotic scene understanding, object manipulation, and semantic augmented reality.

- To our knowledge, this is the first work to build a plane map in dynamic scenes and the first to accurately parameterize objects and build complete, lightweight object maps under dynamic conditions.

System Overview

Figure 1 shows the system framework for multi-level map construction in dynamic scenes. The input module handles RGB and depth images. The preprocessing module acquires and refines semantic information. The mapping modules build dense point cloud and octree maps, plane maps, and object maps. The output module exports the constructed multi-level maps. Experiments on publicly available datasets and real scenes validate the effectiveness of the proposed algorithm.

Method Details

Dense Point Cloud and Octree Map Construction

When semantic priors are available, points inside detection boxes or semantic masks of dynamic classes can be removed to produce a point cloud containing only static elements. Relying solely on raw semantic results, however, can leave residual dynamic objects because of missed detections and under-segmentation. We use YOLOX to obtain semantic information. To address missed detections, we compensate with multi-object tracking. To address under-segmentation, we apply DBSCAN clustering inside each candidate dynamic-object bounding box to extract foreground points, then expand the box as needed based on the depth of those foreground points and of the neighboring pixels along the box borders. To limit the effect of DBSCAN errors, expansion is capped at 50 pixels in each direction. For each keyframe, pixels lying outside the corrected bounding boxes of potential moving objects are extracted and projected to 3D world coordinates. Using camera poses from our prior work [9], the point clouds extracted from different keyframes are fused and then downsampled with a voxel grid filter. To save storage and to support navigation and obstacle avoidance, the point cloud map is converted to an octree map.
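The box correction, back-projection, and downsampling steps can be sketched as follows. This is a minimal illustration and not the authors' implementation: the function names, the pinhole intrinsics (`fx`, `fy`, `cx`, `cy`), and the `proposed` box (standing in for the box suggested by DBSCAN foreground points and border depth) are assumptions. Only the 50-pixel cap comes from the text.

```python
import math
from collections import defaultdict

MAX_EXPAND_PX = 50  # expansion cap per direction, as stated in the text


def expand_box(box, proposed, width, height, max_expand=MAX_EXPAND_PX):
    """Grow `box` toward `proposed`, at most `max_expand` pixels per side.

    Boxes are (x1, y1, x2, y2). `proposed` is a hypothetical box derived
    from foreground clustering; the result never shrinks the original box.
    """
    x1, y1, x2, y2 = box
    px1, py1, px2, py2 = proposed
    nx1 = max(px1, x1 - max_expand, 0)
    ny1 = max(py1, y1 - max_expand, 0)
    nx2 = min(px2, x2 + max_expand, width - 1)
    ny2 = min(py2, y2 + max_expand, height - 1)
    return (min(nx1, x1), min(ny1, y1), max(nx2, x2), max(ny2, y2))


def is_static_pixel(u, v, boxes):
    """True if pixel (u, v) lies outside every corrected dynamic box."""
    return not any(x1 <= u <= x2 and y1 <= v <= y2 for x1, y1, x2, y2 in boxes)


def back_project(u, v, z, fx, fy, cx, cy):
    """Pinhole back-projection of pixel (u, v) with depth z to the camera frame."""
    return ((u - cx) * z / fx, (v - cy) * z / fy, z)


def to_world(p, R, t):
    """Apply a camera-to-world pose (3x3 rotation R, translation t) to point p."""
    return tuple(sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3))


def voxel_downsample(points, voxel):
    """Replace all points falling in the same voxel by their centroid."""
    cells = defaultdict(list)
    for p in points:
        cells[tuple(math.floor(c / voxel) for c in p)].append(p)
    return [tuple(sum(c) / len(ps) for c in zip(*ps)) for ps in cells.values()]
```

In practice a library voxel filter (for example the one in PCL) would replace `voxel_downsample`; the dictionary version only shows the idea of one representative point per voxel.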

Plane Map Construction

Plane extraction uses the PEAC algorithm [30] to obtain plane parameters and inlier point clouds in the current camera frame, after which plane edge points are extracted. PCL is then used for a secondary plane fit that refines the parameters and inliers, and outliers among the plane edge points are removed. Planes are filtered based on depth information, inlier ratio, and their spatial relationship to detection boxes. After plane map initialization, planes detected in the current frame are associated with existing map planes. In complex dynamic scenes, detected planes can be noisy and unpredictable, causing association failures. As observations accumulate, the parameters of plane pairs that failed to associate tend to converge toward the correct values, which makes later association easier. Therefore, in the local mapping thread, map planes are compared pairwise. If two map planes satisfy the association conditions, they are treated as observations of the same plane that were previously not associated. The less-observed plane is merged into the more-observed one, the merged plane is optimized, and the less-observed plane is removed from the map.
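The pairwise comparison and merge in the local mapping thread can be sketched as below. The text does not state the association conditions, so the angle and offset thresholds are hypothetical, as are the `Plane` class and the observation-weighted average, which stands in for the fusion optimization. Planes are assumed to be in Hessian form n · x + d = 0 with consistently oriented unit normals.

```python
import math
from dataclasses import dataclass


@dataclass
class Plane:
    normal: tuple          # unit normal (nx, ny, nz)
    d: float               # offset in n . x + d = 0
    observations: int = 1  # number of frames that observed this plane


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def same_plane(p, q, max_angle_deg=10.0, max_offset=0.05):
    """Hypothetical association test: similar normal direction and offset."""
    cos_angle = max(-1.0, min(1.0, _dot(p.normal, q.normal)))
    angle = math.degrees(math.acos(cos_angle))
    return angle < max_angle_deg and abs(p.d - q.d) < max_offset


def merge(p, q):
    """Merge the less-observed plane into the more-observed one.

    The two plane equations are summed with observation weights and the
    result is rescaled so that the normal is a unit vector again.
    """
    keep, drop = (p, q) if p.observations >= q.observations else (q, p)
    w1, w2 = keep.observations, drop.observations
    n = tuple(w1 * a + w2 * b for a, b in zip(keep.normal, drop.normal))
    norm = math.sqrt(_dot(n, n))
    d = (w1 * keep.d + w2 * drop.d) / norm
    return Plane(tuple(c / norm for c in n), d, w1 + w2)


def fuse_map_planes(planes):
    """Compare map planes pairwise and merge those that pass the test."""
    planes = list(planes)
    i = 0
    while i < len(planes):
        j = i + 1
        while j < len(planes):
            if same_plane(planes[i], planes[j]):
                planes[i] = merge(planes[i], planes[j])
                del planes[j]  # the absorbed plane leaves the map
            else:
                j += 1
        i += 1
    return planes
```

Weighting by observation count lets the better-observed plane dominate the merged parameters, which matches the intent of merging the less-observed plane into the more-observed one.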

Object Map Construction

Object Parameterization and Data Association

Objects to be modeled often belong to the background and are distant from the camera, so the map points extracted for them are sparse and of low quality. Removing outliers from such sparse points by clustering is infeasible. Therefore, the dense point cloud of each frame is used for object modeling and is processed with DBSCAN. For each instance detected in the current frame k, association checks are performed against each object instance in the map. Motion IoU, projection IoU, 3D IoU, and nonparametric statistics are common association strategies. Each has limitations on its own, but integrating them yields a more robust and accurate object data association algorithm.
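Two of the cues named above, projection IoU and 3D IoU, can be combined as in the sketch below. It is an illustration under stated assumptions rather than the paper's algorithm: boxes are axis-aligned, the dictionary keys (`label`, `box2d`, `projected_box2d`, `box3d`) and both thresholds are hypothetical, and the motion IoU and nonparametric tests are omitted.

```python
import math


def iou(a, b):
    """IoU of two axis-aligned boxes, each given as (mins, maxs).

    Works for 2D image boxes and 3D bounding boxes alike.
    """
    (a_min, a_max), (b_min, b_max) = a, b
    inter = 1.0
    for lo_a, hi_a, lo_b, hi_b in zip(a_min, a_max, b_min, b_max):
        overlap = min(hi_a, hi_b) - max(lo_a, lo_b)
        if overlap <= 0:
            return 0.0  # boxes are disjoint along this axis
        inter *= overlap
    vol_a = math.prod(hi - lo for lo, hi in zip(a_min, a_max))
    vol_b = math.prod(hi - lo for lo, hi in zip(b_min, b_max))
    return inter / (vol_a + vol_b - inter)


def associate(det, map_objects, min_iou_2d=0.5, min_iou_3d=0.3):
    """Return the index of the best-matching map object, or None.

    A candidate is accepted if either cue passes its threshold, so one cue
    can compensate when the other fails (for example under occlusion).
    """
    best_idx, best_score = None, 0.0
    for idx, obj in enumerate(map_objects):
        if obj["label"] != det["label"]:
            continue
        s2d = iou(det["box2d"], obj["projected_box2d"])
        s3d = iou(det["box3d"], obj["box3d"])
        if s2d < min_iou_2d and s3d < min_iou_3d:
            continue
        score = max(s2d, s3d)
        if score > best_score:
            best_idx, best_score = idx, score
    return best_idx
```

If `associate` returns None, the detection would typically be used to initialize a new object in the map.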

Object Update and Optimization

We parameterize detected instances using both dense point clouds and sparse map points. This compensates for insufficient map points in single frames and avoids the heavy computational cost of dense point clouds across many frames. After successful data association, map points and object parameters are updated. Outliers are removed from object map points using distances between object points and associated planes and an isolation forest, as illustrated in Figure 2.
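The plane-distance part of the outlier removal can be illustrated as below. How the distances are used is not detailed in the text, so this sketch assumes that object points lying within a small band around the associated plane belong to the supporting surface and are dropped. The threshold `min_dist` and the function names are hypothetical, and the isolation forest stage is not shown.

```python
def signed_distance(point, normal, d):
    """Signed distance from `point` to the plane n . x + d = 0 (unit normal)."""
    return sum(n * c for n, c in zip(normal, point)) + d


def remove_plane_points(points, normal, d, min_dist=0.02):
    """Keep only points farther than `min_dist` (meters) from the plane."""
    return [p for p in points if abs(signed_distance(p, normal, d)) > min_dist]


# Example: an object resting on the plane z = 0.
points = [(0.0, 0.0, 0.005), (0.0, 0.0, 0.3), (1.0, 1.0, -0.01)]
kept = remove_plane_points(points, (0.0, 0.0, 1.0), 0.0)
```

The remaining points could then be passed to an isolation forest, for example scikit-learn's `IsolationForest`, to discard isolated outliers.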

Experiments

We evaluated the method on the TUM RGB-D dataset and applied it to dynamic object tracking in real scenes. Since the tested sequences do not provide ground-truth maps, experiments focus on qualitative demonstration of mapping results. The algorithm was run on a laptop with an i9-12900H CPU, an RTX 3060 GPU, and 16 GB of RAM.

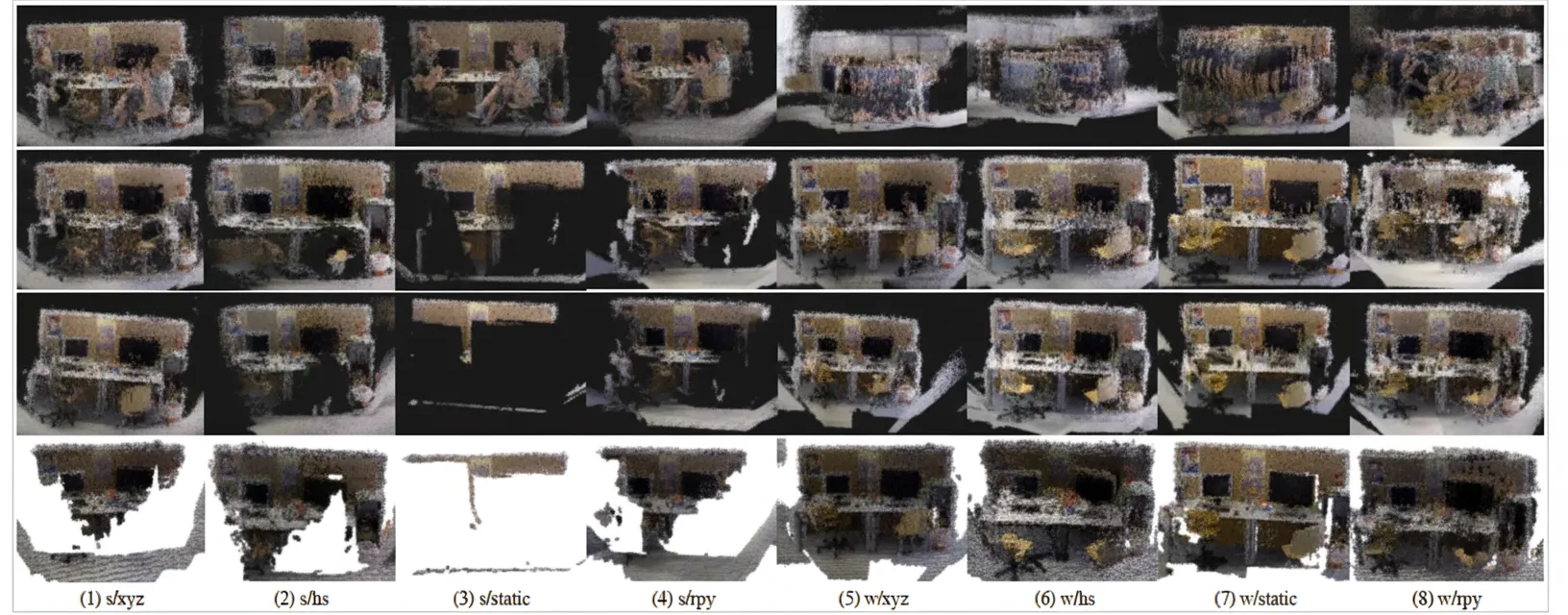

Geometric Map Results

Results for dense point cloud and octree maps are shown in Figure 3. ORB-SLAM2 fails to localize and build maps in highly dynamic scenes and retains dynamic object points in low-dynamic scenes. Due to missed detections and the difficulty of fully covering potential moving objects with detection boxes, removing points inside original candidate moving-object boxes still leaves significant residual traces. Our method produces cleaner dense maps and octree maps by compensating missed detections and refining bounding boxes.

Figure 3. Point cloud and octree maps. Top row: dense point cloud map built with ORB-SLAM2 and a dense mapping module. Second row: dense map built with the prior localization method [9], with points inside potential moving-object regions removed. Third row: dense map produced by our algorithm. Bottom row: octree map generated by our algorithm.

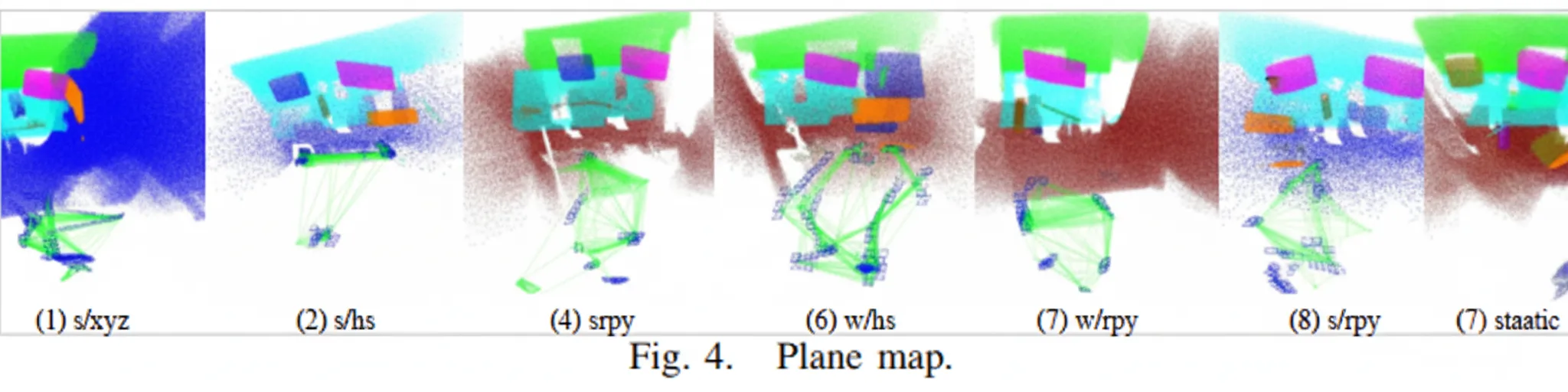

Figure 4 shows plane maps that accurately capture static background plane structures in dynamic scenes. These plane maps can support advanced tasks such as augmented reality and serve as landmarks to improve camera pose estimation.

Figure 2. Generated map of repeated adjacent objects. Left image provides an overview of the sequence.

Object Mapping Results

We evaluated object mapping on eight dynamic sequences from the TUM dataset, as shown in Figure 5. To validate accuracy, the constructed object models were overlaid on dense maps and projected onto image planes. In highly dynamic scenes, our algorithm accurately models almost all objects regardless of the camera motion pattern and the dynamic objects present in the environment. In low-dynamic scenes, two people seated at a table heavily occlude static objects and the background, so some objects lack sufficient observations and are modeled less accurately. The results indicate that object parameterization, data association, and optimization are effective, and that the object maps can support downstream applications such as semantic navigation, object grasping, and augmented reality.

Robustness in Real-World Scenes

We tested the method in real scenes using a RealSense D435i camera. A person moved irregularly within the field of view. To assess robustness, two camera motion patterns were evaluated: 1) movement from one end of the scene to the other, and 2) near-static. Multi-level mapping results are shown in Figure 6. The algorithm constructs accurate dense point cloud, octree, plane, and lightweight object maps under varying object and camera motion conditions.

Dynamic Object Tracking

We applied the constructed object maps to dynamic object tracking. Scene images were captured with a Pico Neo3 device and used to build object maps. Depth for map points was obtained by stereo matching, computed only on keyframes to preserve real-time performance. The constructed object map is shown in Figure 7(a). Once an object is selected, the system uses KCF single-object tracking and optical-flow tracking to compute real-time object poses while the object moves. Figures 7(b) to 7(d) show dynamic tracking results for a book, a keyboard, and a bottle. The experiments show that the algorithm provides accurate object models and poses for tracking in dynamic environments, and that it is device-agnostic, demonstrating robustness and generality.

Conclusion

This paper presents a multi-level mapping algorithm tailored to dynamic scenes. We construct dense point cloud, octree, plane, and object maps that include static background and objects despite dynamic interference, enriching environment perception for mobile robots and expanding mapping applications in dynamic environments. Extensive experiments demonstrate the accuracy and robustness of the algorithm. Dynamic object tracking experiments confirm practical applicability. Future work will consider real motion of movable objects beyond humans and leverage planes and objects as landmarks to optimize camera pose and further improve localization accuracy.