Introduction

As intelligent service robots become more common, mobile robots are appearing more frequently in everyday environments. With ongoing advances in sensor technology, intelligence, and computing, mobile robots are expected to play an increasing role in industrial and residential settings. Simultaneous localization and mapping, or SLAM, is a core technology for autonomous positioning and navigation. This article explains what SLAM is, how it is implemented, and the key steps and challenges involved.

What is SLAM?

SLAM stands for simultaneous localization and mapping. The concept was proposed by Hugh Durrant-Whyte and John J. Leonard in 1988. Rather than a single algorithm, SLAM refers to the set of methods that enable a robot starting from an unknown location in an unknown environment to estimate its own pose while incrementally constructing a map using repeated observations of map features such as corners and pillars.

Core steps of SLAM

SLAM generally comprises three processes: perception, localization, and mapping.

- Perception: the robot acquires information about its surroundings through sensors.

- Localization: using current and past sensor data, the robot estimates its position and orientation.

- Mapping: based on its pose and sensor data, the robot builds a representation of the environment.

For example, imagine that Zhang San drank too much and his friend Li Si took him to Li Si's house instead of his own. The next morning, Zhang San can work out whose house he is in by observing the features around him. The observation process corresponds to perception; recognizing whose house it is corresponds to localization; and associating the observed features with Li Si's house corresponds to mapping. Perception is a necessary condition for SLAM, since reliable localization and mapping are not possible without observations.

Localization and mapping are mutually dependent: localization relies on known map information, and mapping relies on reliable localization. The data used in both processes include the relative displacements observed by the robot and corrections to those displacements.
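The interplay of the three processes can be sketched in a few lines of Python. This is a toy, noise-free, one-dimensional illustration and not a real SLAM system: the world layout (`TRUE_LANDMARKS`), the sensor range, and all function and class names are hypothetical choices made for the example. It only shows that localization reads from the map while mapping reads from the estimated pose.

```python
from dataclasses import dataclass, field

# Hypothetical 1D world, used only for illustration: landmark name -> true position.
TRUE_LANDMARKS = {"corner": 2.0, "pillar": 5.0}
SENSOR_RANGE = 1.5  # assumed maximum sensing distance


def perceive(true_pose):
    """Perception: signed offset to every landmark within sensor range."""
    return {
        name: position - true_pose
        for name, position in TRUE_LANDMARKS.items()
        if abs(position - true_pose) <= SENSOR_RANGE
    }


@dataclass
class Robot:
    pose: float = 0.0                              # estimated position
    landmarks: dict = field(default_factory=dict)  # estimated map

    def localize(self, control, observation):
        """Localization: predict from the control, then correct against mapped landmarks."""
        self.pose += control
        errors = [
            self.landmarks[name] - offset - self.pose
            for name, offset in observation.items()
            if name in self.landmarks
        ]
        if errors:
            self.pose += sum(errors) / len(errors)

    def update_map(self, observation):
        """Mapping: place newly seen landmarks relative to the estimated pose."""
        for name, offset in observation.items():
            self.landmarks.setdefault(name, self.pose + offset)


def run(controls):
    """Drive the robot through the controls and return its final estimates."""
    true_pose = 0.0
    robot = Robot()
    for control in controls:
        true_pose += control               # the real robot moves
        observation = perceive(true_pose)  # perception
        robot.localize(control, observation)
        robot.update_map(observation)
    return robot


robot = run([1.0, 1.0, 1.0, 1.0, 1.0])
print(robot.pose, robot.landmarks)
```

Because the example has no noise, the estimated map matches the true landmark positions. With noisy controls or measurements, errors in the pose would leak into the map and vice versa, which is the estimation problem the rest of the article addresses.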

Core challenges

SLAM is commonly divided into a front end and a back end. The front end processes raw sensor data and converts it into relative poses or other representations suitable for the robot. The back end handles optimal posterior estimation for states such as pose and map.

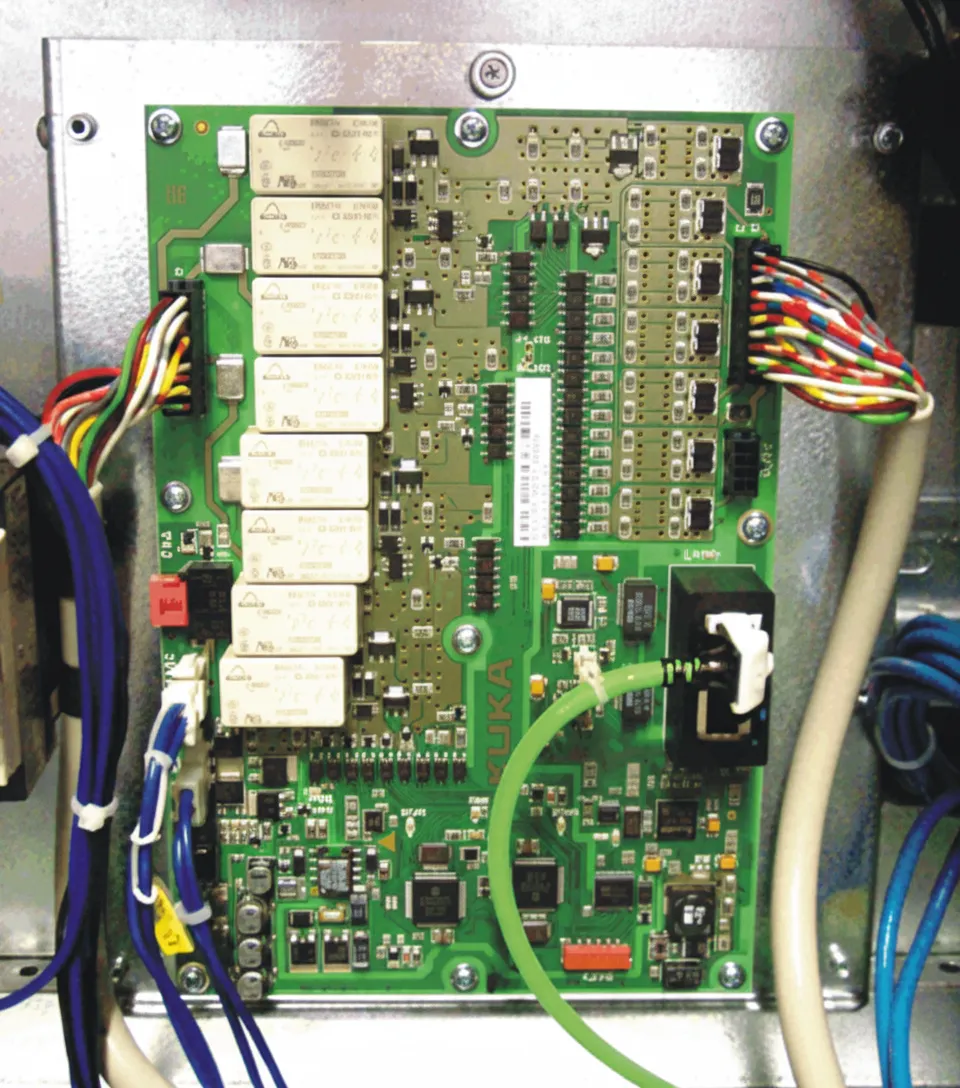

Typical sensors include depth sensors (ultrasonic, laser scanners, stereo vision), vision sensors (cameras, beacons), inertial sensors (gyroscopes, encoders, electronic compasses), and absolute positioning systems (Wi-Fi, GPS). Compared with human perception, these sensors provide limited information and cannot fully characterize the environment. For example, a 2D laser scanner provides depth information only on a single plane, and camera images do not yield the same rich semantic understanding that a human brain can extract.

Sensor noise and erroneous measurements also affect SLAM. Therefore, another core challenge is how to optimally estimate the robot pose and the map from noisy observations.

Back-end SLAM solutions can be broadly classified into probabilistic model based methods and optimization based methods. Probabilistic model based approaches are common in 2D SLAM; representative methods include the extended Kalman filter (EKF), unscented Kalman filter (UKF), and particle filter (PF). These approaches are relatively mature and have been used in commercial applications. Optimization based methods have become the mainstream in recent years, especially in visual SLAM, with representative frameworks such as TORO and g2o.

Implementation methods

As noted above, back-end methods for SLAM fall into two groups: probabilistic model based methods and optimization based methods. Probabilistic model based SLAM, the classical category from SLAM's early development, has a well-established theoretical foundation and remains in use in many applications because it performs reliably.

Probabilistic SLAM methods are derived from Bayesian estimation. In simple terms, Bayesian estimation combines a prior probability for a hypothesis, the likelihood of observed data given that hypothesis, and the observed data itself to produce a posterior probability. Bayes' theorem provides the formal relationship between these quantities.

Consider a simple example: Zhang San leaves Li Si's house and is unsure how many blocks he has walked. He sees a bank on his right and uses Bayesian reasoning to estimate which block he is on. Let p(Ai|B) denote the probability that Zhang San is at block Ai given that he observes a bank on the right. Let p(B) be the overall probability of observing a bank on the right, p(B|Ai) be the probability of observing a bank on the right assuming he is at block Ai, and p(Ai) be the prior probability of being at block Ai.
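Written out with these symbols, Bayes' theorem takes its standard form, with the evidence term p(B) expanded over all blocks by the law of total probability (the index j is introduced here only for the sum):

$$
p(A_i \mid B) = \frac{p(B \mid A_i)\, p(A_i)}{p(B)}, \qquad p(B) = \sum_{j} p(B \mid A_j)\, p(A_j)
$$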

Suppose there are ten blocks in total, so that with no other information the prior probability of being on the third block is p(A3)=10%. There are two banks along the route, so p(B)=2/10=20%, and one of them is on block 3, so p(B|A3)=100%. Applying Bayes' theorem raises the posterior probability that Zhang San is on the third block to 100%×10%/20%=50%.

If Zhang San instead initially believes the probability of being on block 3 is 50%, and the other nine blocks share the remainder uniformly (about 5.55% each), the overall probability of seeing a bank is p(B)=100%×50%+100%×5.55%+0%×5.55%×8≈55.55%. Here the first term is block 3, the second is the other block with a bank, and the third covers the eight blocks without one. Given this observation, the posterior probability of being on block 3 becomes 100%×50%/55.55%≈90%. This demonstrates how Bayes' theorem combines prior beliefs with observations to produce a posterior estimate.
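The two cases above can be checked numerically. The following is a minimal sketch: the function name `posterior` is hypothetical, and the location of the second bank (block 7 here) is an assumption, since the text only says that there are two banks and that one is on block 3.

```python
def posterior(prior, likelihood):
    """Return p(A_i | B) for every block from p(A_i) and p(B | A_i)."""
    # Evidence p(B), by the law of total probability.
    evidence = sum(l * p for l, p in zip(likelihood, prior))
    return [l * p / evidence for l, p in zip(likelihood, prior)]


NUM_BLOCKS = 10

# p(B | A_i): 1 where a bank is visible, 0 elsewhere.
# Block 3 is index 2; the second bank on block 7 (index 6) is an assumption.
likelihood = [0.0] * NUM_BLOCKS
likelihood[2] = 1.0
likelihood[6] = 1.0

# Case 1: uniform prior, 10% per block.
uniform_prior = [1.0 / NUM_BLOCKS] * NUM_BLOCKS
post_uniform = posterior(uniform_prior, likelihood)

# Case 2: 50% on block 3, the rest shared evenly by the other nine blocks.
confident_prior = [0.5 / (NUM_BLOCKS - 1)] * NUM_BLOCKS
confident_prior[2] = 0.5
post_confident = posterior(confident_prior, likelihood)

print(f"uniform prior:   p(A3 | B) = {post_uniform[2]:.2f}")
print(f"confident prior: p(A3 | B) = {post_confident[2]:.2f}")
```

The posterior for block 3 comes out at roughly 0.5 in the first case and 0.9 in the second, matching the hand calculation. The remaining probability mass falls on the other block with a bank, wherever it is placed.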

In a classic SLAM formulation, let x_t denote the state at time t, z_1:t the observations up to time t, u_1:t the controls applied up to time t, and m the map. SLAM seeks the optimal estimate of the robot pose and the map given the control inputs and observations. This can be expressed using Bayesian inference over the posterior distribution of the state and map.

Assuming the observation probability p(z_t|z_1:t-1,u_1:t) does not depend on x and can be treated as a constant, and assuming a first-order Markov model where the current state x_t depends only on the previous state x_{t-1}, Bayesian estimation transforms the current posterior into a function of the likelihood of the observations, the state transition model, and the previous posterior. Thus the posterior estimate for the state and map can be obtained iteratively.
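Under these assumptions, the recursion is usually written in the following textbook form. This is a sketch of the standard result rather than a formula taken from this article; it additionally assumes that the map m is static and that the observation z_t depends only on the current state and the map. The symbol η is introduced here for the constant 1/p(z_t|z_1:t-1,u_1:t).

$$
p(x_t, m \mid z_{1:t}, u_{1:t}) = \eta \; p(z_t \mid x_t, m) \int p(x_t \mid x_{t-1}, u_t)\; p(x_{t-1}, m \mid z_{1:t-1}, u_{1:t-1})\; dx_{t-1}
$$

The three factors on the right are, in order, the observation likelihood, the state transition model, and the posterior from the previous time step.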

Common probabilistic solutions to SLAM include the Kalman filter (KF), extended Kalman filter (EKF), unscented Kalman filter (UKF), FastSLAM (particle filter based), and information filters such as the sparse extended information filter (SEIF). These methods aim to compute the optimal posterior estimate under the probabilistic model.
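To give a feel for the simplest of these, here is a minimal sketch of one predict/update cycle of a one-dimensional Kalman filter. It performs localization only (there is no map in the state), assumes the sensor measures position directly, and uses made-up noise variances and measurements; the function name `kalman_step` and all numeric values are illustrative assumptions, not part of any SLAM library.

```python
def kalman_step(mean, var, control, measurement, motion_var, meas_var):
    """One predict/update cycle of a 1D Kalman filter over position."""
    # Predict: apply the control and grow the uncertainty by the motion noise.
    mean_pred = mean + control
    var_pred = var + motion_var

    # Update: blend prediction and measurement, weighted by the Kalman gain.
    gain = var_pred / (var_pred + meas_var)
    mean_new = mean_pred + gain * (measurement - mean_pred)
    var_new = (1.0 - gain) * var_pred
    return mean_new, var_new


# Illustrative values: the robot is commanded to move 1.0 per step and
# receives noisy position measurements.
mean, var = 0.0, 1.0
controls = [1.0, 1.0, 1.0]
measurements = [1.1, 1.9, 3.2]

for u, z in zip(controls, measurements):
    mean, var = kalman_step(mean, var, u, z, motion_var=0.1, meas_var=0.5)
    print(f"estimate = {mean:.3f}, variance = {var:.3f}")
```

Each cycle mirrors the Bayesian recursion: the predict step applies the state transition model, and the update step weighs in the observation likelihood. EKF-based SLAM extends the same pattern to a state vector that contains both the robot pose and the landmark positions.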

Conclusion

This article provided an overview of SLAM fundamentals and introduced probabilistic model based SLAM methods rooted in Bayesian estimation. Implementing SLAM for practical robot motion involves many additional details and engineering work. Follow-up articles will describe representative probabilistic SLAM algorithms in more depth.