Overview

Optimus relies on vision to perform tasks.

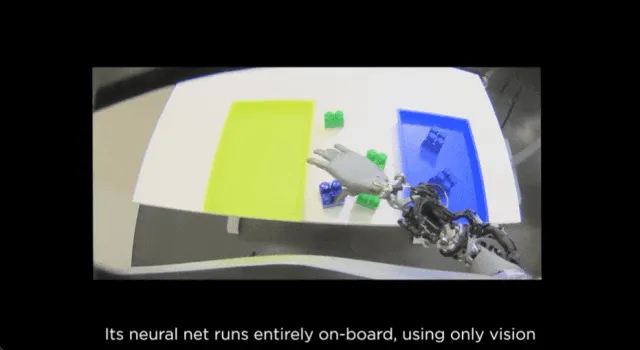

On September 24, Tesla posted a video showing progress on the Optimus humanoid robot. Tesla said Optimus can now autonomously classify objects, and that its neural network is trained fully end-to-end: it takes video as input and directly outputs control commands. End-to-end training means the network learns to map input data directly to outputs, without hand-engineered feature extraction or intermediate processing stages. Because the features are learned from the data rather than designed by hand, this approach can improve performance and generalization.
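The "video in, controls out" idea can be illustrated with a toy example. The sketch below is not Tesla's architecture, which has not been published; the class name `EndToEndPolicy`, the layer sizes, and the 8x8 grayscale frame are all hypothetical, and the weights are random rather than trained. It only shows the shape of the mapping: raw pixels go in, joint commands come out, and no hand-written feature extractor sits in between.

```python
import math
import random


def make_layer(n_in, n_out, rng):
    """Create random weights and zero biases for one fully connected layer."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)]
               for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def dense(x, layer, activation):
    """Apply one fully connected layer followed by an activation function."""
    weights, biases = layer
    return [activation(sum(w * v for w, v in zip(row, x)) + b)
            for row, b in zip(weights, biases)]


class EndToEndPolicy:
    """Hypothetical toy policy: raw pixels in, joint commands out."""

    def __init__(self, n_pixels, n_hidden, n_joints, seed=0):
        rng = random.Random(seed)
        self.hidden = make_layer(n_pixels, n_hidden, rng)
        self.output = make_layer(n_hidden, n_joints, rng)

    def act(self, frame):
        # Flatten the frame; the pixels are fed to the network as-is.
        pixels = [p for row in frame for p in row]
        # Hidden activations play the role of features. In a trained
        # network they would be learned, not designed by hand.
        features = dense(pixels, self.hidden, math.tanh)
        # tanh keeps each command in the range [-1, 1].
        return dense(features, self.output, math.tanh)


# Assumed input: one 8x8 grayscale frame with values in [0, 1].
frame = [[(r + c) / 14.0 for c in range(8)] for r in range(8)]
policy = EndToEndPolicy(n_pixels=64, n_hidden=16, n_joints=4)
commands = policy.act(frame)
print(commands)
```

In an actual end-to-end system, both layers would be optimized jointly against recorded or simulated behavior, so the intermediate features are whatever the training process finds useful for producing good commands.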

Perception and calibration

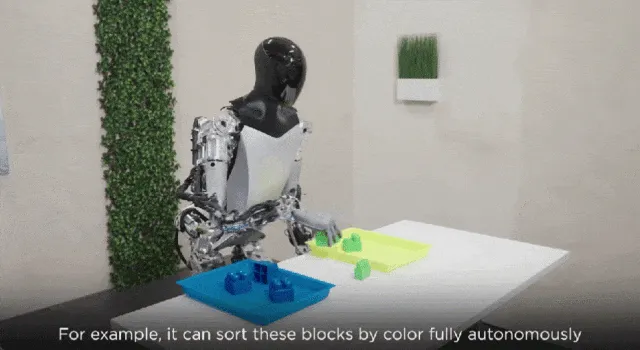

The video shows Optimus automatically calibrating its arms and legs, using only vision and joint position encoders to localize its limbs. After precise self-calibration, Optimus can learn various tasks more efficiently. Tesla states that the robot's neural network runs fully on-board without a network connection and uses vision as its sole perception modality. For example, Optimus can autonomously sort blocks by color, cope with external disturbances, and correct its own mistakes.
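Tesla has not described how the calibration works, so the following is only a minimal sketch of one way vision and encoder readings could be combined. It assumes a single link moving in the image plane, a camera that reports the 2D positions of the link's base and tip, and an encoder whose only error is a constant zero offset. The function names and the synthetic data are hypothetical.

```python
import math


def observed_link_angle(base_xy, tip_xy):
    """Angle of a link computed from two keypoints seen by the camera."""
    return math.atan2(tip_xy[1] - base_xy[1], tip_xy[0] - base_xy[0])


def estimate_encoder_offset(samples):
    """Circular mean of (vision angle - encoder angle) over all samples.

    Each sample is (encoder_angle, base_xy, tip_xy). Averaging sines and
    cosines avoids wrap-around problems near +/- pi.
    """
    sin_sum = 0.0
    cos_sum = 0.0
    for encoder_angle, base_xy, tip_xy in samples:
        diff = observed_link_angle(base_xy, tip_xy) - encoder_angle
        sin_sum += math.sin(diff)
        cos_sum += math.cos(diff)
    return math.atan2(sin_sum, cos_sum)


# Synthetic, noise-free data: the encoder reads 0.12 rad too low.
true_offset = 0.12
link_length = 0.3
samples = []
for true_angle in (-1.0, -0.3, 0.4, 1.2):
    tip = (link_length * math.cos(true_angle),
           link_length * math.sin(true_angle))
    samples.append((true_angle - true_offset, (0.0, 0.0), tip))

offset = estimate_encoder_offset(samples)
print(round(offset, 6))
```

Once the offset is known, adding it to each raw encoder reading gives the joint angle that matches what the camera sees. A full humanoid would need a 3D kinematic model and many joints solved together, which this sketch does not attempt.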

With its limbs precisely calibrated and all vision processing running on-board, Optimus can sort objects by color without any external connection or assistance.

Even when a person interferes, Optimus can still sort objects accurately by color.

Optimus also demonstrated the ability to self-correct by setting tipped-over objects upright.

After training, it can perform new tasks, such as deliberately shuffling previously sorted objects.

Industry context

Humanoid robots, a branch of mobile robotics, have become a focal point of the robotics sector. An industry conference in December will include a dedicated session on legged and humanoid robots, where representatives from across the legged and humanoid robot supply chain will discuss recent technologies and development trends.