Introduction

Image quality depends largely on lens quality and sensor size. Because mobile devices are designed to be ever thinner and lighter, there is little room to enlarge the sensor or improve the optics. In this context, adding a second camera becomes a practical way to improve image quality. As early as 2011, HTC released the EVO 3D (G17), one of the first dual-camera phones for 3D imaging. HTC later introduced the One M8, whose dual cameras recorded depth information to enable a 'refocus after capture' feature. Huawei, Coolpad, and other Chinese manufacturers then released dual-camera phones of their own, and the particular strengths of each dual-camera design became competitive differentiators.

Dual camera principle

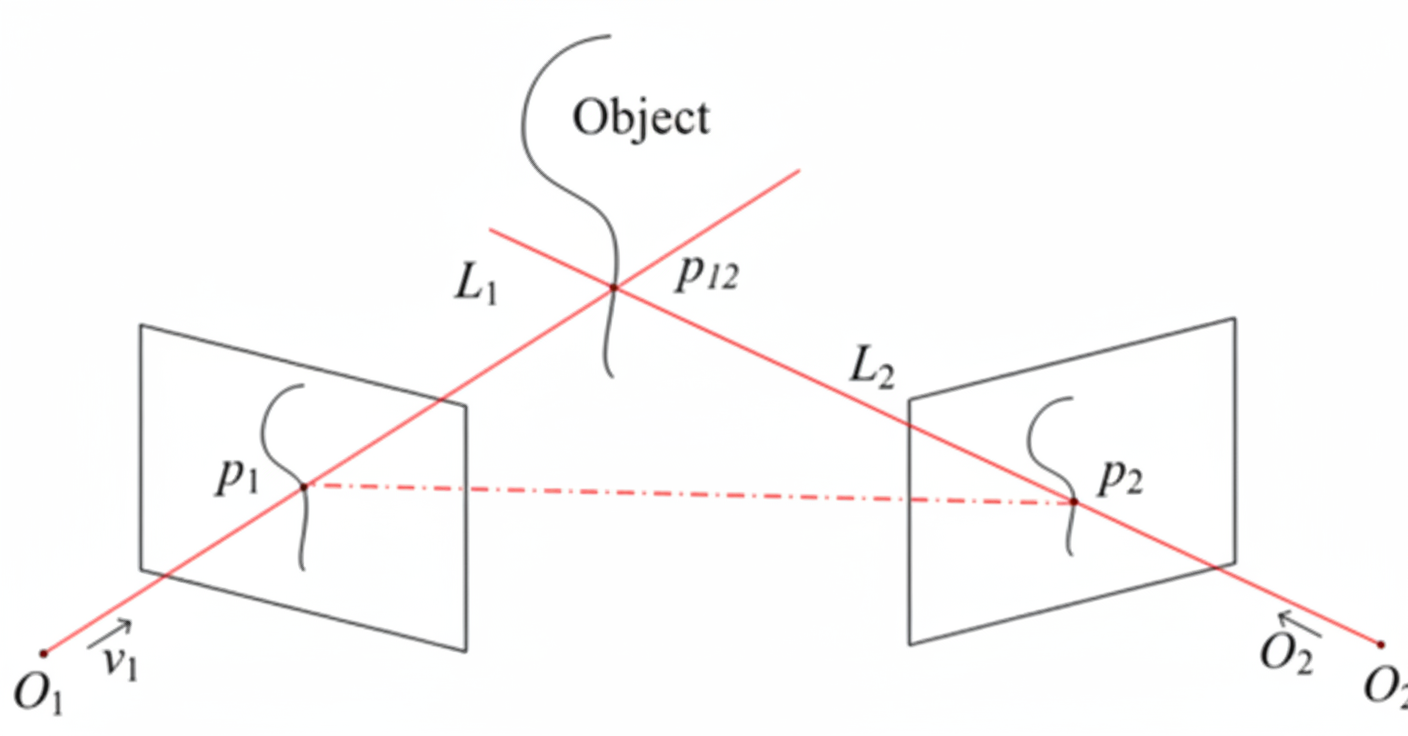

Moving from a single camera to dual cameras can be seen as a transition from two dimensions to three dimensions. Two dimensions have x and y axes, like a flat sketch, while three dimensions add the z axis, which corresponds to the distance of points from the observer.

Human vision is a simple example: it is a binocular system. Try a small experiment: close one eye, hold a pen in each hand, and try to touch the pen tips together. It is difficult because with only one eye the brain cannot easily derive depth information. When both eyes view the same object, the two images differ slightly; that difference, called disparity, is the basis for three-dimensional vision. Nearby objects shift more between the two views than distant ones, so by matching the two images the brain can estimate the distance to an object. The dual-camera approach for phones is intended to provide the same kind of depth information.
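The link between disparity and distance can be made concrete with the standard pinhole stereo model. The sketch below assumes two identical, parallel, rectified cameras; the focal length, baseline, and disparity values are hypothetical and do not come from any particular phone.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Distance in metres to a point seen by two parallel, rectified cameras.

    Uses the pinhole stereo relation Z = f * B / d, where f is the focal
    length in pixels, B the spacing between the cameras in metres, and d the
    horizontal shift of the point between the two images in pixels.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive; zero means the point is at infinity")
    return focal_px * baseline_m / disparity_px


# Hypothetical values chosen only for illustration.
FOCAL_PX = 1000.0    # focal length expressed in pixels
BASELINE_M = 0.01    # 1 cm between the two lenses

for disparity in (20.0, 10.0, 5.0):
    distance = depth_from_disparity(FOCAL_PX, BASELINE_M, disparity)
    print(f"disparity {disparity:5.1f} px -> distance {distance:.2f} m")
```

Halving the disparity doubles the estimated distance, so distant objects produce very small shifts. With the short baseline available on a phone, depth estimates therefore become less reliable as the subject moves farther away.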

From a biomimetic standpoint, many animals have two eyes, while others such as insects have multiple eyes arranged in an array. The move from a single camera to dual or array cameras in phones can be seen as a natural technological evolution.

When a phone takes a photo, the two cameras work together much as a pair of eyes does, and in some designs they take complementary roles: one camera concentrates on color capture while the other captures luminance, contours, and fine detail. The image signal processor (ISP) then combines information from both cameras to produce a better final image.

Implementations and use cases

Dual cameras are now commonly used in smartphones. Below are the main implementations and their advantages.

Autostereoscopic 3D

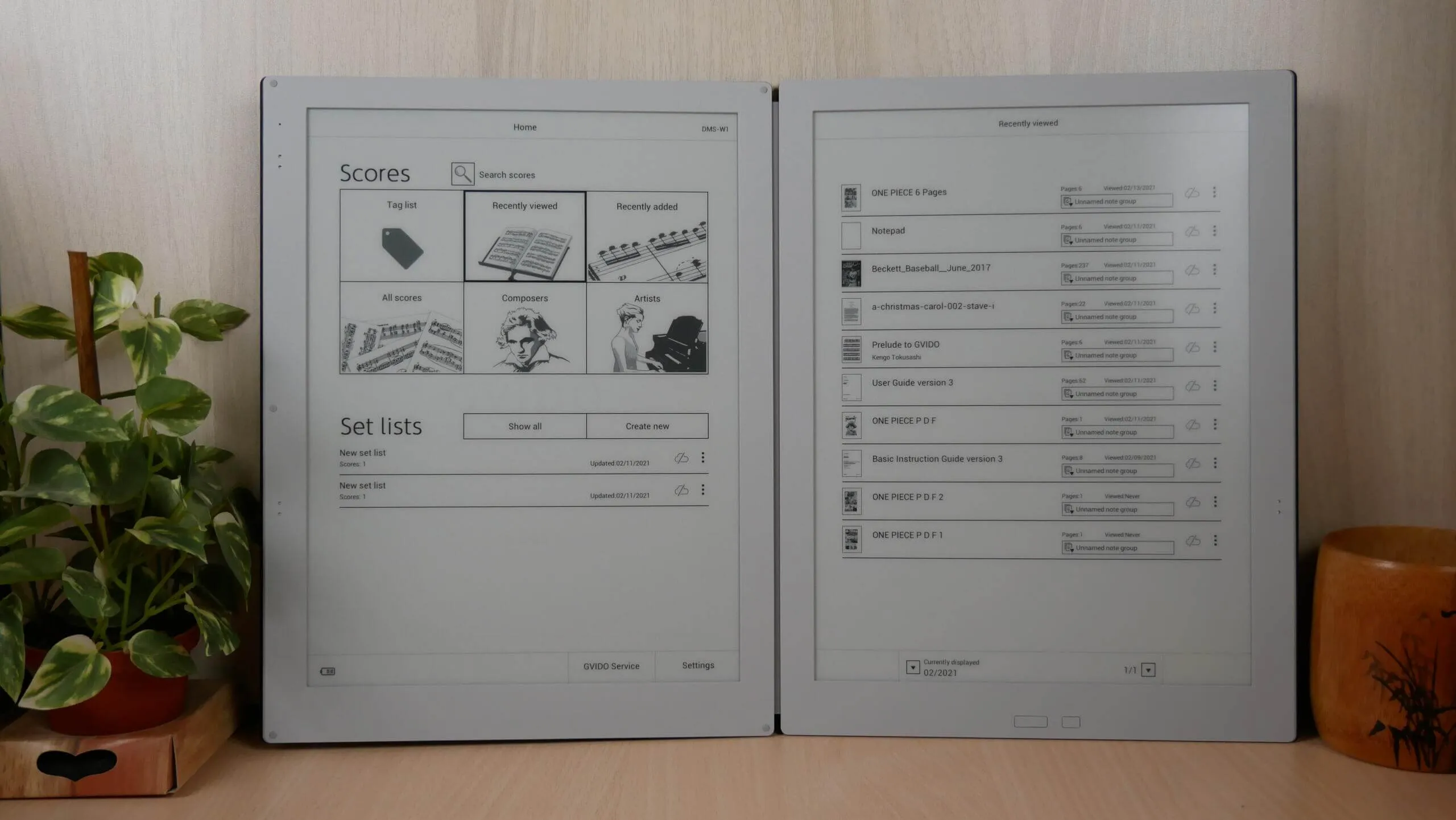

Initially, Sharp, HTC, and LG developed autostereoscopic 3D phones. Two identical cameras captured 3D photos or videos that could be viewed without glasses on displays supporting autostereoscopic 3D.

Depth-assist

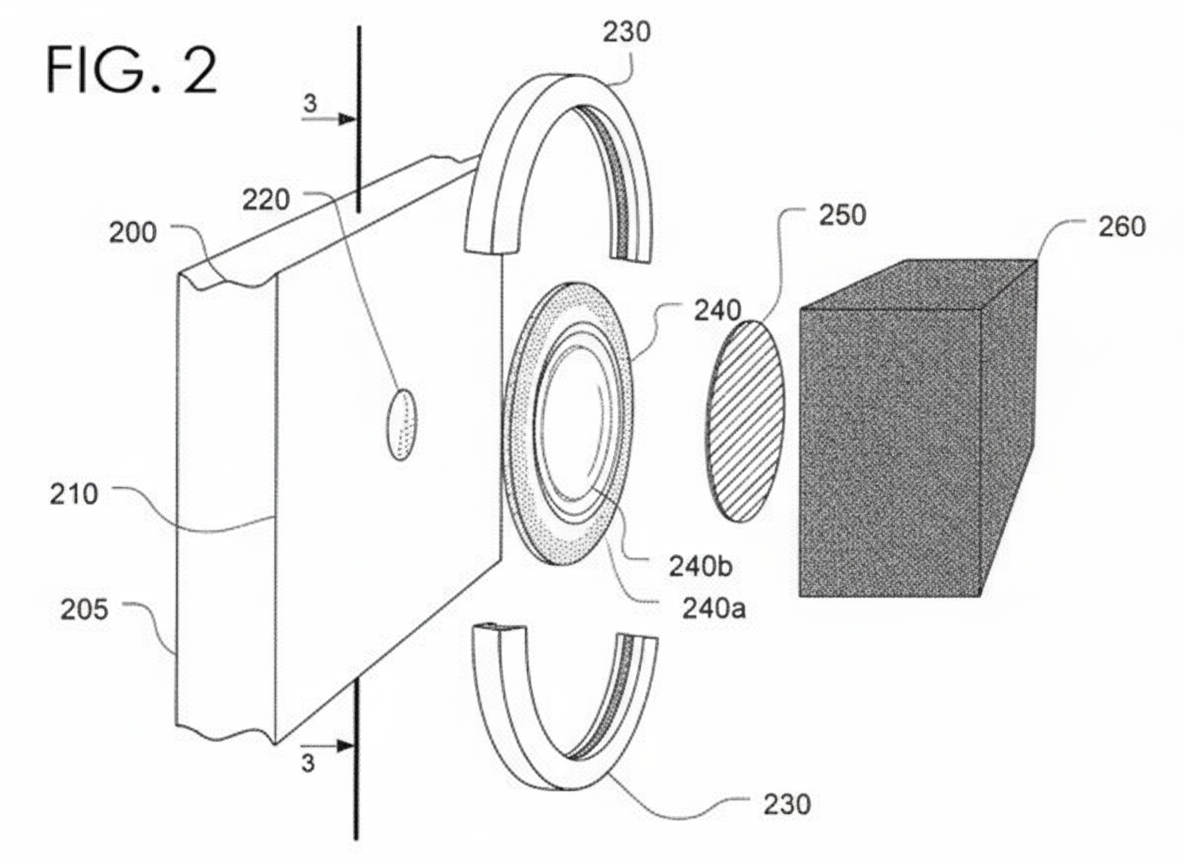

Examples include the HTC One M8, ZTE AXON, and Xiaomi Redmi Pro. In these designs the auxiliary camera typically has lower resolution and is used to record depth information rather than to directly improve image detail. The two cameras work together during capture, and computational synthesis enables more flexible background blur effects.
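As a rough illustration of how a depth map can drive background blur, the sketch below keeps pixels near a chosen focus distance sharp and replaces the rest with a local average. It is a minimal sketch under stated assumptions, not any vendor's algorithm: the function names, the box blur, and the tiny grayscale image and depth map are all hypothetical.

```python
def box_blur_at(image, row, col, radius):
    """Mean of the pixels in a square window around (row, col), clipped at the borders."""
    rows, cols = len(image), len(image[0])
    total, count = 0.0, 0
    for r in range(max(0, row - radius), min(rows, row + radius + 1)):
        for c in range(max(0, col - radius), min(cols, col + radius + 1)):
            total += image[r][c]
            count += 1
    return total / count


def synthetic_bokeh(image, depth, focus_depth, tolerance, radius=1):
    """Keep pixels whose depth is within `tolerance` of `focus_depth`; blur the others."""
    result = []
    for r, row in enumerate(image):
        out_row = []
        for c, value in enumerate(row):
            if abs(depth[r][c] - focus_depth) <= tolerance:
                out_row.append(float(value))                      # in focus: unchanged
            else:
                out_row.append(box_blur_at(image, r, c, radius))  # out of focus: averaged
        result.append(out_row)
    return result


# Hypothetical 3x4 grayscale image: subject in the left two columns, background on the right.
image = [
    [10, 200, 50, 90],
    [20, 180, 60, 100],
    [30, 160, 70, 110],
]
depth = [[1.0, 1.0, 5.0, 5.0] for _ in range(3)]  # metres, one value per pixel

blurred = synthetic_bokeh(image, depth, focus_depth=1.0, tolerance=0.5)
for row in blurred:
    print([round(v, 1) for v in row])
```

Because the blur is applied after capture, the focus distance and blur radius can be changed freely, which is what makes effects such as refocusing possible. A production pipeline would typically scale the blur with distance from the focal plane and prevent foreground pixels from bleeding into the background; this sketch does neither.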

Bionic parallel arrangement

Around 2014, some manufacturers pursued dual rear cameras arranged in parallel, each with its own CMOS sensor to capture higher-resolution images. Coolpad and Honor released models with this approach. Technically, parallel dual cameras offer greater light intake and photosensitive area, allow refocus-after-capture, and enable virtual aperture adjustment. However, they also place higher demands on post-capture software synthesis and algorithms.

Color plus monochrome

Building on parallel dual cameras, some models pair a color sensor with a monochrome sensor; examples include the Huawei P9, Meizu Pro, Honor 8, and Coolpad Cool1. The color camera captures chroma information while the monochrome sensor provides additional luminance and detail. The two sensors work together to produce a combined image with improved tonal range and texture.
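One simple way to picture this combination is to keep the chroma from the color sensor and take the luminance from the monochrome sensor. The sketch below does this per pixel using the full-range BT.601 (JFIF) YCbCr conversion. It assumes the two images are already aligned pixel to pixel, which real devices must achieve by compensating for parallax; the function names and the `mono_weight` parameter are hypothetical and do not describe any manufacturer's pipeline.

```python
def rgb_to_ycbcr(r, g, b):
    """Full-range BT.601 (JFIF) conversion from RGB to luminance and chroma."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return y, cb, cr


def ycbcr_to_rgb(y, cb, cr):
    """Inverse conversion, rounded and clamped to the 0..255 range."""
    r = y + 1.402 * (cr - 128)
    g = y - 0.344136 * (cb - 128) - 0.714136 * (cr - 128)
    b = y + 1.772 * (cb - 128)
    return tuple(max(0, min(255, round(v))) for v in (r, g, b))


def fuse_pixel(color_rgb, mono_luma, mono_weight=1.0):
    """Combine chroma from the color sensor with luminance from the monochrome sensor.

    mono_weight = 1.0 uses only the monochrome luminance; 0.0 keeps the
    color sensor's own luminance (the pixel is returned unchanged).
    """
    y, cb, cr = rgb_to_ycbcr(*color_rgb)
    fused_y = (1.0 - mono_weight) * y + mono_weight * mono_luma
    return ycbcr_to_rgb(fused_y, cb, cr)


# Hypothetical aligned samples: one color pixel and the matching monochrome reading.
color_pixel = (120, 80, 200)
mono_reading = 140

print(fuse_pixel(color_pixel, mono_reading))                   # luminance from the mono sensor
print(fuse_pixel(color_pixel, mono_reading, mono_weight=0.0))  # original color pixel
```

A monochrome sensor has no color filter array, so each pixel receives more light, which is why its luminance channel tends to carry cleaner detail. Keeping the chroma from the color sensor preserves hue while the brightness structure comes from the sharper source.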

Wide-angle assist

Dual cameras can also provide a secondary wide-angle lens, as in the LG G5. In this setup the main camera handles most captures, while the auxiliary lens serves as a wide-angle option for expansive outdoor scenes. The two lenses are used separately; their outputs are not combined to improve the quality of a single image.

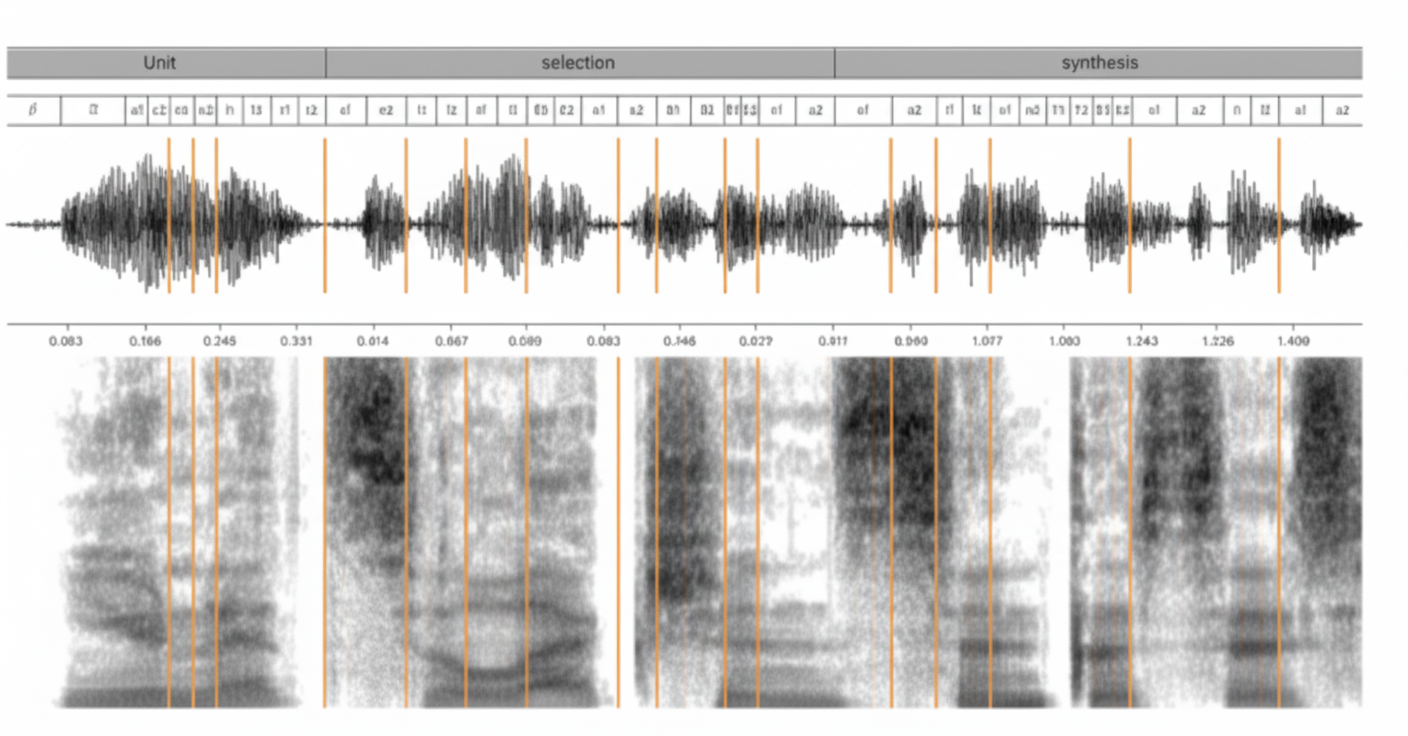

Optical zoom via computational imaging

Companies such as Corephotonics have demonstrated dual-camera prototypes that capture the same scene with different focal lengths simultaneously, then use software to synthesize images approximating optical zoom. This computational approach can increase effective resolution and reduce noise in low light. To date this technique has not become widespread in commercial devices.

Augmented reality

Some devices combine multiple cameras for motion tracking, depth sensing, and environment learning to support augmented reality. For example, Lenovo's Phab 2 Pro integrates three cameras: a primary camera for imaging, a depth-sensing camera, and a wide-angle motion-tracking camera. Together they enable spatial tracking and depth perception for AR applications.

Summary

Dual-camera phones primarily aim to improve photographic quality by adding complementary optical and sensing capabilities. Where dual cameras provide clear user benefits, they should be presented based on technical merit rather than marketing. The improvements achieved so far in light intake and noise control are incremental rather than transformative. However, with more mature technology, stronger support from industry leaders, developers, and supply-chain partners, dual-camera architectures are likely to become more widely adopted this year.