Structured-light 3D shape measurement has become widely used in reverse engineering, aerospace, biomedical imaging, and cultural heritage preservation. Phase unwrapping is a key step in structured-light measurement that directly affects accuracy, speed, and robustness.

Overview of the review

This review summarizes recent advances in phase unwrapping for structured-light 3D measurement. It introduces the basic principles of phase unwrapping, classifies existing methods, reviews the state of the art and applicability of each class, compares main characteristics, and outlines likely future research directions.

Phase fundamentals

Phase-shifting methods are widely used in optical metrology because of their simple operation, efficiency, and high accuracy. A typical 3D measurement workflow includes pattern projection, camera capture of deformed fringes, fringe analysis, phase unwrapping, system calibration, point cloud generation, and 3D reconstruction. Fringe analysis aims to obtain the wrapped phase, which lies in the interval (-π, π] and shows a sawtooth pattern. Common fringe analysis methods are phase shifting, wavelet transform, and Fourier transform; phase shifting gives accurate, robust results and is less sensitive to noise than the transform-based methods. In this review the wrapped phase is obtained using phase-shifting methods unless otherwise stated.

Phase shifting shifts the projected fringe pattern N times within one period (N ≥ 3), with a uniform step of 2π/N. The wrapped phase φ(x,y) is computed from the N captured fringe images with the arctangent function and is therefore truncated to a single 2π interval. Phase unwrapping restores the continuous absolute phase from this wrapped phase, enabling subsequent 3D shape recovery.
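The N-step calculation can be sketched in Python with NumPy. This is a minimal example under an assumed intensity model, I_n = A + B cos(φ − δ_n) with δ_n = 2πn/N; sign conventions for the shift differ between systems, and the function name `wrapped_phase` is ours, not from a specific library.

```python
import numpy as np


def wrapped_phase(images):
    """Wrapped phase and modulation from N equally shifted fringe images.

    Assumed intensity model: I_n = A + B * cos(phi - delta_n), with
    delta_n = 2 * pi * n / N for n = 0..N-1, images stacked along axis 0.
    """
    images = np.asarray(images, dtype=float)
    n_steps = images.shape[0]
    if n_steps < 3:
        raise ValueError("at least three phase steps are required")
    delta = 2 * np.pi * np.arange(n_steps) / n_steps
    # Reshape the shifts so they broadcast over (N, W) or (N, H, W) stacks.
    shape = (n_steps,) + (1,) * (images.ndim - 1)
    s = np.sum(images * np.sin(delta).reshape(shape), axis=0)  # (N/2) B sin(phi)
    c = np.sum(images * np.cos(delta).reshape(shape), axis=0)  # (N/2) B cos(phi)
    phi = np.arctan2(s, c)                        # wrapped phase in [-pi, pi]
    modulation = 2.0 / n_steps * np.hypot(s, c)   # estimate of B
    return phi, modulation
```

NumPy's `arctan2` returns values in [−π, π]. The modulation B returned alongside the phase is the fringe-modulation quantity often used as a reliability measure in quality-guided spatial unwrapping.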

Classification of phase unwrapping techniques

Phase unwrapping methods are commonly categorized as: 1) temporal phase unwrapping, 2) spatial phase unwrapping, 3) deep-learning-based unwrapping, and 4) other specialized techniques.

Temporal phase unwrapping

Temporal phase unwrapping projects a sequence of fringe patterns with different spatial frequencies over time while the camera captures the corresponding deformed fringes. For each pixel the absolute phase is recovered independently along the time series. Because each pixel is unwrapped without reference to its neighbors, temporal unwrapping can handle discontinuous surfaces.

Since Huntley and Saldner introduced temporal unwrapping, extensive work has improved its accuracy and efficiency. Their original method suppresses error propagation by using a sequence of fringe spatial frequencies that vary linearly over time (period counts 1, 2, 3, ..., N0, where N0 is the maximum projected period count). The algorithm performs pixelwise unwrapping in time, confining phase error to low-SNR regions and enabling correct unwrapping across discontinuities. A key limitation is slow data acquisition, which makes the method better suited to static objects than to fast-moving scenes. Later work simplified calibration and made temporal unwrapping more practical.
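The summation idea behind linear-sequence temporal unwrapping can be sketched as follows. This assumes noise-free wrapped phases for period counts 1, 2, ..., N0, ordered in time and referenced to a common phase origin; the name `temporal_unwrap_linear` is hypothetical.

```python
import numpy as np


def wrap(p):
    """Wrap phase values into (-pi, pi]."""
    return np.angle(np.exp(1j * p))


def temporal_unwrap_linear(wrapped_stack):
    """Temporal unwrapping along a linear sequence of fringe counts.

    wrapped_stack[t - 1] is the wrapped phase map for t fringe periods,
    t = 1..N0. The wrapped increment between consecutive counts is summed
    per pixel. This is valid when the single-period phase lies within
    (-pi, pi], so that every true increment does as well.
    """
    stack = np.asarray(wrapped_stack, dtype=float)
    previous = np.zeros_like(stack[0])   # phase for t = 0 fringes is zero
    total = np.zeros_like(stack[0])
    for current in stack:
        total += wrap(current - previous)
        previous = current
    return total                          # unwrapped phase for t = N0
```

Each pixel is handled on its own, so a surface step only affects the pixels that lie on it.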

Temporal approaches are effective for highly discontinuous surfaces, and their measurement error decreases as the number of projected patterns increases. Shadow regions are limited to local errors that do not propagate into high-SNR areas. Zhao et al. proposed a method that assigns fringe orders using two phase maps of differing precision, enabling automatic phase map generation without locating fringe centers or explicitly assigning orders. The method is relatively insensitive to discontinuities and noise, though its robustness to undersampling remains to be validated.

To reduce the number of projected images and the computation required, Huntley and Saldner proposed exponential-sequence unwrapping, in which the spatial frequency changes exponentially with time (period counts 1, 2, 4, ..., N0). This reduces the number of patterns needed and improves noise tolerance. A reverse-exponential sequence was later proposed to increase SNR; combined with a linear least-squares fit, it improves unwrapping precision by a factor of approximately √s, where s is the total number of projected fringes. Recursive schemes have been developed to improve resolution and recover steep local surface slopes where the Shannon sampling condition is violated, enabling multiresolution reconstruction.
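An exponential sequence can be unwrapped by repeatedly doubling the previous unwrapped phase to predict the next one and rounding the difference to a fringe order. The sketch below assumes a dictionary of noise-free wrapped maps keyed by fringe count (1, 2, 4, ..., N0) and a single-period map that needs no unwrapping; it is an illustration, not Huntley and Saldner's implementation, and the function name is hypothetical.

```python
import numpy as np


def temporal_unwrap_exponential(wrapped_by_count):
    """Unwrap along an exponential sequence of fringe counts 1, 2, 4, ..., N0.

    wrapped_by_count maps each fringe count t to its wrapped phase map.
    The t = 1 map is taken as already unwrapped (a single period); each
    later map is unwrapped against twice the previous unwrapped phase.
    """
    counts = sorted(wrapped_by_count)
    if counts[0] != 1 or any(b != 2 * a for a, b in zip(counts, counts[1:])):
        raise ValueError("expected fringe counts 1, 2, 4, ..., N0")
    unwrapped = np.asarray(wrapped_by_count[1], dtype=float)
    for prev, t in zip(counts, counts[1:]):
        phi_t = np.asarray(wrapped_by_count[t], dtype=float)
        predicted = unwrapped * (t / prev)                # coarse estimate at t fringes
        k = np.round((predicted - phi_t) / (2 * np.pi))   # integer fringe order
        unwrapped = phi_t + 2 * np.pi * k
    return unwrapped
```

Compared with the linear sequence, only about log2(N0) + 1 wrapped maps are needed to reach N0 fringes.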

Temporal unwrapping methods can be grouped into four main approaches: Gray-code-based, multi-frequency, multi-wavelength, and number-theory-based unwrapping. The next sections describe these in detail.

Gray-code-based unwrapping

Gray code, introduced in the 1940s, encodes values so adjacent codes differ by only one bit. In structured light, Gray-code patterns are binary patterns projected onto the object and captured by the camera. Combining decoded Gray-code indices with calibration yields 3D reconstruction. Because Gray-code transitions uniquely identify stripe locations, decoding relies on accurately extracting stripe edges or centers; edge extraction accuracy strongly affects decoding correctness.
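Binary-reflected Gray coding and decoding of stripe indices can be sketched as below. The pattern layout (one bit plane per projected image, most significant bit first, equal-width stripes across the projector columns) and all function names are assumptions for illustration; real decoders also need a per-pixel threshold to binarize the captured images, which is omitted here.

```python
import numpy as np


def gray_encode(n):
    """Binary-reflected Gray code of non-negative integer(s) n."""
    n = np.asarray(n)
    return n ^ (n >> 1)


def gray_decode(g):
    """Invert gray_encode by XOR-ing all right shifts of g."""
    g = np.asarray(g)
    n = g.copy()
    shift = g >> 1
    while np.any(shift):
        n ^= shift
        shift >>= 1
    return n


def gray_code_patterns(n_bits, width):
    """Binary column patterns (MSB first) labelling 2**n_bits equal stripes."""
    stripe = np.arange(width) * (2 ** n_bits) // width
    code = gray_encode(stripe)
    return np.array([(code >> (n_bits - 1 - b)) & 1 for b in range(n_bits)],
                    dtype=np.uint8)


def decode_patterns(bits):
    """Recover stripe indices from binarized Gray-code bit planes (MSB first)."""
    bits = np.asarray(bits, dtype=np.int64)
    code = np.zeros(bits.shape[1:], dtype=np.int64)
    for plane in bits:
        code = (code << 1) | plane
    return gray_decode(code)
```

Because neighboring stripes differ in exactly one bit plane, a misread pixel at a stripe edge can only be assigned to one of the two adjacent stripes.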

Gray-code-based unwrapping variants include binary Gray code, multi-gray-level Gray code, combined Gray code and phase shifting, and other Gray-code-inspired methods. Combining Gray code with phase shifting leverages Gray code for coarse encoding of discontinuous surfaces and phase shifting for fine detail. However, nonuniform reflectance, ambient light, noise, and defocus can blur binary transitions and cause decoding errors. Complementary Gray-code patterns offset boundaries to avoid edge ambiguity, improving robustness at the cost of additional patterns.
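The complementary Gray-code idea can be illustrated with a small per-pixel rule. The conventions here are our own, not a specific paper's: wrapped phase in [0, 2π), main Gray-code edges at φ = 0, and complementary-code edges half a period later at φ = π. The fringe order is taken from whichever decoding is far from its own edges, so edge errors of a few pixels in either decoding are tolerated. The function name is hypothetical.

```python
import numpy as np


def unwrap_complementary_gray(phi, k1, k2):
    """Absolute phase from wrapped phase plus two decoded fringe orders.

    phi : wrapped phase in [0, 2*pi), jumping at the fringe boundaries
    k1  : order from the main Gray-code patterns (edges where phi wraps)
    k2  : order from the patterns including one complementary pattern,
          whose edges are shifted by half a period (at phi = pi)
    Near a main edge (phi close to 0 or 2*pi) k1 is unreliable, so k2 is
    used; in the middle of the period k2 is unreliable, so k1 is used.
    """
    phi = np.asarray(phi, dtype=float)
    k1 = np.asarray(k1)
    k2 = np.asarray(k2)
    order = np.where(phi < np.pi / 2, k2,
                     np.where(phi < 3 * np.pi / 2, k1, k2 - 1))
    return phi + 2 * np.pi * order
```

Near the right end of a period the shifted code has already advanced to the next stripe, which is why k2 − 1 is used there.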

Self-calibrating Gray-code methods avoid extra patterns by exploiting wrapped-phase information to constrain the search range for fringe orders, reducing sensitivity to noise. Adaptive median filtering has been used to detect and remove erroneous pixels before unwrapping. While effective for static or slowly varying scenes, such post-correction approaches are less suitable for dynamic scenes with large error regions.

Defocus-tolerant binary techniques have enabled high-speed structured-light measurement by overcoming projector nonlinearity and supporting rapid pattern switching. Cyclic complementary Gray-code combined with binary dithering has extended measurement range and improved precision for dynamic scenes, though depth range remains limited. Mobile Gray-code strategies and staggered Gray-code schemes have been proposed to mitigate reflectance nonuniformity and defocus, avoiding extra patterns while improving accuracy.
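A minimal sketch of the dithering step alone: a sinusoidal fringe with intensities in [0, 1] is binarized with a standard 4×4 Bayer ordered-dither matrix, and a defocused projector then low-pass filters the binary pattern toward a quasi-sinusoidal profile. Ordered Bayer dithering is used here only as a familiar example; the cited works may use other dithering schemes, and the function names are hypothetical.

```python
import numpy as np

# Standard 4x4 Bayer threshold matrix (values 0..15).
BAYER_4 = np.array([[0, 8, 2, 10],
                    [12, 4, 14, 6],
                    [3, 11, 1, 9],
                    [15, 7, 13, 5]])


def bayer_dither(image):
    """Binarize an image with intensities in [0, 1] by ordered dithering."""
    image = np.asarray(image, dtype=float)
    h, w = image.shape
    thresholds = (BAYER_4 + 0.5) / 16.0
    # Repeat the threshold matrix over the whole image, then crop.
    tiled = np.tile(thresholds, (h // 4 + 1, w // 4 + 1))[:h, :w]
    return (image > tiled).astype(np.uint8)


def sinusoidal_fringe(height, width, period):
    """Ideal sinusoidal fringe varying along x, scaled to [0, 1]."""
    x = np.arange(width)
    row = 0.5 + 0.5 * np.cos(2 * np.pi * x / period)
    return np.tile(row, (height, 1))
```

Within each 4×4 tile the fraction of "on" pixels approximates the local gray level, which is what defocus averages back into a smooth fringe.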

To improve encoding efficiency, multi-gray-level Gray codes were introduced. Zheng et al. proposed a ternary Gray code that reduces the number of patterns from log2(f) to log3(f) for a sinusoidal fringe of frequency f. He et al. extended this to a quaternary Gray code, further increasing efficiency. Combined schemes have reduced the number of projected patterns to as few as 3 to 5 images in some implementations, improving speed for dynamic measurement.
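The pattern saving can be checked with a short calculation: m base-b patterns label b^m periods, so f periods need the smallest m with b^m ≥ f. The sketch also builds a standard reflected base-b Gray code, in which adjacent code words differ in one digit; whether the cited ternary and quaternary schemes use exactly this reflected construction is an assumption, and the function names are hypothetical.

```python
def patterns_needed(num_periods, base):
    """Smallest m such that base**m >= num_periods."""
    m, capacity = 0, 1
    while capacity < num_periods:
        capacity *= base
        m += 1
    return m


def reflected_gray(n, base, digits):
    """Reflected base-`base` Gray code of integer n, most significant digit first.

    A digit is mirrored (d -> base - 1 - d) whenever the Gray digits above
    it sum to an odd number, which reproduces the reflected construction.
    """
    plain = [(n // base ** i) % base for i in reversed(range(digits))]
    gray, parity = [], 0
    for d in plain:
        g = d if parity % 2 == 0 else base - 1 - d
        gray.append(g)
        parity += g
    return gray
```

For f = 64 periods this gives 6 binary, 4 ternary, or 3 quaternary patterns.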

Gray-code methods offer high robustness and noise tolerance and are relatively insensitive to system nonlinearity. Ongoing work focuses on reducing the number of projected patterns and mitigating boundary errors so Gray-code approaches can be applied to faster measurements.

Multi-frequency phase unwrapping

Multi-frequency phase unwrapping (MFPU) projects two or more fringe frequencies over time. Wrapped-phase maps from lower-frequency patterns provide coarse information to resolve fringe order k for higher-frequency wrapped phases. MFPU was originally used in interferometry and adapts well to fringe projection systems.
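The fringe-order step is commonly written as follows, assuming the low-frequency phase Φ_l is already absolute (for example, from a single-period pattern) and both absolute phases share the same origin, so that Φ_h = (f_h/f_l)Φ_l. The symbols f_h, f_l and the error terms ε_l, ε_h are introduced here for illustration.

```latex
% Fringe order of the high-frequency wrapped phase from the low-frequency absolute phase
k(x,y) = \operatorname{round}\!\left[\frac{(f_h/f_l)\,\Phi_l(x,y) - \varphi_h(x,y)}{2\pi}\right],
\qquad
\Phi_h(x,y) = \varphi_h(x,y) + 2\pi\,k(x,y)

% With phase errors \varepsilon_l and \varepsilon_h, the bracketed argument shifts by
% [(f_h/f_l)\varepsilon_l - \varepsilon_h]/(2\pi), so k remains correct while
\left|\frac{f_h}{f_l}\,\varepsilon_l - \varepsilon_h\right| < \pi
```

The tolerance condition shows why the frequency ratio cannot be made arbitrarily large: the low-frequency phase error is amplified by f_h/f_l before rounding.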

Li et al. proposed a dual-frequency approach in which the ratio between the high and low frequencies is constrained so that the low-frequency phase map is less sensitive to discontinuities, enabling accurate unwrapping of the high-frequency phase. Choosing optimal frequencies is critical for accuracy. Towers et al. introduced an optimization criterion that yields a geometric series of wavelengths maximizing dynamic range for a given number of frequencies, and identified an optimal "three-fringe" selection that attains a large range and high reliability using only three frequencies. Experiments showed over 99.5% reliability in fringe-order determination across the field of view using three frequencies with 100 fringes. Zhang et al. adapted the optimal three-fringe approach to color fringe projection, achieving pixelwise absolute phase computation with relatively few patterns and good robustness.
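The optimum three-fringe selection of Towers et al. is commonly stated as the fringe numbers N, N − 1 and N − √N, so that the beat of the first two spans one fringe and the beat of the first and third spans √N fringes (for N = 100: 100, 99 and 90, giving beats of 1 and 10 fringes). The sketch below assumes noise-free wrapped phases, N a perfect square, and absolute phases that are zero at the start of the field; the function names are ours.

```python
import numpy as np


def wrap(p):
    """Wrap phase into (-pi, pi]."""
    return np.angle(np.exp(1j * p))


def optimum_three_fringes(n_max):
    """Fringe numbers (N, N - 1, N - sqrt(N)); N is assumed to be a perfect square."""
    root = int(round(np.sqrt(n_max)))
    if root * root != n_max:
        raise ValueError("this sketch assumes n_max is a perfect square")
    return n_max, n_max - 1, n_max - root


def lift_order(coarse_abs, fine_wrapped, ratio):
    """Unwrap a finer phase using a coarser absolute phase and their ratio."""
    k = np.round((ratio * coarse_abs - fine_wrapped) / (2 * np.pi))
    return fine_wrapped + 2 * np.pi * k


def unwrap_three_fringe(phi_n, phi_n1, phi_nr, n_max):
    """Hierarchical unwrapping via beat phases of 1 and sqrt(N) fringes.

    phi_n, phi_n1, phi_nr: wrapped phases for N, N - 1 and N - sqrt(N) fringes.
    """
    root = int(round(np.sqrt(n_max)))
    beat_1 = np.mod(phi_n - phi_n1, 2 * np.pi)   # one fringe: already absolute
    beat_r = wrap(phi_n - phi_nr)                 # sqrt(N) fringes, wrapped
    beat_r_abs = lift_order(beat_1, beat_r, root)          # 1 -> sqrt(N) fringes
    return lift_order(beat_r_abs, phi_n, n_max / root)     # sqrt(N) -> N fringes
```

Each step multiplies phase error by √N (10 for N = 100), so in practice the per-pattern phase noise must stay well below π/√N for the rounding to succeed.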

Although MFPU offers high precision and reliability, it typically requires many patterns and longer measurement time. Efforts to reduce pattern count include three-frequency methods, combined heterodyne schemes, and hybrid approaches that lower data quantity while maintaining precision. Error-correction methods and refined selection of fringe frequencies, phase shifts, and pattern sequences have been proposed to reduce jump errors and improve computational efficiency. Examples include phase correction via multi-frequency heterodyne principles, block-wise fitting to increase local accuracy, and constraint-based improvements that eliminate the need for post-correction while improving speed.

MFPU generally outperforms Gray-code-based methods in terms of pattern efficiency, precision, and unwrapping range, but further reductions in pattern count remain an active area of development.

Multi-wavelength phase unwrapping

Multi-wavelength phase unwrapping generates a reference phase by taking the difference between two wrapped-phase maps of different wavelengths. In a dual-wavelength scheme, the equivalent (beat) wavelength is longer than either original wavelength, which expands the unambiguous phase range. Because dual-wavelength setups have poor noise immunity, three or more wavelengths are commonly used, with three-wavelength schemes balancing pattern count and robustness. Compared with multi-frequency methods, multi-wavelength schemes can achieve faster unwrapping with fewer projected patterns while maintaining accuracy.
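The equivalent wavelength follows from φ_i = 2πx/λ_i (mod 2π): the difference φ_1 − φ_2 equals 2πx(1/λ_1 − 1/λ_2) modulo 2π. The wavelength values below are chosen only for illustration.

```latex
% Equivalent (beat) wavelength and heterodyne phase for two wavelengths \lambda_1 < \lambda_2
\lambda_{12} = \frac{\lambda_1 \lambda_2}{\lambda_2 - \lambda_1},
\qquad
\varphi_{12}(x,y) = \left[\varphi_1(x,y) - \varphi_2(x,y)\right] \bmod 2\pi

% Worked example (wavelengths in projector pixels):
% \lambda_1 = 20, \ \lambda_2 = 21 \Rightarrow \lambda_{12} = 420
% \lambda_2 = 21, \ \lambda_3 = 22 \Rightarrow \lambda_{23} = 462
\lambda_{123} = \frac{\lambda_{12}\,\lambda_{23}}{\lambda_{23} - \lambda_{12}}
             = \frac{420 \cdot 462}{42} = 4620
```

Using φ_12 to order the fringes of φ_1 multiplies the error in φ_12 by λ_12/λ_1 (21 in this example), which is why a dual-wavelength scheme with a large beat ratio is noise-sensitive and a third wavelength is often added.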

Number-theory-based phase unwrapping

Number-theory-based unwrapping uses properties of coprime periods or frequencies. It is simple and robust in ideal conditions, but requires frequency choices that are pairwise coprime, limiting flexibility. Its robustness and noise tolerance are affected by low-frequency components, and measurement range may be constrained by frequency selection.
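A search-based version of number-theory unwrapping is sketched below, assuming two patterns with coprime integer periods t1 and t2 in pixels and wrapped phases in [0, 2π). For each pixel, every candidate fringe order of the first pattern is tested against the phase of the second; the Chinese remainder theorem guarantees a unique consistent candidate within t1·t2 pixels. Lookup-table formulations are also common, and the function name is hypothetical.

```python
import numpy as np


def unwrap_coprime(phi1, phi2, t1, t2):
    """Number-theoretic unwrapping with coprime integer periods t1, t2 (pixels).

    phi1, phi2: wrapped phases in [0, 2*pi) of the two fringe patterns.
    Returns the position in [0, t1 * t2) and the fringe order of pattern 1.
    """
    if np.gcd(t1, t2) != 1:
        raise ValueError("periods must be coprime")
    r1 = np.asarray(phi1, dtype=float) * t1 / (2 * np.pi)   # position mod t1
    r2 = np.asarray(phi2, dtype=float) * t2 / (2 * np.pi)   # position mod t2
    k1 = np.arange(t2).reshape((-1,) + (1,) * r1.ndim)      # candidate orders
    candidates = k1 * t1 + r1                               # candidate positions
    diff = np.mod(candidates - r2, t2)
    mismatch = np.minimum(diff, t2 - diff)                  # circular distance
    best = np.argmin(mismatch, axis=0)
    return best * t1 + r1, best
```

Wrong candidates differ from the measured remainder by at least one pixel, so the correct order is still selected while the combined remainder error stays below half a pixel; the unambiguous range is fixed at t1·t2 pixels by the period choice.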

Spatial phase unwrapping

Spatial unwrapping applies local or global optimization in the spatial domain: it compares adjacent pixels and adds or subtracts integer multiples of 2π where needed so that the phase difference between neighboring pixels falls in (-π, π]. In practice spatial unwrapping may fail across discontinuities, and errors in high-noise regions can propagate into low-noise regions. Despite this risk of error accumulation, spatial unwrapping is fast and widely used when measurement speed is critical.
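The simplest path-following scheme, Itoh's one-dimensional method, makes the adjacent-pixel rule concrete: wrap each neighbor difference into (−π, π] and accumulate. A minimal NumPy sketch follows (NumPy's own `np.unwrap` implements the same idea along one axis); the function name is ours.

```python
import numpy as np


def unwrap_1d(wrapped):
    """Itoh-style spatial unwrapping along one line of pixels.

    Each neighbor difference is re-wrapped into (-pi, pi] and the results
    are accumulated; this is correct only if the true phase changes by
    less than pi between adjacent samples.
    """
    wrapped = np.asarray(wrapped, dtype=float)
    steps = np.angle(np.exp(1j * np.diff(wrapped)))
    return np.concatenate(([wrapped[0]], wrapped[0] + np.cumsum(steps)))
```

If the true phase jumps by more than π between two samples, for example at a surface step, that step is wrapped to a wrong value and the error is carried into every later sample, which is the propagation problem described above.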

Spatial methods fall into path-following local algorithms and path-independent global algorithms. Path-following methods include quality-guided unwrapping and branch-cut algorithms; global methods include unweighted and weighted least-squares approaches.

Quality-guided unwrapping

Quality-guided unwrapping ranks pixels by a reliability measure and unwraps from the highest-quality pixels outward, typically using a flood-fill approach. The reliability map is often built from amplitude, fringe modulation, local phase gradient, or SNR. Quality-guided methods limit error spread from low-quality regions, operate quickly, and are suitable for many practical tasks, but they can still suffer if the chosen unwrapping path is incorrect.
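A compact quality-guided flood fill can be written with a priority queue. In this sketch the quality map is any array in which larger values mean more reliable pixels (for example, the fringe modulation), 4-connected neighbors are used, and each pixel is unwrapped relative to the already-unwrapped pixel that queued it; the names are illustrative.

```python
import heapq

import numpy as np


def wrap(p):
    """Wrap phase into (-pi, pi]."""
    return np.angle(np.exp(1j * p))


def quality_guided_unwrap(phi, quality):
    """Quality-guided flood-fill unwrapping of a 2-D wrapped phase map."""
    phi = np.asarray(phi, dtype=float)
    quality = np.asarray(quality, dtype=float)
    rows, cols = phi.shape
    result = np.full(phi.shape, np.nan)
    done = np.zeros(phi.shape, dtype=bool)

    # Start from the single most reliable pixel.
    start = np.unravel_index(np.argmax(quality), phi.shape)
    result[start] = phi[start]
    done[start] = True
    heap = []

    def push_neighbors(r, c):
        for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols and not done[nr, nc]:
                # heapq is a min-heap, so store the negated quality.
                heapq.heappush(heap, (-quality[nr, nc], nr, nc, r, c))

    push_neighbors(*start)
    while heap:
        _, r, c, pr, pc = heapq.heappop(heap)
        if done[r, c]:
            continue
        # Unwrap relative to the parent pixel that queued this one.
        result[r, c] = result[pr, pc] + wrap(phi[r, c] - phi[pr, pc])
        done[r, c] = True
        push_neighbors(r, c)
    return result
```

The result is determined only up to a constant multiple of 2π set by the starting pixel; recovering absolute phase requires an additional reference.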

Improvements include reliability-mapping strategies that combine multiple parameters, multilevel thresholds, and using filtered amplitude as the quality measure. Data structures such as adjacency lists and indexed interleaved lists help speed up traversal. Wavelet-based reliability measures, windowed Fourier filtering, and bicubic interpolation filtering have been proposed to reduce noise and constrain error propagation. While quality-guided algorithms are fast and effective in many scenarios, their ability to handle severe discontinuities remains limited.
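As one concrete reliability measure, the sketch below computes the wrapped second-difference reliability used in the sorting-by-reliability algorithm of Herráez et al. This is a well-known choice given for illustration; the methods surveyed here do not necessarily use it. Only interior pixels are evaluated, and border pixels are assigned zero reliability by assumption.

```python
import numpy as np


def wrap(p):
    """Wrap phase into (-pi, pi]."""
    return np.angle(np.exp(1j * p))


def second_difference_reliability(phi, eps=1e-6):
    """Reliability 1 / (D + eps) from wrapped second differences.

    D combines horizontal, vertical and both diagonal second differences
    around each interior pixel; border pixels are set to 0.
    """
    phi = np.asarray(phi, dtype=float)
    c = phi[1:-1, 1:-1]

    def second(a, b):
        # wrap(a - c) - wrap(c - b): a wrapped second difference through c
        return wrap(a - c) - wrap(c - b)

    h = second(phi[1:-1, :-2], phi[1:-1, 2:])
    v = second(phi[:-2, 1:-1], phi[2:, 1:-1])
    d1 = second(phi[:-2, :-2], phi[2:, 2:])
    d2 = second(phi[:-2, 2:], phi[2:, :-2])
    d = np.sqrt(h ** 2 + v ** 2 + d1 ** 2 + d2 ** 2)
    reliability = np.zeros_like(phi)
    reliability[1:-1, 1:-1] = 1.0 / (d + eps)
    return reliability
```

A linear phase ramp has zero second differences, so smooth regions score highest and noisy or discontinuous regions score lowest.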

Branch-cut (residue-compensation) unwrapping

Branch-cut or residue-compensation methods identify residues (closed 2×2 pixel loops whose wrapped phase differences do not sum to zero) and connect them with branch cuts that the unwrapping path may not cross, preventing error propagation during path-following unwrapping. Goldstein's branch-cut algorithm, introduced in 1988, suppresses noise propagation by routing unwrapping paths around residues. Challenges include branch-cut placement in dense-residue regions, nonoptimal branch paths, closed-loop cuts causing islanding effects, and sensitivity to noise.
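Residue detection, the first step of Goldstein-style branch-cut methods, can be sketched as a sum of wrapped differences around every 2×2 loop of pixels. A nonzero sum (±2π, reported as charge ±1) marks a residue; the loop orientation chosen here is an assumption that only fixes the sign convention.

```python
import numpy as np


def wrap(p):
    """Wrap phase into (-pi, pi]."""
    return np.angle(np.exp(1j * p))


def residues(phi):
    """Residue charge of every 2x2 pixel loop in a wrapped phase map.

    Entry [r, c] of the (H-1, W-1) result belongs to the loop whose
    top-left pixel is (r, c).
    """
    phi = np.asarray(phi, dtype=float)
    top = wrap(phi[:-1, 1:] - phi[:-1, :-1])     # (r, c)     -> (r, c+1)
    right = wrap(phi[1:, 1:] - phi[:-1, 1:])     # (r, c+1)   -> (r+1, c+1)
    bottom = wrap(phi[1:, :-1] - phi[1:, 1:])    # (r+1, c+1) -> (r+1, c)
    left = wrap(phi[:-1, :-1] - phi[1:, :-1])    # (r+1, c)   -> (r, c)
    return np.rint((top + right + bottom + left) / (2 * np.pi)).astype(int)
```

Placing the branch cuts that connect residues of opposite charge is the harder part of the algorithm and is not shown.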

Many enhancements address these issues: improved branch-cut placement, shortest-cut search via randomized search techniques, strategies to avoid looped cuts and islanding, residue-injection methods to improve accuracy, and tabu-search-based optimizations that reduce cut length and speed up processing. Fourier-based residue augmentation accelerates convergence but can be computationally expensive; simpler residue-increasing algorithms speed up computation by over 50% in some cases. Overall, branch-cut methods are robust against noise but require careful handling to avoid local error amplification.

Deep-learning-based phase unwrapping

Spatial methods are fast but sensitive to severe noise and discontinuities; temporal methods are accurate and robust but require many projected patterns and long acquisition time. To combine speed and accuracy, researchers have applied machine learning, especially deep neural networks, to find direct mappings from wrapped phase to absolute phase or fringe order. Deep learning can synthesize spatial and temporal information and has shown promise in reducing pattern count while maintaining accuracy.

Early work used convolutional neural networks (CNNs) to recast unwrapping as a semantic segmentation problem. PhaseNet and PhaseNet 2.0 are fully convolutional frameworks that perform dense classification of fringe-order or unwrapped-phase segments; PhaseNet 2.0 improved noise robustness and reduced need for post-processing. Other approaches generate synthetic labeled datasets to avoid time-consuming manual annotation.
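When unwrapping is posed as segmentation, each pixel's class is its integer fringe order. The sketch below shows a generic way to derive such labels from a known absolute phase, plus a hypothetical synthetic-sample generator built from random Gaussian bumps; it is not the PhaseNet data pipeline, and all names and parameter values are illustrative.

```python
import numpy as np


def fringe_order_labels(absolute_phase, wrapped_phase):
    """Integer fringe-order map k such that absolute = wrapped + 2*pi*k."""
    k = np.rint((np.asarray(absolute_phase) - np.asarray(wrapped_phase)) / (2 * np.pi))
    return k.astype(int)


def synthetic_sample(rng, shape=(64, 64), n_bumps=5, max_height=30.0):
    """Hypothetical generator of one (wrapped phase, label, absolute phase) triple.

    The absolute phase is a non-negative sum of random Gaussian bumps, so
    the fringe-order labels start at 0 and can be used directly as classes.
    """
    yy, xx = np.mgrid[0:shape[0], 0:shape[1]]
    absolute = np.zeros(shape)
    for _ in range(n_bumps):
        cy, cx = rng.uniform(0, shape[0]), rng.uniform(0, shape[1])
        sigma = rng.uniform(0.1, 0.4) * min(shape)
        height = rng.uniform(0, max_height)
        absolute += height * np.exp(-((yy - cy) ** 2 + (xx - cx) ** 2) / (2 * sigma ** 2))
    wrapped = np.angle(np.exp(1j * absolute))
    return wrapped, fringe_order_labels(absolute, wrapped), absolute
```

A network trained on such pairs predicts k, and the absolute phase is then recovered as wrapped + 2πk.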

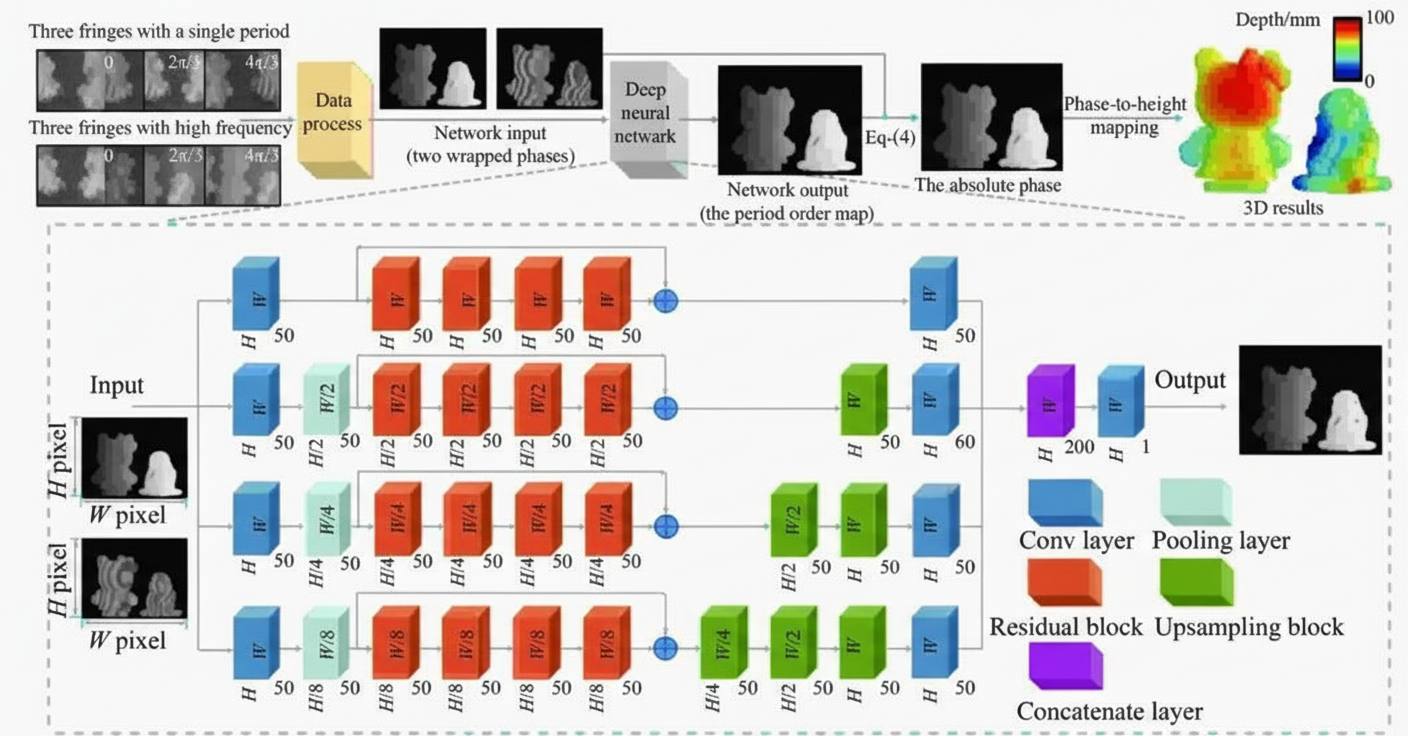

Deep models have been trained to denoise wrapped phases, predict fringe orders, or directly regress absolute phase from few images. Multi-task networks such as TriNet simultaneously denoise and predict fringe order, improving results in very high-noise situations. Deep-learning temporal unwrapping combines time-domain information with learned priors to improve reliability under projector nonlinearity, intensity noise, and motion artifacts. For example, a network that takes two wrapped-phase inputs (single-period and high-frequency) and is trained to output absolute phase requires fewer patterns than traditional multi-frequency methods.

Figure: Schematic of a deep-learning temporal unwrapping method.

Researchers have also trained networks to perform fringe analysis from single or few patterns. Deep networks can recover phase from a single fringe image, sometimes producing uncertainty maps that quantify pixelwise confidence. Geometric-constraint networks and frequency-multiplexed encoding combined with deep networks have shown single-shot or few-shot absolute phase recovery for complex scenes without motion artifacts. Lightweight per-pixel temporal unwrapping networks reduce training time and model size, enabling use in small or mobile 3D systems.

Limitations of deep-learning approaches include the need for representative training data, potential lack of generalization to unseen scenarios, and hardware requirements for real-time deployment. Unsupervised and physics-constrained learning approaches are emerging to reduce reliance on labeled data. Overall, deep learning offers these advantages: fewer projected patterns, lower sensitivity to surface texture and discontinuity, and faster measurement. Addressing dataset reliability and model generalizability remains an active research area.

Other phase unwrapping techniques

Specialized methods address projector nonlinearity, speed, or specific scene constraints. Time-space binary encoding uses projector high refresh rates to display binary patterns that can be decoded into high-quality sinusoidal signals while avoiding projector nonlinearity. These methods can match sinusoidal projection precision but often increase the number of images required.

Geometric-constraint approaches exploit system geometry to derive one-to-one correspondences between wrapped phases from different views or between wrapped phase and projector coordinates. These methods can avoid extra images and achieve fast measurement, but often have limited depth range or sensitivity to phase error in high-frequency regions. Hybrid number-theory and geometric-constrained schemes improve reliability in dynamic scenes while using high-frequency fringes.
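One well-known geometric-constraint formulation, the minimum-phase-map method of An, Hyun and Zhang, compares the wrapped phase with the absolute phase each pixel would have at the nearest allowed depth. The sketch assumes that minimum-phase map is already available from calibration; computing it from the camera and projector models is not shown, and the function name is hypothetical.

```python
import numpy as np


def unwrap_with_min_phase(phi, phi_min):
    """Pixel-wise unwrapping against a minimum-phase map.

    phi     : wrapped phase in (-pi, pi]
    phi_min : absolute phase each pixel would have at the closest allowed
              depth, assumed precomputed from the calibrated geometry.
    Valid only while the true phase lies in [phi_min, phi_min + 2*pi).
    """
    phi = np.asarray(phi, dtype=float)
    k = np.ceil((np.asarray(phi_min, dtype=float) - phi) / (2 * np.pi))
    return phi + 2 * np.pi * k
```

The rule stays correct only while the true phase lies within one period above the minimum-phase map, which is the limited depth range mentioned above; denser (higher-frequency) fringes shrink that depth range further.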

Other methods use known reference objects, photometric constraints, or combined constraints to recover absolute phase from a small number of images. These methods can be effective in constrained setups but typically lack generality across diverse scenes.

Typical comparison and analysis

Temporal unwrapping offers high accuracy and robustness and can handle discontinuous surfaces, but at the cost of many projected patterns and slower measurement. Spatial unwrapping is fast and suitable for dynamic measurement but is more sensitive to noise and discontinuities. Spatio-temporal hybrid methods trade off speed and accuracy. Geometric-constraint methods can accelerate unwrapping using system geometry but may reduce depth range or increase system complexity. Deep-learning-based techniques can reduce pattern count and increase speed while maintaining accuracy, but require suitable datasets and training.

Discussion points:

- Required number of projected fringe patterns: Temporal methods typically require the most patterns, spatial methods the fewest. Deep-learning and hybrid methods trend toward pattern reduction.

- Measurement speed: Influenced by pattern count, acquisition time, computation complexity, and need for pre/post-processing. Dynamic scenes demand stricter speed requirements, often limiting allowable pattern counts.

- Noise robustness: A key determinant of accuracy and reliability. Spatial methods tend to be more noise-sensitive because they rely on adjacent-pixel phase comparisons. Many methods include filtering or post-processing to improve robustness.

- Phase unwrapping precision: For high-precision scenarios, multi-frequency temporal methods and some deep-learning approaches are preferable.

Conclusions and outlook

Temporal unwrapping is suited for high-precision, low-speed measurements; hybrid Gray-code and phase-shift schemes have been adapted for faster dynamic scenarios. Spatial unwrapping is used when speed is critical but precision requirements are lower. Deep-learning-based unwrapping shows promise for combining accuracy and speed, though challenges remain in dataset availability, model generalization, and hardware demands. Specialized methods based on spatio-temporal coding, geometric constraints, or photometric constraints address specific limitations of traditional algorithms but often lack generality.

Future development directions include:

- Reducing the number of projected patterns while preserving accuracy and robustness.

- Enhancing noise immunity and overall algorithm robustness for adverse measurement conditions.

- Reducing computational complexity to enable faster processing with lower resource demands.

- Improving phase unwrapping precision under practical constraints of pattern count, speed, and range.

- Achieving high-speed real-time measurements by minimizing per-reconstruction image counts, improving hardware, and simplifying computation.

Phase unwrapping remains a central problem in structured-light 3D measurement. Temporal methods are well suited for high-precision static measurements but face efficiency challenges for fast scenarios. Spatial methods provide high speed but require improved noise handling. Deep learning offers a path to combine the advantages of both, but addressing dataset, training, and deployment issues is essential. Other specialized techniques provide targeted solutions for particular measurement problems. Achieving both high speed and high precision will continue to be an important research focus.

This research was supported by the National Natural Science Foundation of China (grant 52075147) and the UK EPSRC (grants EP/P006930/1 and EP/T024844/1).