Overview

In recent years, more companies have entered research on in-memory computing, and in-memory compute chips are gradually reaching commercial use. Companies are following diverse paths: using different memory types, starting from low-to-medium compute or targeting high-performance in-memory compute chips, and adopting either analog or digital in-memory computing techniques.

So how should one choose a suitable memory type, design high-compute in-memory chips, and choose between analog and digital approaches? Chen Wei, chairman of Qianxin Technology, provided detailed answers in an interview with Electronics Enthusiast.

1. Choosing the memory type

Qianxin Technology focuses on high-compute in-memory chip research and has explored SRAM, MRAM, and RRAM. Sample boards for SRAM-based in-memory products are currently under test, and the company is working with the Chinese Academy of Sciences, Tsinghua University and other institutions to optimize designs for RRAM- and MRAM-based in-memory circuits.

The technical team has long experience with various memories. More than ten years ago, Dr. Chen Wei served as technical lead for a national project to develop one of China's most advanced NOR flash chips and memory compilers. As project lead he established China's first 3D NAND flash design team and has more than a decade of experience with SRAM, RRAM, and MRAM, holding multiple patents.

Qianxin Technology has its own classification of these memories. Chen emphasized that the memory for an in-memory design should be chosen from customer and application requirements, that is, from the actual use case.

Although all of these are in-memory approaches, different applications emphasize either storage-centric or compute-centric characteristics. For example, edge scenarios such as voice recognition or face recognition prioritize low power and low cost, so combining storage and compute in a single device can effectively reduce cost.

In cloud computing, models are very large and training requires frequent updates, so slower non-volatile memories such as flash often cannot match the read/write speed of SRAM, DRAM, or RRAM. Those scenarios tend to favor memories with stronger compute characteristics.

In short, if the priority is low cost and low standby power, non-volatile memories such as flash, RRAM, or MRAM can be appropriate. For large-scale compute, RRAM, SRAM, and DRAM are commonly chosen. The specific memory choice also depends on each vendor's patent portfolio and process maturity.

2. Designing high-compute in-memory chips

Fields such as autonomous driving and data centers have clear demand for high compute, so companies are investing in high-compute in-memory chip R&D and deployment. Apart from capacity limits for some NOR flash, the memory types mentioned earlier can generally be used for high-compute in-memory designs.

For flash-based in-memory computing, the primary route uses NOR flash (not the NAND used in USB drives). NOR flash typically has limited capacity, so achieving over 1 TOPS on a single NOR device is costly. Industry definitions of high compute often refer to 20–100 TOPS or more, so NOR flash is not ideal for very high compute on a single die. Other memories such as SRAM and RRAM have demonstrated feasibility for high-compute in-memory implementations.

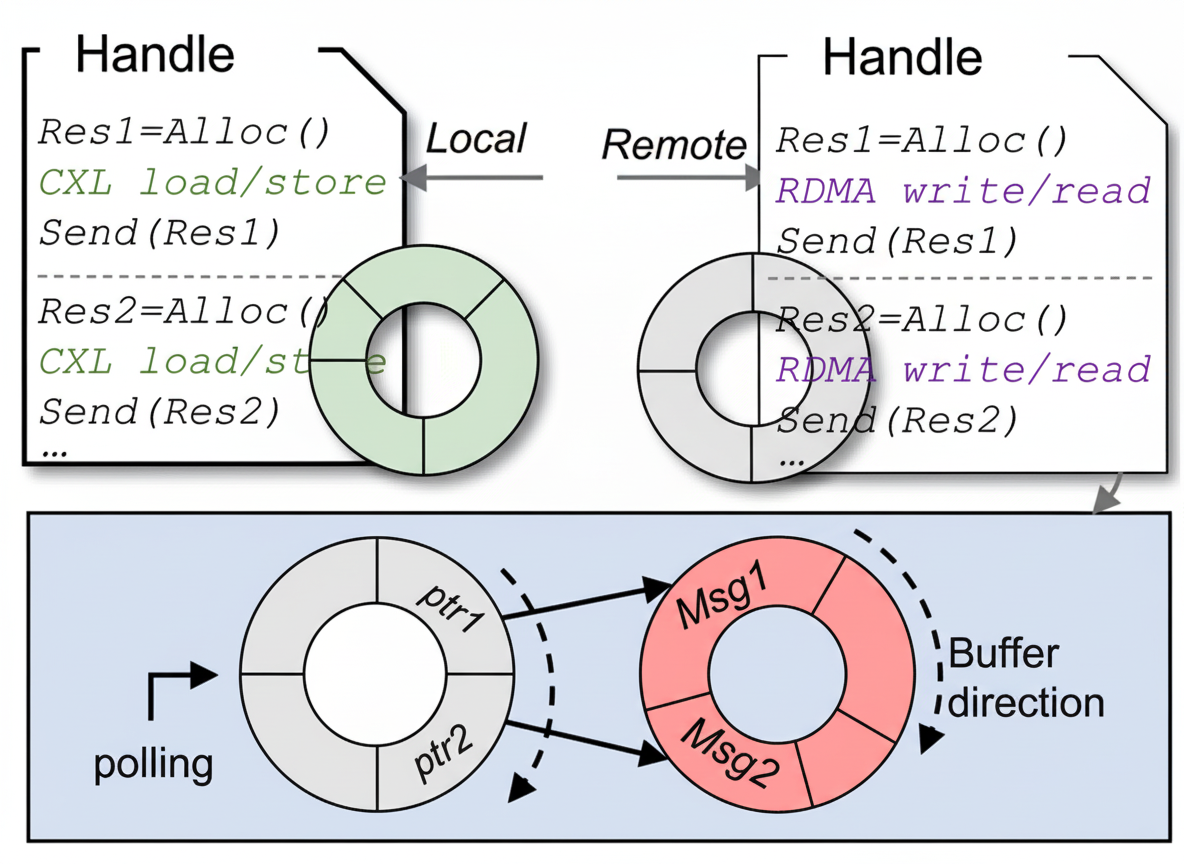

High-compute in-memory architectures are designed differently from low-compute ones. According to Chen, the compute architecture inside an in-memory design varies with the target compute. High-compute cores include specialized peripheral circuits to increase throughput. At the system level, high compute is often reached by running many cores in parallel, integrating multiple lower-compute cores into a larger compute unit, similar to how GPUs integrate many smaller compute cores.
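The many-cores-in-parallel idea can be illustrated with a small sketch. It assumes, purely for illustration, that each core holds a tile of weight rows and computes its share of a matrix-vector product; the names `core_matvec` and `multicore_matvec` and the row-wise split are hypothetical and not taken from any Qianxin design.

```python
def core_matvec(weight_rows, x):
    """One hypothetical core: multiply its tile of weight rows by the input vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weight_rows]


def multicore_matvec(weights, x, n_cores):
    """Split the weight rows into n_cores tiles and concatenate the partial outputs."""
    rows_per_core = -(-len(weights) // n_cores)  # ceiling division
    outputs = []
    for core in range(n_cores):
        tile = weights[core * rows_per_core:(core + 1) * rows_per_core]
        # On hardware the tiles would run in parallel; here they run in turn.
        outputs.extend(core_matvec(tile, x))
    return outputs


weights = [[1, 2, 3], [4, 5, 6], [7, 8, 9], [1, 0, 1]]
x = [1, 0, 2]
single = core_matvec(weights, x)                 # one large core
tiled = multicore_matvec(weights, x, n_cores=2)  # two smaller cores
print(single == tiled)
```

Because each output row depends only on its own weights, the tiles need no communication with each other, which is one reason this kind of workload scales across many small cores.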

Using non-volatile memories (NVMs) for very high compute faces process challenges. High-compute scenarios involve very frequent reads and writes, which accelerates device wear: after roughly 10^6 to 10^8 program/erase cycles, errors can occur and lead to computing errors. As a result, worldwide use of NVMs for designs above 200 TOPS remains limited. For safety-critical applications such as autonomous driving, these failures must be carefully managed.
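To get a feel for what an endurance of 10^6 to 10^8 cycles means, the following back-of-the-envelope sketch converts a cycle budget into a lifetime. The per-cell write rates are assumptions chosen only for illustration; real rates depend on the workload and on any wear leveling, neither of which the article specifies.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600


def years_until_wearout(endurance_cycles, writes_per_second):
    """Years before one cell exhausts its program/erase budget at a constant write rate."""
    return endurance_cycles / writes_per_second / SECONDS_PER_YEAR


# Endurance range quoted in the article; write rates are hypothetical.
for endurance in (1e6, 1e8):
    for rate in (1.0, 1000.0):
        years = years_until_wearout(endurance, rate)
        print(f"endurance={endurance:.0e} cycles, {rate:g} writes/s -> {years:.5f} years")
```

Even at one rewrite per second, a cell rated for 10^8 cycles lasts only about three years, and one rated for 10^6 cycles lasts under two weeks. Workloads that update weights often therefore run into the endurance limit quickly.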

To address this, Chen noted two approaches: improve device process technology and implement redundant device designs. For compute-centric scenarios, redundancy can mitigate wear but may lower performance compared with advanced-process SRAM and increase area, reducing some of the cost advantage of NVM-based approaches.

In summary, the memory choice for high-compute in-memory designs depends on the application scenario, customer requirements, and the process maturity of the memory device.

3. Differences between analog and digital in-memory techniques

Whether to use analog or digital techniques is a common question. Chen explained that in applications closely connected to sensors, analog in-memory computing is often recommended, while complex compute structures are usually better implemented digitally. Analog circuits incur high cost for complex calculations but have natural advantages when integrated with sensors.

Thus, the choice depends on the scenario. For low-compute needs such as voice recognition or small-scale image recognition, analog is often suitable. For large, complex cloud compute, digital approaches are more common.

For example, a memristor array can store matrix coefficients or weights as cell conductances, and inputs are applied as different voltages. By Ohm's law, each cell passes a current equal to its input voltage multiplied by its conductance. Summing the currents that flow into a shared line (Kirchhoff's current law) implements a multiply-accumulate operation, which is the basic idea behind using analog circuits for deep learning and other computations.
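The multiply-accumulate described above can be written out as a minimal numerical sketch of an idealized crossbar. It assumes perfectly linear cells with no wire resistance, noise, or converter quantization; the function name `crossbar_mac` and the conductance values are hypothetical.

```python
def crossbar_mac(conductances, voltages):
    """Return the column currents of an idealized memristor crossbar.

    conductances[i][j]: conductance in siemens of the cell at row i, column j
    voltages[i]:        input voltage in volts applied to row i
    """
    n_cols = len(conductances[0])
    currents = [0.0] * n_cols
    for i, row in enumerate(conductances):
        for j, g in enumerate(row):
            # Ohm's law: the current through one cell is I = G * V.
            cell_current = g * voltages[i]
            # Kirchhoff's current law: currents on a shared column wire add up.
            currents[j] += cell_current
    return currents


G = [[1e-6, 2e-6],
     [3e-6, 4e-6]]   # stored weights (hypothetical values)
V = [0.5, 1.0]       # inputs encoded as voltages
I = crossbar_mac(G, V)
print(I)
```

Each column current is the dot product of the input voltages with that column's conductances, so the whole array computes a matrix-vector product in a single read step.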

Simply put, the digital approach replaces multi-level analog cells with discrete ones. An analog cell stores an analog value equivalent to several bits (for example 8-bit or 4-bit effective precision). In a digital approach, a cell stores a single bit or a digital word. Analog storage offers higher density, but it is harder to perform complex operations directly on it. Digital circuits are discrete, more flexible, and better suited to complex computations.
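One way to picture the density difference is to count cells. The sketch below assumes unsigned 4-bit weights and a digital design that stores one bit per cell and combines the bit planes by shift-and-add. This is a common textbook scheme used here only as an illustration, not a description of any specific product; `digital_dot` and `cells_needed` are hypothetical names.

```python
def digital_dot(weights, inputs, n_bits=4):
    """Dot product computed one weight bit plane at a time (shift-and-add)."""
    total = 0
    for b in range(n_bits):
        # Each single-bit cell contributes its input only if its stored bit is 1.
        plane_sum = sum(((w >> b) & 1) * x for w, x in zip(weights, inputs))
        total += plane_sum << b  # weight the plane by 2**b
    return total


def cells_needed(n_weights, n_bits, bits_per_cell):
    """Number of memory cells needed to hold n_weights values of n_bits each."""
    cells_per_weight = -(-n_bits // bits_per_cell)  # ceiling division
    return n_weights * cells_per_weight


weights = [3, 10, 15]   # unsigned 4-bit weights (hypothetical)
inputs = [2, 1, 4]
result = digital_dot(weights, inputs)
analog_cells = cells_needed(len(weights), n_bits=4, bits_per_cell=4)
digital_cells = cells_needed(len(weights), n_bits=4, bits_per_cell=1)
print(result, analog_cells, digital_cells)
```

Under these assumptions the digital version needs four times as many cells as a 4-bit analog cell array, but every intermediate value is an exact integer, which makes more complex operations easier to compose.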

4. Challenges and future

There are several current challenges. The first is ecosystem development. Although the idea of in-memory computing is not entirely new, practical adoption is still at an early stage, and a general-purpose compiler and toolchain ecosystem is not yet mature. Each deployment therefore requires adaptation work, while customers expect established tooling and support.

The second is meeting customer needs. AI chip deployment requires designing products from the customer's perspective. Many compute scenarios require not just AI inference but also other types of processing. For example, a voice recognition application may require AI inference plus noise reduction algorithms. Designs need scenario-specific optimizations based on customer requirements.

Regarding the technology trajectory, Chen sees two phases. Currently the market is in a development and expansion phase, and many customers have not fully recognized the advantages of in-memory computing. If a prominent vendor demonstrates that in-memory chips outperform traditional von Neumann AI chips, rapid adoption could follow in the near term.

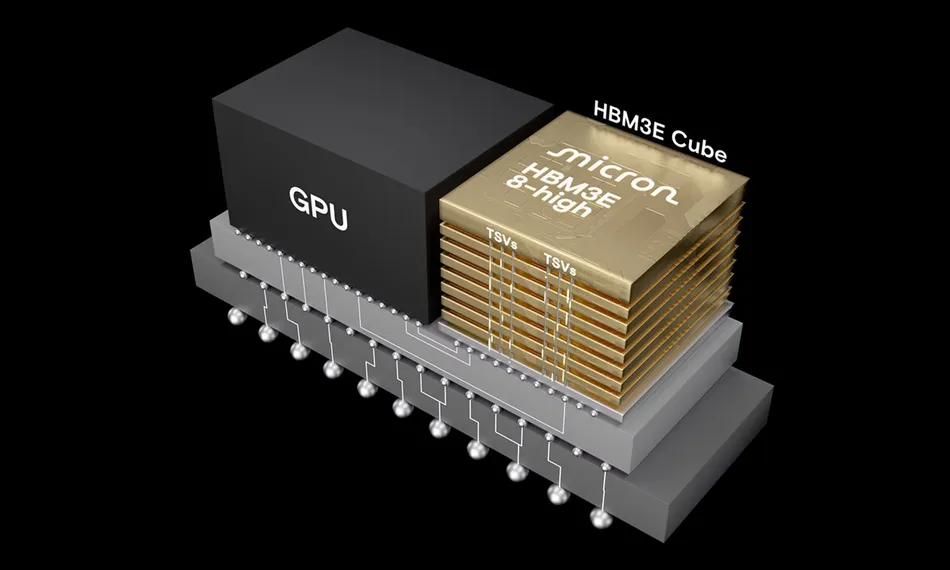

Over the longer term, in-memory computing is expected to integrate well with GPU and CPU technologies, becoming computation cores within CPUs, GPUs, DPUs, or other AI accelerators. In-memory technologies could act as compute engines that augment existing processor architectures.

From a commercialization perspective, at least two in-memory compute chips are currently in volume production in China, and more products are expected to reach commercial readiness this year. Current deployments are concentrated at the edge, such as wearable devices, voice recognition, and small vision-model scenarios.

In cloud computing, commercialization is expected to advance relatively quickly; products could begin entering large internet companies in China within a year or two. Autonomous driving will take longer, since moving from architecture design to large-scale in-vehicle deployment typically requires about five years. Assuming design and tape-out are completed in the first two years, roughly three additional years are usually needed for adaptation, testing, and volume production before large-scale deployment.