Humans rely on vision to obtain information, judge direction, and estimate distance. When a vehicle assists with driving, what serves as its "eyes"?

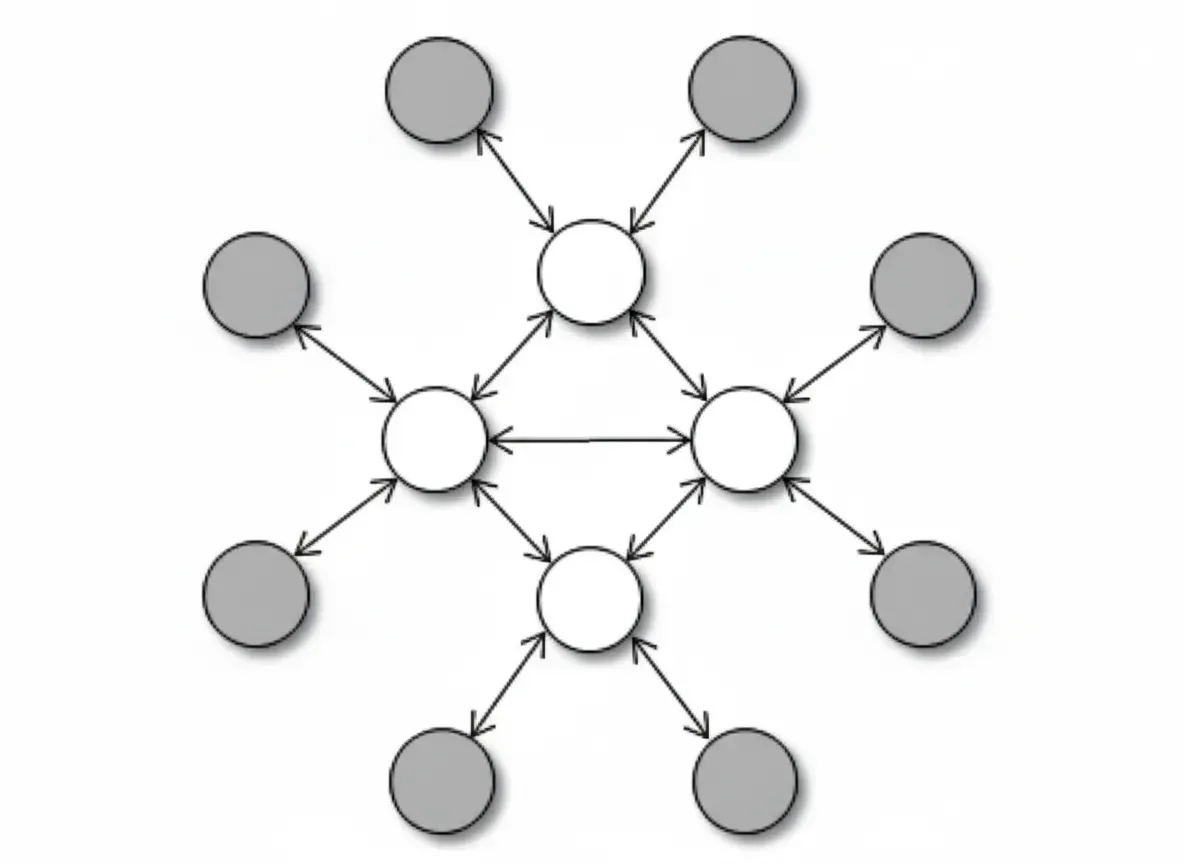

The answer is vehicle sensors. They continuously collect environmental information and send it to the vehicle's computing platform. Perception algorithms reconstruct the surrounding environment from sensor data, and decision algorithms use that perception to plan the vehicle's path.

Camera

Cameras are the most common automotive sensor. Mounted around the vehicle, they capture images of the environment from multiple angles. They entered commercial use in the 1990s and are now widespread. As the sensor closest to the human eye, cameras provide rich color and detail, such as lane markings, signs, and traffic lights. However, they share the eye's limitations: in low light, backlighting, or other conditions that impair visibility, cameras can fail to detect targets.

Visual perception relies on software algorithms to parse high-density image data, identifying objects and estimating their distance. Unknown or unusual objects, such as irregular obstacles on the road, may not be fully recognized, which can lead to incorrect perception and downstream decision errors.

For this reason, camera-only driving solutions usually remain at L2. Functions at L3 and above still face many corner cases that are difficult to solve with vision alone, so other sensors are needed to provide complementary information.

Millimeter-wave Radar

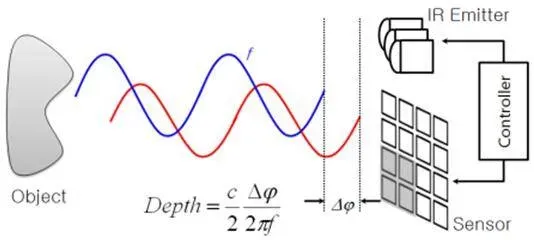

Millimeter-wave radar is an active sensor that uses millimeter-wave frequencies for ranging, detection, tracking, and imaging. Because it transmits its own electromagnetic waves, it can penetrate smoke and dust and is largely unaffected by lighting and weather. This enables reliable detection of surrounding objects and relatively accurate distance and velocity measurements.
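The article does not say how radar obtains velocity. One widely used mechanism is the Doppler shift of the reflected wave, and the sketch below shows that relationship only. The 77 GHz carrier is an assumed example value, and the function name is hypothetical rather than part of any radar API.

```python
C = 299_792_458.0  # speed of light in m/s


def radial_velocity_mps(doppler_shift_hz: float, carrier_hz: float = 77e9) -> float:
    """Radial (line-of-sight) speed implied by a Doppler shift.

    The factor of 2 accounts for the wave travelling to the target and back.
    carrier_hz defaults to 77 GHz, an assumed example value.
    """
    return doppler_shift_hz * C / (2.0 * carrier_hz)


# A shift of about 5.1 kHz at 77 GHz corresponds to roughly 10 m/s.
print(round(radial_velocity_mps(5137.0), 2))
```

Only the velocity component along the line of sight is measured this way, so a target moving purely sideways relative to the radar produces little or no shift.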

However, millimeter-wave radar has limited perception precision and cannot produce image-level detail. It detects targets by reflections, diffuse reflections, and scattering from object surfaces, so detection accuracy drops for low-reflectivity targets such as pedestrians, animals, and bicycles. Static objects on the road may also be filtered out as clutter.

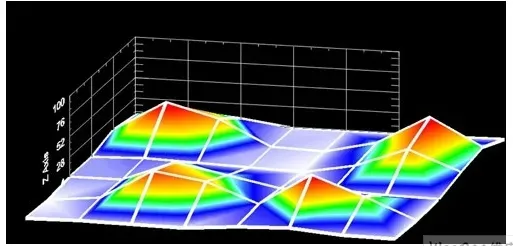

4D millimeter-wave radar is a variant that adds elevation information to the range, azimuth, and velocity measured by traditional 3D radar, but its resolution still lags far behind lidar. A typical 4D millimeter-wave radar outputs on the order of 1,000 points per frame, while a 128-line lidar can output on the order of hundreds of thousands of points per frame, a difference of about two orders of magnitude in data volume.
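The size of that gap can be checked with a few lines of arithmetic. The sketch below is illustrative: the radar figure of 1,000 points comes from the text, while the 1,800 horizontal steps per lidar line is an assumed value chosen so the total lands in the "hundreds of thousands" range.

```python
import math


def lidar_points_per_frame(lines: int, horizontal_steps: int) -> int:
    """Points in one full sweep: one return per line per horizontal step."""
    return lines * horizontal_steps


def orders_of_magnitude(smaller: float, larger: float) -> float:
    """Base-10 logarithm of the ratio between two counts."""
    return math.log10(larger / smaller)


radar_points = 1_000  # order of magnitude given in the text
lidar_points = lidar_points_per_frame(128, 1_800)  # 1,800 steps is an assumption

print(lidar_points)  # 230400
print(round(orders_of_magnitude(radar_points, lidar_points), 1))
```

With these assumed numbers the ratio is a little over two orders of magnitude, consistent with the estimate above.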

Lidar

Lidar is also an active sensor. The most common method is ToF (time-of-flight) ranging, which measures distance and position by emitting laser pulses and timing their return after they reflect off surrounding objects. By emitting millions of laser points per second, lidar provides three-dimensional position information with image-level resolution, clearly representing details of pedestrians, crosswalks, vehicles, trees, and other objects. Higher point density yields higher resolution and a more complete reconstruction of the real world.
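The ToF principle reduces to one formula: the pulse travels to the object and back, so the range is half the round-trip time multiplied by the speed of light. The sketch below shows that calculation and how a range plus the beam's pointing angles could become a 3D point. The function names and the angle convention (x forward, y left, z up) are assumptions for illustration, not any particular lidar's interface.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def tof_range_m(round_trip_s: float) -> float:
    """Range from round-trip time: the pulse covers the distance twice."""
    return C * round_trip_s / 2.0


def to_cartesian(range_m: float, azimuth_rad: float, elevation_rad: float):
    """Convert one return to (x, y, z) under the assumed axis convention."""
    horizontal = range_m * math.cos(elevation_rad)
    x = horizontal * math.cos(azimuth_rad)
    y = horizontal * math.sin(azimuth_rad)
    z = range_m * math.sin(elevation_rad)
    return x, y, z


# A return after 1 microsecond corresponds to a target about 150 m away.
distance = tof_range_m(1e-6)
print(round(distance, 3))
print(to_cartesian(distance, math.radians(10.0), math.radians(-2.0)))
```

Repeating this for every pulse in a sweep yields the point cloud described above.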

Because lidar actively emits light, it is largely unaffected by ambient lighting and can operate accurately in the dark. Lidar measures an object's distance and three-dimensional extent directly rather than inferring them, so it performs better at detecting small or irregular obstacles and in complex scenarios such as close-range cut-ins, tunnels, and parking structures. Its performance can still be degraded by severe weather such as heavy rain, snow, or dense fog.

Comparison and Complementary Roles

In summary, cameras, millimeter-wave radar, and lidar are the most common sensors on autonomous vehicles, each with strengths and limitations. Combined, they provide complementary capabilities.

Cameras are passive sensors that capture rich color information but are sensitive to lighting and may have lower confidence in poor-visibility conditions. Millimeter-wave radar provides high-confidence distance and velocity information but has lower resolution and limited ability to distinguish object types such as pedestrians, bicycles, or small objects. Lidar excels in ranging, confidence, and object detail, though cost has historically been a drawback.
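The article does not describe a specific fusion method. As one illustration of how complementary sensors can be combined, the sketch below uses inverse-variance weighting, a standard technique in which more confident estimates of the same quantity receive more weight. The function name and the example variances are hypothetical.

```python
def fuse_estimates(estimates):
    """Fuse (value, variance) pairs by inverse-variance weighting.

    Returns (fused_value, fused_variance). A lower variance means the
    sensor is trusted more for this quantity.
    """
    if not estimates:
        raise ValueError("at least one estimate is required")
    if any(var <= 0 for _, var in estimates):
        raise ValueError("variances must be positive")
    weights = [1.0 / var for _, var in estimates]
    total = sum(weights)
    fused = sum(w * value for w, (value, _) in zip(weights, estimates)) / total
    return fused, 1.0 / total


# Hypothetical distance estimates (metres, variance) for one target.
radar = (50.0, 0.25)   # confident range measurement
camera = (53.0, 4.0)   # range inferred from the image, less certain
print(fuse_estimates([radar, camera]))
```

The fused distance stays close to the radar value because radar is assumed to be the more reliable range source here, while the camera would dominate for attributes such as color or object class.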

Lidar cost has been falling rapidly, and more automakers now fit lidar to production models to improve the safety and comfort of automated driving systems. Advances in integration and automated manufacturing have reduced both component count and production cost, bringing lidar prices well below the very high levels of several years ago.

Ultimately, whether an automated driving system is effective depends on user experience. At the perception layer, the key is to have sensors play complementary roles and make the best use of their respective strengths. As perception software continues to evolve, more hardware potential can be unlocked, improving ride smoothness and comfort for users.