This article describes a novel and cost-effective reconstruction approach that uses a pre-built LiDAR map as a fixed constraint to address scale ambiguity in monocular camera reconstruction.

Overview

Advanced monocular reconstruction techniques typically rely on structure-from-motion (SfM) pipelines. However, these methods often produce reconstructions that lack true scale and accumulate unavoidable drift over time. In contrast, maps built from LiDAR scans are widely used in large-scale urban reconstruction because they provide precise range measurements that are essentially missing in vision-only methods. Prior work has combined simultaneous LiDAR and camera measurements to recover accurate scale and color details in maps, but those results depend on precise extrinsic calibration and tight time synchronization. This paper proposes a novel and cost-effective reconstruction pipeline that uses a pre-built LiDAR map as a fixed constraint to resolve the scale ambiguity in monocular reconstructions. To our knowledge, this method is the first to register images to a point cloud map without requiring synchronized capture of camera and LiDAR data, enabling reconstruction of more detail in regions of interest. To support further research, we release Colmap-PCD, an open-source tool based on the Colmap algorithm that enables precise image-to-point-cloud registration.

Main contributions

Establishing accurate 2D-3D correspondences between images and reconstructed objects is critical for precise image localization. One promising strategy is to establish correspondences between image features and LiDAR-derived planes, where LiDAR planes act as alternative 3D features extracted from a LiDAR point cloud map. For this purpose we build on Colmap, a robust SfM-based software tool. We enhance the pipeline by incorporating a pre-built LiDAR map as a fixed constraint within the factor graph optimization. This ensures that the reconstruction scale of the images closely matches the scale of the LiDAR map, improving registration accuracy. The method is evaluated on a self-collected dataset, and results demonstrate clear improvements over the standard Colmap pipeline. Specifically, our method produces reconstructions with accurate scale, which the original Colmap cannot recover from images alone. The main contributions are:

- Introduce Colmap-PCD, an image-to-point-cloud registration pipeline that optimizes image localization using a LiDAR map. It produces precise localization and reconstructions with accurate scale without synchronized LiDAR and camera data collection.

- Demonstrate the effectiveness of Colmap-PCD through comprehensive testing on a self-collected dataset.

Colmap: brief overview

Correspondence search: The correspondence search includes feature extraction and feature matching, identifying candidate overlapping regions across input images by matching 2D feature points. To improve robustness, Colmap selects the most reliable transformation model by computing the number of inliers under different transformations.

Initialization: A suitable image pair is chosen for initial reconstruction, ensuring it shares sufficient common observations with multiple images. Triangulation is then used to generate an initial set of 3D points.

Selecting and registering the next image: The next image to register is the candidate that sees the most points in the current model and whose matches are most uniformly distributed across the image. Its pose is then estimated with a Perspective-n-Point (PnP) solver.
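The selection criterion above can be sketched as a score that rewards both the number of visible points and how evenly they cover the image. The multi-resolution grid below is one common way to express this. The function name `visibility_score`, the number of levels, and the per-level weights are illustrative assumptions, not Colmap's exact implementation.

```python
def visibility_score(points_2d, width, height, levels=3):
    """Score an image by how many model points it sees and how evenly
    they are spread, using a pyramid of grids (illustrative sketch)."""
    score = 0
    for level in range(1, levels + 1):
        cells_per_side = 2 ** level
        occupied = set()
        for x, y in points_2d:
            # Map the pixel to a grid cell, clamping points on the border.
            cx = min(int(x / width * cells_per_side), cells_per_side - 1)
            cy = min(int(y / height * cells_per_side), cells_per_side - 1)
            occupied.add((cx, cy))
        # Finer levels weigh more, so spread-out points beat clustered ones.
        score += len(occupied) * cells_per_side ** 2
    return score


# Two hypothetical candidates with the same number of visible points.
clustered = [(10, 10), (12, 11), (14, 9), (11, 13)]
spread = [(10, 10), (600, 20), (30, 450), (620, 460)]
best = max([clustered, spread], key=lambda pts: visibility_score(pts, 640, 480))
```

With equal point counts, the candidate whose points occupy more grid cells receives the higher score and would be registered first.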

Triangulating measurements: Observations in each newly registered image are triangulated to add new scene points to the existing 3D structure. Because feature tracks are noisy, Colmap treats the feature track of a single 3D point as a set of measurements that can be paired. Paired measurements are triangulated with the direct linear transform (DLT) method, with RANSAC providing robust sampling.

Incremental bundle adjustment: After each triangulation step, local bundle adjustment (BA) refines the parameters of the newly registered image and of the registered images that share the most observations with it.

Global bundle adjustment: Global BA jointly refines the 3D model and the parameters of all registered images. To save time, it is run only after the model has grown by a certain amount since the last global BA, rather than after every new image.

Colmap-PCD algorithm

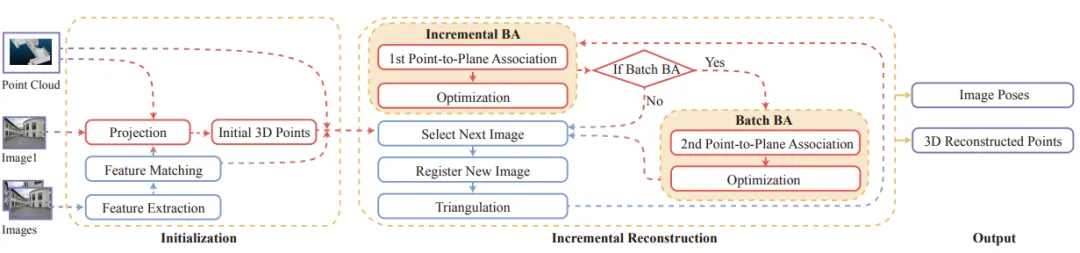

Colmap-PCD matches 3D points reconstructed from images with planes extracted from a LiDAR point cloud map and jointly minimizes reprojection error and the distance between 3D points and corresponding LiDAR planes. The overall pipeline is illustrated in Figure 2.

Figure 2: Colmap-PCD workflow. Processes in blue correspond to the original Colmap pipeline; processes in red are LiDAR-related.
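The joint minimization described above can be written, under stated assumptions, as a single objective. The notation here is introduced for illustration and does not come from the original text: $T_i$ is the pose of image $i$, $X_j$ a reconstructed 3D point, $x_{ij}$ its observed 2D feature, $\pi$ the camera projection, $(p_j, n_j)$ a LiDAR point and the unit normal of its associated plane, $\rho$ a robust loss, and $\lambda$ a weight that balances the two terms.

```latex
\min_{\{T_i\},\,\{X_j\}} \;
\sum_{(i,j)\in\mathcal{O}} \rho\!\left( \left\| \pi(T_i, X_j) - x_{ij} \right\|^{2} \right)
\;+\; \lambda \sum_{j\in\mathcal{A}} \rho\!\left( \left( n_j^{\top} (X_j - p_j) \right)^{2} \right)
```

Here $\mathcal{O}$ is the set of image observations and $\mathcal{A}$ the set of 3D points that have been associated with a LiDAR plane. The LiDAR quantities $p_j$ and $n_j$ are not optimized, which reflects the role of the map as a fixed constraint.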

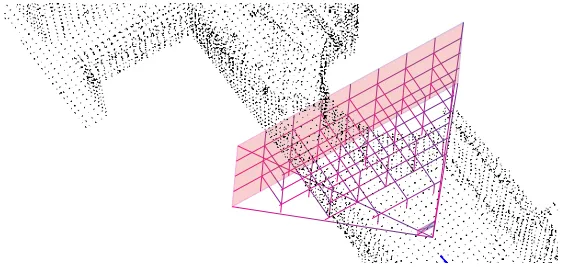

Projection

- Project LiDAR points into the known camera imaging plane.

- Partition the LiDAR point cloud into fixed-size voxels, and form a quadrilateral pyramid (the camera's view frustum) from four lines that meet at the camera center.

- For each voxel, transform LiDAR points from world coordinates to the camera frame and project them onto the imaging plane.

Figure 3: LiDAR points projected into the camera frustum. The apex of the frustum represents the camera center.
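The projection steps can be sketched as follows. This is a minimal illustration that assumes an ideal pinhole camera without distortion and a world-to-camera pose given as a rotation `R` and translation `t`. All function names and parameters are hypothetical. For brevity the sketch tests every point individually and omits any voxel-level culling against the frustum.

```python
import math


def voxel_key(point, voxel_size):
    """Index of the fixed-size voxel containing a world-frame point."""
    return tuple(math.floor(c / voxel_size) for c in point)


def world_to_camera(point, R, t):
    """Apply X_cam = R * X_world + t (R as three rows, t as a 3-vector)."""
    return [sum(R[r][c] * point[c] for c in range(3)) + t[r] for r in range(3)]


def project(point_cam, fx, fy, cx, cy, width, height):
    """Pinhole projection; returns (u, v), or None if outside the frustum."""
    x, y, z = point_cam
    if z <= 0:  # behind the camera
        return None
    u = fx * x / z + cx
    v = fy * y / z + cy
    if 0 <= u < width and 0 <= v < height:
        return (u, v)
    return None


def project_map(lidar_points, voxel_size, R, t, intrinsics, width, height):
    """Group LiDAR points by voxel, then project each voxel's points."""
    voxels = {}
    for p in lidar_points:
        voxels.setdefault(voxel_key(p, voxel_size), []).append(p)
    pixels = {}
    for key, pts in voxels.items():
        hits = []
        for p in pts:
            uv = project(world_to_camera(p, R, t), *intrinsics, width, height)
            if uv is not None:
                hits.append((uv, p))
        if hits:
            pixels[key] = hits
    return pixels
```

Keeping the voxel key alongside each projected pixel makes it possible to look up the originating LiDAR points later, for example during point-to-plane association.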

Point-to-plane association

- Associate 3D points with LiDAR points so they align with corresponding LiDAR planes as closely as possible.

- Use two methods for point-to-plane association: depth projection and nearest neighbor search.

- In depth projection, select the LiDAR point with the smallest angular difference as the corresponding LiDAR point.

- Use nearest neighbor search for all point-to-plane association steps.
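The two association strategies can be illustrated with a small sketch. It assumes LiDAR points are already expressed in the appropriate frame (camera frame for depth projection, world frame for nearest neighbor search) and uses a brute-force search where a real system would likely use a spatial index such as a k-d tree. The function names and the `max_dist` threshold are hypothetical.

```python
import math


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def _norm(a):
    return math.sqrt(_dot(a, a))


def pixel_ray(u, v, fx, fy, cx, cy):
    """Viewing ray of a pixel in the camera frame (not normalized)."""
    return ((u - cx) / fx, (v - cy) / fy, 1.0)


def associate_by_depth_projection(ray, lidar_points_cam):
    """Index of the LiDAR point (camera frame) whose direction makes the
    smallest angle with the feature's viewing ray.
    Assumes no LiDAR point lies exactly at the camera center."""
    best, best_angle = None, math.inf
    for i, p in enumerate(lidar_points_cam):
        cos_a = _dot(ray, p) / (_norm(ray) * _norm(p))
        angle = math.acos(max(-1.0, min(1.0, cos_a)))
        if angle < best_angle:
            best, best_angle = i, angle
    return best


def associate_by_nearest_neighbor(point, lidar_points, max_dist):
    """Index of the closest LiDAR point, or None if farther than max_dist."""
    best, best_d = None, max_dist
    for i, p in enumerate(lidar_points):
        d = math.dist(point, p)
        if d <= best_d:
            best, best_d = i, d
    return best
```

Depth projection needs only a 2D feature and a camera pose, so it can be used before a 3D point exists. Nearest neighbor search requires an already triangulated 3D point.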

Initialization

- Image initialization requires a rough prior for camera poses.

- Initial images and their poses are set manually; poses do not need to be precise.

- Obtain corresponding LiDAR points for initial 2D feature points by projection to build an initial 3D model.

- As more images are added, the initial 3D model gradually converges to the correct state.

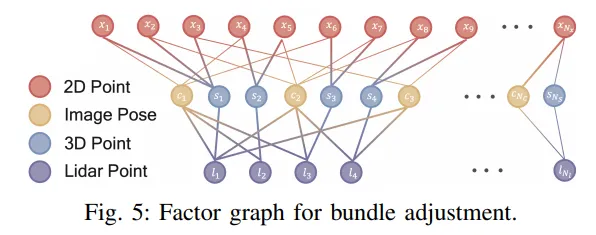

Bundle adjustment

There are three types of bundle adjustment: incremental BA, batch BA, and whole-map BA.

- Perform incremental BA after triangulating a new image to mitigate cumulative error.

- Execute batch BA to save time and build the 3D model containing camera poses and 3D points.

- Whole-map BA further optimizes the entire model by adding 2D features, image poses, 3D points, and LiDAR points into the factor graph for joint optimization.

Figure: Factor graph used for bundle adjustment.
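As a rough illustration of the two factor types in such a graph, the sketch below evaluates a reprojection factor and a point-to-plane factor and sums their squared residuals. It assumes a pinhole camera, 3D points already expressed in the camera frame for the reprojection term, unit plane normals, and a hypothetical weight `plane_weight`. A real bundle adjustment would pass these residuals to a nonlinear least-squares solver instead of only evaluating the cost.

```python
def reprojection_residual(point_cam, observation, fx, fy, cx, cy):
    """Difference between the projected 3D point and the observed feature."""
    x, y, z = point_cam
    u = fx * x / z + cx
    v = fy * y / z + cy
    return (u - observation[0], v - observation[1])


def point_to_plane_residual(point, plane_point, plane_normal):
    """Signed distance from a 3D point to a LiDAR plane (unit normal assumed)."""
    return sum(n * (a - b) for n, a, b in zip(plane_normal, point, plane_point))


def total_cost(reproj_factors, plane_factors, plane_weight=1.0):
    """Sum of squared residuals over both factor types.
    LiDAR plane points and normals are treated as fixed inputs."""
    cost = 0.0
    for point_cam, obs, intrinsics in reproj_factors:
        du, dv = reprojection_residual(point_cam, obs, *intrinsics)
        cost += du * du + dv * dv
    for point, plane_point, normal in plane_factors:
        d = point_to_plane_residual(point, plane_point, normal)
        cost += plane_weight * d * d
    return cost
```

Because the LiDAR quantities enter only as constants, lowering the second term pulls the reconstructed points toward the map, which is what ties the image reconstruction to the map's metric scale.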

Incremental reconstruction with known poses

If rough camera poses are available from some sensor measurements, such as GPS, incremental reconstruction can be started using those known poses.

Experiments

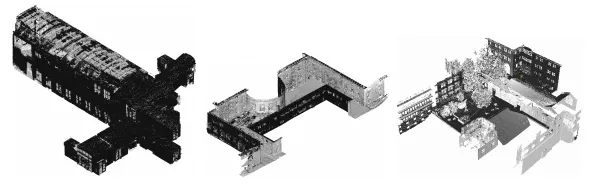

We validated Colmap-PCD's effectiveness and scale accuracy on three datasets collected on a campus, as shown in Figure 7. Each dataset includes a pre-built LiDAR point cloud map, 450 images, and corresponding camera intrinsics.

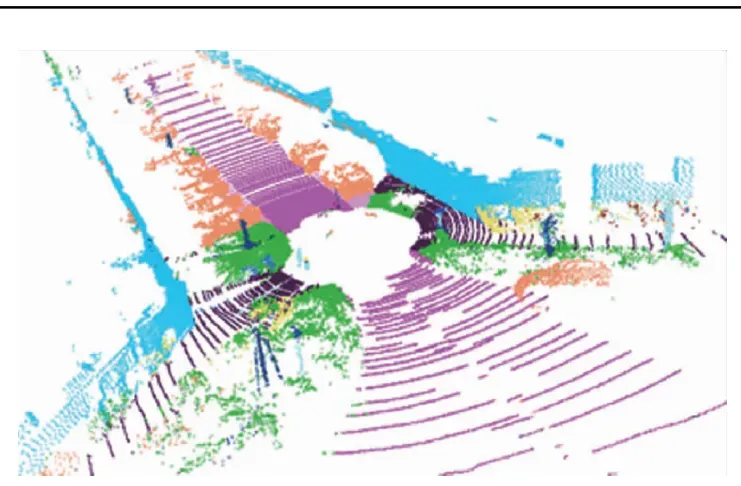

Figure: Collected LiDAR point cloud map.

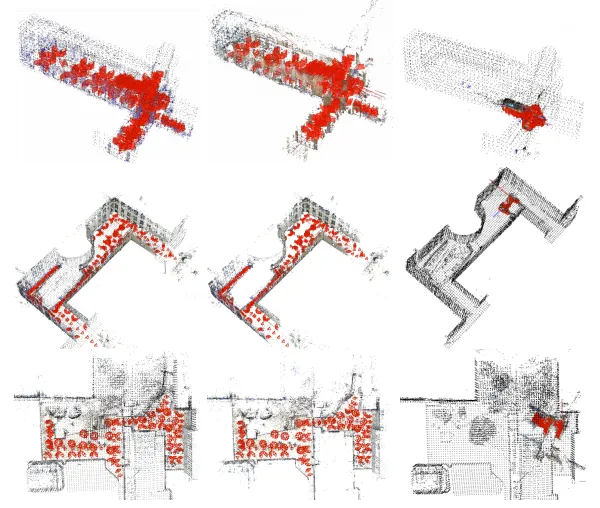

Localization results

The figure below shows reconstruction results from Colmap-PCD and the original Colmap. The left and center images show Colmap-PCD reconstructions: the left includes the LiDAR map and 3D reconstruction points, while the center shows only the 3D reconstruction points. The right image shows Colmap results including the LiDAR map and 3D reconstruction points. Camera poses are indicated by red pyramids. The Colmap-PCD reconstructions align almost perfectly with the LiDAR-generated map, indicating that the LiDAR point cloud map provides a strong constraint during image localization. By contrast, the scale of the Colmap-only reconstruction differs significantly from the real environment.

Figure: Reconstruction results from Colmap-PCD and Colmap.

We also back-project image points into the LiDAR point cloud. The back-projected points coincide closely with the LiDAR geometry, which indicates that Colmap-PCD achieves the accuracy required for image localization.

Impact of LiDAR planes on image localization

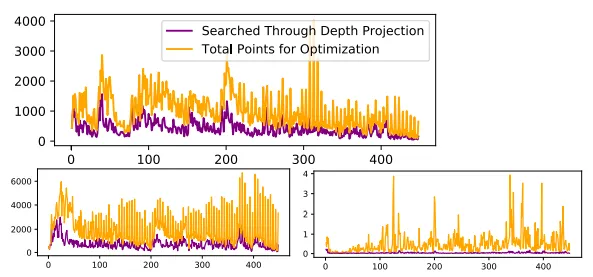

The first experiment investigates how many LiDAR planes can be used to improve pose estimation. Planes are found via point-to-plane association search. The x axis shows the number of image poses solved, and the y axis shows the number of LiDAR planes used in a single iteration. The purple line shows matches obtained via projection, while the yellow line indicates the total number of LiDAR planes successfully associated via both nearest neighbor search and projection. The results indicate that using a larger number of LiDAR planes benefits image localization.

Figure: Number of LiDAR planes used in incremental bundle adjustment (indoor, outdoor1, outdoor2).

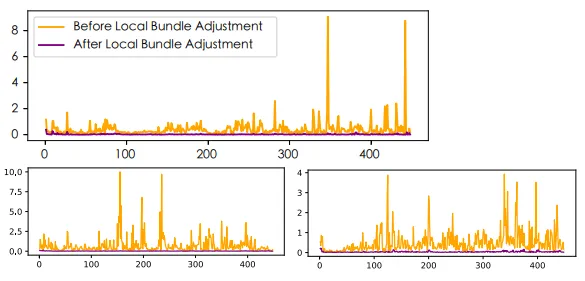

The second experiment shows the trend of point-to-plane distances before and after each local bundle adjustment. Distances converge to a minimum after each iteration, indicating that 3D points align well with appropriate locations in the point cloud map.

Figure: Distance between 3D points and corresponding LiDAR planes (indoor, outdoor1, outdoor2).

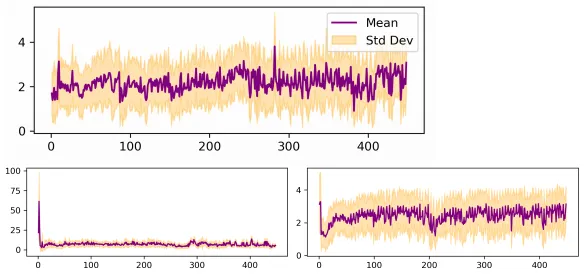

Reprojection error analysis

This experiment shows changes in reprojection error. The purple line denotes the mean reprojection error, while the yellow area depicts the distribution of reprojection error covariance.

Figure: Reprojection error (indoor, outdoor1, outdoor2).

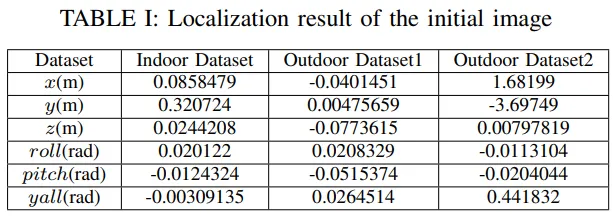

We captured the first image near the LiDAR map origin, so its initial position and orientation were set to zero. The final localization results for the initial image, summarized in Table I, show that as more images are added the initial image pose converges to the correct result even when the initial position error is relatively large.

Conclusion

This paper presents a method to align asynchronously acquired images with a LiDAR point cloud to obtain localization results with correct scale. Experiments on a self-collected dataset demonstrate the method's effectiveness and its stability as more images are added. The approach also allows flexible, detail-level adjustments within reconstruction regions, making it suitable for collaborative large-scale scene reconstruction. Overall, the algorithm contributes to image localization and large-scale scene reconstruction tasks and provides useful insights for future research in this area.