3D vision imaging is one of the primary means of perception in robotics. Imaging methods can be divided into optical and non-optical approaches. Optical methods are the most widely used and include time-of-flight, structured light, laser scanning, moiré fringe, laser speckle, interferometry, photogrammetry, laser tracking, shape-from-motion, shape-from-shading, and other shape-from-X techniques. This article introduces several representative schemes.

1. Time-of-Flight (TOF) 3D Imaging

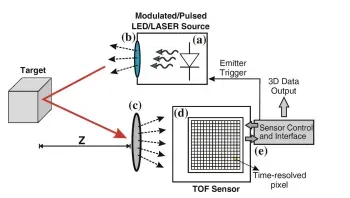

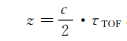

Time-of-flight (TOF) cameras determine depth per pixel by measuring the travel time of light.

In classical TOF measurement, a timer starts when a light pulse is emitted and stops when the detector receives the reflected echo, directly recording the round-trip time t. The object distance Z then follows from the round-trip relation Z = c·t/2, where c is the speed of light.

This ranging approach is also called direct TOF (D-TOF). D-TOF is commonly used for single-point ranging systems; scanning is usually required to obtain area coverage for 3D imaging.

Area TOF 3D imaging without scanning has only become feasible in recent years because implementing sub-nanosecond electronic timing at the pixel level is very challenging.
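A minimal sketch of the round-trip relation, and of why pixel-level timing is demanding, is given below. The function names are illustrative and not taken from any particular sensor SDK; the only physics assumed is that distance equals the speed of light times half the round-trip time.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def dtof_distance(round_trip_s: float) -> float:
    """Distance in metres for a measured round-trip time in seconds."""
    return C * round_trip_s / 2.0


def timing_resolution_for(depth_resolution_m: float) -> float:
    """Round-trip timing resolution (s) needed to resolve a given depth step."""
    return 2.0 * depth_resolution_m / C


if __name__ == "__main__":
    # A 10 ns round trip corresponds to roughly 1.5 m.
    print(f"{dtof_distance(10e-9):.3f} m")
    # Resolving 1 mm in depth requires timing on the order of picoseconds.
    print(f"{timing_resolution_for(1e-3) * 1e12:.2f} ps")
```

Under these assumptions, millimetre-level depth steps correspond to picosecond-level timing differences, which is consistent with the difficulty of per-pixel direct timing noted above.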

An alternative to direct timing is indirect TOF (I-TOF), where the round-trip time is inferred from time-gated intensity measurements. I-TOF does not require precise timing; instead it uses time-gated photon counters or charge integrators, which can be implemented at the pixel level. I-TOF, which combines electronic and optical mixing techniques, is the approach used in commercially deployed TOF cameras.
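One common way to infer the delay from gated measurements is a pulsed two-gate scheme, sketched below. This is an illustrative model, not the method of any specific camera: it assumes a rectangular light pulse of width T, a first gate open during the emitted pulse, a second gate open for the following interval of the same length, an echo delay between 0 and T, and no ambient light. Under those assumptions the echo charge splits between the gates in proportion to the delay.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def itof_two_gate_distance(q1: float, q2: float, pulse_width_s: float) -> float:
    """Estimate distance from two gated charge measurements.

    q1: charge collected while the emitted pulse is on (gate 1)
    q2: charge collected in the next interval of equal length (gate 2)
    Assumes 0 <= delay <= pulse_width_s and no ambient-light offset.
    """
    total = q1 + q2
    if total <= 0:
        raise ValueError("no echo signal collected")
    # The fraction of echo energy falling into gate 2 grows linearly with delay.
    delay_s = pulse_width_s * q2 / total
    return C * delay_s / 2.0


if __name__ == "__main__":
    # A quarter of the echo lands in gate 2 with a 40 ns pulse: 10 ns delay.
    print(f"{itof_two_gate_distance(3.0, 1.0, 40e-9):.3f} m")
```

Because only a charge ratio is needed, the estimate does not depend on the absolute echo strength, which is part of why this style of measurement can be built into each pixel.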

TOF imaging suits wide field-of-view, long-range, low-cost 3D acquisition. Its advantages are fast detection, a large field of view, a long working distance, and low cost; its drawbacks are lower precision and susceptibility to ambient light interference.

2. Scanning 3D Imaging

Scanning 3D imaging methods include scanning ranging, active triangulation, and dispersive confocal techniques. Dispersive confocal is a type of scanning ranging and is widely used in industries such as mobile phone and flat panel manufacturing, so it is discussed separately.

2.1 Scanning Ranging

Scanning ranging uses a collimated beam that scans across the target surface to measure 3D shape. Typical methods include:

- Single-point TOF methods, such as frequency-modulated continuous wave (FM-CW) ranging and pulsed ranging (lidar).

- Laser scattering interferometry, including multi-wavelength interferometry, holographic interferometry, and white-light speckle interferometry.

- Confocal methods, such as dispersive confocal and self-focusing confocal.

Among single-point scanning 3D methods, single-point TOF is suitable for long-range scanning but offers lower precision, typically at the millimeter level. Other single-point methods, such as single-point laser interferometry, confocal methods, and single-point laser active triangulation, provide higher precision, though interferometric methods are sensitive to environmental conditions. Line scanning achieves moderate precision with high efficiency. For 3D measurement at a robot arm end-effector, active laser triangulation and dispersive confocal methods are generally well suited.

2.2 Active Triangulation

Active triangulation is based on triangulation principles, using a collimated beam or one or more planar light sheets to scan the target surface and complete 3D measurement.
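The triangulation step for a single light sheet can be sketched as a ray-plane intersection. The example below is a simplified illustration under stated assumptions: an ideal pinhole camera at the origin with hypothetical intrinsics `fx`, `fy`, `cx`, `cy`, no lens distortion, and a calibrated laser plane expressed in the camera frame as normal · X = offset.

```python
def pixel_ray(u, v, fx, fy, cx, cy):
    """Viewing-ray direction through pixel (u, v) for an ideal pinhole camera."""
    return ((u - cx) / fx, (v - cy) / fy, 1.0)


def intersect_laser_plane(ray, normal, offset):
    """Intersect a camera ray (through the origin) with the plane normal.X = offset."""
    denom = sum(n * r for n, r in zip(normal, ray))
    if abs(denom) < 1e-12:
        raise ValueError("ray is parallel to the laser plane")
    scale = offset / denom
    return tuple(scale * r for r in ray)


if __name__ == "__main__":
    # Hypothetical calibration: laser sheet x + z = 1 (metres, camera frame).
    ray = pixel_ray(520.0, 240.0, fx=800.0, fy=800.0, cx=320.0, cy=240.0)
    print(intersect_laser_plane(ray, normal=(1.0, 0.0, 1.0), offset=1.0))
```

Each illuminated pixel on the imaged stripe yields one 3D point in this way; scanning the sheet or moving the sensor builds up the surface.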

Beams and light sheets can be generated by collimated lasers, by cylindrical or aspheric beam expanders, by incoherent light sources (e.g., white light or LEDs) passed through apertures or slits (gratings), or, for coherent sources, by diffractive elements.

Active triangulation can be categorized into single-point scanning, single-line scanning, and multi-line scanning. Commercial products for robot arm end-effectors are mostly single-point and single-line scanners.

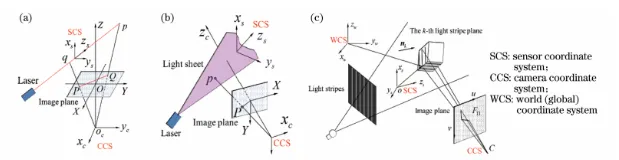

In multi-line scanning, reliably identifying stripe indices is a key challenge. To accurately identify stripe numbering, two orthogonal sets of planar light sheets can be alternately imaged at high speed. This enables a "Flying Triangulation" scan: multi-line flashing produces a sparse 3D view per frame, multiple scans with orthogonal stripe patterns produce a sequence of sparse 3D views, and these views are registered to generate a high-resolution, dense 3D surface model.

2.3 Dispersive Confocal

Dispersive confocal scanning can measure rough or smooth surfaces, whether opaque or transparent, including mirror surfaces and transparent glass. It is widely used in 3D inspection of mobile phone cover glass and similar applications.

Dispersive confocal scanning types include single-point 1D absolute ranging, multi-point array scanning, and continuous line scanning. Continuous line scanning is a dense array scan variant with a greater number of closely spaced sampling points. Examples of absolute ranging and continuous line scanning are shown in the figures below.

Commercial examples of dispersive (spectral) confocal sensors include the French STIL MPLS180, which uses 180 array points to form a line with a maximum line length of 4.039 mm (measurement point 11.5 µm, spacing 22.5 µm), and the Finnish FOCALSPEC product based on dispersive confocal triangulation.

3. Structured Light Projection 3D Imaging

Structured light projection is a primary method for robotics 3D vision. A structured light system typically includes one or more projectors and one or more cameras. Typical configurations include single-projector/single-camera, single-projector/dual-camera, single-projector/multi-camera, single-camera/dual-projector, and single-camera/multi-projector arrangements.

The basic principle of structured light projection is: the projector casts a known structured illumination pattern onto the target; the camera captures the pattern modulated by the target; image processing and geometric models compute the target's 3D information.

Common projector types include LCD projectors, digital light processing (DLP) projectors based on digital micromirror devices (DMD), and direct laser or LED pattern projectors.

Based on the number of projections, structured light 3D imaging is classified as single-shot (single projection) or multi-shot (multiple projections).

3.1 Single-shot Structured Light

Single-shot structured light typically uses spatial multiplexing or frequency multiplexing encodings. Common encodings include color coding, gray-level indexing, geometric shape encoding, and random dot patterns.

For robotic hand-eye systems where high 3D measurement accuracy is not required, such as palletizing, depalletizing, and 3D grasping, projecting pseudo-random dot patterns to obtain 3D information is popular. The principle is illustrated below.

3.2 Multi-shot Structured Light

Multi-shot structured light uses time multiplexing encodings. Common pattern encodings include binary coding, multi-frequency phase-shift encoding, and hybrid methods such as Gray code combined with phase-shifted fringes.
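The binary (Gray code) variant of time multiplexing can be sketched as follows. This is an illustrative example only: it assumes the captured images have already been thresholded to 0/1 per pixel, and the function names are hypothetical. Each projector column is assigned a Gray code word, one bit per projected pattern, so adjacent columns differ in exactly one bit.

```python
def gray_encode(index: int) -> int:
    """Binary-reflected Gray code of a non-negative integer."""
    return index ^ (index >> 1)


def gray_decode(code: int) -> int:
    """Inverse of gray_encode."""
    index = 0
    while code:
        index ^= code
        code >>= 1
    return index


def stripe_patterns(width: int, n_bits: int):
    """patterns[b][x] is the 0/1 value of pattern b (MSB first) at column x."""
    return [
        [(gray_encode(x) >> (n_bits - 1 - b)) & 1 for x in range(width)]
        for b in range(n_bits)
    ]


def decode_column(bits) -> int:
    """Recover the projector column from the bit sequence seen at one pixel."""
    code = 0
    for bit in bits:
        code = (code << 1) | bit
    return gray_decode(code)


if __name__ == "__main__":
    patterns = stripe_patterns(width=8, n_bits=3)
    observed = [p[5] for p in patterns]  # bits a camera pixel would see at column 5
    print(observed, "->", decode_column(observed))
```

With n patterns, up to 2^n projector columns can be distinguished; the one-bit difference between neighbours limits the effect of a thresholding error at a stripe boundary to a single column.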

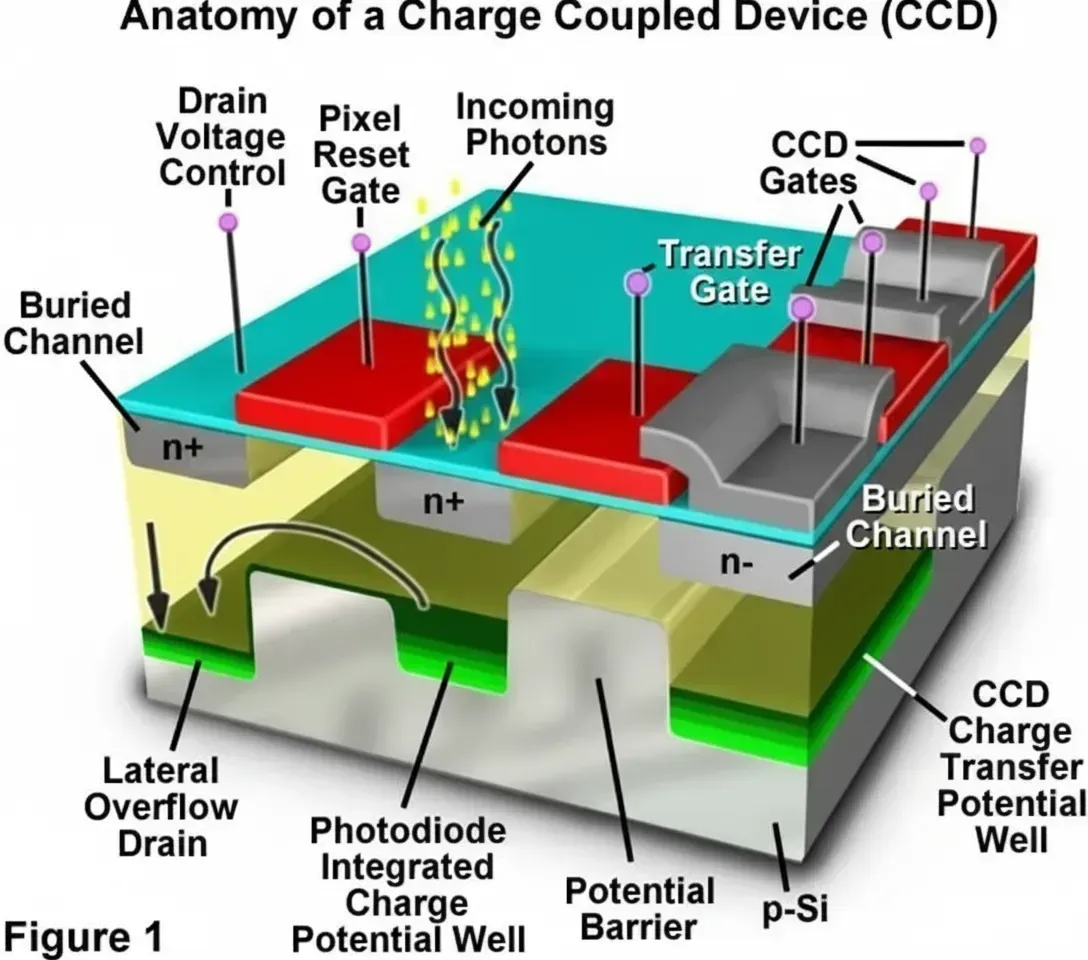

The basic stripe projection 3D imaging workflow is: generate structured light patterns via computer or optical devices; project them onto the surface via an optical projection system; capture the deformed patterns with imaging sensors (CCD or CMOS); use image processing algorithms to determine pixel-to-surface correspondences; and finally compute the 3D surface contour using the calibrated system geometry.

In practice, the most commonly used schemes are Gray code projection, sinusoidal phase-shift fringe projection, and a hybrid of Gray code with sinusoidal phase shifting.
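The phase-shift part of such a scheme can be sketched with the standard N-step formula. The example assumes an idealized intensity model I_k = A + B·cos(φ + 2πk/N) at each pixel, with N ≥ 3 equally spaced shifts; the sign convention and the helper names are assumptions of this sketch. In a hybrid scheme, the fringe order from Gray code decoding removes the 2π ambiguity of the wrapped phase.

```python
import math


def wrapped_phase(intensities):
    """Wrapped phase in (-pi, pi] from N >= 3 equally phase-shifted samples.

    Assumes intensities[k] = A + B * cos(phi + 2*pi*k/N).
    """
    n = len(intensities)
    if n < 3:
        raise ValueError("at least three phase steps are required")
    s = sum(i * math.sin(2 * math.pi * k / n) for k, i in enumerate(intensities))
    c = sum(i * math.cos(2 * math.pi * k / n) for k, i in enumerate(intensities))
    return math.atan2(-s, c)


def absolute_phase(wrapped, fringe_order):
    """Combine a wrapped phase with an integer fringe order (e.g. from Gray code)."""
    return wrapped + 2 * math.pi * fringe_order


if __name__ == "__main__":
    true_phase = 1.0
    samples = [0.5 + 0.4 * math.cos(true_phase + 2 * math.pi * k / 4) for k in range(4)]
    print(wrapped_phase(samples))        # close to 1.0
    print(absolute_phase(wrapped_phase(samples), 3))
```

The offset A and modulation B cancel in the ratio, which is why phase shifting tolerates moderate variations in surface reflectivity and ambient light.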

4. Deflection-Based Imaging for Specular Surfaces

Structured light can be projected directly onto rough surfaces, but for highly reflective smooth or specular surfaces, direct projection onto the measured surface is not effective. In such cases, mirror deflection techniques (deflectometry) are used.

In this approach, the stripes are not projected onto the measured surface itself. Instead, they are projected onto a scattering screen, or a liquid crystal display takes the place of the screen and displays the stripes directly. The camera captures the stripes after reflection from the specular surface, where curvature variations modulate the reflected stripe pattern. From this modulated information, the 3D surface geometry can be recovered.

5. Stereo Vision 3D Imaging

Stereo vision literally refers to perceiving 3D structure with one or two "eyes". In practice, stereo vision reconstructs 3D structure or depth from two or more images captured from different viewpoints.

Depth cues are classified as monocular cues and binocular cues. Stereo 3D can be implemented via monocular, binocular, multi-view setups, or light-field imaging (electronic compound eye or camera arrays).

5.1 Monocular Vision

Monocular depth cues include perspective, defocus differences, multi-view photogrammetry, occlusion, shading, and motion parallax. In robotic vision, other shape-from-X methods can also be applied.

5.2 Binocular Vision

Binocular depth cues include convergence and binocular disparity. In machine vision, two cameras capture images of the same scene from different viewpoints and compute disparities between corresponding points to obtain scene depth. Typical stereo processing includes image distortion correction, stereo rectification, image matching, and depth reconstruction by triangulation.
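The final triangulation step for a rectified stereo pair reduces to the relation Z = f·B/d. The sketch below assumes an ideal rectified pair with identical pinhole cameras, focal length `focal_px` in pixels, baseline `baseline_m` in metres, and a disparity already found by matching; the parameter names are illustrative.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth (m) of a point from its disparity in a rectified stereo pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_px * baseline_m / disparity_px


def back_project(u, v, depth_m, focal_px, cx, cy):
    """3D point in the left-camera frame for pixel (u, v) at a known depth."""
    x = (u - cx) * depth_m / focal_px
    y = (v - cy) * depth_m / focal_px
    return (x, y, depth_m)


if __name__ == "__main__":
    z = depth_from_disparity(focal_px=800.0, baseline_m=0.1, disparity_px=40.0)
    print(z)                                               # about 2 m
    print(back_project(420.0, 240.0, z, 800.0, 320.0, 240.0))
```

Since depth is inversely proportional to disparity, a fixed matching error of a fraction of a pixel produces a depth error that grows roughly with the square of the distance.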

5.3 Multi-view Stereo

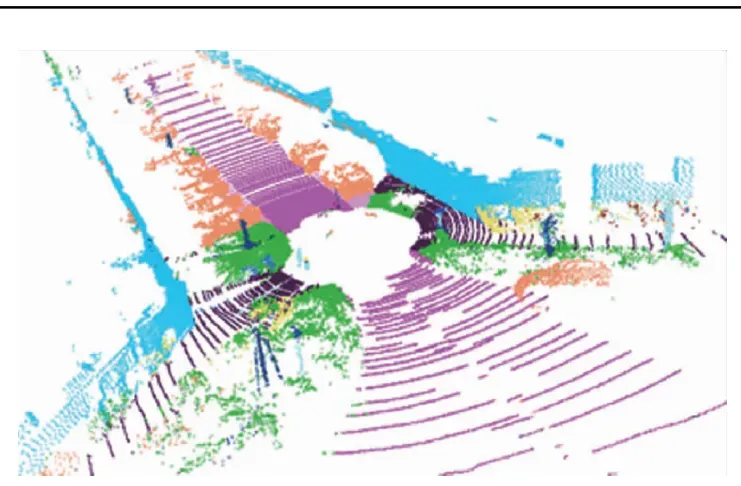

Multi-view stereo captures multiple images of the same scene from several viewpoints, using one or more cameras, and reconstructs the 3D scene from them. The principle is illustrated below.

Multi-view stereo is mainly applied in two scenarios:

- Using multiple cameras at different viewpoints to capture multiple images and then using feature-based stereo reconstruction algorithms to compute scene depth and spatial structure.

- Structure-from-motion (SfM), which uses a single camera with fixed intrinsic parameters to capture multiple images from different viewpoints and reconstructs the 3D scene. SfM tracks many control (feature) points across frames to recover both the 3D structure and the camera pose for each frame.
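Once camera poses are known, whether from calibration in a multi-camera rig or from pose estimation in SfM, a tracked point can be triangulated from its viewing rays. The sketch below is one simple least-squares formulation, not a full reconstruction pipeline: it assumes each view supplies a camera centre and a ray direction in a common world frame, and it returns the point that minimizes the summed squared distance to all rays.

```python
import math


def _det3(m):
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def _solve3(a, b):
    """Solve a 3x3 linear system by Cramer's rule."""
    det = _det3(a)
    if abs(det) < 1e-12:
        raise ValueError("rays are parallel or nearly parallel")
    solution = []
    for col in range(3):
        m = [row[:] for row in a]
        for r in range(3):
            m[r][col] = b[r]
        solution.append(_det3(m) / det)
    return solution


def triangulate_rays(origins, directions):
    """Point closest (in least squares) to rays given by origins and directions."""
    a = [[0.0] * 3 for _ in range(3)]
    b = [0.0] * 3
    for o, d in zip(origins, directions):
        norm = math.sqrt(sum(c * c for c in d))
        d = [c / norm for c in d]
        for i in range(3):
            for j in range(3):
                # (I - d d^T) projects onto the plane perpendicular to the ray.
                m = (1.0 if i == j else 0.0) - d[i] * d[j]
                a[i][j] += m
                b[i] += m * o[j]
    return _solve3(a, b)


if __name__ == "__main__":
    target = (1.0, 2.0, 5.0)
    centres = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
    rays = [tuple(t - c for t, c in zip(target, centre)) for centre in centres]
    print(triangulate_rays(centres, rays))  # close to (1, 2, 5)
```

With noisy feature tracks the rays no longer meet exactly, and the least-squares point serves as an initial estimate that a later refinement step can improve.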

6. Light-Field Imaging

Light-field 3D imaging differs structurally from conventional CCD or CMOS camera imaging. A conventional camera forms a 2D image on an imaging plane after light passes through the lens.

Light-field cameras add a microlens array in front of the sensor so that light passing through the main lens is further sampled by individual microlenses and recorded by the sensor array. This captures both directional and positional information of rays, enabling post-capture refocusing and other computational imaging operations.
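Post-capture refocusing is often explained as a shift-and-add over sub-aperture views. The sketch below is a deliberately simplified illustration: it uses a one-dimensional row of views, each a list of pixel intensities, and an integer per-view disparity chosen by the caller. Real light-field data are four-dimensional and need sub-pixel interpolation; those details are omitted here.

```python
def refocus(views, disparity):
    """Shift-and-add refocusing of 1D sub-aperture views.

    views[u][x] is the intensity at pixel x seen from viewpoint u.
    disparity is the integer pixel shift between adjacent viewpoints
    for the depth plane that should appear sharp.
    """
    n_views = len(views)
    width = len(views[0])
    centre = (n_views - 1) // 2
    result = []
    for x in range(width):
        total, count = 0.0, 0
        for u, row in enumerate(views):
            xs = x + disparity * (u - centre)
            if 0 <= xs < width:  # skip samples that fall outside the view
                total += row[xs]
                count += 1
        result.append(total / count if count else 0.0)
    return result


if __name__ == "__main__":
    # A single bright point that moves one pixel per viewpoint.
    views = [
        [0, 0, 1, 0, 0, 0, 0],
        [0, 0, 0, 1, 0, 0, 0],
        [0, 0, 0, 0, 1, 0, 0],
    ]
    print(refocus(views, 1))  # point is sharp at the centre pixel
    print(refocus(views, 0))  # point is spread over three pixels
```

Choosing the disparity that matches a point's depth aligns its samples across views, so the point appears sharp, while points at other depths are averaged over neighbouring pixels and appear blurred.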

7. Comparison of 3D Vision Methods

1) TOF and light-field cameras can be classified as single-camera 3D imaging solutions. They are compact and provide good real-time performance, making them suitable for Eye-in-Hand systems for 3D measurement, positioning, and real-time guidance.

However, TOF and light-field cameras currently face limitations for general Eye-in-Hand systems for these reasons:

- TOF cameras have low spatial resolution and limited 3D precision, making them unsuitable for high-accuracy measurement, positioning, and guidance.

- For light-field cameras, industrial-grade commercial options are limited. Available products can have moderate spatial resolution and precision but are currently expensive, making their use cost-prohibitive for many applications.

2) Structured light projection 3D systems offer moderate precision and cost and have a substantial application space. A structured light system composed of cameras and projectors can be regarded as a binocular or multi-view triangulation system if the projector is treated as an inverse camera.

3) Passive stereo vision 3D imaging has found applications in industry but is limited by operating conditions. Monocular 3D perception is difficult to implement; binocular and multi-view stereo require the target object to have clear texture or geometric features for reliable matching.

4) Structured light projection and stereo vision share certain drawbacks: they tend to be bulky and prone to occlusion. These methods rely on triangulation and therefore require a baseline distance and angular separation between cameras and projectors (or between stereo cameras), typically greater than 15 degrees, to perform measurement.

Reducing the angle between camera and projector can mitigate occlusion in some cases but significantly reduces measurement sensitivity and degrades system performance. One mitigation approach is to increase the number of projectors and cameras to cover occluded regions, forming projector-camera-projector or camera-projector-camera systems, or multi-camera/multi-projector systems to expand the visible area and reduce shadow regions. However, these multi-unit systems increase system size and reduce flexibility for Eye-in-Hand integration.
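The loss of sensitivity at small angles can be illustrated with a simplified parallel-ray model. Assume the camera looks along the surface normal and the illumination arrives inclined at the triangulation angle θ; a height change Δz then shifts the observed pattern laterally by Δz·tan θ, so the smallest resolvable height step is the lateral resolution divided by tan θ. The function name and the numbers below are illustrative only.

```python
import math


def depth_resolution(lateral_resolution_m: float, angle_deg: float) -> float:
    """Smallest resolvable height step under a parallel-ray triangulation model."""
    if not 0.0 < angle_deg < 90.0:
        raise ValueError("triangulation angle must be between 0 and 90 degrees")
    return lateral_resolution_m / math.tan(math.radians(angle_deg))


if __name__ == "__main__":
    for angle in (5, 15, 30, 45):
        step_um = depth_resolution(10e-6, angle) * 1e6
        print(f"{angle:2d} deg -> {step_um:.1f} um")
```

In this model, halving the angle from 30 to 15 degrees roughly doubles the smallest resolvable height step, which is the trade-off against occlusion described above.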

From an Eye-in-Hand integration perspective, an optimal solution would be a low-cost, moderate-precision, passive monocular 3D imaging system.