Introduction

Back-illuminated CMOS (BSI) sensors are commonly associated with smartphones and other compact imaging devices. Mainstream smartphone cameras today typically use either back-illuminated or stacked sensor designs.

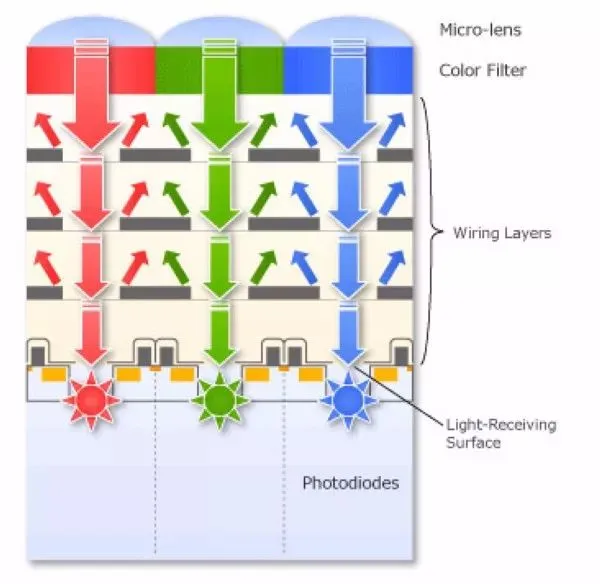

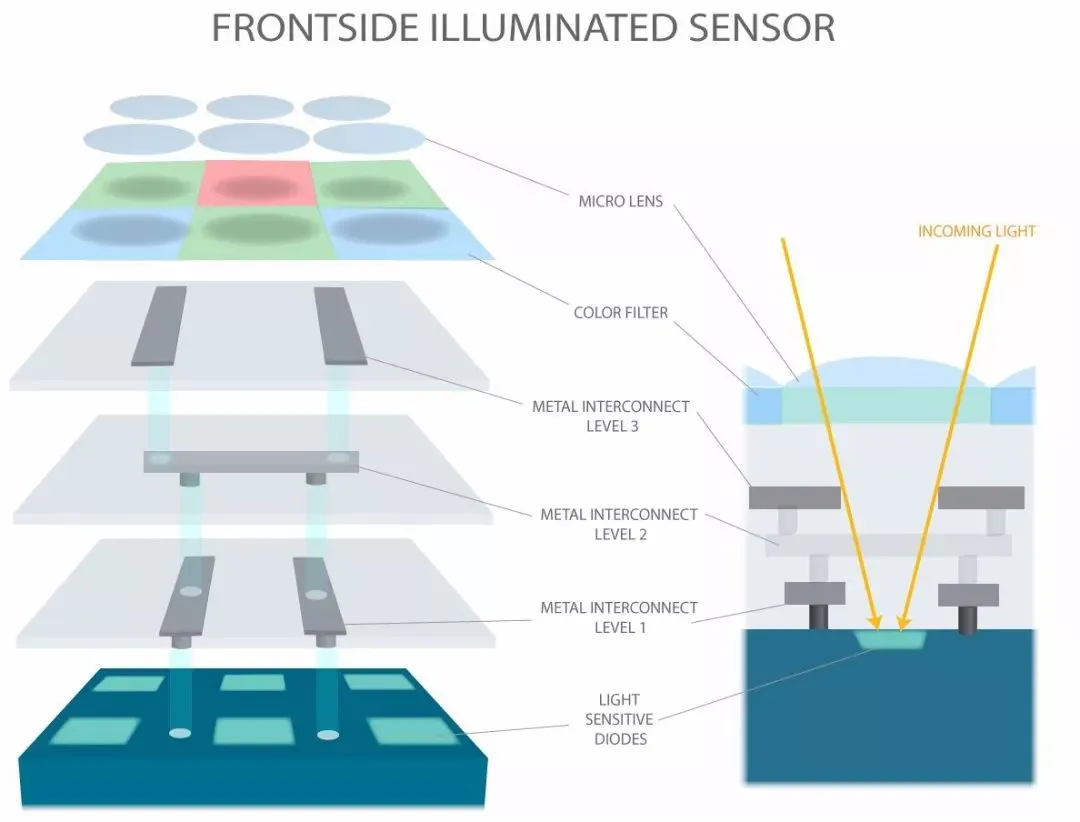

Front-illuminated CMOS (FSI)

To understand the meaning of “back” in back-illuminated CMOS, it helps to first review the traditional front-illuminated structure, known as front-side illumination (FSI). A typical CMOS image sensor is a multilayer structure. In a conventional FSI design, from top to bottom the layers are: microlenses, color filter array, wiring layer (metal interconnects), and the photodiodes.

Overall sensor area is roughly the sum of the photodiode active area and the circuit area. The photodiodes and their supporting circuitry must compete for limited pixel area. If the circuit occupies more area, the photodiode area is reduced, and the sensor collects less light. For compact imaging devices such as smartphones and pocket cameras, this limits image quality, most noticeably as higher noise at high ISO settings.
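The competition for pixel area can be illustrated with a small calculation. This is a minimal sketch, not a device model: the function name `fill_factor`, the pixel pitches, and the 1.0 µm² circuit area are all hypothetical values chosen for illustration.

```python
def fill_factor(pixel_pitch_um: float, circuit_area_um2: float) -> float:
    """Fraction of a square pixel's footprint left over for the photodiode."""
    pixel_area = pixel_pitch_um ** 2
    if not 0 <= circuit_area_um2 <= pixel_area:
        raise ValueError("circuit area must fit inside the pixel")
    return (pixel_area - circuit_area_um2) / pixel_area


# Hypothetical numbers: the same 1.0 um^2 of per-pixel circuitry
# placed in a large pixel and in a small smartphone-class pixel.
large_pixel = fill_factor(pixel_pitch_um=4.0, circuit_area_um2=1.0)
small_pixel = fill_factor(pixel_pitch_um=1.4, circuit_area_um2=1.0)

print(f"4.0 um pixel fill factor: {large_pixel:.2f}")
print(f"1.4 um pixel fill factor: {small_pixel:.2f}")
```

Under these assumed numbers, the same circuitry costs the small pixel about half of its light-collecting area but only a small fraction of the large pixel's, which is why the problem is most acute in compact devices.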

Modern CMOS sensors commonly integrate analog-to-digital converters (ADC) and readout amplifiers. Typical architectures allocate one ADC and amplifier chain per column. Increasing pixel count or readout speed therefore requires more supporting circuitry, which consumes area.
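A rough way to see how a column-parallel architecture ties pixel count and speed to circuit area is sketched below. It assumes a simplified model in which every column has its own ADC, a whole row is converted at once, and rows are read one after another. The 4000 × 3000 resolution and the 10 µs row time are hypothetical.

```python
def column_adc_count(columns: int) -> int:
    """One ADC and amplifier chain per column, as described above."""
    return columns


def frame_readout_time_s(rows: int, row_time_us: float) -> float:
    """Rows are read sequentially; each row is converted in parallel."""
    return rows * row_time_us * 1e-6


# Hypothetical 12 MP sensor with an assumed 10 us conversion time per row.
rows, columns = 3000, 4000
adcs = column_adc_count(columns)
frame_time = frame_readout_time_s(rows, row_time_us=10.0)
fps = 1.0 / frame_time

print(f"{adcs} column ADCs, {frame_time * 1e3:.1f} ms per frame, {fps:.1f} fps max")
```

In this model, adding columns adds ADCs directly, and adding rows lengthens the frame time unless each row is converted faster, which in turn calls for faster (and usually larger) circuits.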

In an FSI sensor, incoming light passes through the on-chip microlenses and color filter, then traverses the metal interconnect layer before reaching the photodiode. Metals are opaque and reflective, so part of the incident light is blocked or reflected at the metal layer. As a result, the photodiode may receive only about 70% of the incident light, or less, and reflections can cause crosstalk between adjacent pixels, introducing color distortion. Low-cost interconnect metals such as aluminum can have a reflectance of around 90% across the visible band (380–780 nm), worsening these effects.

Back-illuminated CMOS (BSI)

Back-illuminated CMOS, or back-side illumination (BSI), reverses the positions of the photodiode and the wiring layers. In a BSI structure, from top to bottom the layers are: microlenses, color filter array, photodiodes, and wiring layers.

This change delivers two main benefits:

- The photodiodes receive more light (higher fill factor), improving sensitivity and signal-to-noise ratio, and reducing noise at high ISO.

- Supporting circuitry no longer competes with the photodiode area, allowing larger or more sophisticated circuits to improve readout speed and enable features such as high-speed burst shooting and high-resolution video capture.

Because the photodiode layer faces the incoming light directly and the fill factor is higher, BSI sensors capture obliquely incident rays more effectively. On some cameras with traditional FSI sensors, manufacturers optimize the microlenses to improve performance toward the edges of the frame. With BSI sensors, the same degree of special optimization is often unnecessary, although additional microlens tuning can still help.

There are trade-offs. Placing the wiring layer beneath the photodiode layer and increasing circuit density can increase electrical interference between circuits, which may slightly degrade signal-to-noise ratio at very low ISO settings.

Compared with cameras that use conventional (FSI) sensors, cameras with BSI sensors can offer roughly 30%–50% higher light sensitivity in low-light conditions, enabling higher-quality images under weak illumination.
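To put the 30%–50% figure in photographic terms, the sketch below converts a light gain into exposure stops and into a signal-to-noise gain. It assumes a shot-noise-limited regime, where SNR grows with the square root of the collected signal. That assumption is a simplification introduced here, not a claim from the figures above, and read noise and other sources are ignored.

```python
import math


def gain_in_stops(light_gain: float) -> float:
    """Express a multiplicative light gain as exposure stops (factors of 2)."""
    return math.log2(light_gain)


def shot_noise_snr_gain(light_gain: float) -> float:
    """SNR improvement when photon shot noise dominates (SNR ~ sqrt(signal))."""
    return math.sqrt(light_gain)


for gain in (1.3, 1.5):
    print(
        f"{gain:.1f}x light: {gain_in_stops(gain):.2f} stops, "
        f"{shot_noise_snr_gain(gain):.2f}x SNR"
    )
```

Under this assumption, a 1.5x gain in collected light is worth a little over half a stop.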

Key Development Milestones

The back-illuminated concept was proposed in the 1990s, but early manufacturing challenges prevented mass production. In 2007 OmniVision demonstrated BSI samples. In February 2009, Sony achieved BSI mass production and registered the Exmor R trademark. Early products using Exmor R sensors included camcorders and compact cameras from 2009 and some camera phones in 2010. In October 2011, a major smartphone model adopted Sony-produced BSI sensors for its main camera. Subsequent milestones include cameras that introduced 1-inch 20 MP BSI sensors and later full-frame 42 MP BSI sensors in mirrorless bodies.

BSI Sensor Characteristics

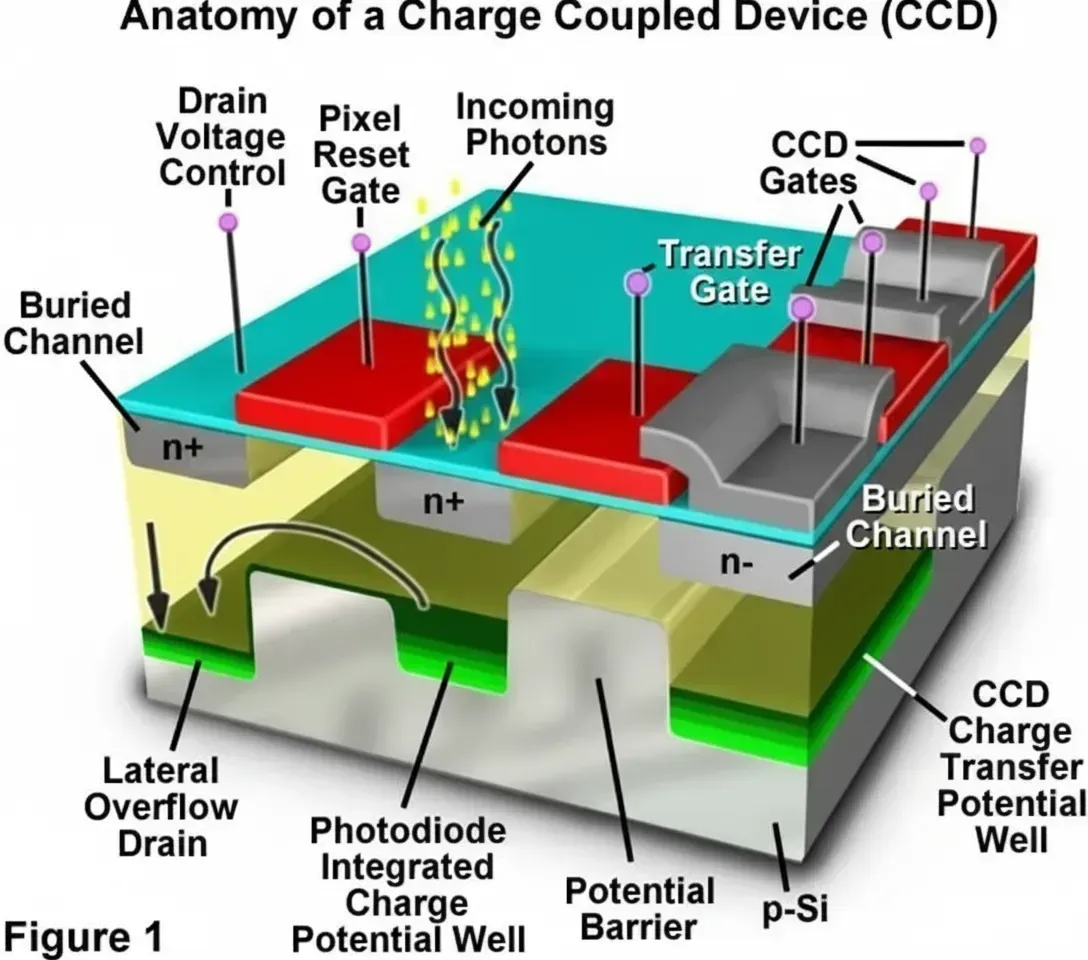

Modern BSI sensors benefit from advances in device fabrication in at least two ways. First, microlens performance has improved, so that rays reaching the photosensitive surface are closer to normal incidence and the microlenses introduce less dispersion and flare. Second, BSI CMOS retains the advantage of CMOS readout, in which charge is converted to voltage at each pixel. This allows higher frame rates for large-pixel sensors than CCDs can offer, because a CCD must shift each pixel's charge to a shared output stage for centralized conversion and therefore struggles to increase speed without increasing noise.

These advantages are not unique to BSI alone but reflect general improvements in modern CMOS sensor designs, which is why many cameras now prefer CMOS-based sensors: large pixels and high-speed operation directly affect end-user performance.

Does BSI Guarantee Better Image Quality?

Although BSI provides clear benefits in sensitivity and low-light performance, image quality depends on more than just the sensor. Optics and image processing algorithms are also critical. Light must pass through the lens before reaching the sensor, so lens quality directly affects final image quality. Additionally, raw sensor data typically require on-board processing to produce final images (except when outputting RAW), so the camera's image processor and its algorithms play a major role in rendering the final result. Different manufacturers apply different processing pipelines, producing varied image quality and styles.

Comparisons between cameras with BSI sensors and those with other sensors generally show little difference at low ISO, but notable improvement for BSI at high ISO. Another important improvement with BSI sensors is enhanced autofocus performance in low light, which is a practical benefit.

Applying BSI to large DSLR-sized sensors presents manufacturing yield challenges. Larger sensors have lower yields when adopting BSI processes, and until defect rates improve, adoption in large-format sensors remains limited due to cost.

Stacked CMOS

Stacked CMOS first appeared in sensors for mobile devices. Stacking was not introduced solely to reduce camera module thickness, although that is a beneficial side effect.

Sensor fabrication is similar to CPU fabrication: wafers are patterned to form the pixel section and the circuit section. The pixel section is where photodiodes are formed; the circuit section handles readout and processing. To improve light collection, light-guiding structures are introduced. Etching and other processing steps can damage the wafer and pixel structures, so a thermal annealing step is needed to repair the silicon. However, heating the entire wafer can damage circuit elements that have already been formed, altering capacitor and resistor values and affecting readout performance.

Another issue is process node limitations. For example, a mobile sensor wafer might be patterned in a 65 nm process, which is adequate for pixel formation. But circuit performance benefits from more advanced nodes, such as 45 nm or better, which allow roughly twice as many transistors in the same area and better control of pixel behavior. Producing pixel and circuit regions on the same wafer forces both to use the same process, which is suboptimal.
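The doubling figure follows from simple geometry. The sketch below assumes ideal scaling, in which transistor area shrinks with the square of the node size. Real processes do not scale this cleanly, so treat the result as an order-of-magnitude check. The function name `density_ratio` is introduced here for illustration.

```python
def density_ratio(old_node_nm: float, new_node_nm: float) -> float:
    """Ideal transistor-density gain when linear dimensions shrink
    from old_node_nm to new_node_nm (area scales with the square)."""
    return (old_node_nm / new_node_nm) ** 2


ratio = density_ratio(65, 45)
print(f"65 nm -> 45 nm: about {ratio:.2f}x transistors per unit area")
```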

The solution is to fabricate the pixel and circuit sections separately and then bond them together. Differences in thermal properties between the silicon-on-insulator (SOI) layer and the substrate make it possible to separate the two parts and process them independently: the pixel layer can be fabricated on a process optimized for pixels, while the circuit layer is fabricated on a more advanced node. The layers are then stacked and bonded, giving rise to the stacked CMOS architecture.

This approach addresses the two earlier issues: circuit damage during pixel anneal, and process-node limitations when both sections are built on the same wafer.

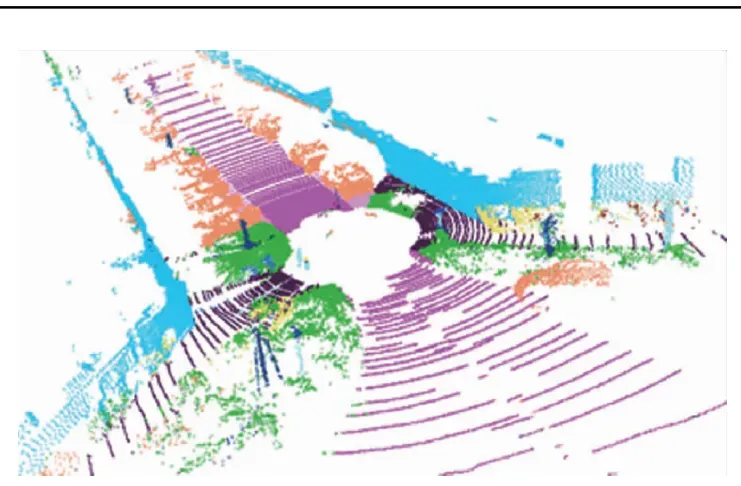

Stacked sensors inherit the advantages of BSI (the pixel layer is still back-illuminated) while removing some manufacturing constraints. Improved circuit capability enables additional features such as hardware HDR and higher-speed capture modes. Separating pixel and circuit fabrication reduces module thickness while allowing larger pixel areas or higher pixel counts and independent circuit optimization.

Advantages of Stacked CMOS

Stacked sensors evolved from BSI designs. Compared with BSI, stacked sensors can be smaller in volume while achieving improved image quality through more comprehensive optimizations. Two additional techniques commonly used with stacked sensors further enhance performance:

- RGBW encoding: Adding white (W) pixels to the traditional RGB pixel array increases light sensitivity and effective dynamic range, improving low-light image quality.

- Hardware HDR (in-camera HDR): Stacked designs can precisely control exposure per pixel row at the sensor level, enabling native high dynamic range capture without multi-frame software blending. This allows faster HDR image generation and HDR video capture.
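The idea behind per-row exposure control can be sketched in a few lines. This is an illustrative toy, not how any particular sensor implements it. It assumes that even rows use a long exposure and odd rows a short one, that pixel values are 10-bit, and that a saturated long-exposure pixel is simply replaced by the scaled short-exposure pixel below it. The function name and all numbers are hypothetical.

```python
def merge_row_interleaved(frame, exposure_ratio, full_scale=1023):
    """Merge a frame whose even rows are long exposures and odd rows
    are short exposures into one HDR row per long/short pair.

    Output values are in long-exposure units. Vertical resolution is
    halved in this simplified scheme.
    """
    merged = []
    for long_row, short_row in zip(frame[0::2], frame[1::2]):
        out_row = []
        for long_px, short_px in zip(long_row, short_row):
            if long_px < full_scale:
                # Long exposure is not clipped: keep it (lower noise).
                out_row.append(float(long_px))
            else:
                # Long exposure saturated: fall back to the short
                # exposure, scaled up to the same units.
                out_row.append(float(short_px * exposure_ratio))
        merged.append(out_row)
    return merged


# Hypothetical 2x2 frame: the short exposure is 1/8 of the long one.
frame = [
    [100, 1023],  # long-exposure row; second pixel is saturated
    [12, 400],    # short-exposure row
]
hdr = merge_row_interleaved(frame, exposure_ratio=8)
print(hdr)
```

Because both exposures come from a single readout, no multi-frame alignment is needed, which is what makes this style of HDR suitable for video. Production sensors use more elaborate exposure patterns and interpolation to recover resolution.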

Overall, stacked sensors refine and extend BSI advantages, addressing BSI limitations and enabling additional functionality. As a result, many smartphone manufacturers have adopted stacked sensors for their main camera modules, and stacked designs are increasingly common in mobile imaging.