Overview

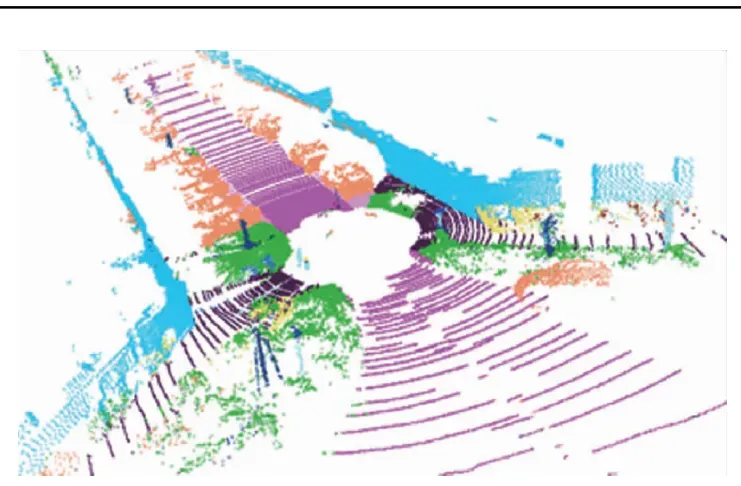

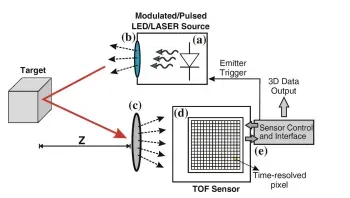

Pedestrian re-identification (ReID) aims to recognize the identities of uncooperative pedestrians across large-scale scenes, where each identity typically has only a few samples and multiple capture devices are required. This study investigates using low-cost LiDAR to address these challenges. To support research on re-identification from LiDAR point clouds, we constructed LReID, the first LiDAR-based pedestrian re-identification dataset.

Research Scope

This work studies LiDAR-based pedestrian re-identification. It uses a low-cost LiDAR setup to tackle re-identification challenges, builds a LiDAR dataset named LReID, and proposes a LiDAR-based ReID framework called ReID3D.

Key Contributions

- First proposal of a LiDAR-based pedestrian re-identification approach.

- Construction of the first LiDAR-based ReID dataset, LReID.

- Proposal of a LiDAR-based ReID framework, ReID3D.

Method

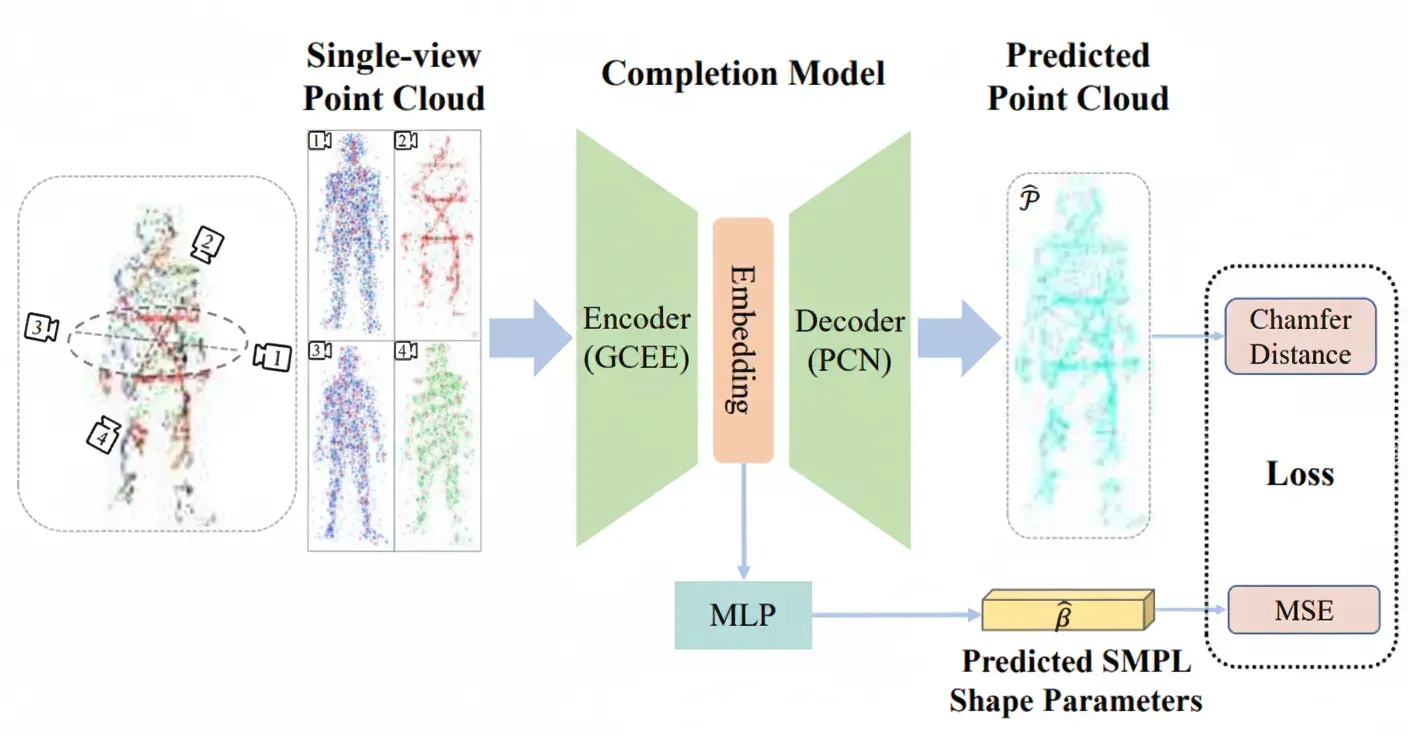

We propose a LiDAR-based pedestrian re-identification method named ReID3D. The method uses pretraining to guide a graph-based complementary enhancement encoder (GCEE) to extract comprehensive 3D intrinsic features.

ReID3D uses multi-task pretraining on the LReID-sync dataset to guide the encoder to learn 3D human body features, as shown in Figure 1. The ReID network consists of the GCEE, a complementary feature extractor (CFE), and a temporal module, and it is initialized with the pretrained GCEE.

Our observations suggest two key factors that may affect ReID performance: (1) appearance variations between views in cross-view settings, and (2) incomplete information in single-view observations. Collecting and annotating real data is costly, whereas simulated data is inexpensive and provides rich, accurate annotations. We therefore pretrain the encoder on simulated data with two tasks: point cloud completion, which targets the information missing from single views, and SMPL parameter learning, which supervises the encoder with 3D body shape. The overall pretraining strategy is illustrated in Figure 1.

Mathematical Formulation

Several mathematical formulas describe the algorithms and model operations used in the method.

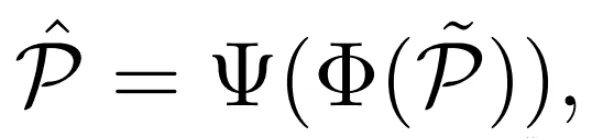

Point cloud completion formulation

Human shape parameter learning

Point cloud completion loss

The completion loss uses Chamfer Distance (CD) to measure differences between predicted and ground-truth point clouds.
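For illustration, here is a minimal NumPy sketch of a symmetric Chamfer Distance. It assumes the common variant that uses squared Euclidean distances averaged over each point set; the exact normalization used by ReID3D is not stated in the text and may differ. The function name `chamfer_distance` is hypothetical.

```python
import numpy as np


def chamfer_distance(pred: np.ndarray, gt: np.ndarray) -> float:
    """Symmetric Chamfer Distance between point sets of shape (N, 3) and (M, 3).

    Assumed variant: squared Euclidean distances, averaged over each set.
    """
    # Pairwise squared distances between every predicted and ground-truth point: (N, M).
    diff = pred[:, None, :] - gt[None, :, :]
    sq_dist = np.sum(diff ** 2, axis=-1)
    # Nearest ground-truth point for each predicted point (accuracy term) ...
    pred_to_gt = sq_dist.min(axis=1).mean()
    # ... and nearest predicted point for each ground-truth point (coverage term).
    gt_to_pred = sq_dist.min(axis=0).mean()
    return float(pred_to_gt + gt_to_pred)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    full_view = rng.normal(size=(256, 3))  # stand-in for a ground-truth full-view cloud
    completed = full_view + 0.01 * rng.normal(size=full_view.shape)
    print(chamfer_distance(completed, full_view))  # small positive value
```

The brute-force distance matrix needs O(NM) memory, which is fine for a few thousand points; larger clouds would call for a batched or GPU nearest-neighbour search.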

Shape parameter loss

The shape parameter loss uses mean squared error (MSE) between predicted and ground-truth shape parameters.
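A minimal sketch of this loss, assuming the predicted and ground-truth SMPL shape parameters are stored as `(batch, num_betas)` arrays and that the error is averaged over every element rather than summed over the betas. The function name `shape_param_loss` is hypothetical.

```python
import numpy as np


def shape_param_loss(pred_betas: np.ndarray, gt_betas: np.ndarray) -> float:
    """Mean squared error between predicted and ground-truth SMPL shape vectors.

    Both arrays have shape (batch, num_betas). Averaging over all elements
    is an assumption of this sketch.
    """
    if pred_betas.shape != gt_betas.shape:
        raise ValueError(f"shape mismatch: {pred_betas.shape} vs {gt_betas.shape}")
    return float(np.mean((pred_betas - gt_betas) ** 2))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    gt = rng.normal(size=(4, 10))  # 10 betas, the usual SMPL shape dimensionality
    pred = gt + 0.1
    print(shape_param_loss(pred, gt))  # approximately 0.01
```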

Together, these two losses supervise the multi-task pretraining of the encoder.
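One way to write the combined pretraining objective is sketched below. The squared, per-set-averaged Chamfer form, the shape vector $\beta$ with $K$ entries, and the weighting factor $\lambda$ are illustrative assumptions; the exact formulas used by ReID3D are those in the figure above and may differ.

```latex
\mathcal{L}_{\text{pre}} = \mathcal{L}_{\text{CD}}\big(\hat{P}, P_{\text{full}}\big) + \lambda \, \mathcal{L}_{\text{shape}}\big(\hat{\beta}, \beta\big)

\mathcal{L}_{\text{CD}}(\hat{P}, P) = \frac{1}{|\hat{P}|}\sum_{\hat{p}\in\hat{P}} \min_{p\in P}\lVert \hat{p}-p\rVert_2^2
  + \frac{1}{|P|}\sum_{p\in P} \min_{\hat{p}\in\hat{P}}\lVert p-\hat{p}\rVert_2^2

\mathcal{L}_{\text{shape}}(\hat{\beta}, \beta) = \frac{1}{K}\sum_{k=1}^{K} \big(\hat{\beta}_k-\beta_k\big)^2
```

Here $\hat{P}$ is the completed point cloud predicted from a single view, $P_{\text{full}}$ the ground-truth full-view cloud, and $\hat{\beta}$, $\beta$ the predicted and ground-truth shape parameters.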

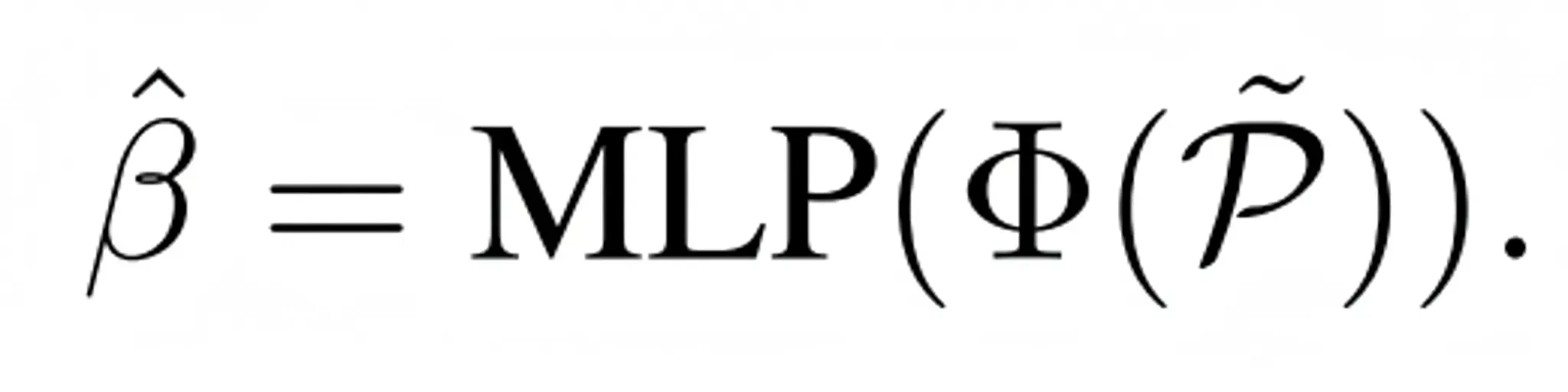

LReID Experiment

- Dataset split: The LReID dataset is split into a training set with 220 identities and a test set with 100 identities. In the test set, 30 identities were captured under low-light conditions and 70 under normal lighting.

- Query and gallery: For testing, one sample per identity is selected as the query and the remaining samples form the gallery.

- Evaluation metrics: Cumulative Matching Characteristics (CMC) and mean Average Precision (mAP), the same metrics used for camera-based ReID datasets.
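The query/gallery protocol above can be sketched as follows. This is a minimal illustration, assuming samples are given as `(sample_id, identity)` pairs and that the query for each identity is chosen at random with a fixed seed; the benchmark may fix the query choice differently. The function name `split_query_gallery` is hypothetical.

```python
import random
from collections import defaultdict


def split_query_gallery(samples, seed=0):
    """Select one query per identity; the identity's remaining samples form the gallery.

    samples: list of (sample_id, identity) pairs.
    Random selection with a fixed seed is an assumption of this sketch.
    """
    by_identity = defaultdict(list)
    for sample_id, identity in samples:
        by_identity[identity].append(sample_id)

    rng = random.Random(seed)
    query, gallery = [], []
    for identity in sorted(by_identity):  # deterministic iteration order
        ids = by_identity[identity]
        q_idx = rng.randrange(len(ids))
        query.append((ids[q_idx], identity))
        # Every other sample of this identity goes to the gallery.
        gallery.extend((s, identity) for i, s in enumerate(ids) if i != q_idx)
    return query, gallery


if __name__ == "__main__":
    samples = [("s0", 1), ("s1", 1), ("s2", 1), ("s3", 2), ("s4", 2)]
    query, gallery = split_query_gallery(samples)
    print(len(query), len(gallery))  # 2 3
```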

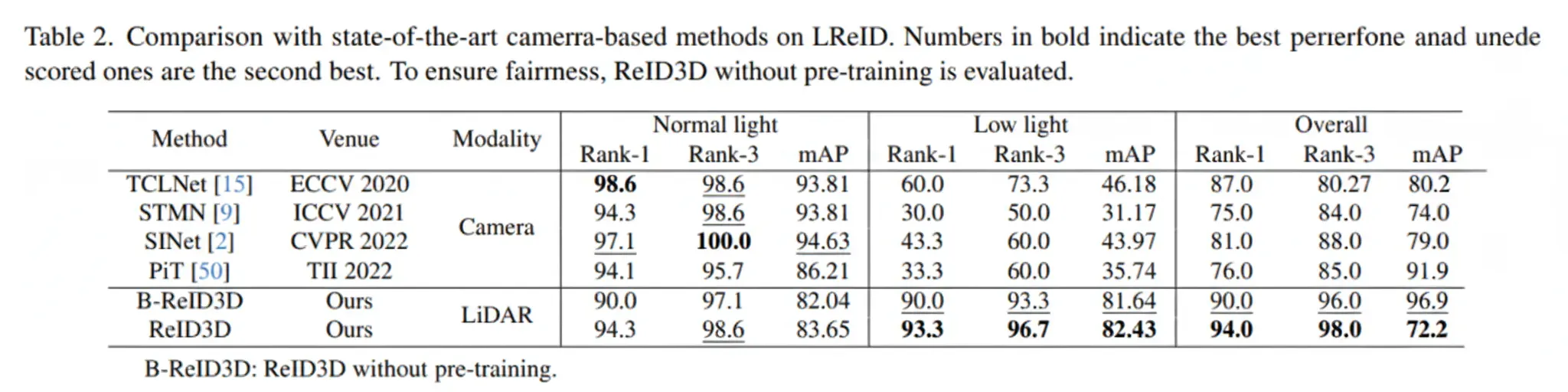

LReID-sync Experiment

Dataset generation: The LReID-sync dataset was generated in Unity3D by simulating scenes captured from multiple viewpoints by several synchronized LiDAR sensors. It provides paired single-view and full-view point clouds as completion annotations, together with SMPL parameters.
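One plausible way to build such single-view/full-view pairs in simulation, assumed here rather than taken from the source, is to transform each synchronized sensor's points into a shared world frame using the sensor's known extrinsics and concatenate them; any single sensor's cloud then serves as the partial input. The function name `merge_views` and the 4x4 homogeneous extrinsic convention are hypothetical.

```python
import numpy as np


def merge_views(view_clouds, sensor_to_world):
    """Fuse synchronized per-sensor point clouds into one full-view cloud.

    view_clouds: list of (N_i, 3) arrays, each expressed in its sensor's frame.
    sensor_to_world: list of (4, 4) homogeneous extrinsics (hypothetical convention:
    a column vector transforms as p_world = T @ p_sensor).
    """
    if len(view_clouds) != len(sensor_to_world):
        raise ValueError("need one extrinsic matrix per view")
    merged = []
    for points, T in zip(view_clouds, sensor_to_world):
        homo = np.hstack([points, np.ones((points.shape[0], 1))])  # (N_i, 4)
        merged.append((homo @ T.T)[:, :3])  # row-vector form of T @ p
    return np.vstack(merged)


if __name__ == "__main__":
    shift = np.eye(4)
    shift[:3, 3] = [0.0, 0.0, 5.0]  # second sensor offset 5 units along z (illustrative)
    views = [np.array([[0.0, 0.0, 0.0]]), np.array([[1.0, 0.0, 0.0]])]
    full_view = merge_views(views, [np.eye(4), shift])
    single_view = views[0]  # one sensor's cloud acts as the partial input
    print(full_view)
```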

Dataset characteristics: LReID-sync contains point cloud data for 600 subjects, each with a distinct body shape, gait, and set of actions, to ensure diversity.

Pretraining: The encoder is pretrained on LReID-sync to guide 3D body feature learning.

Evaluation metrics: Same as LReID, using CMC and mAP.
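A minimal NumPy sketch of how CMC and mAP are commonly computed from a query-gallery distance matrix. The function `evaluate_reid` and its arguments are hypothetical, it assumes every query has at least one true match in the gallery, and it omits the same-camera filtering that camera-based benchmarks often apply, since the text does not describe how LReID handles it.

```python
import numpy as np


def evaluate_reid(dist, query_ids, gallery_ids, max_rank=5):
    """Compute the CMC curve (ranks 1..max_rank) and mAP from a distance matrix.

    dist: (num_query, num_gallery) array; smaller means more similar.
    Assumes every query has at least one true match in the gallery.
    """
    query_ids = np.asarray(query_ids)
    gallery_ids = np.asarray(gallery_ids)
    order = np.argsort(dist, axis=1)                    # gallery indices, best match first
    matches = gallery_ids[order] == query_ids[:, None]  # (num_query, num_gallery) bool

    cmc = np.zeros(max_rank)
    aps = []
    for row in matches:
        hit_positions = np.flatnonzero(row)  # 0-based ranks of the correct matches
        first_hit = hit_positions[0]
        if first_hit < max_rank:
            cmc[first_hit:] += 1             # the query is a hit at this rank and beyond
        # Average precision: mean of the precision values at each correct match.
        precisions = (np.arange(len(hit_positions)) + 1) / (hit_positions + 1)
        aps.append(precisions.mean())
    return cmc / len(matches), float(np.mean(aps))


if __name__ == "__main__":
    dist = np.array([[0.1, 0.2, 0.3, 0.4],
                     [0.1, 0.5, 0.2, 0.3]])
    cmc, mAP = evaluate_reid(dist, query_ids=[1, 2], gallery_ids=[1, 2, 1, 2])
    print(cmc, mAP)  # rank-1 CMC 0.5, rank-3 CMC 1.0, mAP about 0.625
```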

Potential Improvements

- Algorithmic enhancements: Further improve LiDAR-based ReID algorithms to increase accuracy and robustness.

- Dataset expansion: Expand the LiDAR-based ReID dataset to include more scenes, seasons, and lighting conditions.

- Multimodal fusion: Fuse LiDAR data with other sensor data such as RGB and infrared images to improve recognition accuracy and robustness.

- Real-time performance: Optimize LiDAR-based ReID algorithms for real-time operation in practical deployment scenarios.

- Cross-dataset generalization: Validate and evaluate LiDAR-based ReID algorithms on other datasets to assess generalization across different scenes and data-collection setups.

Conclusion

This work is the first study on LiDAR-based pedestrian re-identification and demonstrates the feasibility of using LiDAR for person re-identification in challenging outdoor real-world scenes.