There has long been debate between the LiDAR camp and the pure computer vision camp over perception-layer solutions for autonomous driving. LiDAR proponents argue that pure-vision algorithms fall short in both the form and the accuracy of the data they produce, while the pure-vision camp considers LiDAR unnecessary and too expensive. This article summarizes the main sensors used in autonomous driving (LiDAR, cameras, and millimeter-wave radar), describes the approaches adopted by different autonomous driving companies, and outlines the sensor market landscape.

Context and recent developments

At a recent company event, Tesla CEO Elon Musk introduced the "full self-driving computer", the previously announced Autopilot hardware 3.0. Compared with the previous Autopilot generation, which ran on Nvidia chips, the new hardware reportedly delivers a 21-fold increase in frames processed per second while reducing per-vehicle hardware cost by about 20% relative to Autopilot 2.5.

Unlike many autonomous driving companies that prioritize LiDAR-led solutions, Tesla has consistently favored a vision-dominant approach. The debate between LiDAR-led and pure vision approaches centers on whether higher-level passenger vehicle autonomy (Level 3 and above) requires LiDAR due to limitations in pure vision data and accuracy.

Tesla's release of its FSD update suggests its pure-vision approach could advance further, potentially reaching Level 3 or even Level 4 autonomy under certain definitions.

Fundamental principle of autonomous driving

The basic principle of autonomous driving is that perception-layer sensors capture vehicle position and external environment information. The decision layer models the environment based on perception input, forms a global understanding, makes decisions, and issues control signals. The execution layer translates decision-layer signals into vehicle actions.
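The three layers can be pictured as a chain of function calls. The sketch below is a toy illustration, not any vendor's architecture: the `Obstacle` and `Command` types, the function names, the throttle value, and the 3-second time-to-collision threshold are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Obstacle:
    distance_m: float          # range to the obstacle
    closing_speed_mps: float   # positive when the gap is shrinking


@dataclass
class Command:
    throttle: float  # 0.0 to 1.0
    brake: float     # 0.0 to 1.0


def perceive(raw_ranges_m, raw_closing_speeds_mps):
    """Perception layer: turn raw sensor readings into obstacle objects."""
    return [Obstacle(d, v) for d, v in zip(raw_ranges_m, raw_closing_speeds_mps)]


def decide(obstacles, min_time_to_collision_s=3.0):
    """Decision layer: brake if any obstacle would be reached too soon."""
    for ob in obstacles:
        if ob.closing_speed_mps > 0:
            time_to_collision_s = ob.distance_m / ob.closing_speed_mps
            if time_to_collision_s < min_time_to_collision_s:
                return "brake"
    return "cruise"


def execute(decision):
    """Execution layer: map the decision to actuator commands."""
    if decision == "brake":
        return Command(throttle=0.0, brake=1.0)
    return Command(throttle=0.3, brake=0.0)


def drive_step(raw_ranges_m, raw_closing_speeds_mps):
    """One pass through perception, decision, and execution."""
    return execute(decide(perceive(raw_ranges_m, raw_closing_speeds_mps)))


print(drive_step([50.0], [5.0]))  # 10 s to collision: keeps cruising
print(drive_step([10.0], [5.0]))  # 2 s to collision: brakes
```

A real decision layer builds a far richer environment model; the point here is only the direction of data flow between the layers.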

Both LiDAR-led and vision-led approaches are methods for vehicles to perceive their surroundings; the difference lies in which sensor modality is dominant. Vision-dominant systems are centered on cameras combined with millimeter-wave radar, ultrasonic sensors, and low-cost LiDAR. LiDAR-dominant systems center on LiDAR combined with millimeter-wave radar, ultrasonic sensors, and cameras. Some argue for a hybrid approach in which neither modality is strictly dominant.

Notably, Tesla's vision approach is more extreme than the typical vision-dominant setup: the company has abandoned LiDAR entirely. Musk has been outspoken against LiDAR, stating that relying on it is a mistake.

Technical principles of LiDAR and cameras

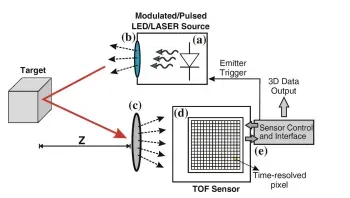

LiDAR works by emitting laser pulses and measuring the time of flight of reflected light to detect and range objects. Units are often mounted on the vehicle roof and can provide 360-degree coverage. Each LiDAR module contains an emitter and a receiver. A laser diode emits pulsed light; a photodetector near the diode detects the return signal. Calculating the time difference between emission and detection yields distance to the target.
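The ranging step reduces to one formula: the pulse travels to the target and back, so the distance is half the round-trip time multiplied by the speed of light. A minimal sketch follows; the function name is illustrative, and the vacuum speed of light is used as an approximation for air.

```python
SPEED_OF_LIGHT_MPS = 299_792_458.0  # metres per second, in vacuum


def tof_distance_m(round_trip_time_s: float) -> float:
    """Distance to a target from the emit-to-detect time of one pulse."""
    if round_trip_time_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # Divide by two because the light covers the distance twice.
    return SPEED_OF_LIGHT_MPS * round_trip_time_s / 2.0


# A return detected one microsecond after emission is roughly 150 m away.
print(f"{tof_distance_m(1e-6):.1f} m")
```

The same arithmetic shows why timing precision matters: a timing error of one nanosecond corresponds to about 15 cm of range error.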

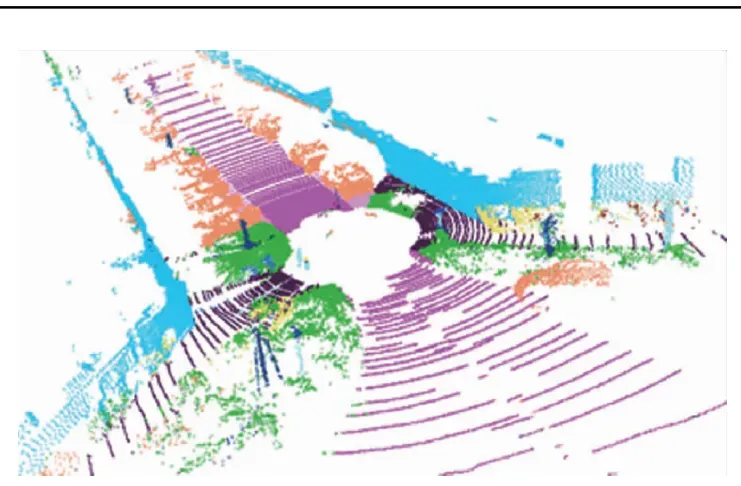

Time-of-flight pulse systems collect large point clouds once activated. Objects present in the point cloud appear as clusters or shadows; from these clusters it is possible to estimate distance and size and to generate a 3D model of the surrounding environment. Higher point-cloud density produces clearer imagery.
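To make the step from clusters to distance and size concrete, here is a small sketch that groups points by proximity and then measures each group. It is a simple flood-fill clustering, chosen for readability rather than taken from any production LiDAR stack; the 0.5 m gap threshold and the sample points are assumptions.

```python
import math
from collections import deque


def euclidean_clusters(points, max_gap_m=0.5):
    """Group points that are chained together by gaps of at most max_gap_m."""
    assigned = [False] * len(points)
    clusters = []
    for seed in range(len(points)):
        if assigned[seed]:
            continue
        assigned[seed] = True
        queue = deque([seed])
        members = []
        while queue:
            i = queue.popleft()
            members.append(points[i])
            # Brute-force neighbour search: O(n^2), fine for a small demo.
            for j in range(len(points)):
                if not assigned[j] and math.dist(points[i], points[j]) <= max_gap_m:
                    assigned[j] = True
                    queue.append(j)
        clusters.append(members)
    return clusters


def describe(cluster):
    """Return (distance from sensor to nearest point, bounding-box size per axis)."""
    origin = (0.0,) * len(cluster[0])
    nearest_m = min(math.dist(origin, p) for p in cluster)
    size_m = tuple(max(axis) - min(axis) for axis in zip(*cluster))
    return nearest_m, size_m


# Hypothetical (x, y, z) returns in metres, with the sensor at the origin.
scan = [
    (10.0, 0.0, 0.0), (10.2, 0.1, 0.0), (10.1, 0.0, 0.3),  # one nearby object
    (30.0, 5.0, 0.0), (30.3, 5.0, 0.2),                    # one farther object
]
for cluster in euclidean_clusters(scan):
    print(describe(cluster))
```

Real point clouds contain far more points per frame, so practical systems replace the brute-force neighbour search with a spatial index such as a k-d tree or voxel grid.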

In general, the two most important characteristics of LiDAR are ranging and accuracy. Unlike passive camera systems, LiDAR is an active sensing modality and can detect obstacles accurately even at night. Because laser beams are highly collimated, LiDAR typically offers higher detection precision than millimeter-wave radar.

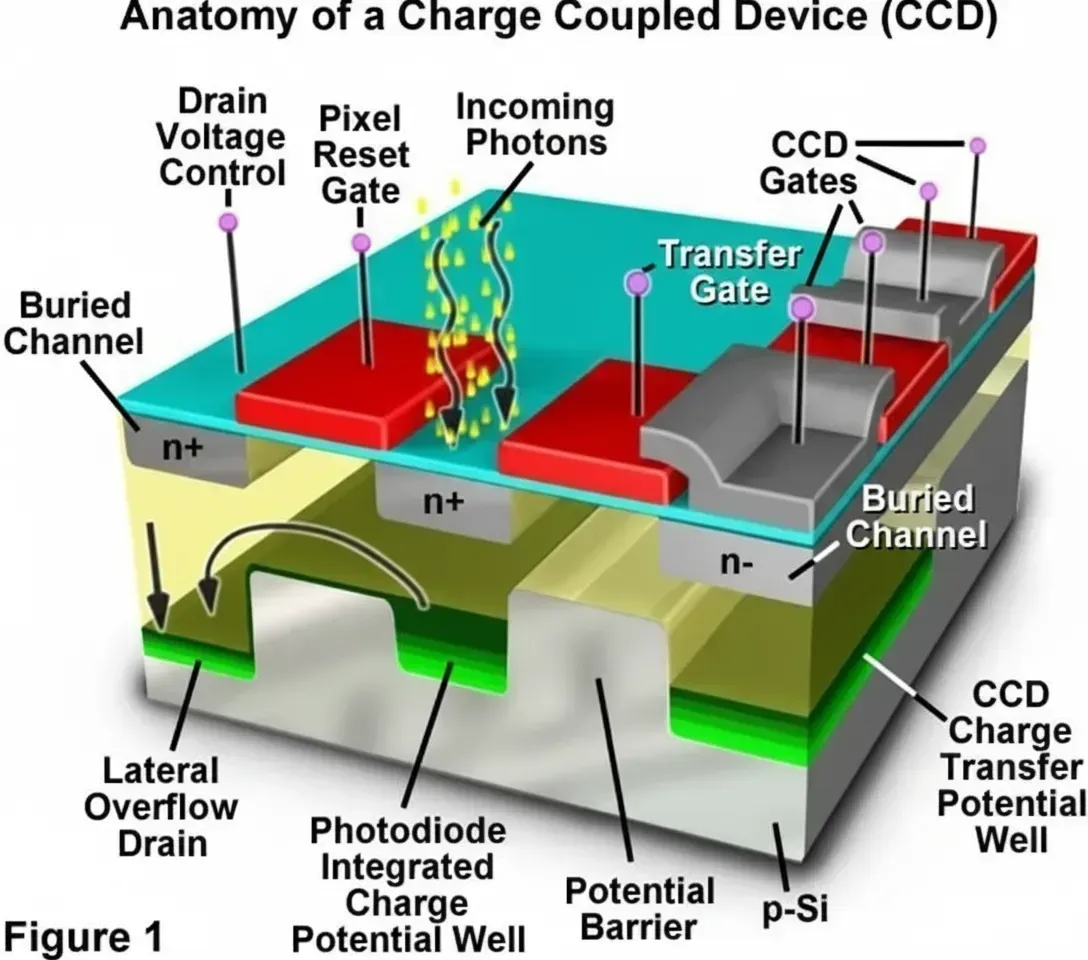

Cameras operate like the human eye: light reflected from objects passes through a lens and forms an image on a sensor. Cameras do not provide the direct ranging capability of LiDAR and are sensitive to lighting conditions. However, their major advantage is semantic richness: images are human-interpretable, which makes cameras well suited to object classification.
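The lack of direct ranging follows from the geometry of image formation. Under the idealized pinhole camera model, a 3D point is divided by its depth before it lands on the sensor, so depth is lost. The sketch below illustrates this; the focal length and image center are assumed values, not parameters of any particular automotive camera.

```python
def project(point_xyz, focal_px=1000.0, cx=640.0, cy=360.0):
    """Pinhole projection of a camera-frame point (metres) to pixel coordinates."""
    x, y, z = point_xyz
    if z <= 0:
        raise ValueError("point must be in front of the camera")
    return (focal_px * x / z + cx, focal_px * y / z + cy)


near_small = (1.0, 0.5, 10.0)   # 10 m ahead
far_large = (2.0, 1.0, 20.0)    # 20 m ahead, twice as far off-axis

# Both points fall on the same pixel, so one image alone cannot tell them apart.
print(project(near_small))
print(project(far_large))
```

Vision systems therefore have to infer depth indirectly, for example from multiple cameras, motion between frames, or learned cues about object size.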

Tesla's former head of AI and vision Andrej Karpathy has argued that the world is structured for visual recognition and that LiDAR struggles to distinguish some objects that vision can, for example plastic bags versus tires. In his view LiDAR can act as a shortcut that avoids solving key visual recognition problems needed for autonomous driving.

Other mainstream sensors include millimeter-wave radar and ultrasonic sensors (sometimes called ultrasonic radar). Millimeter-wave radar offers the longest detection range, often exceeding 200 meters, and operates effectively in adverse weather and at night, making it a near-standard part of autonomous driving stacks. Ultrasonic sensors have the shortest range and are used for near-field monitoring within a few meters, supporting blind-spot detection, lane-change assistance, and parking.

What is the core dispute between the camps?

Different sensors emphasize different capabilities. Early autonomous driving efforts focused on LiDAR-centric perception, but most modern systems use multi-sensor fusion; a minority favor vision-centric architectures.

Waymo, which currently operates at a high level of autonomy, uses cameras, millimeter-wave radar, LiDAR, and audio detection systems. Waymo's camera system comprises several high-resolution cameras designed for long-range performance under varying light conditions; its millimeter-wave radar senses objects and motion effectively in day, night, rain, and snow; LiDAR provides 360-degree ranging.

Among major players, only Tesla has fully rejected LiDAR. For all of LiDAR's detection capability, its most significant drawback is cost: according to an industry report, a LiDAR unit can cost over 20,000, while cameras cost at most 2,000 and radar is even cheaper. Other concerns are the current maturity of LiDAR technology and the effect of the units on vehicle aesthetics.

Recent reports indicate Apple is negotiating with multiple companies to find smaller, cheaper, and more easily mass-produced next-generation LiDAR sensors for automotive use; some sources suggest Apple may be developing LiDAR technology internally.

Musk has offered technical reasons for rejecting LiDAR. He argues that some companies use inappropriate wavelengths for active sensing: he has criticized active systems that operate in the 400 nm to 700 nm range, which is the visible-light band that cameras already cover passively, and he prefers longer wavelengths for automotive sensing.
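For a sense of scale, wavelength and frequency are related by wavelength = c / frequency. The sketch below compares the 400 nm to 700 nm band with the 24 GHz and 77 GHz automotive radar bands discussed in the market section. The article does not say which longer wavelengths Musk has in mind, so using radar as the point of comparison is an assumption made for illustration.

```python
SPEED_OF_LIGHT_MPS = 299_792_458.0  # metres per second, in vacuum


def wavelength_m(frequency_hz: float) -> float:
    """Free-space wavelength for a given frequency."""
    return SPEED_OF_LIGHT_MPS / frequency_hz


def frequency_hz(wavelength_m_value: float) -> float:
    """Frequency for a given free-space wavelength."""
    return SPEED_OF_LIGHT_MPS / wavelength_m_value


for ghz in (24, 77):
    print(f"{ghz} GHz radar: {wavelength_m(ghz * 1e9) * 1e3:.2f} mm")

for nm in (400, 700):
    print(f"{nm} nm light: {frequency_hz(nm * 1e-9) / 1e12:.0f} THz")
```

A 77 GHz signal has a wavelength just under 4 mm, which is where the name "millimeter-wave" comes from and is several thousand times longer than the 400 nm to 700 nm band.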

While Musk's view diverges from much of the industry, there is broad industry recognition that cameras play a central role. Cameras provide the highest information density of any sensor and produce far more data than other sensor types, which places them at the center of perception fusion.

The disagreement is over whether computer vision and AI are mature enough today to deliver full perception on their own. Many autonomous driving companies acknowledge that vision has great potential and may reduce reliance on LiDAR over time, but they do not believe today's vision and AI systems can handle perception alone. Musk, by contrast, believes that integrating LiDAR diverts development toward a suboptimal technical path and that the ultimate goal is camera-based perception.

Tesla and the pure vision approach

Why is Tesla confident in not using LiDAR?

Tesla's Autopilot perception relies mainly on three forward-facing cameras, two forward-side cameras, two rear-side cameras, one rear camera, 12 ultrasonic sensors, and one forward millimeter-wave radar. The eight cameras provide 360-degree visual coverage, while the radar measures the distance and relative velocity of objects ahead regardless of weather. In Tesla's view, this configuration covers the functions LiDAR would otherwise serve while lowering system cost.
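The sensor list above can be written down as a small configuration table, which makes the totals easy to check. The sketch below is only a bookkeeping aid: the `SensorGroup` type is hypothetical, and the "perimeter" position label for the ultrasonic sensors is an assumption, since the article does not say where they are mounted.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class SensorGroup:
    kind: str
    position: str
    count: int


# Counts taken from the description above; position labels are illustrative.
AUTOPILOT_SUITE = [
    SensorGroup("camera", "forward", 3),
    SensorGroup("camera", "forward-side", 2),
    SensorGroup("camera", "rear-side", 2),
    SensorGroup("camera", "rear", 1),
    SensorGroup("ultrasonic", "perimeter", 12),  # position is an assumption
    SensorGroup("radar", "forward", 1),
]


def count_by_kind(suite):
    """Total number of sensors of each kind in a suite."""
    totals = Counter()
    for group in suite:
        totals[group.kind] += group.count
    return dict(totals)


print(count_by_kind(AUTOPILOT_SUITE))
```

The camera groups sum to the eight cameras mentioned in the text, and no LiDAR entry appears in the suite.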

Crucial to Tesla's approach is its neural-network-based image recognition, supported by Tesla's in-house compute chip and extensive software stack. Every Tesla driver contributes data for neural-network training: each vehicle's driving behavior and edge cases help improve the system.

According to MIT estimates based on Tesla's disclosed deliveries and driving distances, by 2019 Tesla had accumulated around 480 million miles of road-test data, with an estimated 1.5 billion miles by 2020. Tesla claims its road data accounts for the majority of the industry's total driving data.

Karpathy has explained that Tesla's AI software processes lane markings, traffic, and pedestrian information from vision sensors, matches these signals to known objects, and then makes decisions. For labeling, Tesla also experiments with localized automated labeling to improve recognition rates. Only images that the system cannot interpret, or that produce ambiguous results, are uploaded to the cloud, where engineers label them and feed them back into training until the network learns the scenario.
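The selective-upload idea can be sketched as a confidence filter: frames the model is sure about stay on the vehicle, and the rest are queued for human labeling. This is an illustration of the general pattern only; the `Detection` type, the `triage` function, and the 0.8 threshold are hypothetical and do not describe Tesla's actual pipeline.

```python
from typing import NamedTuple


class Detection(NamedTuple):
    frame_id: str
    label: str
    confidence: float  # 0.0 to 1.0, from a hypothetical on-board model


def triage(detections, confidence_threshold=0.8):
    """Split detections into those handled locally and those sent for labeling."""
    keep_local, upload_queue = [], []
    for det in detections:
        if det.confidence >= confidence_threshold:
            keep_local.append(det)
        else:
            upload_queue.append(det)  # ambiguous: needs a human label
    return keep_local, upload_queue


frames = [
    Detection("f001", "pedestrian", 0.97),
    Detection("f002", "plastic_bag", 0.41),
    Detection("f003", "lane_marking", 0.88),
]
local, to_label = triage(frames)
print([d.frame_id for d in to_label])
```

Uploading only the uncertain cases keeps bandwidth and labeling effort focused on the scenarios the network has not yet learned.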

Road conditions, traffic rules, and severe weather vary widely between countries, and rare long-tail events such as floods, fires, or volcanic ash add further variety. To cover such cases, each human takeover while Autopilot is active is recorded, and the system learns from human decisions and driving behavior.

Sensor market landscape

Finally, a brief overview of current market structure for cameras, LiDAR, and radar.

For automotive cameras, barriers mainly involve module packaging and customer relationships. International suppliers such as Panasonic and Sony hold substantial shares, but the market is not highly concentrated: Panasonic holds about 20% market share, and the next eight major competitors have relatively similar shares. Chinese suppliers such as Sunny Optical, OFILM, and Desay SV are entering automotive camera module packaging and manufacturing.

Foreign suppliers currently dominate, but as Chinese domestic suppliers mature and scale, their advantages in responsiveness and cost may allow them to displace foreign vendors.

For millimeter-wave radar, the global market is largely dominated by established automotive suppliers led by Bosch, with 77 GHz radar being mainstream. According to industry statistics, the Chinese 24 GHz automotive radar market is mainly led by Valeo, Hella, and Bosch, which together account for over 60% of shipments. The Chinese 77 GHz automotive radar market is led by Continental, Bosch, and Delphi, which together account for over 80% of shipments. Overall, the Chinese market remains under the dominance of foreign oligopolies.

Foreign component giants such as Continental, Delphi, and Bosch also continue to introduce improved products. When domestic suppliers cannot clearly outcompete them on single-product performance or price, packaging full solutions, including system-level redundancy and customized services, is a viable way to differentiate.

Although LiDAR is not yet mature in the automotive domain, it has long been used in military and meteorological applications, albeit with limited demand. With growing demand for Level 3 autonomy, LiDAR may begin to penetrate consumer automotive markets.

For LiDAR manufacturers, the focus remains on lowering cost while maintaining basic performance. Only by reducing cost sufficiently can LiDAR enter the consumer market at scale.