Abstract

Extrinsic calibration between LiDAR and camera is a key step for sensor fusion in autonomous vehicles and robots. Most calibration methods rely on manually designed features and require either many extracted features or specific calibration targets. With the development of deep learning, some approaches regress the six-degree-of-freedom (6-DOF) extrinsic parameters directly with convolutional neural networks. However, reported results show that such learning-based approaches often perform worse than non-learning methods. This article presents an online extrinsic calibration method that combines deep learning and geometric techniques. A two-channel image called a calibration flow is defined to represent the deviation from the initial projection to the ground truth. Using the calibration flow, 2D-3D correspondences are constructed, and the EPnP algorithm within a RANSAC scheme estimates the extrinsic parameters from them. A semantic initialization method that introduces instance centroids is also proposed. Experiments on the KITTI dataset show that the proposed method outperforms current state-of-the-art approaches.

Introduction

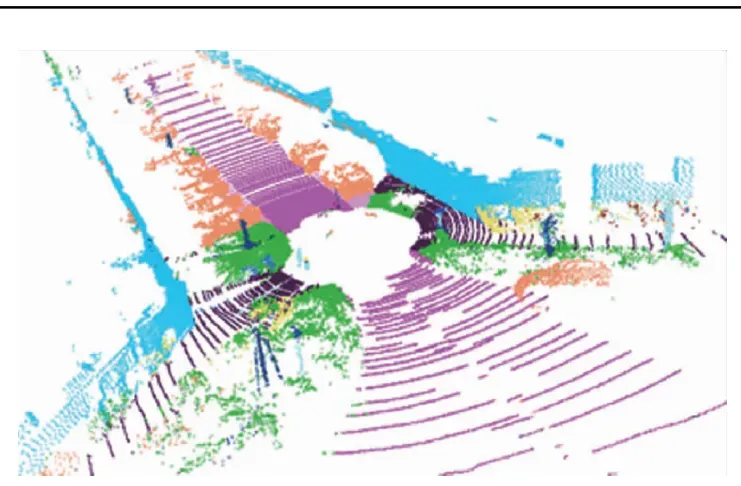

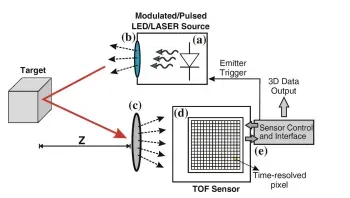

Scene perception is an important aspect of autonomous driving and robot navigation. Robust perception of objects in the environment relies on multiple onboard sensors mounted on the platform. LiDAR sensors provide large-scale, high-accuracy spatial measurements of the scene, but have lower resolution, especially in the horizontal direction, and lack color and texture. Camera sensors provide high-resolution RGB images but are sensitive to illumination changes and do not directly provide distance information. Fusing LiDAR and camera data compensates for these shortcomings. Effective fusion of these two sensors is critical for 3D object detection and semantic mapping. Extrinsic calibration, which estimates the rigid-body transform between a LiDAR and a camera, is an essential part of this fusion.

Early LiDAR-camera calibration methods relied on specific calibration targets such as checkerboards or custom objects. They obtained 2D feature points (in camera images) and 3D feature points (in LiDAR point clouds) through manual or automatic annotation, then computed the extrinsic parameters from those correspondences. During vehicle operation, however, external factors such as vibration can introduce uncontrolled drift in the extrinsic parameters. To address this, a series of targetless online self-calibration methods have been proposed to improve adaptability. These online methods mainly use intensity and edge correlations between images and point clouds to compute the extrinsic parameters. They typically depend on an accurate initial extrinsic estimate or on additional motion information for initialization. Moreover, the features used to build the calibration cost functions are often hand-crafted and scene-dependent, so these methods fail when such features are absent.

Recently, some methods have attempted to predict the transform between LiDAR and camera using deep learning. Compared with traditional methods, these approaches still exhibit large calibration errors and therefore do not meet practical calibration requirements. In real applications, when sensor parameters change, substantial new training data and fine-tuning are required, which limits generalization.

This article proposes an automatic online LiDAR-camera self-calibration method, CFNet. CFNet is fully automatic and does not require specific calibration scenes, calibration targets, or an initial calibration. We define a calibration flow that represents the offset between the initial projected points and the ground truth. The predicted calibration flow corrects the initial projections to build accurate 2D-3D correspondences. EPnP within a RANSAC scheme computes the final extrinsic parameters. To make CFNet fully automatic, we propose a semantic initialization algorithm based on instance centroids. The main contributions of CFNet are:

- To our knowledge, CFNet is the first method that integrates deep learning and geometric techniques for LiDAR-camera extrinsic calibration. Compared with directly predicting calibration parameters, CFNet demonstrates stronger generalization.

- We define a calibration flow to predict the displacement between initial projection points and ground truth. The calibration flow can move initial projections to the correct positions and thereby build accurate 2D-3D correspondences.

- We introduce 2D/3D instance centroids (IC) for estimating initial extrinsic parameters. With this semantic initialization, CFNet can perform LiDAR-camera calibration automatically.
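The calibration flow described in the contributions above can be illustrated with a minimal NumPy sketch. It assumes a pinhole camera without distortion and keeps one flow vector per LiDAR point instead of rasterizing a two-channel image. The names `project` and `calibration_flow`, the intrinsic matrix `K`, and all numeric values are hypothetical choices for illustration, not taken from the paper. Here the flow is computed from a known ground-truth transform, as a training target would be; at inference time CFNet would predict it instead.

```python
import numpy as np

def project(points, T_cam_lidar, K):
    """Project Nx3 LiDAR points to pixels using a 4x4 extrinsic and a 3x3 intrinsic."""
    pts_h = np.c_[points, np.ones(len(points))]   # homogeneous coordinates, Nx4
    cam = (pts_h @ T_cam_lidar.T)[:, :3]          # points in the camera frame
    uvw = cam @ K.T                               # pinhole projection
    return uvw[:, :2] / uvw[:, 2:3], cam[:, 2]    # pixel coordinates, depth

def calibration_flow(points, T_init, T_gt, K):
    """Per-point offset from the initial projection to the ground-truth projection."""
    uv_init, z_init = project(points, T_init, K)
    uv_gt, z_gt = project(points, T_gt, K)
    valid = (z_init > 0) & (z_gt > 0)             # keep points in front of the camera
    return uv_init, uv_gt - uv_init, valid

# Hypothetical intrinsics, points (already expressed with z pointing forward),
# and transforms; T_init is a deliberately miscalibrated copy of T_gt.
K = np.array([[700.0, 0.0, 600.0], [0.0, 700.0, 180.0], [0.0, 0.0, 1.0]])
points = np.array([[1.0, 0.5, 10.0], [-2.0, 0.2, 15.0], [0.5, -1.0, 8.0]])
T_gt = np.eye(4)
T_init = np.eye(4)
T_init[:3, 3] = [0.10, -0.05, 0.20]

uv_init, flow, valid = calibration_flow(points, T_init, T_gt, K)
corrected_uv = uv_init + flow   # each 3D point now pairs with its corrected pixel
```

Adding the flow to the initial projections recovers the ground-truth pixel positions, so every row of `points` together with the matching row of `corrected_uv` forms one 2D-3D correspondence.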

Summary of Related Work

Early LiDAR-camera calibration methods rely on specific targets and obtain 2D-3D correspondences through manual or automatic annotation. Targetless online methods use intensity and edge correlations between images and point clouds to compute the extrinsic parameters, but they depend on accurate initial parameters or on additional motion information for initialization.

Deep learning methods have two main drawbacks. Their calibration errors remain too large for practical use, and when sensor parameters change, large amounts of training data are required to fine-tune the network, which limits generalization.

To remove the dependence on an accurate initial estimate, CFNet introduces 2D-3D instance centroid estimation to initialize the extrinsic parameters.

Method

In this section, we first define the calibration flow. Then we describe the CFNet calibration method, including the network architecture, loss functions, semantic initialization, and training details. The proposed CFNet estimates the transform between a 3D LiDAR and a 2D camera in an automatic online extrinsic calibration framework.
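The final geometric step, estimating the extrinsic parameters from 2D-3D correspondences inside a RANSAC loop, can be sketched as follows. This is only an illustration under stated assumptions: to stay NumPy-only it swaps in a plain linear DLT pose solver for EPnP, and the function names, iteration count, and 2-pixel threshold are hypothetical. With OpenCV available, `cv2.solvePnPRansac` with `flags=cv2.SOLVEPNP_EPNP` would be closer to the scheme named in the text.

```python
import numpy as np

def pnp_dlt(pts3d, uv, K):
    """Linear (DLT) pose from >= 6 non-coplanar 2D-3D matches; a stand-in for EPnP."""
    xy = (np.c_[uv, np.ones(len(uv))] @ np.linalg.inv(K).T)[:, :2]  # normalized coords
    Xh = np.c_[pts3d, np.ones(len(pts3d))]
    zeros = np.zeros_like(Xh)
    A = np.vstack([np.hstack([Xh, zeros, -xy[:, :1] * Xh]),
                   np.hstack([zeros, Xh, -xy[:, 1:] * Xh])])
    P = np.linalg.svd(A)[2][-1].reshape(3, 4)     # null vector = [R | t] up to scale
    U, S, Vt = np.linalg.svd(P[:, :3])
    R, t = U @ Vt, P[:, 3] / S.mean()             # nearest rotation, rescaled translation
    if np.linalg.det(R) < 0:                      # resolve the sign ambiguity
        R, t = -R, -t
    return R, t

def reproject(pts3d, R, t, K):
    uvw = (pts3d @ R.T + t) @ K.T
    return uvw[:, :2] / uvw[:, 2:3]

def pnp_ransac(pts3d, uv, K, iters=200, thresh_px=2.0, seed=0):
    """Keep the pose with the most inliers, then refit on those inliers."""
    rng = np.random.default_rng(seed)
    best = np.zeros(len(uv), dtype=bool)
    for _ in range(iters):
        idx = rng.choice(len(uv), size=6, replace=False)
        R, t = pnp_dlt(pts3d[idx], uv[idx], K)
        err = np.linalg.norm(reproject(pts3d, R, t, K) - uv, axis=1)
        if (err < thresh_px).sum() > best.sum():
            best = err < thresh_px
    if best.sum() < 6:
        raise ValueError("not enough inliers to estimate a pose")
    return (*pnp_dlt(pts3d[best], uv[best], K), best)

# Synthetic check with hypothetical values: 60 matches, the first 10 corrupted.
K = np.array([[700.0, 0.0, 600.0], [0.0, 700.0, 180.0], [0.0, 0.0, 1.0]])
rng = np.random.default_rng(1)
pts3d = rng.uniform([-5.0, -2.0, 5.0], [5.0, 2.0, 30.0], size=(60, 3))
c, s = np.cos(0.1), np.sin(0.1)
R_true = np.array([[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]])
t_true = np.array([0.3, -0.1, 0.5])
uv = reproject(pts3d, R_true, t_true, K)
uv[:10] += np.array([40.0, -25.0])                # simulated wrong correspondences
R_est, t_est, inliers = pnp_ransac(pts3d, uv, K)
```

In this synthetic setup the corrupted matches are rejected as outliers and the refit on the remaining inliers recovers `R_true` and `t_true`. Real correspondences are noisy, so a production pipeline would normally follow the linear estimate with a nonlinear refinement.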

Experiments and Results

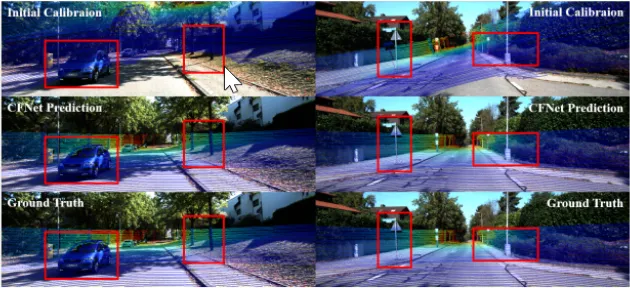

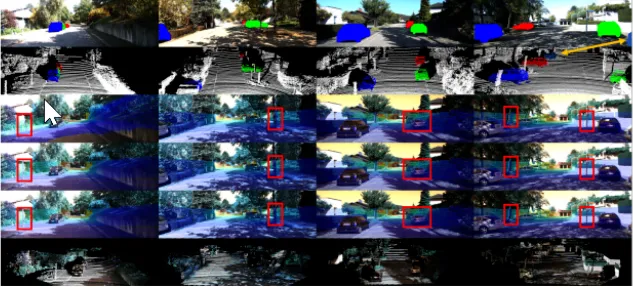

Across all tested sequences, the average translation error is below 2 cm and the average rotation error is below 0.13 degrees. Figure 5 shows two CFNet prediction examples. After recalibration, projected depth maps and RGB images align accurately with reference objects. Sequence 01 exhibits the largest calibration error among the tested sequences, primarily because it was collected in a highway scene, a scene type that was not included in the training data.

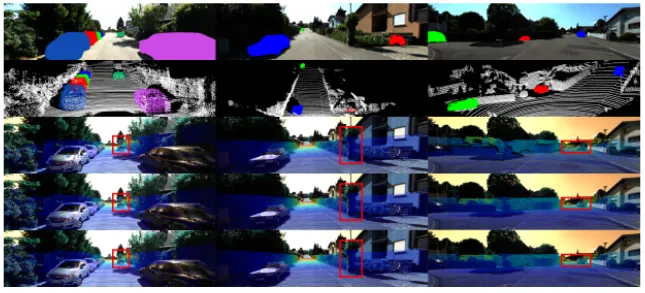

Compared with random initial extrinsic parameters, semantic initialization yields better initial estimates and consequently much smaller final calibration errors. The semantic initialization is automatic and requires at least three valid instance correspondences in the scene. The results show that the initial estimates it produces are less accurate in translation than in rotation. Even when the semantic initialization deviates substantially from the ground truth, CFNet can still predict accurate calibration parameters. Figure 6 shows CFNet prediction examples with semantic initialization. In the first column, heavy occlusion among detected instances leads to inaccurate 2D-3D instance matching and a large initial calibration error. The second column has less occlusion and a smaller initial error. In the third column, the detected 2D and 3D instances are complete and unoccluded, yielding highly accurate initial parameters with small deviation from the ground truth. In the last column, mismatches between 2D and 3D instances are observed, but a good initial calibration is still obtained. In all four examples, CFNet recalibrates accurately regardless of the magnitude of the initial error.
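The centroid step behind semantic initialization might look like the sketch below. It assumes that upstream 2D and 3D instance segmentation already provide an integer instance mask for the image and an integer label per LiDAR point, and that matching instances share the same ID. The matching procedure itself is not described here, so that shared-ID convention is purely an assumption, as are all function names. The sketch only builds the centroid correspondences and enforces the three-match minimum stated above; a PnP solver would then turn them into an initial pose.

```python
import numpy as np

def centroids_2d(instance_mask):
    """Map instance id -> (u, v) pixel centroid; id 0 is treated as background."""
    out = {}
    for inst in np.unique(instance_mask):
        if inst == 0:
            continue
        v, u = np.nonzero(instance_mask == inst)   # row index = v, column index = u
        out[int(inst)] = np.array([u.mean(), v.mean()])
    return out

def centroids_3d(points, labels):
    """Map instance id -> 3D centroid of the LiDAR points carrying that label."""
    out = {}
    for inst in np.unique(labels):
        if inst == 0:
            continue
        out[int(inst)] = points[labels == inst].mean(axis=0)
    return out

def centroid_correspondences(c2d, c3d, min_matches=3):
    """Pair centroids by shared id (assumed pre-matched); None if too few matches."""
    ids = sorted(set(c2d) & set(c3d))
    if len(ids) < min_matches:
        return None
    return np.array([c3d[i] for i in ids]), np.array([c2d[i] for i in ids])

# Hypothetical toy data: three instances in the image, four in the point cloud.
mask = np.zeros((6, 8), dtype=int)
mask[0:2, 0:2] = 1
mask[2:5, 4:7] = 2
mask[5, 7] = 3
points = np.array([[1.0, 0.0, 10.0], [3.0, 0.0, 10.0], [0.0, 2.0, 20.0],
                   [0.0, 4.0, 20.0], [5.0, 5.0, 5.0], [9.0, 9.0, 9.0]])
labels = np.array([1, 1, 2, 2, 3, 4])

c2d = centroids_2d(mask)
c3d = centroids_3d(points, labels)
matches = centroid_correspondences(c2d, c3d)   # (3D centroids, 2D centroids) or None
```

Instance 4 appears only in the point cloud, so it is dropped and exactly three correspondences remain, which is the minimum the text requires.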

Calibration results on the original KITTI recordings indicate that CFNet's average translation error is 0.995 cm (X, Y, Z: 1.025 cm, 0.919 cm, 1.042 cm), and the average angular error is 0.087 degrees (roll, pitch, yaw: 0.059, 0.110, and 0.092 degrees). CFNet outperforms RegNet and CalibNet. Although CalibNet was evaluated within a smaller miscalibration range (±0.2 m, ±20 degrees) than CFNet (±1.5 m, ±20 degrees), CFNet provides substantially better calibration performance.
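Per-axis errors of this kind can be computed from a predicted and a ground-truth transform as in the sketch below. The error definition (the residual transform decomposed into absolute translation components and ZYX Euler angles) is one common convention and is an assumption here, since the exact metric is not spelled out in this text. All function names and test values are hypothetical. The last two lines simply check that the reported averages equal the means of the per-axis values quoted above.

```python
import numpy as np

def rot_zyx(roll, pitch, yaw):
    """Rotation matrix R = Rz(yaw) @ Ry(pitch) @ Rx(roll); angles in radians."""
    cr, sr = np.cos(roll), np.sin(roll)
    cp, sp = np.cos(pitch), np.sin(pitch)
    cy, sy = np.cos(yaw), np.sin(yaw)
    Rx = np.array([[1.0, 0.0, 0.0], [0.0, cr, -sr], [0.0, sr, cr]])
    Ry = np.array([[cp, 0.0, sp], [0.0, 1.0, 0.0], [-sp, 0.0, cp]])
    Rz = np.array([[cy, -sy, 0.0], [sy, cy, 0.0], [0.0, 0.0, 1.0]])
    return Rz @ Ry @ Rx

def euler_zyx(R):
    """Inverse of rot_zyx away from gimbal lock: returns (roll, pitch, yaw)."""
    roll = np.arctan2(R[2, 1], R[2, 2])
    pitch = -np.arcsin(np.clip(R[2, 0], -1.0, 1.0))
    yaw = np.arctan2(R[1, 0], R[0, 0])
    return np.array([roll, pitch, yaw])

def make_T(rpy_deg, t_m):
    """Build a 4x4 transform from Euler angles in degrees and a translation in meters."""
    T = np.eye(4)
    T[:3, :3] = rot_zyx(*np.radians(rpy_deg))
    T[:3, 3] = t_m
    return T

def calibration_errors(T_pred, T_gt):
    """Absolute per-axis errors of the residual transform inv(T_pred) @ T_gt."""
    T_err = np.linalg.inv(T_pred) @ T_gt
    trans_cm = np.abs(T_err[:3, 3]) * 100.0
    rot_deg = np.abs(np.degrees(euler_zyx(T_err[:3, :3])))
    return trans_cm, rot_deg

# Hypothetical example: a prediction that differs from ground truth by a known residual E.
E = make_T([0.1, -0.2, 0.05], [0.01, -0.02, 0.005])
T_gt = make_T([1.0, 2.0, 3.0], [0.1, 0.2, 0.3])
T_pred = T_gt @ np.linalg.inv(E)
trans_cm, rot_deg = calibration_errors(T_pred, T_gt)

# Consistency check on the figures quoted in the text.
mean_trans_cm = np.mean([1.025, 0.919, 1.042])   # about 0.995 cm
mean_rot_deg = np.mean([0.059, 0.110, 0.092])    # about 0.087 degrees
```

With this residual, the function returns translation errors of 1.0, 2.0, and 0.5 cm and rotation errors of 0.1, 0.2, and 0.05 degrees, matching the values used to construct `E`.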

We also evaluated CFNet on the KITTI360 benchmark dataset. Results are shown in Figure 7. Even after re-training on a single cycle of data, CFNet achieves good results on the test sequences. This suggests that CFNet adapts well when sensor parameters such as camera focal length or LiDAR-camera extrinsics change.

Conclusion

This article presents a new online LiDAR-camera extrinsic calibration algorithm. To represent the deviation between the initial projection of LiDAR points and the ground truth, we define a calibration flow image. Inspired by optical flow networks, we design a deep calibration flow network, CFNet. CFNet's predictions correct the initial projection points to build accurate 2D-3D correspondences. EPnP within a RANSAC scheme iteratively refines and estimates the extrinsic parameters. Experiments on KITTI show that CFNet outperforms RegNet and CalibNet, and additional experiments on KITTI360 validate the method's generalization.