0. Introduction

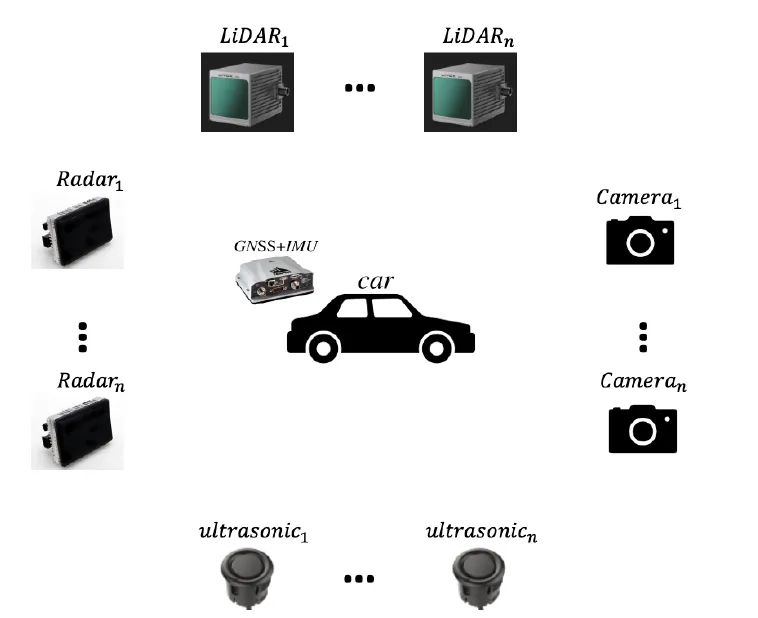

Extrinsic calibration between sensors has long been a challenging task for autonomous vehicles and mobile robots. This article reviews methods for offline extrinsic calibration and online calibration for common sensor combinations.

Common coordinate frames and typical extrinsic calibration problems include IMU/GNSS to vehicle, camera to camera, lidar to camera, lidar to lidar, lidar to IMU/GNSS, and lidar to radar.

1. Offline Extrinsic Calibration

1.1 IMU/GNSS to Vehicle Calibration

The goal is to estimate the transform T_car_imu between the IMU frame and the vehicle (car) frame. Many IMU/GNSS units output a tightly coupled, already-fused pose. Because the IMU runs at a high rate, this pose can be interpolated to the timestamps of other sensors, which raises the effective output frequency of the fused solution.
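Interpolating the high-rate IMU/GNSS pose to another sensor's timestamp can be sketched as follows. This is a minimal sketch, assuming each pose is stored as a position plus a unit quaternion in (w, x, y, z) order; positions are interpolated linearly and orientations with spherical linear interpolation (slerp). The function names are hypothetical.

```python
import numpy as np

def slerp(q0, q1, alpha):
    """Spherical linear interpolation between unit quaternions (w, x, y, z)."""
    q0 = np.asarray(q0, dtype=float) / np.linalg.norm(q0)
    q1 = np.asarray(q1, dtype=float) / np.linalg.norm(q1)
    dot = np.dot(q0, q1)
    if dot < 0.0:            # q and -q are the same rotation; take the shorter arc
        q1, dot = -q1, -dot
    if dot > 0.9995:         # nearly identical: fall back to normalized linear interpolation
        q = q0 + alpha * (q1 - q0)
        return q / np.linalg.norm(q)
    theta = np.arccos(dot)
    return (np.sin((1 - alpha) * theta) * q0 + np.sin(alpha * theta) * q1) / np.sin(theta)

def interpolate_pose(t, t0, p0, q0, t1, p1, q1):
    """Pose at time t between two timestamped poses (assumes t0 <= t <= t1)."""
    alpha = (t - t0) / (t1 - t0)
    p = (1 - alpha) * np.asarray(p0, float) + alpha * np.asarray(p1, float)
    return p, slerp(q0, q1, alpha)
```

For example, halfway between the identity and a 90-degree yaw, the sketch returns a 45-degree yaw.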

A typical procedure records a sequence of vehicle poses while the GNSS/IMU observes the vehicle's motion. In some setups, a manual survey or circular driving trajectories are used to associate vehicle-frame coordinates with GNSS coordinates.

With many such observations and the corresponding GNSS-relative transforms, each observation contributes a residual to a cost function, and the extrinsics are obtained by minimizing that cost.

The following approach jointly refines odometry in the vehicle frame and IMU poses. Because IMU and wheel-odometry timestamps are often not fully aligned, introduce a time offset delta_t to represent sampling time error. For each candidate delta_t, resample wheel odometry and compare it against IMU pose estimates, selecting the offset that minimizes alignment error. The chosen delta_t is treated as the sampling-time bias between the two streams.
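The offset search above can be sketched as a grid search. It assumes both streams provide a comparable scalar signal such as yaw rate (an assumption; any motion quantity observed by both sensors would do) and that linear resampling with `np.interp` is adequate. Under this sketch's sign convention, a positive delta_t means the odometry timestamps lag the IMU. The function name and the candidate grid are illustrative.

```python
import numpy as np

def estimate_time_offset(t_imu, yaw_rate_imu, t_odom, yaw_rate_odom, candidates):
    """Grid-search the offset delta_t that best aligns wheel odometry with the IMU.

    For each candidate, odometry is resampled at t_imu + delta_t and compared with
    the IMU signal; the candidate with the smallest mean squared error is returned.
    """
    best_dt, best_err = None, np.inf
    for dt in candidates:
        query = t_imu + dt
        # Compare only where the shifted query times fall inside the odometry log.
        valid = (query >= t_odom[0]) & (query <= t_odom[-1])
        if not np.any(valid):
            continue
        resampled = np.interp(query[valid], t_odom, yaw_rate_odom)
        err = np.mean((resampled - yaw_rate_imu[valid]) ** 2)
        if err < best_err:
            best_dt, best_err = dt, err
    return best_dt, best_err
```

A finer grid, or a local refinement around the best candidate, trades run time for resolution.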

For planar vehicle motion, only x, y, and yaw need to be estimated, and the constraints can be written linearly to derive closed-form calibration formulas.
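One way to write the planar problem linearly is sketched below. It assumes corresponding 2D positions of the same points are available in both frames (a hypothetical input, for example surveyed points expressed in the vehicle frame and in the IMU/GNSS frame). Treating cos(yaw) and sin(yaw) as independent unknowns makes every constraint linear in (cos(yaw), sin(yaw), x, y); yaw is then recovered with atan2.

```python
import numpy as np

def planar_calibration(p, q):
    """Solve q_i = R(yaw) @ p_i + [x, y] for (x, y, yaw) by linear least squares.

    Treating c = cos(yaw) and s = sin(yaw) as separate unknowns makes each
    point pair contribute two linear equations in (c, s, x, y).
    """
    p, q = np.asarray(p, float), np.asarray(q, float)
    n = len(p)
    A = np.zeros((2 * n, 4))
    b = q.reshape(-1)                                   # [qx0, qy0, qx1, qy1, ...]
    A[0::2] = np.column_stack([p[:, 0], -p[:, 1], np.ones(n), np.zeros(n)])  # x rows
    A[1::2] = np.column_stack([p[:, 1], p[:, 0], np.zeros(n), np.ones(n)])   # y rows
    c, s, x, y = np.linalg.lstsq(A, b, rcond=None)[0]
    yaw = np.arctan2(s, c)                              # atan2 ignores the scale of (c, s)
    return x, y, yaw
```

Because (c, s) is not forced onto the unit circle, noisy data can yield a slightly inconsistent pair; atan2 still returns a valid yaw, and x, y can be re-solved with yaw fixed if needed.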

1.2 Camera-to-Camera Calibration

Camera-to-camera calibration is essentially the same as stereo calibration: points with known 3D coordinates on a calibration board are observed in both images, and the goal is the transform T_cam_a_cam_b. The intrinsics and extrinsics map board coordinates to image (u, v) coordinates. Solve for each camera's T_camera_chessboard using PnP followed by nonlinear optimization, then combine multiple frames to constrain T_cam_a_cam_b.
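Combining per-frame board poses into a single T_cam_a_cam_b can be sketched as below. It assumes each camera's T_camera_chessboard has already been estimated for every frame (for example with OpenCV's `solvePnP`) and stored as a 4x4 matrix. Rotations are averaged by summing them and projecting back onto SO(3), one simple choice among several; OpenCV's `stereoCalibrate` instead refines the stereo extrinsics jointly over all views. Function names are hypothetical.

```python
import numpy as np

def invert(T):
    """Inverse of a 4x4 rigid transform."""
    R, t = T[:3, :3], T[:3, 3]
    Ti = np.eye(4)
    Ti[:3, :3] = R.T
    Ti[:3, 3] = -R.T @ t
    return Ti

def project_to_so3(M):
    """Closest rotation matrix (in Frobenius norm) to M."""
    U, _, Vt = np.linalg.svd(M)
    D = np.diag([1.0, 1.0, np.sign(np.linalg.det(U @ Vt))])
    return U @ D @ Vt

def cam_a_from_cam_b(T_a_board_list, T_b_board_list):
    """Average T_cam_a_cam_b = T_cam_a_board @ inv(T_cam_b_board) over frames."""
    estimates = [Ta @ invert(Tb) for Ta, Tb in zip(T_a_board_list, T_b_board_list)]
    T = np.eye(4)
    T[:3, :3] = project_to_so3(sum(E[:3, :3] for E in estimates))
    T[:3, 3] = np.mean([E[:3, 3] for E in estimates], axis=0)
    return T
```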

Common tools and implementations exist, including OpenCV utilities (such as `stereoCalibrate`) and community stereo-calibration projects.

1.3 Lidar-to-Camera Calibration

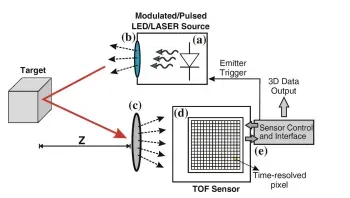

Calibration between lidar and camera is a key component of perception pipelines. The main idea is to obtain corresponding 3D points in both frames, measured directly by the lidar and recovered on the camera side from images of a known calibration target, and then solve for the rigid transform between the lidar and camera frames.
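Given matched 3D points in the lidar and camera frames, the rigid transform has a closed-form least-squares solution. The sketch below uses the SVD-based Kabsch method (a standard choice, not necessarily the one used by any particular tool) and assumes the correspondences are already known.

```python
import numpy as np

def rigid_transform_3d(P_lidar, P_cam):
    """Least-squares R, t such that P_cam is approximately R @ P_lidar + t (Kabsch)."""
    P, Q = np.asarray(P_lidar, float), np.asarray(P_cam, float)
    mu_p, mu_q = P.mean(axis=0), Q.mean(axis=0)
    H = (P - mu_p).T @ (Q - mu_q)                  # 3x3 cross-covariance
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))         # guard against a reflection
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = mu_q - R @ mu_p
    return R, t
```

At least three non-collinear correspondences are needed; in practice many points over several board placements reduce the effect of noise.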

Because lidar scans can be distorted and board corners are hard to extract reliably from them, methods often rely on robust geometric fitting. One approach uses RANSAC to detect the calibration board region in the point cloud and estimate an initial pose. If the board carries protrusions or other distinctive features, segmentation and clustering can extract the feature centers, which are then associated with the nearest image-derived points to estimate T_lidar_chessboard.
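The RANSAC board-detection step can be sketched as a plane fit. This is a minimal NumPy version; the threshold and iteration count are illustrative values, not tuned recommendations, and PCL's `SACSegmentation` with a plane model provides the same functionality in C++. The consensus set is refined with an SVD least-squares plane fit.

```python
import numpy as np

def ransac_plane(points, threshold=0.02, iterations=200, seed=0):
    """Fit a plane n . x + d = 0 to a point cloud with RANSAC.

    Returns the unit normal n, offset d, and a boolean inlier mask.
    """
    pts = np.asarray(points, float)
    rng = np.random.default_rng(seed)
    best_mask = None
    for _ in range(iterations):
        a, b, c = pts[rng.choice(len(pts), 3, replace=False)]
        n = np.cross(b - a, c - a)
        norm = np.linalg.norm(n)
        if norm < 1e-9:                    # degenerate (collinear) sample
            continue
        n /= norm
        d = -n @ a
        mask = np.abs(pts @ n + d) < threshold
        if best_mask is None or mask.sum() > best_mask.sum():
            best_mask = mask
    # Refine: the least-squares plane normal is the smallest singular direction.
    inliers = pts[best_mask]
    centroid = inliers.mean(axis=0)
    n = np.linalg.svd(inliers - centroid)[2][-1]
    d = -n @ centroid
    return n, d, np.abs(pts @ n + d) < threshold
```

The inlier points can then be clustered or intersected with the board outline to estimate the board pose.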

The camera estimates T_camera_chessboard from the board images. Chaining the two board poses, T_camera_lidar = T_camera_chessboard · T_lidar_chessboard^-1, yields the transform between the lidar and camera frames.
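The chaining step, plus a projection of lidar points into the image, can be sketched as follows. The projection is an added sanity check (overlaying projected points on the image is a common way to inspect the result), not part of the method described above; it assumes a pinhole camera with intrinsic matrix K and undistorted pixels. Function names are hypothetical.

```python
import numpy as np

def camera_from_lidar(T_camera_chessboard, T_lidar_chessboard):
    """Chain the two board poses: T_camera_lidar = T_camera_chessboard @ inv(T_lidar_chessboard)."""
    return T_camera_chessboard @ np.linalg.inv(T_lidar_chessboard)

def project_lidar_points(points_lidar, T_camera_lidar, K):
    """Project lidar points to pixels with a pinhole model; points behind the camera are dropped."""
    pts = np.asarray(points_lidar, float)
    pts_h = np.hstack([pts, np.ones((len(pts), 1))])       # homogeneous coordinates
    pts_cam = (T_camera_lidar @ pts_h.T).T[:, :3]
    in_front = pts_cam[:, 2] > 0
    uvw = (K @ pts_cam[in_front].T).T
    return uvw[:, :2] / uvw[:, 2:3], in_front
```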

1.4 Lidar-to-Lidar Calibration

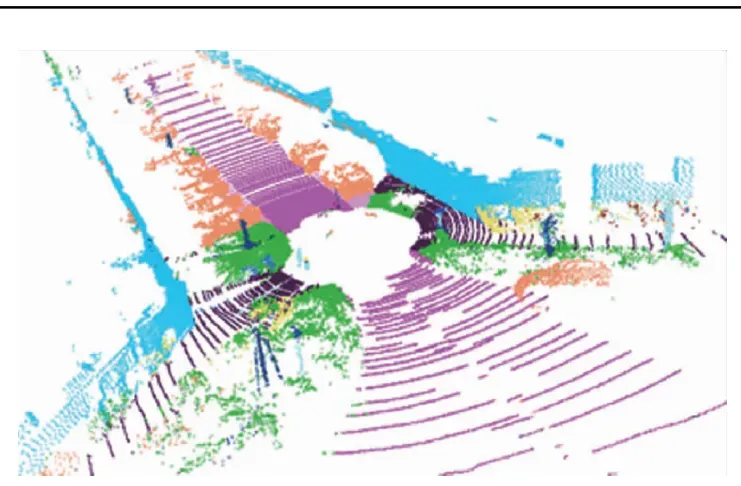

Lidar-to-lidar calibration aligns two point clouds, typically using point cloud registration libraries such as PCL. Standard ICP or feature-based matching methods are commonly applied.
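The core ICP loop can be sketched in NumPy as below; in practice PCL's `IterativeClosestPoint` (or a KD-tree based implementation) would be used. This is point-to-point ICP with brute-force nearest neighbours, so it only suits small clouds, and like any ICP it assumes a reasonable initial alignment.

```python
import numpy as np

def best_fit(P, Q):
    """Kabsch: R, t minimizing sum ||R p_i + t - q_i||^2 for paired rows of P and Q."""
    mu_p, mu_q = P.mean(axis=0), Q.mean(axis=0)
    U, _, Vt = np.linalg.svd((P - mu_p).T @ (Q - mu_q))
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    return R, mu_q - R @ mu_p

def icp(source, target, iterations=50, tol=1e-10):
    """Point-to-point ICP; returns R, t mapping source points onto target points."""
    src = np.asarray(source, float)
    tgt = np.asarray(target, float)
    R_total, t_total = np.eye(3), np.zeros(3)
    prev_err = np.inf
    for _ in range(iterations):
        moved = src @ R_total.T + t_total
        dists = np.linalg.norm(moved[:, None, :] - tgt[None, :, :], axis=2)
        nn = dists.argmin(axis=1)                      # closest target point per source point
        err = dists[np.arange(len(src)), nn].mean()
        if prev_err - err < tol:                       # stop when the error stops improving
            break
        prev_err = err
        # Re-solve the full transform from the original source to the current matches.
        R_total, t_total = best_fit(src, tgt[nn])
    return R_total, t_total
```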

1.5 Lidar to IMU/GNSS Calibration

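Lidar to IMU/GNSS calibration follows a similar approach to vehicle-frame calibration: estimate each sensor's relative motion over the same time intervals (for the lidar, typically by scan matching) and find the extrinsic transform that makes the two sets of motions consistent.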
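The rotation part of this alignment can be sketched as follows, assuming time-aligned pose sequences from both sensors as 4x4 matrices (hypothetical inputs: IMU/GNSS poses and lidar odometry poses). If X = T_imu_lidar is the unknown extrinsic, then T_world_lidar = T_world_imu · X, so the relative motions satisfy A_k X = X B_k; the lidar odometry's own origin cancels out of B_k. Consequently, the rotation axis of each IMU motion equals R_X times the axis of the matching lidar motion, and the sketch aligns these axes with Kabsch. It recovers rotation only and needs at least two motions with non-parallel axes; the translation follows from the same motion pairs, as in hand-eye calibration (Section 2.1).

```python
import numpy as np

def relative_motions(poses):
    """Relative motions inv(T_k) @ T_{k+1} from a sequence of 4x4 poses."""
    return [np.linalg.inv(a) @ b for a, b in zip(poses[:-1], poses[1:])]

def rotation_axis(R):
    """Unit rotation axis of R (assumes the rotation angle is well inside (0, pi))."""
    v = np.array([R[2, 1] - R[1, 2], R[0, 2] - R[2, 0], R[1, 0] - R[0, 1]])
    return v / np.linalg.norm(v)

def rotation_from_motion_pairs(motions_imu, motions_lidar):
    """Estimate R_imu_lidar by aligning rotation axes of paired relative motions.

    From A X = X B, axis(A) = R_X @ axis(B), so R_X is the rotation that best
    maps the lidar axes onto the IMU axes (Kabsch without centering).
    """
    a = np.array([rotation_axis(A[:3, :3]) for A in motions_imu])
    b = np.array([rotation_axis(B[:3, :3]) for B in motions_lidar])
    U, _, Vt = np.linalg.svd(b.T @ a)
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    return Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
```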

1.6 Lidar to Radar Calibration

Radar provides polar coordinates without reliable height information, so lidar-to-radar calibration often focuses on x, y, and yaw alignment. Radar tends to respond well to conical targets, which can improve calibration accuracy. Registration methods can also be applied to align radar detections to lidar point clouds.
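A planar radar-to-lidar alignment can be sketched as below. It assumes the same targets (for example, the conical reflectors mentioned above) have already been detected and matched in both sensors, and, consistent with the text, it ignores height. Radar detections are converted from polar to Cartesian coordinates, and (x, y, yaw) is solved in closed form by 2D Procrustes alignment. Function names are hypothetical.

```python
import numpy as np

def radar_to_cartesian(ranges, azimuths):
    """Convert radar (range, azimuth) detections to x, y in the radar frame."""
    r, az = np.asarray(ranges, float), np.asarray(azimuths, float)
    return np.column_stack([r * np.cos(az), r * np.sin(az)])

def align_2d(radar_xy, lidar_xy):
    """Closed-form (x, y, yaw) such that lidar_xy is approximately R(yaw) @ radar_xy + [x, y]."""
    P, Q = np.asarray(radar_xy, float), np.asarray(lidar_xy, float)
    mu_p, mu_q = P.mean(axis=0), Q.mean(axis=0)
    p, q = P - mu_p, Q - mu_q
    # The yaw maximizing sum(q . R p) is atan2(sum of 2D cross products, sum of dot products).
    yaw = np.arctan2(np.sum(p[:, 0] * q[:, 1] - p[:, 1] * q[:, 0]),
                     np.sum(p[:, 0] * q[:, 0] + p[:, 1] * q[:, 1]))
    c, s = np.cos(yaw), np.sin(yaw)
    x, y = mu_q - np.array([[c, -s], [s, c]]) @ mu_p
    return x, y, yaw
```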

1.7 Data Synchronization

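After extrinsic calibration, data from the different sensors must also be synchronized in time, because the extrinsic transform relates measurements correctly only when they refer to the same instant.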
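A simple software-side association step is sketched below: each message of one sensor is paired with the nearest-in-time message of another. It assumes both streams are already stamped on a common clock (for example through hardware triggering or PTP) and that the second list is sorted; the `max_gap` tolerance is a hypothetical parameter. ROS users may prefer `message_filters.ApproximateTimeSynchronizer`, which performs a similar association on live topics.

```python
import bisect

def match_nearest(stamps_a, stamps_b, max_gap):
    """Pair each timestamp in stamps_a with the nearest one in sorted stamps_b.

    Pairs further apart than max_gap seconds are discarded.
    Returns a list of (index_a, index_b).
    """
    pairs = []
    for i, t in enumerate(stamps_a):
        j = bisect.bisect_left(stamps_b, t)
        # The nearest neighbour is either just before or at/after the insertion point.
        candidates = [k for k in (j - 1, j) if 0 <= k < len(stamps_b)]
        if not candidates:
            continue
        k = min(candidates, key=lambda c: abs(stamps_b[c] - t))
        if abs(stamps_b[k] - t) <= max_gap:
            pairs.append((i, k))
    return pairs
```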

2. Online Extrinsic Calibration

Online calibration updates relative pose parameters between sensors while the vehicle is in operation. Unlike offline methods, online calibration cannot rely on calibration targets, making it more challenging. Vibration or external impacts during operation can change sensor mounting, so online methods can detect abnormal parameter changes and raise alerts.
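Detecting an abnormal parameter change can be sketched as comparing the current online estimate with the reference (offline) extrinsic. The sketch reports the rotation angle and translation distance of the difference transform; the alert thresholds (0.5 degrees, 5 cm) are hypothetical placeholders that would depend on the sensor setup.

```python
import numpy as np

def extrinsic_deviation(T_ref, T_now):
    """Rotation angle (rad) and translation distance (m) between two 4x4 extrinsics."""
    dT = np.linalg.inv(T_ref) @ T_now
    cos_angle = np.clip((np.trace(dT[:3, :3]) - 1.0) / 2.0, -1.0, 1.0)
    return np.arccos(cos_angle), np.linalg.norm(dT[:3, 3])

def check_extrinsic(T_ref, T_now, max_angle_rad=np.deg2rad(0.5), max_shift_m=0.05):
    """Return True (raise an alert) if the online estimate drifted beyond the limits.

    The default limits are illustrative placeholders, not recommended values.
    """
    angle, shift = extrinsic_deviation(T_ref, T_now)
    return angle > max_angle_rad or shift > max_shift_m
```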

2.1 Hand-Eye Calibration

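Hand-eye calibration formulates the problem as AX = XB, where A and B are the relative motions of the two sensors over the same time interval and X is the unknown extrinsic transform. Because it needs only each sensor's own motion estimates, it applies to lidar-to-RTK calibration and to other pairs of pose-providing systems.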
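A closed-form AX = XB solver is sketched below, assuming the pairs (A_i, B_i) are 4x4 relative motions over the same intervals. It uses the Kronecker-product linearization of the rotation equation followed by linear least squares for the translation. This is one of several standard formulations (OpenCV's `calibrateHandEye`, for instance, offers several methods), not necessarily what any specific calibration tool does. At least two motions with non-parallel rotation axes are required.

```python
import numpy as np

def solve_hand_eye(As, Bs):
    """Solve A_i X = X B_i for a 4x4 rigid X from paired relative motions.

    Rotation: R_A R_X = R_X R_B is linear in vec(R_X):
        (I kron R_A - R_B^T kron I) vec(R_X) = 0   (column-major vec).
    Translation: (R_A - I) t_X = R_X t_B - t_A, solved by least squares.
    """
    I3 = np.eye(3)
    M = np.vstack([np.kron(I3, A[:3, :3]) - np.kron(B[:3, :3].T, I3) for A, B in zip(As, Bs)])
    v = np.linalg.svd(M)[2][-1]               # null-space direction (unit norm)
    R = v.reshape(3, 3, order="F")            # undo the column-major vec
    U, _, Vt = np.linalg.svd(R)               # remove the scale: nearest orthogonal matrix
    R = U @ Vt
    if np.linalg.det(R) < 0:                  # the null vector's sign is arbitrary
        R = -R
    C = np.vstack([A[:3, :3] - I3 for A in As])
    d = np.concatenate([R @ B[:3, 3] - A[:3, 3] for A, B in zip(As, Bs)])
    t = np.linalg.lstsq(C, d, rcond=None)[0]
    X = np.eye(4)
    X[:3, :3], X[:3, 3] = R, t
    return X
```

With noisy motions, more pairs with diverse rotation axes improve conditioning; a nonlinear refinement over all pairs is a common final step.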

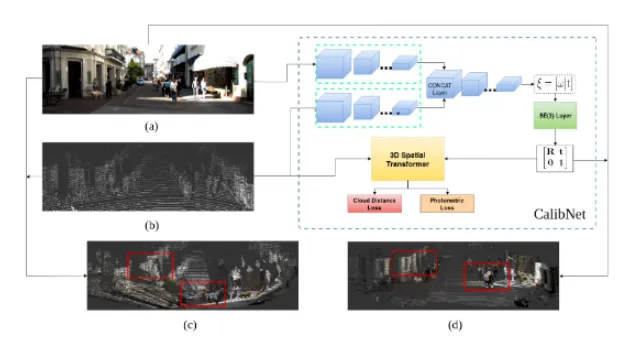

2.2 Deep Learning Methods

Learning-based approaches estimate optimal projection or transform parameters from input sensor data. These methods are an active research direction for online calibration, although they require substantial training data and validation. Example projects and implementations include learning-augmented or end-to-end calibration networks.