CIS image quality and pixels

Most mobile devices today, such as phones, tablets and laptops, include one or more camera sensors. The image quality produced by these sensors is determined by electronic structures called pixels, which convert optical signals into electrical signals. Among the many image quality metrics, signal-to-noise ratio (SNR) is the most representative. Achieving a higher image SNR requires increasing the signal term and reducing noise, which mainly depend on pixel performance metrics such as full-well capacity, sensitivity, pixel dark noise, readout circuit noise and pixel crosstalk.

Under bright conditions, full-well capacity and pixel crosstalk are the main factors affecting image SNR. Under low-light conditions, sensitivity, pixel dark noise, readout circuit noise and pixel crosstalk dominate. Technically, obtaining an ideal image SNR in low light is more difficult because many pixel performance metrics must be controlled and improved.
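
As a rough illustration of how these metrics interact, the sketch below uses a common textbook noise model in which photon shot noise, dark noise and read noise add in quadrature. The function name, its parameters and the example numbers are assumptions chosen for illustration, not values from any particular sensor, and crosstalk is not modelled.

```python
import math

def pixel_snr_db(signal_e, dark_e=0.0, read_noise_e=0.0, full_well_e=None):
    """Estimate pixel SNR in dB from electron counts.

    signal_e     -- photo-generated electrons collected during exposure
    dark_e       -- dark-current electrons collected during exposure
    read_noise_e -- readout circuit noise, in electrons RMS
    full_well_e  -- optional full-well capacity; the signal clips at this value
    """
    if full_well_e is not None:
        signal_e = min(signal_e, full_well_e)
    if signal_e <= 0:
        return float("-inf")
    # Shot-noise variance equals the collected charge (Poisson statistics);
    # independent noise sources add in quadrature.
    noise_e = math.sqrt(signal_e + dark_e + read_noise_e ** 2)
    return 20.0 * math.log10(signal_e / noise_e)

# Bright scene: the signal clips at full well, which caps the achievable SNR.
print(round(pixel_snr_db(50_000, read_noise_e=2.0, full_well_e=6_000), 1))
# Low light: dark noise and read noise take a visible share of the noise budget.
print(round(pixel_snr_db(20, dark_e=2.0, read_noise_e=2.0), 1))
```

Under this model a larger full well raises the bright-scene ceiling, while in low light every electron of dark or read noise matters because the signal itself is only a few tens of electrons.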

Evolution of CIS pixels

Over the past decade, demand for higher-resolution sensors has driven development of ever smaller pixels. Pixel scaling innovations can be divided into three stages: (1) sensitivity improvements; (2) crosstalk suppression; and (3) QUAD (or TETRA) pixel technology.

In the first stage, engineers compensated for the sensitivity loss caused by smaller pixels using techniques such as on-chip microlenses, thicker silicon photodiodes and back-side illumination. In the second stage, as pixel sizes approached 1 μm, the focus shifted to reducing crosstalk. To suppress optical crosstalk, new techniques such as metal grid structures in the color filter layer and deep-trench isolation around the silicon photodiodes were developed. In the third stage, as pixels shrank into the submicron range, researchers proposed QUAD/TETRA pixel architectures to recover sensitivity in low light. The basic operating principle of QUAD pixels is shown below, and most submicron pixels are expected to adopt QUAD approaches in the future.
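
One way to picture the QUAD/TETRA idea in code, assuming the usual arrangement in which each 2×2 group of neighbouring pixels sits under a single color filter: in low light the four values are summed into one larger effective pixel. The function name and sample values are hypothetical.

```python
def bin_quad(raw):
    """Sum each 2x2 same-color group into one output pixel.

    raw -- list of rows with even height and width, where every 2x2 block
           is assumed to share one color filter (QUAD layout).
    """
    height, width = len(raw), len(raw[0])
    binned = []
    for r in range(0, height, 2):
        row = []
        for c in range(0, width, 2):
            row.append(raw[r][c] + raw[r][c + 1] + raw[r + 1][c] + raw[r + 1][c + 1])
        binned.append(row)
    return binned

raw = [
    [10, 12, 30, 31],
    [11,  9, 29, 32],
    [28, 30,  5,  6],
    [31, 29,  4,  7],
]
print(bin_quad(raw))  # [[42, 122], [118, 22]]
```

Summing four pixels quadruples the signal while shot noise only doubles, so the shot-noise-limited SNR roughly doubles at the cost of half the resolution in each direction.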

CMOS image sensor structure

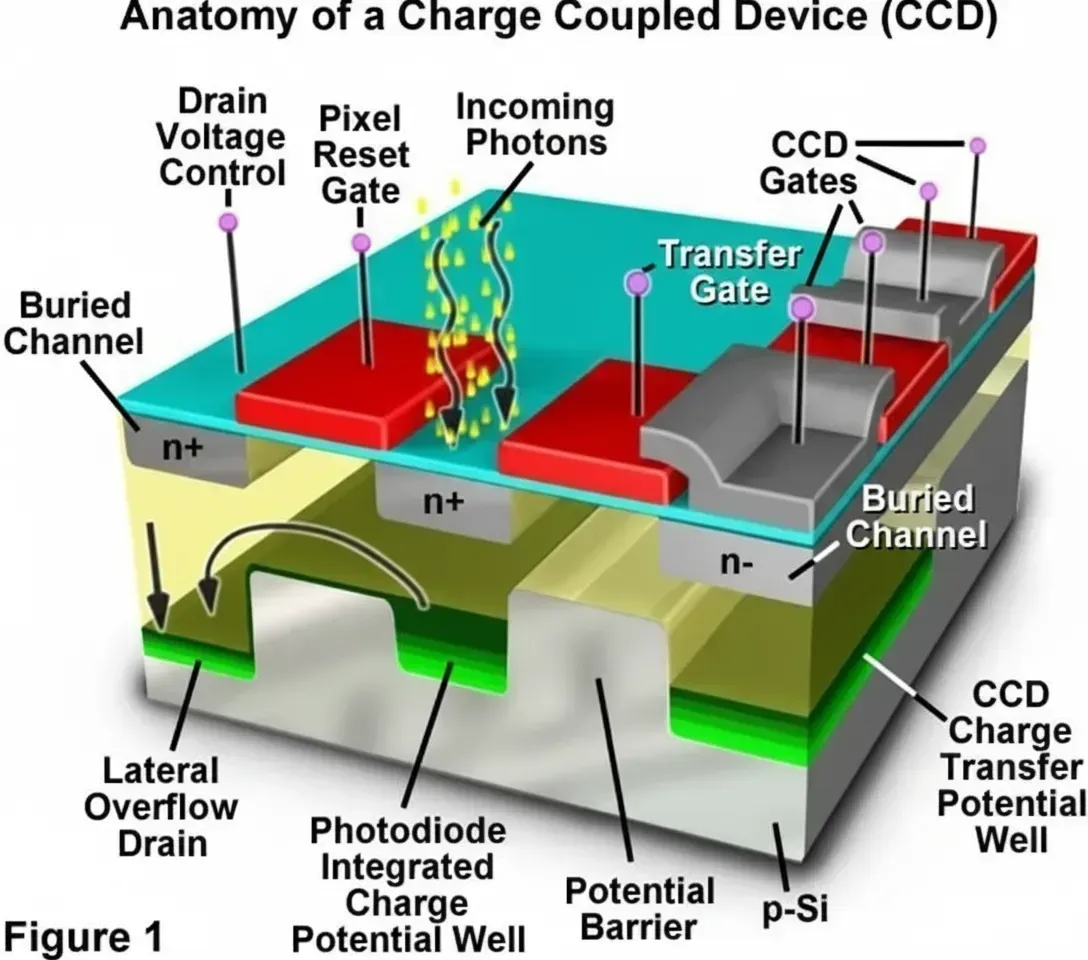

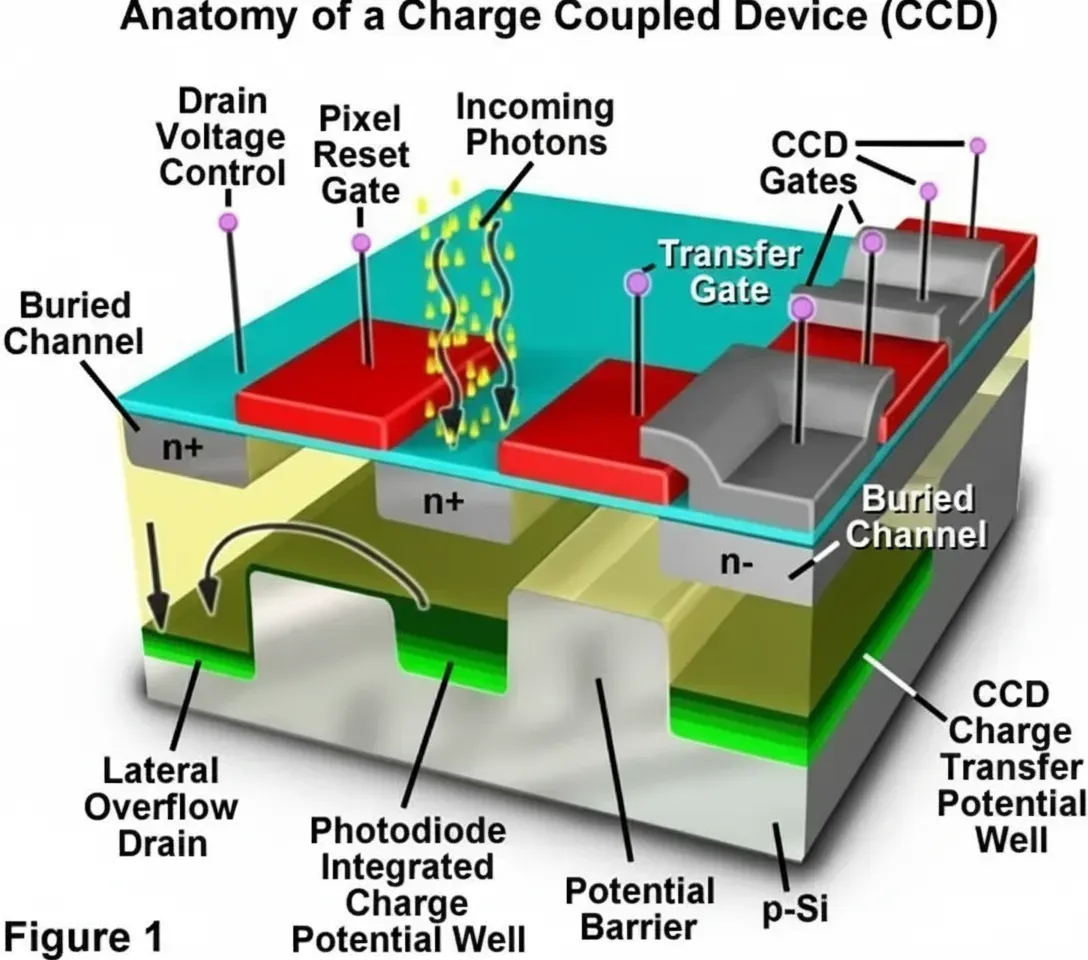

High-resolution solid-state imaging devices—mainly charge-coupled devices (CCD) and complementary metal-oxide-semiconductor (CMOS) image sensors—ushered in a new era for optical microscopy and other imaging applications. Both CCD and CMOS use the photoelectric effect: photons interacting with crystalline silicon generate electrons that are collected in potential wells. In CMOS sensors, the collected charge is converted to voltage in-pixel and then read out; in CCDs, charge is transferred through registers.

Photon flux incident on a doped silicon photodiode releases electrons proportional to the light intensity and wavelength. These electrons are collected in potential wells during the integration period and then converted to a voltage (for CMOS) or transferred to storage registers (for CCD). The measured voltage or charge is then converted by analog-to-digital converters to form the sensor's digital representation.

Photodiodes (pixels) are the key elements. Sensitivity is determined by the photodiode's maximum charge capacity, quantum efficiency, and the device's ability to accumulate charge without leakage. These factors are determined by photodiode physical size, aperture and the spatial and electronic relationship with adjacent elements. Another important factor is the charge-to-voltage conversion ratio, which determines how accumulated charge is converted to measurable voltage. Photodiodes are organized in rectangular grids, with array sizes ranging from small matrices (for example 128×128) to large arrays for HDTV and other high-resolution applications.

Color filters, microlenses and fill factor

To assemble an image, the signals from every pixel row and column must be accurately read out. In an optical microscope or camera, the objective gathers light and projects it onto the sensor surface where a 2D array of photodiodes forms the image elements. Array and pixel sizes determine spatial resolution. CMOS and CCD circuits are inherently monochrome, responding only to the total accumulated electrons unless color filters are used. Color is detected by passing incident light through red, green and blue filters or by depositing a mosaic pattern of micro color filters over the pixel array.

The most common color filter mosaic is the Bayer pattern, named after Kodak engineer Bryce E. Bayer. The mosaic arranges red, green and blue filters such that green appears twice as often as red or blue, reflecting human visual sensitivity which peaks near 550 nm. Microlenses are typically placed above each color filter to concentrate incident photons onto the photodiode active area.

Examining a photodiode element shows that a large portion of each pixel (in the example about 70%) is occupied by support transistors (amplifier, reset and row select) that are relatively opaque and do not contribute to photon detection. The remaining portion is the photosensitive area. This results in a fill factor—the fraction of pixel area that is photosensitive—often ranging from 30% to 80% depending on the device. Lower fill factors reduce sensitivity and SNR and limit dynamic range. Microlenses help by focusing more light onto the photosensitive region and can roughly double the effective sensitivity, but their efficiency varies with wavelength and incident angle.

Wavelength-dependent losses and quantum efficiency

Photon reflection and transmission depend on wavelength. Shorter wavelengths (<400 nm) are more likely to be reflected, and some sensor process layers, such as yellow polyimide coatings, can absorb much of the blue spectrum. Shorter wavelengths are absorbed near the front surface while longer wavelengths penetrate deeper; the longest visible wavelengths (>650 nm) may pass through the photosensitive region without being absorbed, introducing another loss channel. Minimizing polycrystalline silicon and polyimide layers is an important consideration for improving quantum efficiency across the visible spectrum.

Typical CMOS active pixel sensor (APS) pixels include an amplifier transistor acting as a source follower, a reset transistor and a row select transistor. During operation, the reset transistor initializes the photodiode to clear charge and bias it; during integration, photons generate electrons stored in silicon potential wells. At the end of integration, the row select connects the amplifier to its load and the source follower converts the charge to a voltage that appears on the column bus and is detected by the readout amplifier.

Fixed-pattern noise and correction

One limitation of three-transistor APS designs is fixed-pattern noise (FPN), caused by variations in amplifier gain and offset across the array due to process variations. This results in visible, reproducible noise patterns. In many cases, FPN can be reduced or eliminated by circuit design in the analog processing at the periphery, or by electronic correction with reference frames: subtracting a dark frame removes the offset component, and flat-field correction compensates the gain component.
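
Dark-frame subtraction can be sketched in a few lines. This is a minimal illustration that assumes the dark frame was captured with the same exposure settings as the image; the function name and pixel values are hypothetical.

```python
def subtract_dark_frame(raw, dark):
    """Remove per-pixel offset by subtracting a dark frame, clamping at zero."""
    return [
        [max(r - d, 0) for r, d in zip(raw_row, dark_row)]
        for raw_row, dark_row in zip(raw, dark)
    ]

# Hypothetical 2x3 example: a uniform scene value of 100 plus per-pixel offsets.
dark = [[3, 7, 2], [5, 1, 6]]
raw = [[103, 107, 102], [105, 101, 106]]
print(subtract_dark_frame(raw, dark))  # [[100, 100, 100], [100, 100, 100]]
```

This removes only the offset part of the pattern. Pixel-to-pixel gain differences would additionally need a reference image of a uniformly lit scene.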

Bayer mosaic and demosaicing

The Bayer filter's unbalanced nature—twice as many green filters as red or blue—raises questions about precise color reproduction per pixel. The typical transmission spectra of dyes used in Bayer filters show that the red filter often has higher quantum efficiency than green and blue, and the spectra overlap significantly, especially between 520 and 620 nm. Because of spectral overlap and the mosaic sampling pattern, sensor outputs must be converted to standard RGB format using demosaicing algorithms such as nearest neighbor, linear, cubic or spline interpolation. These algorithms estimate each pixel's missing color components by averaging selected neighboring pixels' color and intensity values.
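
The neighbor-averaging idea can be sketched for the green channel alone. The layout (RGGB, with red at the top-left) and the function name are assumptions for illustration; production demosaicing is considerably more elaborate.

```python
def interpolate_green(mosaic):
    """Return a full-resolution green plane from an RGGB Bayer mosaic.

    Green samples sit where (row + col) is odd. At red and blue sites the
    green value is estimated as the mean of the in-bounds 4-neighbours,
    which are all green in this layout. The mosaic must be at least 2x2.
    """
    height, width = len(mosaic), len(mosaic[0])
    green = [[0.0] * width for _ in range(height)]
    for r in range(height):
        for c in range(width):
            if (r + c) % 2 == 1:
                green[r][c] = float(mosaic[r][c])  # measured green sample
                continue
            neighbours = [
                mosaic[rr][cc]
                for rr, cc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1))
                if 0 <= rr < height and 0 <= cc < width
            ]
            green[r][c] = sum(neighbours) / len(neighbours)
    return green

# Hypothetical flat scene: red sites read 200, green 50, blue 90.
mosaic = [
    [200,  50, 200,  50],
    [ 50,  90,  50,  90],
    [200,  50, 200,  50],
    [ 50,  90,  50,  90],
]
green_plane = interpolate_green(mosaic)
print(green_plane[0])  # [50.0, 50.0, 50.0, 50.0]
```

On a flat scene the estimate is exact. Across a sharp edge the same averaging mixes values from both sides, which is one source of the artifacts that more advanced algorithms try to avoid.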

Some scenes are easier to reconstruct than others. For yellow light around 585 nm, for example, the red and green filters transmit similar amounts, so three of the four filters in each Bayer quadruplet (one red and two green) pass a comparable signal, which simplifies color estimation. In practice, demosaicing works well in smooth regions but can produce artifacts such as aliasing at edges. Some designers use CMY (cyan, magenta, yellow) filter arrays to increase sensitivity because CMY dyes can transmit more light, but CMY requires more complex color correction to transform sensor data to RGB for display or printing.

Noise sources and mitigation

CMOS sensors often exhibit noticeable noise in images. Advances in sensor integration and on-chip processing have suppressed many noise sources, but others remain challenging, such as photon shot noise, dark current, reset noise and thermal noise.

Reset noise, often called kTC noise, arises during photodiode reset. Its RMS charge is √(kTC), where k is Boltzmann's constant, T is the absolute temperature and C is the total capacitance at the amplifier input. Reset noise can limit SNR and is typically mitigated using correlated double sampling (CDS), which requires adding a fourth transistor per pixel to perform a reference measurement and subtraction.
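
To give a feel for the magnitude, the sketch below evaluates the standard kTC expression, RMS charge = √(kTC), and expresses it in electrons. The capacitance values and the 300 K default are assumptions for illustration.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23      # k
ELECTRON_CHARGE_C = 1.602176634e-19   # q

def reset_noise_electrons(capacitance_f, temperature_k=300.0):
    """RMS reset (kTC) noise expressed in electrons: sqrt(k*T*C) / q."""
    return math.sqrt(BOLTZMANN_J_PER_K * temperature_k * capacitance_f) / ELECTRON_CHARGE_C

# Hypothetical input capacitances, in femtofarads.
for c_ff in (1.0, 5.0, 10.0):
    print(c_ff, "fF ->", round(reset_noise_electrons(c_ff * 1e-15), 1), "e- rms")
```

Because the noise scales with the square root of capacitance, quadrupling C only doubles the reset noise in electrons. CDS sidesteps the problem by sampling the reset level and subtracting it from the signal level.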

Photon shot noise appears as random fluctuations due to the statistical nature of photon arrival and becomes dominant when the signal is much larger than the sensor's intrinsic background noise. Dark current generates spurious charge in the absence of illumination and varies across pixels and with operating conditions; it is temperature sensitive and difficult to eliminate. Pinned photodiode techniques, where a shallow P-type layer is applied to an N-well photosensitive region, can reduce dark current by keeping collected electrons away from the surface where defects cause leakage, and can improve blue response.

Thermal noise arises from transistors, capacitors and metal interconnects between pixels. It can be reduced by tuning imaging bandwidth, increasing output current or cooling the camera. Adding complex cooling like Peltier devices is not practical for low-cost sensors, so designers often limit amplifier bandwidth per pixel to reduce thermal noise.

Pixel architectures: photodiodes and photogates

Modern CMOS image sensors use two fundamental photosensitive pixel architectures: photodiodes and photogates. Photodiode-based pixels generally offer better visible and blue sensitivity, while photogates tend to have larger pixels, lower fill-factor and poorer blue response, but can achieve higher charge-to-voltage conversion gain and facilitate correlated double sampling for noise reduction.

Photogate APS designs borrow aspects of CCDs to reduce noise and improve image quality. Charge accumulated under the photogate is transferred in two stages to the output, allowing two sampling opportunities for CDS and improved noise suppression. Photogate architectures often perform better in low-light conditions, while photodiode-based CMOS sensors suit mid-performance consumer applications where low noise and wide dynamic range are not critical. Both benefit from low supply voltages and clocking similar to other integrated circuits.

CMOS sensor operation sequence

In many CMOS photodiode array designs, the active pixel region is surrounded by several rows and columns of optically shielded black pixels used for black-level compensation. The filter mosaic begins at the first unshielded pixel at the top-left. At the start of each integration cycle, on-chip timing and control circuits reset rows of pixels line by line. After integration, each pixel's integrated value is transferred to correlated double sampling circuits and then to horizontal shift registers. Once loaded, pixel information is serially shifted to analog video amplifiers whose gain is set by hardware or software. In digital-output sensors, each column may include its own ADC so that a whole row of pixels is converted in parallel; the resulting digital data are then output on a bus whose width matches the ADC bit depth.

Further processing removes defective pixels, compensates black level using the average signal from surrounding black pixels, and performs image reconstruction. Typical image processing steps include demosaicing, antialiasing, scaling, vignetting correction, geometric distortion correction, color balance, smoothing, sharpening and gamma correction. Processed images may be buffered in a digital signal processor before output.
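
The black-level step can be sketched as follows. This minimal version assumes a single global black level taken as the mean of the shielded pixels; real pipelines may compute it per row, per column or per color channel. The names and values are hypothetical.

```python
def compensate_black_level(active, shielded):
    """Subtract the mean of optically shielded pixels from the active pixels."""
    black_level = sum(shielded) / len(shielded)
    return [[max(value - black_level, 0.0) for value in row] for row in active]

shielded = [64, 66, 63, 67]          # hypothetical black-pixel readings, mean 65
active = [[64, 164], [320, 60]]
print(compensate_black_level(active, shielded))  # [[0.0, 99.0], [255.0, 0.0]]
```

Clamping at zero keeps pixels that read slightly below the black level from going negative.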

Because CMOS sensors can address each pixel individually, they support selective readout or windowing (region of interest), enabling pixel-accurate access to any area within the active region. This is useful for tracking moving objects over time within a sub-region, for electronic pan, tilt and zoom, and for accelerated readout. High-end sensors provide multiple readout modes such as progressive scan and interlaced readout. Electronic shutter implementations typically require adding transistors per pixel, which reduces fill factor; rolling shutter is a common compromise though it can produce motion distortions, while global shutter requires additional pixel transistors or larger pixels to maintain acceptable fill factor.
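
Windowed readout amounts to addressing a rectangular subset of rows and columns. The sketch below models it on an in-memory frame; the function name and arguments are hypothetical and do not correspond to any specific sensor's register interface.

```python
def read_window(frame, top, left, height, width):
    """Return only the pixels inside the requested region of interest."""
    if top < 0 or left < 0 or top + height > len(frame) or left + width > len(frame[0]):
        raise ValueError("window exceeds the active pixel area")
    return [row[left:left + width] for row in frame[top:top + height]]

# Hypothetical 4x6 frame whose pixel value encodes its position (row*10 + col).
frame = [[r * 10 + c for c in range(6)] for r in range(4)]
print(read_window(frame, top=1, left=2, height=2, width=3))  # [[12, 13, 14], [22, 23, 24]]
```

Reading 2 of 4 rows and 3 of 6 columns transfers a quarter of the pixels, which is where the accelerated readout comes from.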

Dynamic range and frame rate

Sensor dynamic range is the ratio of the maximum number of signal electrons a photodiode can accumulate to the total background noise (excluding photon shot noise) during a specific integration time. Background noise includes dark current noise and read noise. Dynamic range is reported in gray levels, decibels or bits; for example, a 12-bit ADC corresponds to 4,096 gray levels (~72 dB), while a 10-bit ADC corresponds to 1,024 gray levels (~60 dB).
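
The conversions between these units follow from DR(dB) = 20·log10(ratio). The sketch below checks the ADC figures and applies the same formula to a hypothetical pixel; the full-well and noise values are assumptions for illustration.

```python
import math

def dynamic_range_db(full_well_e, noise_floor_e):
    """Dynamic range in dB from full-well capacity and noise floor (electrons)."""
    return 20.0 * math.log10(full_well_e / noise_floor_e)

def adc_levels_and_db(bits):
    """Number of gray levels of an ideal ADC and the equivalent range in dB."""
    levels = 2 ** bits
    return levels, 20.0 * math.log10(levels)

for bits in (10, 12):
    levels, db = adc_levels_and_db(bits)
    print(bits, "bits ->", levels, "levels,", round(db, 1), "dB")

# Hypothetical pixel: 20,000 e- full well and 5 e- noise floor (a 4000:1 ratio).
print(round(dynamic_range_db(20_000, 5), 1), "dB")
```

Each additional ADC bit adds about 6 dB, so an ADC with fewer bits than the pixel's dynamic range calls for will limit the usable range.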

Greater sensor dynamic range improves the ability to capture both dark and bright parts of a scene and enhances quantitative measurement capability. CMOS sensors are also capable of high frame rates, suitable for timelapse and real-time video. Typical frame rates are 30–60 fps, while specialized high-speed imagers exceed 1,000 fps. Building a complete camera system also requires supporting circuitry such as coprocessors and external RAM.

Fabrication and process considerations

CMOS image sensors are manufactured in high-volume silicon fabs using standard silicon processes that also produce microprocessors, memory, microcontrollers and DSPs. The advantage is that timing logic, ADCs and digital circuitry can be integrated on the same die as photodiodes. However, standard CMOS processes often require modification to achieve low-noise, high-performance image sensors. For instance, using standard CMOS transistor junctions can produce high dark current and poor blue response when applied to photodiodes. Optimizing sensor processes requires trade-offs that make a standard logic process suboptimal without special steps.

Pixel sizes have shrunk from 10–20 μm dominant in the mid-1990s to 6–8 μm and below. Miniaturization for mobile and surveillance devices drives pixel sizes to 3–4 μm for multi-megapixel chips, requiring 0.25 μm or finer process nodes. Narrower line widths allow more transistors per pixel while maintaining acceptable fill factor. Many fabs do not natively include color filter and microlens steps, but these steps are increasingly adopted as market demand grows. Optical packaging for image sensors requires cleanroom glass processing equipment often not present in standard logic fabs, adding manufacturing cost.

Applications and sensor types

CMOS image sensors have rapidly expanded into many applications since the late 1990s, including fax machines, scanners, security cameras, toys, PC webcams and consumer cameras. They have since spread to phones, barcode readers, optical mice, automotive systems and appliances. CMOS sensors are also used in industrial inspection, weapon systems, fluid dynamics and medical diagnostics because of their high frame rate capability.

Sensor process families include front-side illuminated (FSI) and back-side illuminated (BSI) technologies. BSI places the metal interconnects behind the photodiode, on the side opposite the incoming light, so that the wiring no longer blocks or scatters light and SNR improves. Sony and others have developed stacked or 3D-integrated BSI processes that separate the photodiode and pixel transistor layers onto different substrates, doubling photodiode surface area and improving dynamic range and noise performance. 3D integration uses through-silicon vias and bonding techniques to connect separate silicon layers, enabling more complex readout and processing without compromising pixel area.

Trends: low-light, high-speed and 3D imaging

Low-light imaging: New processes have improved photo-conversion efficiency and, combined with low-noise readout circuits, significantly improve low-light performance.

High-speed imaging: 3D-integrated BSI enables pixel-level high-speed buffering that increases instantaneous frame rates, storing images in fast caches and later outputting at lower speeds. Event-based (neuromorphic) imaging offers an alternative by reporting only per-pixel changes, reducing data and enabling ultra-high-speed capture.
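
The data reduction from event-based readout can be illustrated by comparing two frames and keeping only the pixels that changed. This is a deliberately simplified, frame-based sketch: actual event sensors detect changes asynchronously inside each pixel, and the function name, threshold and data here are hypothetical.

```python
def changed_pixels(previous, current, threshold):
    """List (row, col, polarity) events for pixels whose value changed enough."""
    events = []
    for r, (prev_row, cur_row) in enumerate(zip(previous, current)):
        for c, (before, after) in enumerate(zip(prev_row, cur_row)):
            delta = after - before
            if abs(delta) >= threshold:
                events.append((r, c, 1 if delta > 0 else -1))
    return events

previous = [[10, 10, 10], [10, 10, 10]]
current = [[10, 30, 10], [2, 10, 11]]
print(changed_pixels(previous, current, threshold=5))  # [(0, 1, 1), (1, 0, -1)]
```

Only two of the six pixels produce output here; a static background produces none at all.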

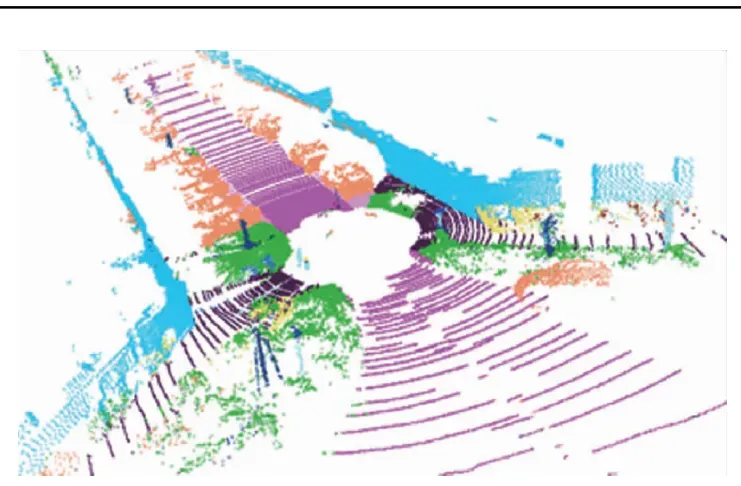

3D imaging: Compared to 2D images, 3D imaging adds distance information. Main 3D methods include stereovision, structured light and time-of-flight (ToF). The latter two are often embodied in LiDAR systems and use CMOS image sensors as the signal receiver, making the sensor a core component in 3D imaging.
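
For time-of-flight, the distance follows directly from the round-trip travel time of light, d = c·t/2. The sketch below assumes a direct measurement of that time; the function name and the example timing are illustrative.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0

def tof_distance_m(round_trip_s):
    """Distance to the target from a round-trip time of flight."""
    # The light travels to the target and back, hence the division by two.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0

print(round(tof_distance_m(10e-9), 3), "m")  # a 10 ns round trip is about 1.5 m
```

The relationship also shows why timing precision matters: 1 ns of timing error corresponds to roughly 15 cm of range error.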

CCD overview and hybrid approaches

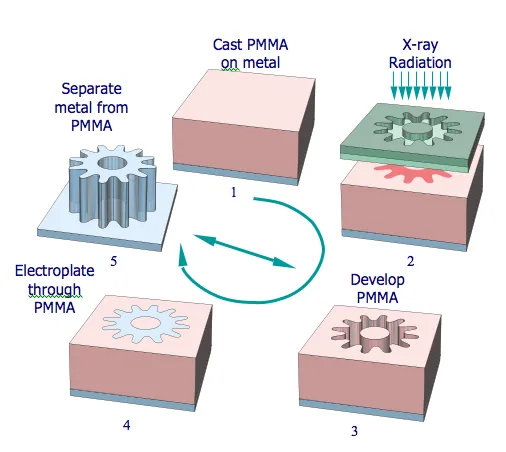

Charge-coupled devices (CCD) were invented in the late 1960s. In CCDs, charge generated by photons is stored in potential wells and transferred across the chip using gate voltages to be read out serially at an output amplifier. CCD fabrication uses multiple lithography steps, ion implantation, film deposition and metallization. After wafer singulation, die are packaged with a glass or quartz window to illuminate the photodiode array.

Electrons generated by incident photons are collected in depletion-region potential wells, isolated by channel stops. Clocking the poly-silicon gate electrodes modulates potentials to transfer charge. CCDs typically implement multi-phase clocking to move charge across each pixel. After parallel transfer to a serial register, electrons are serially shifted to the output amplifier and converted into an analog raster scan.
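
The parallel-then-serial transfer order can be sketched with plain lists. This toy model assumes the serial register sits below the last row and the output amplifier at its right-hand end; it ignores multi-phase clocking and transfer losses, and the function name is hypothetical.

```python
def ccd_readout(wells):
    """Read a 2D array of charge packets in CCD order.

    Each outer step moves the row nearest the serial register into it
    (parallel transfer); the register is then emptied one packet at a
    time through the single output amplifier (serial transfer).
    """
    rows = [list(row) for row in wells]   # copy so the input is left intact
    output = []
    while rows:
        serial_register = rows.pop()      # bottom row drops into the register
        while serial_register:
            # The packet nearest the amplifier leaves first.
            output.append(serial_register.pop())
    return output

wells = [[1, 2, 3],
         [4, 5, 6]]
print(ccd_readout(wells))  # [6, 5, 4, 3, 2, 1]
```

Every packet passes through the same amplifier, which helps uniformity but makes readout inherently serial, in contrast to the per-pixel conversion and column buses of CMOS sensors.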

Some specialized sensors, such as time-delay integration (TDI) imagers, still use CCD principles. As CCD process lines have largely exited commercial production, hybrid approaches embed CCD-like functions into CMOS processes—known as embedded CCD (ECCD) or TDI-CMOS. ECCD aims to combine CCD low-noise pixel characteristics with CMOS on-chip signal collection and quantization, leveraging both technologies' strengths. These hybrid processes are complex and currently implemented by few vendors.

High-sensitivity camera modules

High-sensitivity camera modules are core devices for low-light imaging applications. In moonlit or starlit environments, faint natural illumination is reflected by objects and collected by optics onto a high-sensitivity image sensor, which performs photoelectric conversion into raw digital images. On-chip image processing then produces images with moderate brightness, natural contrast and rich detail. Processed images can be displayed, stored, transmitted or used for recognition depending on the application.

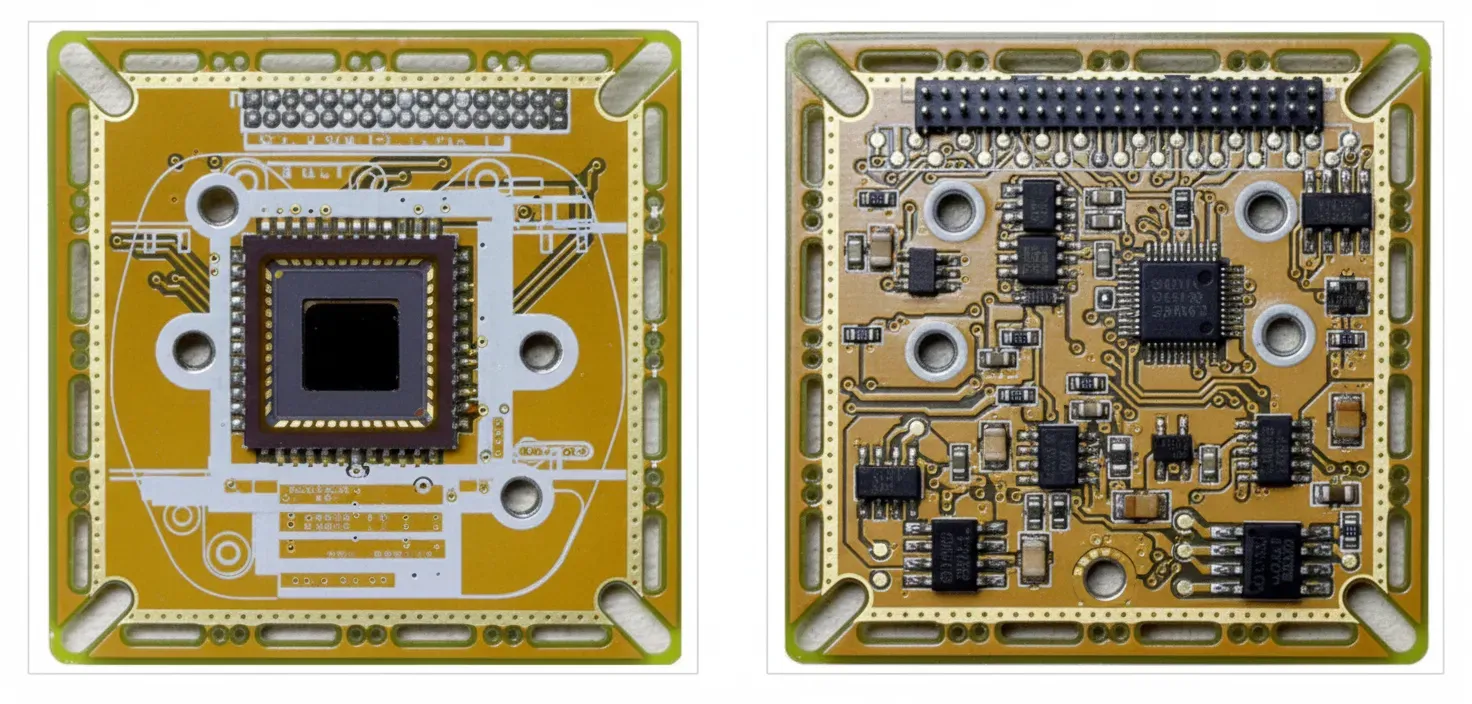

Chip and module product design

Chip design includes top-level system design, pixel design, analog circuit design, digital circuit design, full-custom layout and routing, parasitic extraction and post-layout simulation and verification.

- Top-level design: refine project specifications into functional modules and system architecture.

- Pixel design: design pixel structure, layout and timing to optimize sensitivity, dark current and other metrics.

- Analog circuit design: design ADCs, PLLs, row/column drivers, reference voltages and bandgap references.

- Digital circuit design: control logic for pixel timing, analog circuits and image processing, with simulation verification.

- Layout: custom layout and standard-cell placement and routing, followed by parasitic extraction.

- Post-layout simulation: verify design correctness and adjust designs that do not meet specifications.

- Full-chip functional verification and tape-out after passing verification.

Module design follows chip design and includes system design, hardware, FPGA development, software and design validation. Key steps include selecting power supplies, video output formats, FPGA model, mechanical dimensions and environmental/reliability requirements; schematic and board layout; FPGA implementation of image processing algorithms and Verilog code; and software development for OS drivers, application code and firmware. After development, full system testing ensures functionality, performance, environmental resilience and reliability meet goals.

After chip and module design, prototypes pass initial sample and production sample stages. After review and approval, final product documentation, technical files, process files and acceptance specifications are prepared and the product enters production qualification and mass production when quality and yield are stable.

CMOS image sensor industry and market overview

Image sensors convert optical signals into electrical signals using arrays of photosensitive elements and support circuits. They are widely used in imaging, digital TV and visual communication. Image sensors are mainly CCD and CMOS types. In CCDs, light is stored as charge and then transferred for readout. In CMOS sensors, charge is converted to voltage within each pixel and read out via column buses. CMOS leverages mainstream large-scale integrated circuit processes to integrate drive circuits, amplification, quantization, control and processing on the same chip. Compared to CCDs, CMOS reduces peripheral circuitry, and with high integration, low cost and low power has largely replaced CCDs in consumer electronics.

Key performance parameters

Different applications require different sensor characteristics. Main parameters include:

- Resolution: the number of pixels in horizontal and vertical directions, e.g., 640×480, 1280×720, 1920×1080. Higher resolution increases design and process complexity.

- Pixel size: smaller pixels increase resolution per area, larger pixels increase sensitivity and reduce noise.

- Sensitivity: measured in V/lx·s, the voltage output per lux-second; higher sensitivity gives higher image SNR.

- Format size: the diagonal of the sensor photosensitive area, usually quoted in inches as a nominal optical format (the physical diagonal is smaller than the quoted figure).

- Frame rate: frames per second; higher frame rates favor imaging fast motion but reduce exposure time and low-light performance.

- Dynamic range: ratio of maximum to minimum detectable light intensity; higher dynamic range captures richer scene detail.
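
Resolution, pixel size and the physical size of the photosensitive area are linked by simple geometry. The sketch below computes the active-area dimensions in millimetres; the 3 μm pitch is an assumed example value.

```python
import math

def sensor_size_mm(h_pixels, v_pixels, pixel_pitch_um):
    """Width, height and diagonal of the active area, in millimetres."""
    width = h_pixels * pixel_pitch_um / 1000.0
    height = v_pixels * pixel_pitch_um / 1000.0
    return width, height, math.hypot(width, height)

width, height, diagonal = sensor_size_mm(1920, 1080, 3.0)
print(round(width, 2), "x", round(height, 2), "mm, diagonal", round(diagonal, 2), "mm")
```

Halving the pixel pitch at the same resolution halves each dimension, which is the trade-off between pixel size and sensitivity noted above.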

Market scale

Global CMOS image sensor demand spans consumer electronics, security, automotive, industrial and medical fields. According to market research, the global CMOS image sensor market was estimated at $14.16 billion in 2018 and expected to grow to $23.52 billion by 2024, at a CAGR of about 8.83% between 2018 and 2024.

For the Chinese market, demand from consumer electronics, security and automotive sectors has grown rapidly. China has increased investment in AI and related fields, further driving downstream demand for image sensors. Market research estimated China’s CMOS image sensor market at $7.36 billion in 2018, growing to $12.81 billion by 2024 at a CAGR of roughly 9.68%—higher than the global average, indicating robust growth prospects.
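
The quoted growth rates can be cross-checked with the standard compound annual growth rate formula, CAGR = (end/start)^(1/years) − 1, taking 2018 to 2024 as six years. The function name is illustrative.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    return (end_value / start_value) ** (1.0 / years) - 1.0

# Market sizes in billions of US dollars, as quoted in the text.
print("global:", round(cagr(14.16, 23.52, 6) * 100, 2), "%")
print("China: ", round(cagr(7.36, 12.81, 6) * 100, 2), "%")
```

Both results agree with the stated figures of about 8.83% and 9.68% to within rounding.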

Downstream industries and trends

Security: Video surveillance systems require large numbers of cameras. Global video surveillance market size is growing and is expected to expand significantly, driving camera demand.

Automotive: Image sensors are used in rear-view cameras, surround-view systems, camera monitoring systems, front-view cameras, driver monitoring systems and cabin monitoring. As automotive automation and intelligence progress, sensors must deliver clear imaging across varied lighting conditions for driver assistance and autonomous driving.

Consumer electronics: Mobile devices have adopted multi-camera systems and biometric features like under-display fingerprint sensing and face recognition. These trends increase demand for diverse sensor types.

Overall, CMOS image sensors are core components across security, automotive and consumer applications, and demand will continue to grow.

Process innovation and customization

CMOS sensor manufacturing processes include front-side illuminated (FSI) and back-side illuminated (BSI) technologies. BSI reduces scattering and improves SNR. Stacked two-layer transistor pixels (stacked BSI) separate photodiodes and pixel transistors onto different substrates to double photodiode surface area and increase saturation signal, improving dynamic range and noise. This relies on precise alignment and advanced bonding processes allowing higher thermal budgets for integration.

3D integrated BSI further separates readout circuits and pixel arrays onto different silicon layers connected by through-silicon vias and other bonding methods. This reduces interference from processing circuits, improves SNR and allows more complex on-sensor processing in the same area. Different application domains show strong customization: mobile devices push for miniaturization and stacked BSI, while high-sensitivity imaging, machine vision and medical imaging emphasize sensitivity, dynamic range, artifact suppression and longevity, often requiring thick epitaxial layers, deep-trench isolation and other process refinements.

Emerging directions: low-light, ultra-high-speed and 3D

Low-light imaging: New processes and low-noise readouts have significantly improved low-illumination performance.

High-speed imaging: 3D integration enables pixel-level buffering to increase instantaneous frame rates. Event-based imaging significantly reduces redundant background information for ultra-high-speed capture.

3D imaging: Techniques such as stereovision, structured light and time-of-flight are used for depth sensing. CMOS sensors play a key role as receivers in LiDAR and other 3D systems.

Sensor design and fabrication trends

Historically, image sensors were fabricated using CCD processes. CCDs dominated consumer cameras in the 1980s and 1990s, but CMOS largely replaced CCDs in consumer applications after 2005. Some TDI sensors still rely on CCD principles, but with shrinking CCD capacity, companies have explored embedding CCD-like functions in CMOS—embedded CCD (ECCD) or TDI-CMOS—to realize TDI capability on CMOS platforms. ECCD combines CCD pixel low-noise characteristics with CMOS integration of image acquisition and quantization circuits, improving overall image quality. Few companies worldwide currently master ECCD.

CCD pixels can exhibit blooming when charge overflows from saturated wells into adjacent channels under bright conditions. Anti-blooming methods are required to prevent neighboring-channel interference. Hybrid approaches such as MCCD combine CMOS and CCD features to increase sensitivity, near-infrared response and dynamic range while integrating acquisition and quantization circuits on-chip. MCCD claims include pixel designs with high sensitivity, high-speed parallel operation exceeding 2000 fps, hybrid exposure modes and anti-dust techniques to reduce fixed-pattern noise.

Applications driving demand

Consumer electronics: multi-camera systems, under-display biometric sensors and advanced on-device imaging drive sensor demand.

Automotive: low-cost solid-state and hybrid LiDARs based on CMOS sensors are rapidly developing for ADAS and autonomous driving.

Security: CMOS sensors are primary data sources for smart city deployment and large-scale surveillance systems.

Medical imaging: CMOS sensors have largely replaced vacuum-tube detectors in X-ray and CT imaging as receivers.

Industrial control: Machine vision applications in Industry 4.0 increase demand for high-performance image sensors.

Localization and industry outlook

High-end image sensor technologies remain concentrated among suppliers in Europe, North America, Japan and South Korea. Historically, the Chinese market developed more slowly due to technology and funding barriers, but since the early 2000s several Chinese sensor design companies have emerged. Under strategies emphasizing autonomy, security and control, local development of core image sensor technologies is expected to accelerate, with the Chinese market’s suppliers increasingly becoming core industry players.

Major players

- Sony: A leading global supplier of CMOS image sensors, with a strong position in high-end markets. Sony introduced stacked CMOS technology to increase photodiode area and improve performance.

- OmniVision: Founded in 1995, a major CMOS sensor supplier with significant market share in automotive, security and mobile. (OmniVision was acquired by Will Semiconductor in 2019.)

- ON Semiconductor: Offers image sensor products for automotive, industrial and consumer markets and provides custom sensor design services.

- GalaxyCore (Geke Microelectronics): Founded in 2003, focuses on CMOS image sensors, LCD driver chips and multimedia SoCs, with products for phones and tablets.

- SmartSens: Focuses on CMOS image sensor R&D and design for surveillance, automotive, machine vision and consumer devices such as action cameras, drones and smart home cameras.

Concluding notes

CMOS image sensors have rapidly evolved and diversified. With increasing demand across security, automotive, consumer and industrial applications, sensor process innovation and product customization continue to accelerate. Key trends include back-side illumination, 3D integration, low-light optimization, high-speed capture and 3D imaging. Continued development of localized capabilities is expected to broaden the ecosystem of suppliers and solutions serving the market.