Summary

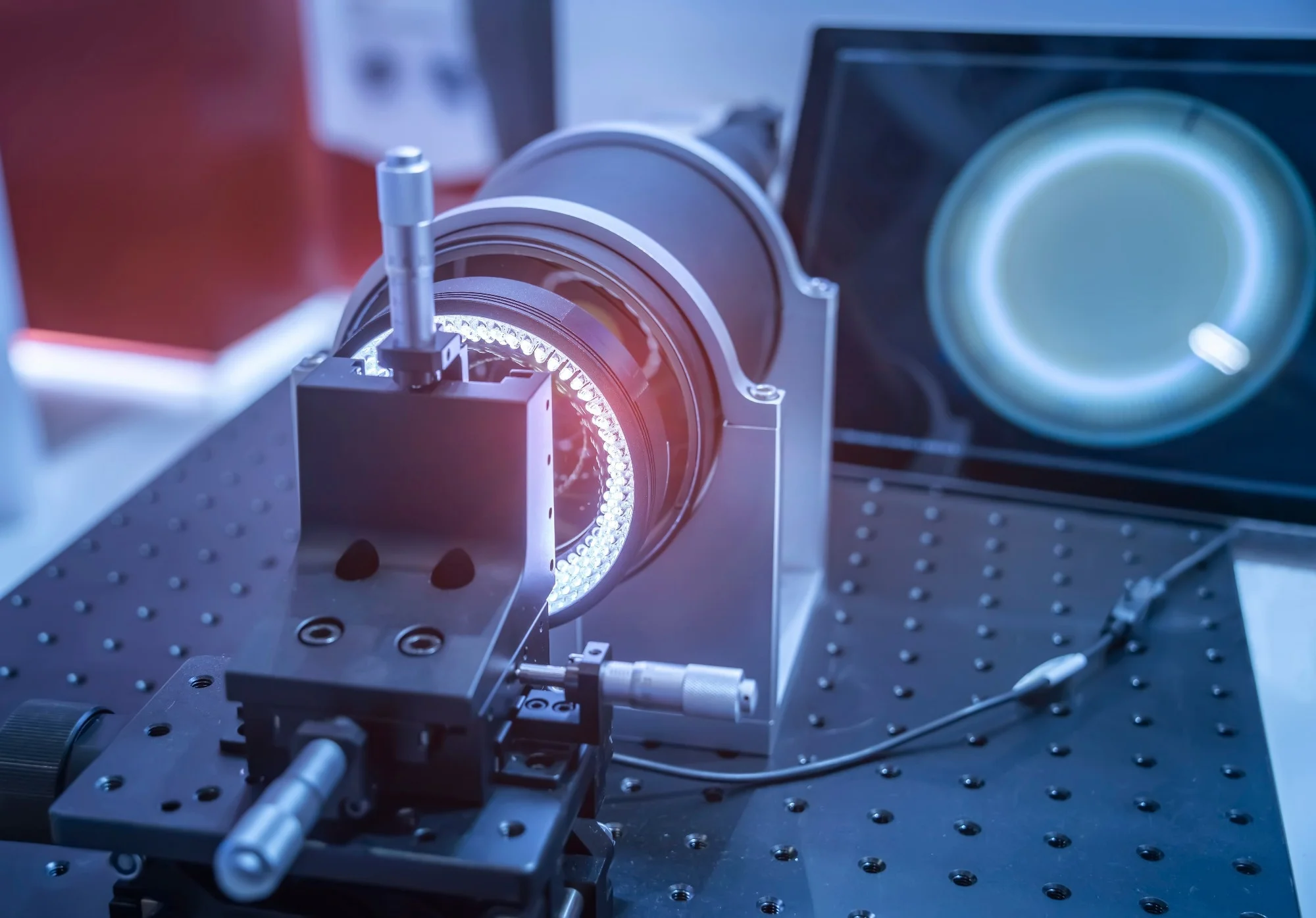

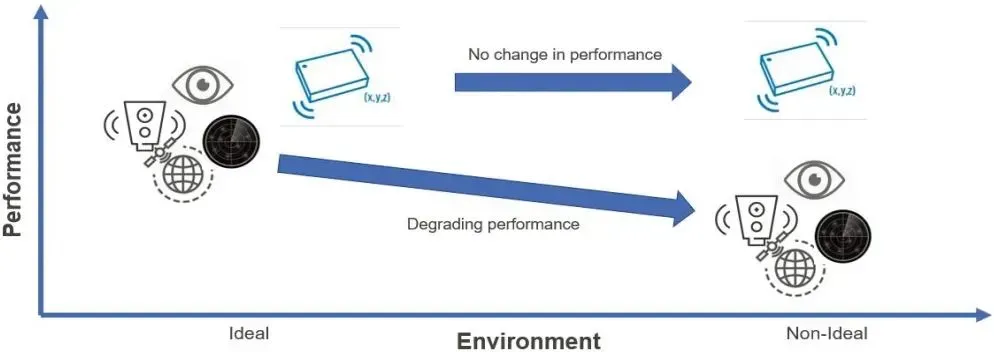

A team led by Xinming Li at South China Normal University developed a vision-based multimodal tactile sensing system. Traditional multimodal or multi-task tactile systems decouple tactile modalities by integrating multiple sensor units, which increases system complexity and introduces cross-talk between stimuli. Vision-based tactile sensors, in contrast, can sense multiple tactile modalities through their optical design, but each additional dimension of contact information typically requires its own optical design and decoupling strategy.

Approach

The team converted tactile information into visual signals and designed a deep neural network model capable of decoupling multiple contact modalities. Because visuo-tactile images carry high-density features, this approach avoids a custom decoupling design for each tactile modality and extracts multimodal tactile information more efficiently.
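The summary does not describe the network architecture. One common way to decouple several quantities from a single image is a shared encoder followed by one output head per modality, and the minimal sketch below illustrates that pattern only. The class name `MultiTaskTactileNet`, the layer sizes, the head names, and the 8×8 toy image are all hypothetical, and the weights are random and untrained, so the outputs are not meaningful predictions.

```python
import random


def make_linear(n_in, n_out, rng):
    """Create a dense layer as (weights, biases) with small random weights."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def linear(x, layer):
    """Apply a dense layer to the vector x."""
    weights, biases = layer
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, biases)]


def relu(x):
    """Element-wise rectified linear unit."""
    return [max(0.0, v) for v in x]


class MultiTaskTactileNet:
    """Hypothetical shared-encoder, multi-head model (assumed, not the authors' design)."""

    def __init__(self, n_pixels, n_hidden=16, n_classes=3, seed=0):
        rng = random.Random(seed)
        # One encoder shared by every task.
        self.encoder = make_linear(n_pixels, n_hidden, rng)
        # One small head per contact modality (names are assumptions).
        self.heads = {
            "force": make_linear(n_hidden, 1, rng),
            "angle": make_linear(n_hidden, 1, rng),
            "location": make_linear(n_hidden, 2, rng),
            "class_logits": make_linear(n_hidden, n_classes, rng),
        }

    def forward(self, image):
        """Map a 2D image (list of rows) to one output vector per head."""
        flat = [pixel for row in image for pixel in row]
        features = relu(linear(flat, self.encoder))
        return {name: linear(features, head) for name, head in self.heads.items()}


# Toy 8x8 "visuo-tactile image" with a single bright contact pixel.
image = [[0.0] * 8 for _ in range(8)]
image[3][4] = 1.0

net = MultiTaskTactileNet(n_pixels=64)
outputs = net.forward(image)
```

Every head reads the same feature vector, so adding a modality means adding a head rather than a new optical or sensor design. A practical model would use convolutional layers and be trained on labeled contact data.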

Validation Results

In system validation, the technique achieved micron-level spatial resolution comparable to human touch. In simulated grasping experiments, the neural network estimated force with a mean absolute error of 0.2 N and orientation angle with an error of 0.41°. The system also demonstrated strong performance in object localization and classification.
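For reference, a mean absolute error such as the reported force figure is the average of the absolute differences between predicted and measured values. The sketch below shows that calculation along with a wrap-aware angle error. The summary does not say how the angle error was computed, so the wrap-around handling is an assumption, and the sample numbers are invented for illustration and are not data from the study.

```python
def mean_absolute_error(predicted, actual):
    """Average of |predicted - actual| over paired samples."""
    if len(predicted) != len(actual) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(predicted)


def angular_error_deg(predicted, actual):
    """Smallest absolute difference between two angles, in degrees (0 to 180)."""
    diff = (predicted - actual) % 360.0
    return min(diff, 360.0 - diff)


def mean_angular_error_deg(predicted, actual):
    """Mean of the wrap-aware angular errors over paired samples."""
    if len(predicted) != len(actual) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(angular_error_deg(p, a) for p, a in zip(predicted, actual)) / len(predicted)


# Invented sample values, for illustration only.
force_pred_n = [1.0, 2.0, 3.0]
force_true_n = [1.5, 2.0, 2.5]
angle_pred_deg = [359.0, 10.0]
angle_true_deg = [1.0, 350.0]

force_mae_n = mean_absolute_error(force_pred_n, force_true_n)
angle_mae_deg = mean_angular_error_deg(angle_pred_deg, angle_true_deg)
```

The wrap-aware version treats 359° and 1° as 2° apart rather than 358°, which matters only when orientation is defined over a full rotation.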

Potential Applications

The system could be applied to multimodal tactile sensing tasks in fields such as biomedical devices and robotics.