Abstract

Autonomous vehicles require accurate localization and mapping in diverse driving environments. Simultaneous localization and mapping (SLAM) is a key technique for this purpose. LIDAR and camera sensors are commonly used for localization and perception. LIDAR-based SLAM methods have changed relatively little over the past decades. Compared with LIDAR-based solutions, visual SLAM offers lower cost, easier installation, and stronger scene recognition capability. Researchers are therefore exploring how cameras can replace or augment LIDAR in autonomous driving systems.

This review summarizes the state of research on visual SLAM. It first outlines a typical visual SLAM architecture. Then it reviews recent work on purely visual and vision-based SLAM variants, including visual-inertial, visual-LIDAR, and visual-LIDAR-IMU systems, and compares localization accuracy reported in prior papers on public datasets. Finally, it discusses key challenges and future directions for visual SLAM in autonomous vehicles.

1. Introduction

With advances in robotics and artificial intelligence, autonomous driving has become a major research topic in industry and academia. For safe navigation, autonomous vehicles must create accurate representations of their surroundings and estimate ego-vehicle state. Traditional localization relies on GPS or real-time kinematic (RTK) systems. However, GPS measurement errors caused by signal reflection, timing errors, and atmospheric conditions can reach tens of meters, which is unacceptable for vehicle navigation, especially in tunnels and urban canyons. RTK can correct these errors using fixed base stations, but such systems require expensive additional infrastructure.

SLAM methods are a promising solution for vehicle localization and navigation, estimating pose in real time while building a map of the environment. Based on sensor type, SLAM is typically classified as LIDAR SLAM or visual SLAM. LIDAR SLAM matured earlier and is relatively established for autonomous driving. Compared with cameras, LIDAR is less sensitive to lighting and nighttime conditions and provides wide-FOV 3D information. However, cost and long development cycles limit LIDAR adoption. Visual SLAM is information-rich and easier to install, making systems cheaper and lighter.

Modern visual SLAM can run on small PCs, embedded devices, and even smartphones. Compared with indoor or outdoor mobile robots, autonomous vehicles operate under more complex conditions, especially in urban settings, where they must cover larger areas and cope with dynamic obstacles. These factors challenge the accuracy and robustness of visual SLAM.

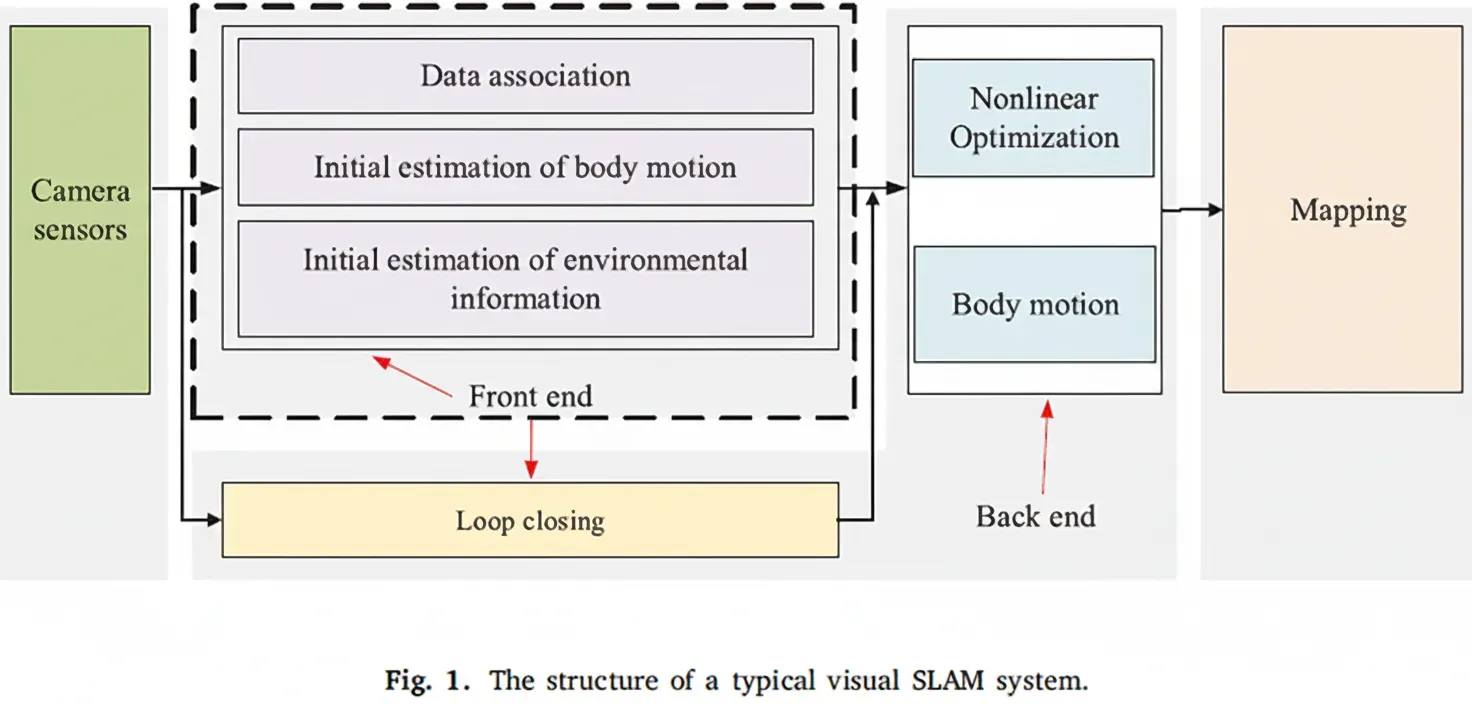

Issues such as drift accumulation, illumination changes, and fast motion can degrade estimates. Various methods have been proposed to address these problems for autonomous vehicles, including feature-based, direct, semi-direct, and point-line fused visual odometry algorithms, and state estimation approaches such as the extended Kalman filter (EKF) and graph-based optimization. Vision-based multi-sensor fusion methods have also received significant attention as a way to improve accuracy. A typical visual SLAM pipeline consists of sensor data collection (camera, IMU), a visual odometry (VO) or visual-inertial odometry (VIO) frontend, backend optimization, loop closure, and relocalization.

This review focuses on the localization accuracy of visual SLAM systems, covering pure visual SLAM, visual-inertial SLAM, visual-LIDAR SLAM, and visual-LIDAR-IMU SLAM, and compares the localization accuracy reported on public datasets. It aims to provide a detailed guide for newcomers and a reference for experienced researchers exploring future directions.

2. Visual SLAM Principles

The classic visual SLAM architecture is composed of five parts: camera sensor module, frontend, backend, loop-closure module, and mapping module. The camera sensor module collects images. The frontend tracks image features between adjacent frames for initial motion estimation and local mapping. The backend performs numerical optimization and refines the motion estimate. The loop-closure module reduces accumulated drift in large-scale environments by using image similarity to detect revisited places. The mapping module reconstructs the surrounding environment.

2.1 Camera Sensors

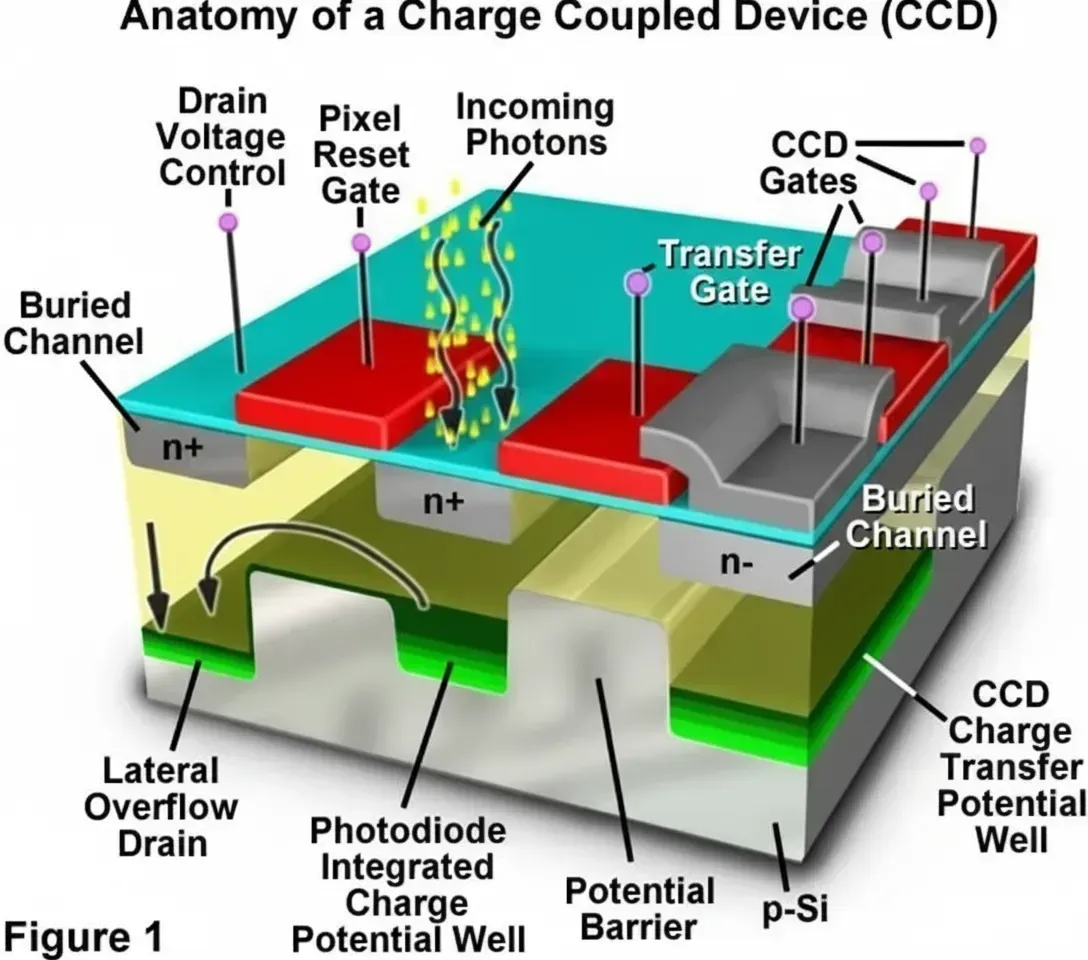

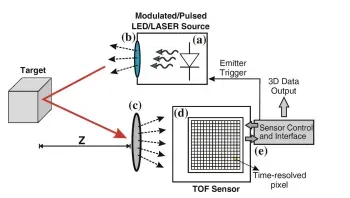

Common visual sensors include monocular, stereo, RGB-D, and event cameras. Popular sensors and manufacturers include but are not limited to:

- MYNTAI: S1030 series (stereo with IMU), D1000 series (depth), D1200 series (for smartphones).

- Stereolabs ZED: ZED camera (depth range 1.5 to 20 m).

- Intel: 200 series, 300 series, Module D400 series, D415 (active IR stereo, rolling shutter), D435 (active IR stereo, global shutter), D435i (integrated IMU).

- Microsoft: Azure Kinect (with IMU and microphone), Kinect-v1 (structured light), Kinect-v2 (TOF).

- Occipital Structure: Structure Camera for iPad.

- Samsung: 2nd and 3rd generation dynamic cameras and event-based solutions.

2.2 Frontend

The frontend, known as visual odometry (VO), uses information from adjacent frames to estimate coarse camera motion and feature directions. Accurate pose estimation with fast response requires an efficient VO. Frontends are mainly feature-based or direct/semi-direct methods. Feature-based VO is more stable and less sensitive to illumination and dynamic objects. Robust feature extraction with scale and rotation invariance improves VO reliability.

SIFT was proposed to extract and describe scale-invariant features, but it is computationally expensive. SURF reduced the computational cost of SIFT, but it is still too slow for many real-time SLAM applications. FAST corner detection emphasizes speed: it tests the pixels on a circle around each candidate point. However, FAST provides no orientation or scale information. ORB combines oriented FAST keypoints with rotated BRIEF descriptors, offering fast computation, rotation and scale invariance, and robustness to noise, which makes it suitable for embedded platforms. Comparative evaluations on KITTI indicate that SIFT is the most accurate while ORB has a lower computational cost.
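The circular pixel test used by FAST can be sketched as follows. This is a minimal, unoptimized illustration and not the reference implementation: the function name `is_fast_corner`, the threshold of 20, and the requirement of 9 contiguous pixels are assumptions chosen for the example, and the image is a plain list of rows.

```python
# Offsets (dx, dy) of the 16-pixel circle of radius 3 around a candidate pixel.
CIRCLE = [(0, -3), (1, -3), (2, -2), (3, -1), (3, 0), (3, 1), (2, 2), (1, 3),
          (0, 3), (-1, 3), (-2, 2), (-3, 1), (-3, 0), (-3, -1), (-2, -2), (-1, -3)]


def is_fast_corner(img, x, y, threshold=20, n_contiguous=9):
    """Segment test: True if at least n_contiguous neighbouring circle pixels
    are all brighter than centre + threshold or all darker than centre - threshold."""
    centre = img[y][x]
    labels = []  # +1 brighter, -1 darker, 0 similar
    for dx, dy in CIRCLE:
        value = img[y + dy][x + dx]
        if value > centre + threshold:
            labels.append(1)
        elif value < centre - threshold:
            labels.append(-1)
        else:
            labels.append(0)
    ring = labels + labels  # duplicate so that runs may wrap around the circle
    for sign in (1, -1):
        run = 0
        for label in ring:
            run = run + 1 if label == sign else 0
            if run >= n_contiguous:
                return True
    return False


def make_image(is_bright, size=9):
    """Hypothetical helper: synthetic image with bright (200) and dark (10) pixels."""
    return [[200 if is_bright(x, y) else 10 for x in range(size)] for y in range(size)]


corner_img = make_image(lambda x, y: x >= 4 and y >= 4)  # bright quadrant
edge_img = make_image(lambda x, y: x >= 4)               # straight vertical edge
print(is_fast_corner(corner_img, 4, 4), is_fast_corner(edge_img, 4, 4))
```

The test fires on the tip of the bright quadrant but not on the straight edge, which is the behaviour that makes it a corner detector. It returns only a yes/no decision per pixel, which is why ORB has to add orientation and an image pyramid on top of it.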

For embedded systems with limited compute, ORB is considered more suitable for autonomous vehicles. Other detectors, descriptors, and toolkits include DAISY, ASIFT, MROGH, Harris, LDAHash, D-BRIEF, VLFeat, FREAK, Shape Context, PCA-SIFT, and others.

2.3 Backend

The backend receives camera poses from the frontend and optimizes them to obtain globally consistent trajectories and maps. Backend methods are mainly filter-based (EKF, UKF, particle filters) and optimization-based (factor graph and nonlinear optimization). Filter-based methods use Bayesian principles to estimate states from prior and current observations. EKF-based SLAM worked well for small environments but scales poorly due to covariance storage growing quadratically with state size. Optimization-based methods represent poses and landmarks as vertices in a graph and constraints as edges, then solve a nonlinear least-squares problem to optimize states. Most modern visual SLAM systems use nonlinear optimization.
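The graph formulation can be illustrated with a deliberately tiny example: four poses on a line, three odometry edges that overestimate the travelled distance, and one loop-closure edge. Because this toy problem is linear, a single least-squares solve plays the role of one Gauss-Newton iteration; real backends are nonlinear and iterate. The function name `optimize_pose_graph_1d`, the edge format, and all measurement values are assumptions made for this sketch.

```python
import numpy as np


def optimize_pose_graph_1d(num_poses, edges, prior_weight=1e3):
    """Solve a 1D pose graph by linear least squares.

    edges: list of (i, j, measured x_j - x_i, weight), where weight is the
    square root of the information (inverse variance) of the measurement.
    """
    rows, rhs = [], []
    # A strong prior on pose 0 fixes the otherwise free global offset.
    anchor = np.zeros(num_poses)
    anchor[0] = prior_weight
    rows.append(anchor)
    rhs.append(0.0)
    for i, j, measurement, weight in edges:
        row = np.zeros(num_poses)
        row[i], row[j] = -weight, weight
        rows.append(row)
        rhs.append(weight * measurement)
    A = np.vstack(rows)
    b = np.array(rhs)
    x, *_ = np.linalg.lstsq(A, b, rcond=None)
    return x


edges = [
    (0, 1, 1.1, 1.0),  # odometry
    (1, 2, 1.0, 1.0),  # odometry
    (2, 3, 1.2, 1.0),  # odometry (dead reckoning would place pose 3 at 3.3)
    (0, 3, 3.0, 1.0),  # loop-closure constraint between pose 0 and pose 3
]
x_opt = optimize_pose_graph_1d(4, edges)
print(np.round(x_opt, 3))
```

With equal weights, the 0.3 m disagreement between odometry and the loop-closure edge is spread evenly over the four edges, so every pose along the chain is corrected rather than only the last one.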

2.4 Loop Closure

Loop closure identifies revisited places to eliminate accumulated drift. Traditional visual loop detection uses a bag-of-words (BoW) model: cluster local features via k-means to build a vocabulary, represent images as k-dimensional vectors by word occurrence, and detect similar scenes to recognize revisited areas.
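A minimal sketch of the BoW comparison step is given below. It assumes the vocabulary has already been built (the k-means step is omitted), uses toy 2D descriptors, and scores image pairs by cosine similarity; the function names and all numbers are illustrative assumptions. Practical systems typically add refinements such as TF-IDF weighting.

```python
import numpy as np


def bow_vector(descriptors, vocabulary):
    """Histogram of nearest visual words, normalised to unit length."""
    descriptors = np.asarray(descriptors, dtype=float)
    vocabulary = np.asarray(vocabulary, dtype=float)
    # Squared distance from every descriptor (n, d) to every word (k, d).
    dist2 = ((descriptors[:, None, :] - vocabulary[None, :, :]) ** 2).sum(axis=2)
    words = dist2.argmin(axis=1)
    hist = np.bincount(words, minlength=len(vocabulary)).astype(float)
    norm = np.linalg.norm(hist)
    return hist / norm if norm > 0 else hist


def bow_similarity(vec_a, vec_b):
    """Cosine similarity of two unit-length BoW vectors."""
    return float(vec_a @ vec_b)


# Toy vocabulary with k = 3 words (assumed to come from k-means clustering).
vocabulary = [[0.0, 0.0], [10.0, 0.0], [0.0, 10.0]]
image_a = [[0.1, 0.2], [9.8, 0.1], [10.2, -0.3], [0.0, -0.1]]
image_b = [[-0.2, 0.1], [10.1, 0.2], [9.9, 0.0]]   # revisit of the same place
image_c = [[0.1, 9.9], [0.2, 10.3], [-0.1, 10.0]]  # a different place

vec_a, vec_b, vec_c = (bow_vector(d, vocabulary) for d in (image_a, image_b, image_c))
score_revisit = bow_similarity(vec_a, vec_b)
score_other = bow_similarity(vec_a, vec_c)
print(round(score_revisit, 3), round(score_other, 3))
```

A loop candidate would be accepted when the score exceeds a threshold and, in most systems, only after a subsequent geometric verification step.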

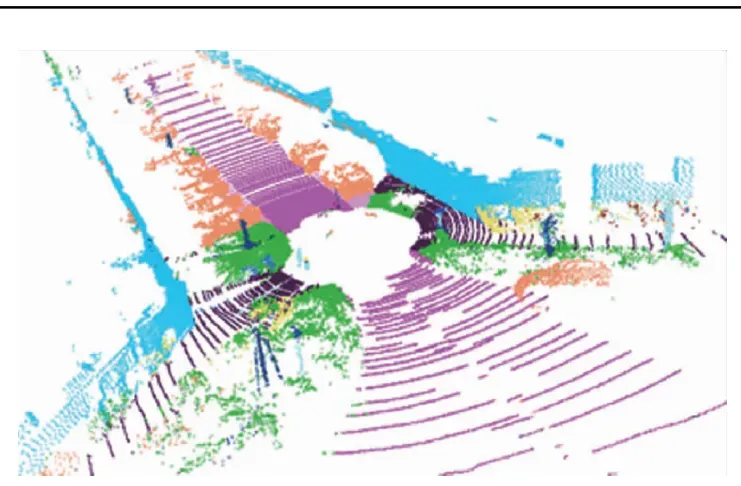

2.5 Mapping

Mapping builds environment representations for navigation and obstacle avoidance. Maps can be metric or topological. Metric maps describe relative positions, while topological maps capture connectivity. Metric maps can be sparse or dense. Sparse maps contain limited scene information suitable for localization; dense maps contain richer information helpful for navigation and obstacle avoidance.

3. State of the Art

3.1 Visual SLAM

Pure visual SLAM is divided into feature-based and direct methods. Feature-based methods extract and match keypoints to estimate motion and build maps, but descriptor computation is time-consuming. Direct methods avoid keypoints and descriptors by operating directly on intensities.

Based on sensor type, visual SLAM can be monocular, stereo, RGB-D, or event-camera-based. Based on map density, methods are sparse, semi-dense, or dense.

3.1.1 Feature-based Methods

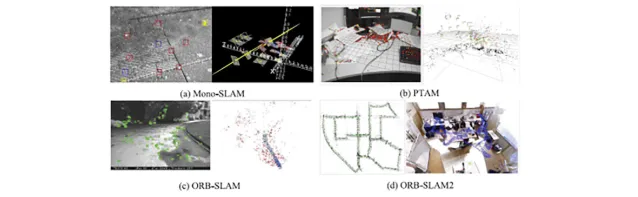

Mono-SLAM (2007) was the first real-time monocular SLAM system and used an EKF to track sparse features. PTAM introduced parallel tracking and mapping, separating the frontend from the backend and using nonlinear optimization and keyframe mechanisms. ORB-SLAM (2015) is a complete keyframe-based monocular SLAM system that runs tracking, local mapping, and loop-closing threads and uses ORB features for extraction, matching, mapping, and loop detection. ORB-SLAM2 extended the framework to support stereo and RGB-D cameras and provided real-time map reuse, loop detection, and relocalization.

3.1.2 Direct Methods

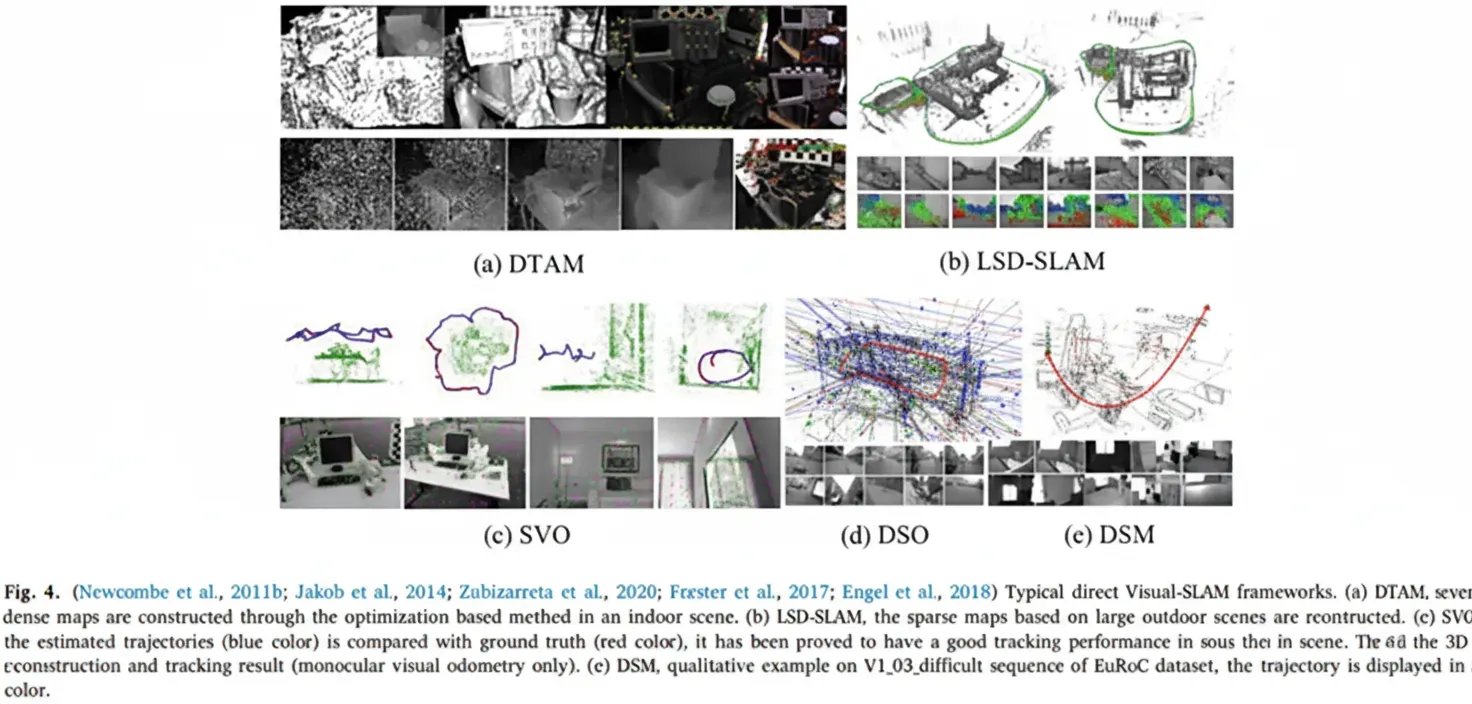

DTAM (2011) introduced a direct monocular SLAM framework that uses an inverse-depth representation to estimate depth and builds dense maps via optimization. LSD-SLAM applied direct, pixel-wise alignment to semi-dense monocular mapping. SVO is a semi-direct method that uses sparse direct tracking to achieve low complexity and strong real-time performance. DSO is a direct sparse method that offers high accuracy at speed, but it functions mainly as visual odometry without global backend optimization or loop closure, so drift accumulates. Direct methods are fast and perform well in weak-feature conditions but are sensitive to illumination changes because they assume photometric invariance. DSM proposed a direct sparse mapping system based on photometric bundle adjustment, yielding a complete monocular SLAM system built on direct photometric optimization.
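The sensitivity to illumination can be made concrete with a small sketch of the photometric residual. The example below compares a reference patch with the same patch under a simulated exposure change, first assuming brightness constancy and then after fitting an affine brightness model (gain `a`, offset `b`), which is one common mitigation. The function names and patch values are assumptions for illustration and do not reproduce any specific system.

```python
import numpy as np


def photometric_residuals(ref_patch, cur_patch, a=1.0, b=0.0):
    """Per-pixel residual r = I_cur - (a * I_ref + b)."""
    ref = np.asarray(ref_patch, dtype=float)
    cur = np.asarray(cur_patch, dtype=float)
    return cur - (a * ref + b)


def fit_affine_brightness(ref_patch, cur_patch):
    """Least-squares gain a and offset b such that I_cur ~ a * I_ref + b."""
    ref = np.asarray(ref_patch, dtype=float).ravel()
    cur = np.asarray(cur_patch, dtype=float).ravel()
    A = np.stack([ref, np.ones_like(ref)], axis=1)
    (a, b), *_ = np.linalg.lstsq(A, cur, rcond=None)
    return float(a), float(b)


# Same 4x4 patch seen twice; the second view has a simulated exposure change.
ref = np.arange(16, dtype=float).reshape(4, 4) * 10.0
cur = 1.2 * ref + 15.0

raw_cost = float(np.sum(photometric_residuals(ref, cur) ** 2))
a_hat, b_hat = fit_affine_brightness(ref, cur)
compensated_cost = float(np.sum(photometric_residuals(ref, cur, a_hat, b_hat) ** 2))
print(raw_cost, compensated_cost)
```

Under the brightness-constancy assumption the cost is large even though the patch is perfectly aligned, so an optimizer would wrongly adjust the pose to explain it; with the affine model the cost drops to numerical zero.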

The visual SLAM field includes sparse, semi-dense, and dense approaches and many variations with trade-offs between accuracy, robustness, and computational cost.

3.2 Visual-Inertial SLAM

IMUs help when cameras face challenging conditions like low texture or lighting changes, while vision corrects IMU drift. The combination, commonly called visual-inertial odometry (VIO), is promising for autonomous driving. VI-SLAM methods are filter-based or optimization-based.
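To give a sense of why inertial measurements need correction from vision, the sketch below double-integrates a constant accelerometer bias with no other error source. The bias value of 0.05 m/s^2, the 100 Hz rate, and the function name are assumptions chosen for illustration; real IMU error models also include noise, gyroscope bias, and gravity misalignment.

```python
def dead_reckon_position_error(accel_bias, duration, dt=0.01):
    """Position error (m) after integrating a constant accelerometer bias
    (m/s^2) twice over `duration` seconds with simple Euler steps."""
    velocity_error = 0.0
    position_error = 0.0
    steps = int(round(duration / dt))
    for _ in range(steps):
        velocity_error += accel_bias * dt
        position_error += velocity_error * dt
    return position_error


error_10s = dead_reckon_position_error(0.05, 10.0)
error_20s = dead_reckon_position_error(0.05, 20.0)
print(round(error_10s, 2), round(error_20s, 2))
```

The result follows the closed form 0.5 * bias * t^2 closely, so doubling the time without a visual correction roughly quadruples the position error.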

3.2.1 Filter-based Methods

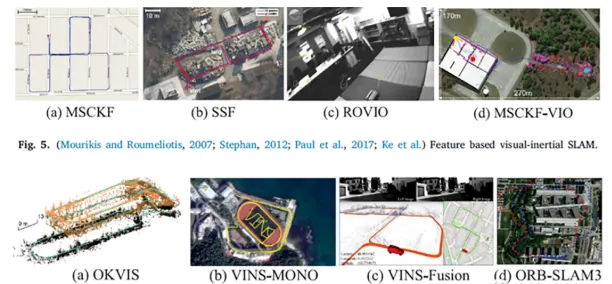

MSCKF, the multi-state constraint Kalman filter, is a filter-based approach to visual-inertial SLAM that improves robustness to aggressive motion and texture loss. Later work addressed filter consistency and improved performance and efficiency. Other filter-based works, including ROVIO and MSCKF variants, remain notable for their real-time performance.

3.2.2 Optimization-based Methods

OKVIS is a classic optimization-based VI-SLAM that integrates IMU measurements with visual reprojection errors. VINS-Mono is a widely used monocular VI-SLAM employing a sliding-window nonlinear optimization backend and an initialization strategy that first initializes the pure visual subsystem and then estimates IMU biases, gravity, scale, and velocity. VINS-Mono demonstrated accuracy comparable to OKVIS and robust initialization. VINS-Fusion extended this to stereo and GPS integration for improved outdoor performance.

ORB-SLAM3 is a tightly integrated feature-based VI-SLAM system using MAP-based initialization and multi-map capability with improved place recognition. It supports monocular, stereo, and RGB-D configurations and can operate in visual, visual-inertial, and multi-map modes. ORB-SLAM3 can create new maps and seamlessly merge them when revisiting areas, improving long-term robustness.

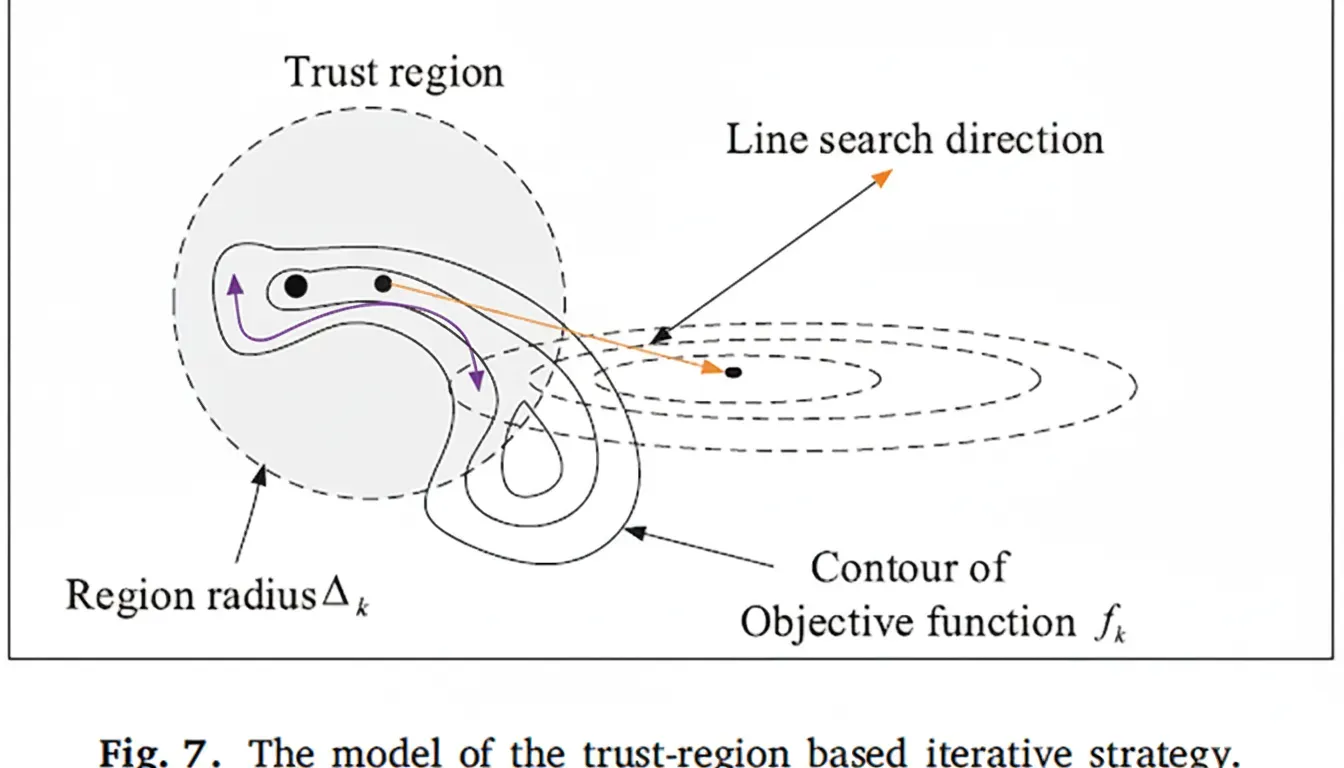

3.3 Testing and Evaluation

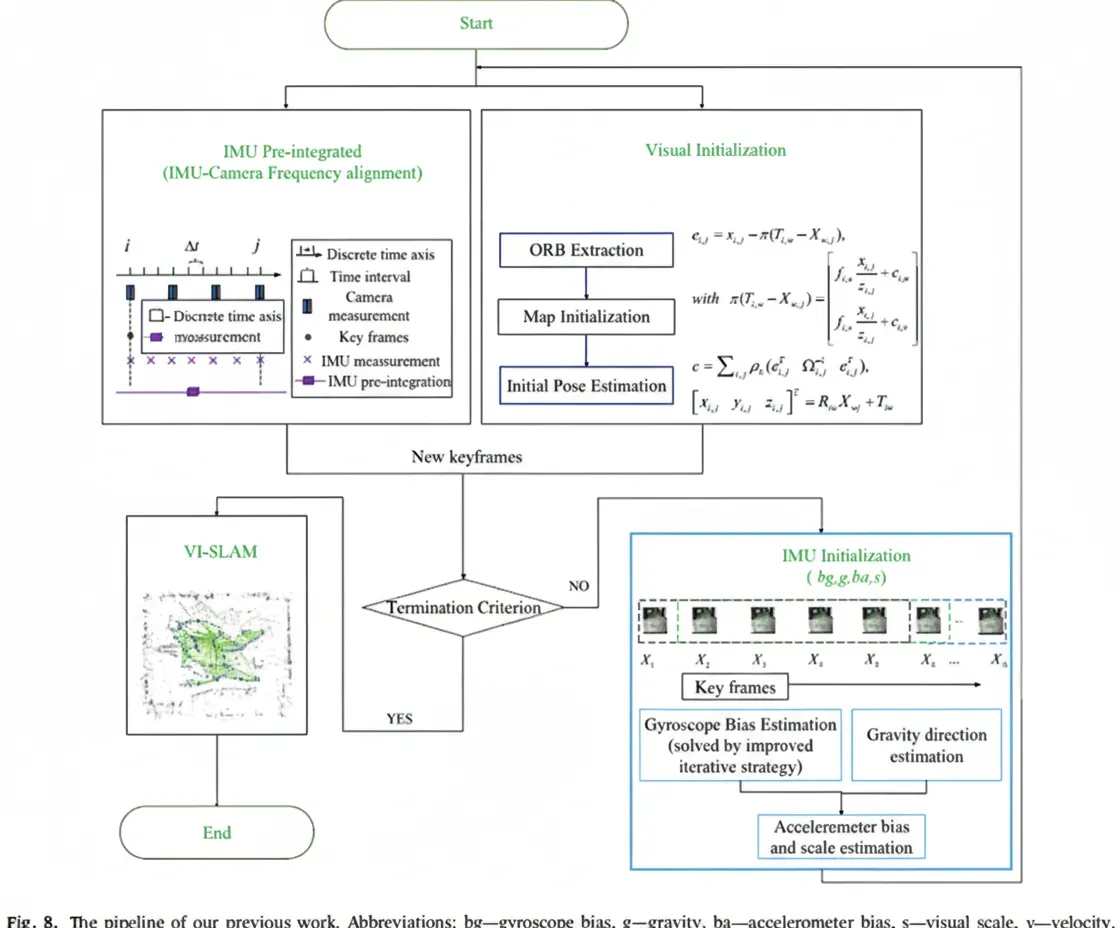

To compare localization performance, typical algorithms were tested on the same onboard computer configuration (Intel Core i7-9700 CPU, 16 GB RAM, Ubuntu 18.04 + ROS Melodic) and compared with previous work. A prior paper proposed an improved trust-region iteration strategy integrated into a VI-ORB-SLAM framework for faster initialization and higher localization accuracy. The trust-region model blends steepest-descent and Gauss-Newton steps: at each iteration it solves a minimization subproblem restricted to the region in which the local model is considered valid.
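The blending of steepest-descent and Gauss-Newton steps can be sketched with the standard Powell dogleg step for a least-squares problem. This is the textbook form, shown only to illustrate the idea; it is not the improved strategy of the cited paper, and the function name `dogleg_step` and the test values are assumptions.

```python
import numpy as np


def dogleg_step(J, r, radius):
    """Dogleg step for min 0.5 * ||r + J h||^2 subject to ||h|| <= radius."""
    g = J.T @ r                       # gradient of the cost at h = 0
    h_gn = np.linalg.solve(J.T @ J, -g)
    if np.linalg.norm(h_gn) <= radius:
        return h_gn                   # Gauss-Newton step fits inside the region
    Jg = J @ g
    alpha = (g @ g) / (Jg @ Jg)       # exact line search along -g
    h_sd = -alpha * g
    sd_norm = np.linalg.norm(h_sd)
    if sd_norm >= radius:
        return (radius / sd_norm) * h_sd  # truncated steepest-descent step
    # Otherwise move from h_sd towards h_gn until the region boundary is hit.
    d = h_gn - h_sd
    a = d @ d
    b = 2.0 * (h_sd @ d)
    c = h_sd @ h_sd - radius ** 2
    beta = (-b + np.sqrt(b * b - 4.0 * a * c)) / (2.0 * a)
    return h_sd + beta * d


J = np.array([[1.0, 0.0], [0.0, 3.0]])
r = np.array([-4.0, -3.0])
step_large = dogleg_step(J, r, radius=10.0)  # pure Gauss-Newton
step_mid = dogleg_step(J, r, radius=2.0)     # blend of both directions
step_small = dogleg_step(J, r, radius=1.0)   # scaled steepest descent
print(step_large, np.linalg.norm(step_mid), np.linalg.norm(step_small))
```

In a full solver the radius would be enlarged or shrunk after each iteration depending on how well the predicted cost reduction matches the actual one.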

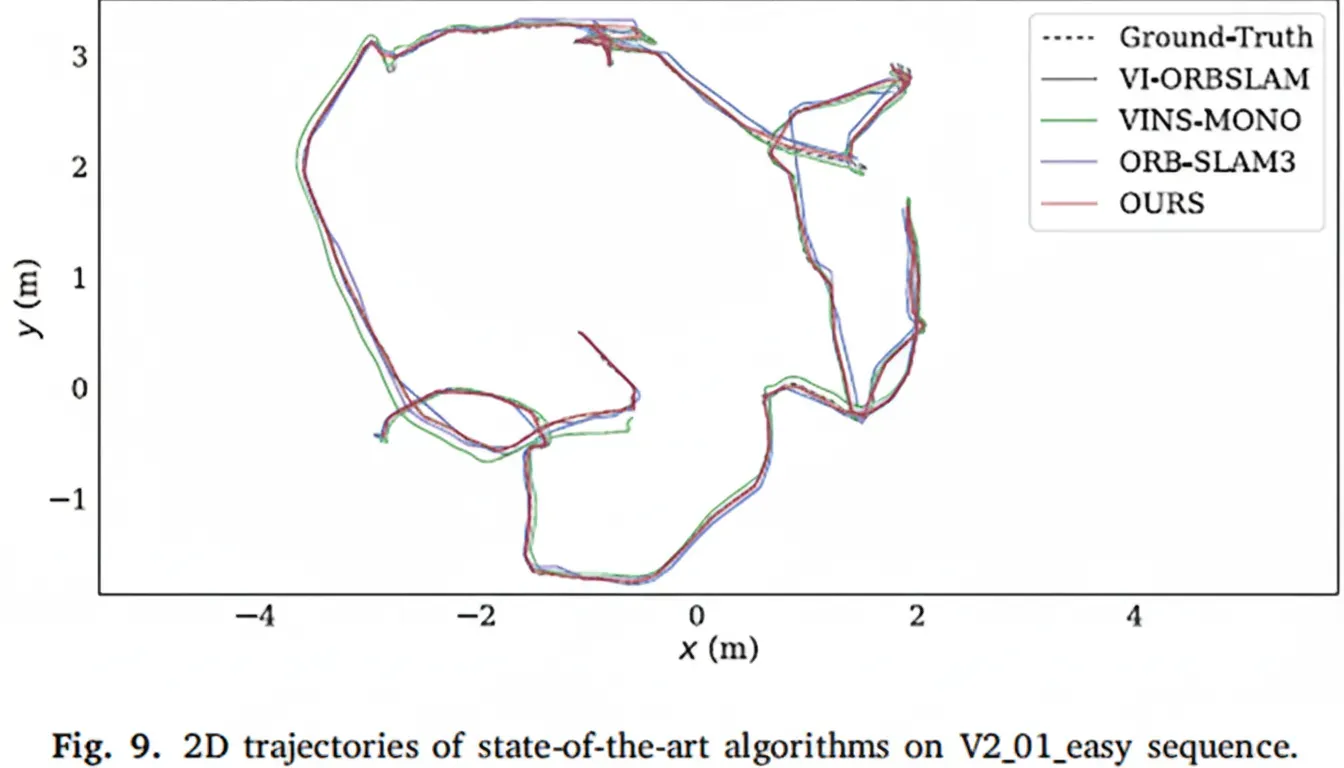

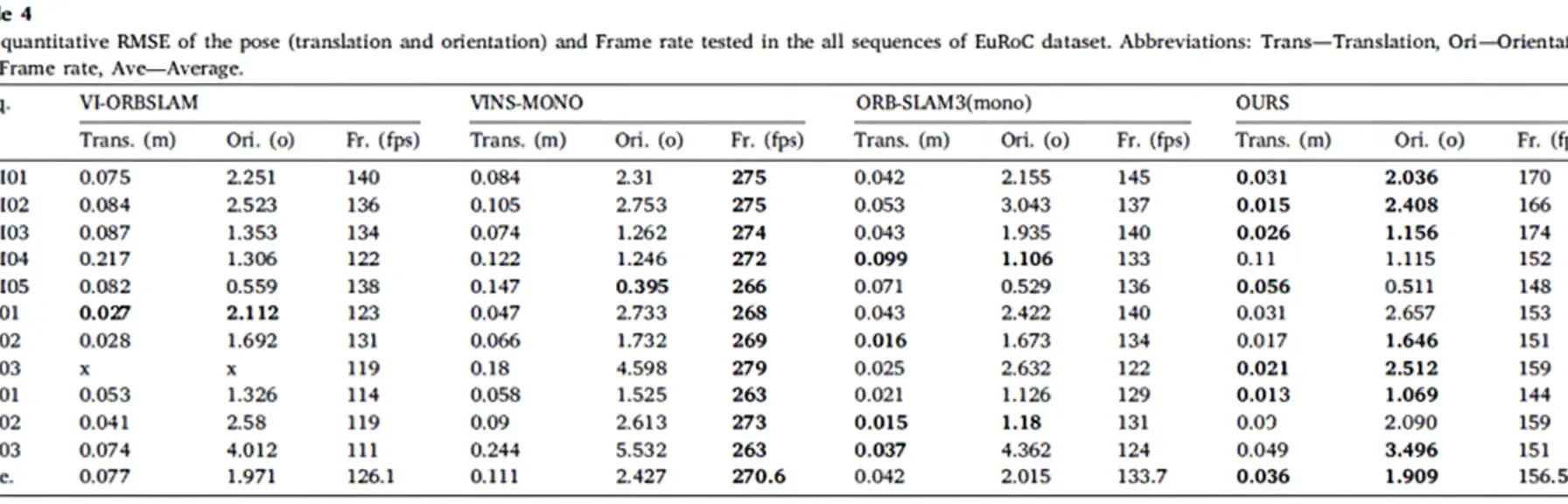

Initialization estimates scale, velocity, gravity, and IMU biases. A robust visual initialization is followed by IMU preintegration, which aligns the IMU and camera rates and is used to generate keyframes. An improved trust-region strategy then refines gyroscope bias and gravity direction, followed by accelerometer bias and visual scale estimation. Experiments on EuRoC V2_01_easy report trajectories, position and orientation comparisons, and quantitative RMSE and frame-rate results across sequences. The improved initialization led to better position and orientation accuracy on many sequences.
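For readers unfamiliar with the metric, the translational RMSE used in such comparisons can be computed as sketched below. The sketch assumes the estimated and ground-truth positions are already time-associated and expressed in the same frame; evaluation tools normally perform that association and alignment first. The function name `ate_rmse` and the sample trajectories are assumptions for illustration.

```python
import math


def ate_rmse(estimated, ground_truth):
    """Root-mean-square translational error between two position sequences."""
    if len(estimated) != len(ground_truth) or not estimated:
        raise ValueError("trajectories must be non-empty and of equal length")
    squared_errors = [
        sum((e - g) ** 2 for e, g in zip(est_pos, gt_pos))
        for est_pos, gt_pos in zip(estimated, ground_truth)
    ]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


ground_truth = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
estimated = [(0.0, 0.0, 0.0), (1.0, 0.3, 0.0), (2.0, 0.0, 0.4)]
rmse = ate_rmse(estimated, ground_truth)
print(round(rmse, 4))
```

Because the errors are squared before averaging, a few large deviations dominate the score, which is worth keeping in mind when comparing RMSE values across sequences of different difficulty.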

3.4 Visual-LIDAR SLAM

Vision and LIDAR have complementary strengths: vision provides rich texture and scene recognition, while LIDAR offers reliable, light-independent distance measurement. Fusing both can provide more robust perception and state estimation. Typical pipeline stages include data processing, estimation, and global mapping. Approaches vary by the relative roles of vision and LIDAR: vision-guided, LIDAR-guided, and mutual calibration methods.

3.4.1 Vision-Guided Methods

Vision-guided methods use LIDAR to provide depth for visual features. LIMO projects LIDAR point clouds onto images to estimate feature scale and constructs optimization constraints using LIDAR-derived scales and camera-based scales. Other work interpolates missing depth from sparse LIDAR aligned to images using Gaussian regression, enabling LIDAR-based initialization of visual features similarly to RGB-D approaches. Some systems use visual pose estimates to annotate point clouds during mapping, or use 1D LIDAR to correct scale drift in monocular SLAM on low-cost hardware. Methods have also addressed occlusion and co-planarity issues in fused mapping.
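The projection step that associates LIDAR depth with image pixels can be sketched with a pinhole camera model. The intrinsic matrix `K`, the extrinsic transform `T_cam_lidar`, the image size, and the function name are all assumptions for illustration. For simplicity the example uses an identity extrinsic, i.e. it pretends the LIDAR frame coincides with the camera frame (z forward), which is not the case on a real vehicle.

```python
import numpy as np


def project_lidar_to_image(points_lidar, T_cam_lidar, K, width, height):
    """Project 3D LIDAR points into the image.

    Returns pixel coordinates (m, 2) and depths (m,) for points that lie in
    front of the camera and inside the image bounds.
    """
    points_lidar = np.asarray(points_lidar, dtype=float)
    homogeneous = np.hstack([points_lidar, np.ones((len(points_lidar), 1))])
    points_cam = (T_cam_lidar @ homogeneous.T).T[:, :3]
    points_cam = points_cam[points_cam[:, 2] > 0]      # drop points behind the camera
    uvw = (K @ points_cam.T).T
    uv = uvw[:, :2] / uvw[:, 2:3]                      # perspective division
    inside = ((uv[:, 0] >= 0) & (uv[:, 0] < width) &
              (uv[:, 1] >= 0) & (uv[:, 1] < height))
    return uv[inside], points_cam[inside, 2]


K = np.array([[500.0, 0.0, 320.0],
              [0.0, 500.0, 240.0],
              [0.0, 0.0, 1.0]])
T_cam_lidar = np.eye(4)  # assumed: LIDAR and camera frames coincide
points = [[0.0, 0.0, 10.0],    # straight ahead
          [1.0, 0.0, 5.0],     # to the right
          [0.0, 0.0, -2.0],    # behind the camera
          [100.0, 0.0, 1.0]]   # projects far outside the image
pixels, depths = project_lidar_to_image(points, T_cam_lidar, K, 640, 480)
print(pixels, depths)
```

A visual feature detected near one of the returned pixel locations can then take its depth from the associated LIDAR points, for example by interpolating over the nearest projections.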

3.4.2 LIDAR-Guided Methods

LIDAR-guided methods use visual information to improve loop detection or perform joint optimization of LIDAR and visual residuals. Examples include CNN-based feature extraction for loop detection, combining scan matching with ORB-based loop detection, and using visual BoW for loop detection in 3D LIDAR SLAM. ICP can also be combined with visual pose estimation to improve alignment.

3.4.3 Mutual Calibration Methods

Mutual calibration approaches combine the two modalities to correct each other. VLOAM uses visual odometry estimates to correct LIDAR motion distortion and refines visual poses using corrected point clouds. Parallel SLAM methods optimize residuals from both LIDAR and visual modalities in a shared backend. Other work constructs combined cost functions with LIDAR and visual constraints and builds 2.5D maps to speed loop detection. Visual-LIDAR fusion remains less mature than visual-inertial fusion and requires further research.

3.5 Visual-LIDAR-IMU SLAM

Multi-sensor fusion including visual-LIDAR-IMU is considered suitable for higher levels of autonomous driving and has attracted attention. LIDAR can provide extensive environmental detail but may fail in structureless scenes such as long corridors or open squares. Visual methods perform well in textured scenes and facilitate re-identification but are sensitive to lighting, fast motion, and initialization. IMUs help reduce motion distortion and provide short-term pose estimates when visual features are scarce, aiding scale recovery.

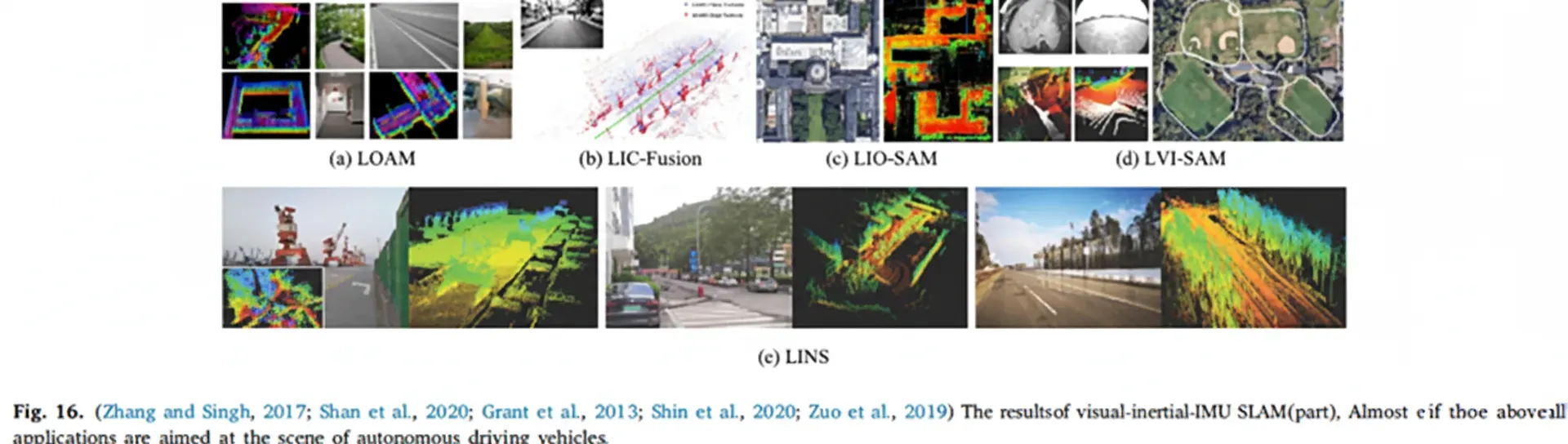

Research on tightly integrated visual-LIDAR-IMU fusion is limited. Researchers often fuse visual-IMU and LIDAR-IMU subsystems into a combined LIDAR-visual-inertial system (LVIS). LIDAR-IMU methods are categorized as loosely coupled or tightly coupled. LOAM and LeGO-LOAM are classic loosely coupled approaches in which the IMU is not used in the optimization. Tightly coupled LIDAR-inertial odometry (LIO) systems, still under development, generally improve accuracy and robustness. Examples include LIO-Mapping, which uses VINS-Mono-style optimization to minimize IMU and LIDAR residuals; LIC fusion, which merges LIDAR features with sparse visual features; and LIO-SAM, which introduces sliding-window optimization with factor graphs for joint IMU and LIDAR constraints. LINS, designed for ground vehicles, uses an error-state Kalman filter.

Some LIDAR-visual-inertial odometry (LVIO) systems are tightly coupled and use coarse-to-fine estimation: they start with an IMU prediction and then refine it with VIO and LIO. LVIO algorithms are reported to rank among the most accurate on KITTI, although some of the reported implementations are not open-source. Recently, LVI-SAM presented a tightly coupled visual-LIDAR-IMU scheme that improves real-time performance by treating VIO and LIO as two linked but independently operable subsystems, which preserves robustness when one subsystem temporarily fails.

4. Discussion

Visual SLAM has achieved significant progress for vehicle localization and mapping, but existing technologies are not yet mature enough to address all real-world challenges. Visual-based localization and mapping remain early-stage for complex urban environments. Real-world deployment is a systems problem since SLAM is only one component among control, object detection, planning, and decision modules.

4.1 Real-time Performance

Autonomous driving requires fast SLAM response. A 10 Hz processing rate is considered a minimum for urban driving. Real-time performance can be improved with algorithmic optimizations and higher-performance hardware such as GPUs. System accuracy and robustness must consider environmental dynamics like scene changes, moving obstacles, and illumination variations. In specific scenarios such as automated valet parking, cameras are often used for obstacle detection, avoidance, and lane keeping.

4.2 Localization

Achieving centimeter-level positioning on urban roads remains a challenge. Traditional GPS receivers with meter-level accuracy are insufficient, and expensive differential GPS solutions introduce infrastructure overhead. Vision-based approaches, visual-inertial fusion, visual-LIDAR fusion, and visual-LIDAR-IMU fusion have been studied for precise relative localization. IMU errors accumulate quickly when integrated without correction; for example, the position error caused by an uncorrected constant accelerometer bias grows quadratically with time. Some visual-LIDAR setups lack dead-reckoning sensors such as encoders and IMUs, which reduces localization robustness. Visual-LIDAR-IMU fusion is seen as a promising approach for high-precision vehicle localization as LIDAR costs decrease.

4.3 Testing

Real-world implementations remain limited due to regulations and lack of test vehicles. Most recent visual SLAM research evaluates algorithms on public datasets such as KITTI, EuRoC, and TUM, which are valuable for validation but may not represent diverse real-world locations. Dataset evaluations may not fully indicate performance in other cities or countries. High computational requirements of visual SLAM also complicate online deployment, requiring dedicated parallel hardware. While commercial automotive computing platforms are expensive, recent high-performance, low-cost embedded devices and optimized VO frontends can facilitate practical implementations.

4.4 Future Trends

Given the computational burden of SLAM modules, multi-agent visual SLAM appears promising. Offloading backend optimization and mapping to remote servers via 5G/6G could allow on-vehicle hardware to handle frontend tasks while cloud infrastructure performs heavy optimization, accelerating practical adoption in autonomous vehicles.

5. Conclusion

Recent research has contributed substantially to addressing visual SLAM challenges. This review surveyed various visual and vision-based SLAM methods and their applications to autonomous driving. Although visual SLAM applications in vehicles are not yet mature, they remain an active area of research. Public datasets enable reproducible validation, encouraging new algorithm development, but real-world urban deployments are still limited. Dataset results may not fully indicate local real-world performance, so practical visual SLAM applications in autonomous vehicles require further work.

Trends in visual SLAM favor lightweight designs and multi-agent cooperation, encouraging deployment on low-power hardware such as embedded devices. Multi-sensor fusion is central to applying visual SLAM in vehicles. Despite outstanding challenges, increasing public acceptance of autonomous driving and emerging high-performance mobile computing hardware are likely to spur practical visual SLAM adoption in the near future.