Overview

Advances in machine vision applications (such as inspection of flat-panel displays, printed circuit boards, and semiconductors, as well as warehouse logistics, intelligent transport systems, crop monitoring, and digital pathology) are driving new requirements for industrial cameras and image sensors. The primary challenge is balancing higher resolution and speed with lower power consumption and reduced data bandwidth. In some cases, miniaturization is also a factor.

Externally, a camera is a housing with mounting features and optical elements. While external design matters to users, the major technical challenges that affect performance, functionality, and power lie inside. Hardware such as image sensors and processors, together with software, plays a central role.

Based on current knowledge, what changes can we expect over the next decade in cameras, processors, image sensors, and image processing? How will these changes affect quality of life?

Image Performance

Just as one vehicle size does not fit all drivers, one image sensor size does not fit all applications.

Larger, more powerful image sensors are attractive for certain high-performance vision categories. In those cases, sensor size, power, and cost are less important than performance. Flat-panel display inspection is a good example. Some display manufacturers now look for submicron defects in premium displays, a resolution fine enough to detect bacteria on a panel.

Ground- and space-based astronomy require even higher performance. Researchers at SLAC National Accelerator Laboratory demonstrated a 3-gigapixel imaging solution assembled from multiple smaller sensor arrays, producing images of such resolution that, as SLAC noted, "you could see a golf ball from roughly 15 miles away." This achievement illustrates the virtually unlimited possibilities in research laboratories.

Members of the LSST camera team prepare to mount the L3 optics onto the camera focal plane, a circular CCD sensor array capable of capturing 3.2-gigapixel images.

However, regardless of resolution, we are reaching practical limits in 2D imaging. Advanced optical inspection systems do not always need higher speed or more raw data; they need more information, and only information that is useful.

Seeking More Information

Several trends are increasing the amount of information required per pixel.

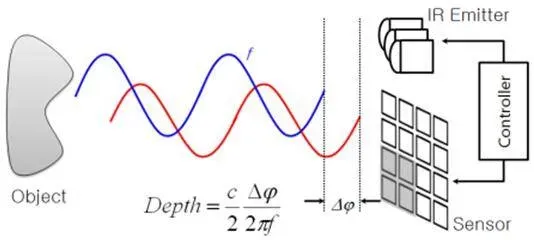

3D Image Capture

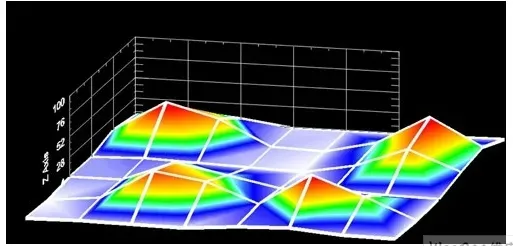

3D capture adds an extra dimension, providing finer detail and improved detection capabilities. Applications such as battery inspection and TV, laptop, and phone screen manufacturing are pushing optical inspection sensors to collect additional information. At submicron resolutions, finding a 2D defect may be insufficient; systems are forced to calculate defect height and possibly shape to determine whether the defect is removable dust, hard particles, or pins.

Application developers are leveraging color, angle, and alternative imaging modalities (for example, 3D or polarization, which add another dimension of light information) to satisfy customer needs. Camera manufacturers are responding by providing tools targeted at these requirements.

Hyperspectral Imaging

Hyperspectral imaging is another rapidly growing trend. Like many remote sensing techniques, it exploits the fact that all objects have wavelength-dependent spectral fingerprints determined by electronic structure (for the visible spectrum) and molecular structure (for SWIR/MWIR spectra). The way an object absorbs and reflects visible and nonvisible light reveals details that ordinary color imaging cannot. Seeing chemical properties within materials has broad applications in mineral, oil, and gas exploration, astronomy, and monitoring of floodplains and wetlands. Hyperspectral resolution, separation, and speed are also useful in wafer inspection, metrology, and health sciences.

In these markets, sensor and camera manufacturers are pushing the limits of speed, cost, resolution, and functionality. Technologies are extending across spectral ranges from X-ray to high-precision thermal imaging, enabling more applications. Faster and more accurate detection supports manufacturers in achieving tasks such as 100% inspection for food contamination, measuring contents, and screening for foodborne bacteria.

Smarter Inspection

Image processing is inherently data intensive. High-resolution imagers running at very high frame rates can produce continuous data exceeding 16 GB/s. Applications must capture, analyze, and process these streams. Growing demand for artificial intelligence (AI) pushes processing requirements higher still.

Challenges

Consider an AI-based camera used for traffic light enforcement. These applications typically use a 10-megapixel sensor running at about 60 frames per second, producing a continuous data stream of roughly 600 MB/s.

Typical neural network processing today is often based on small image frames, for example 224x224 pixels, which is about 150 kB per frame for a 3-channel 1-byte-per-channel image. A modern PC CPU running object-recognition neural networks might operate at around 20 frames per second, yielding about 3 MB/s of processed data throughput. This is 200 times lower than the raw throughput from the traffic camera, which severely limits the usable input stream.
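The gap described above can be checked with a few lines of arithmetic. The sketch below assumes 1 byte per pixel for the raw sensor stream, which is the figure that makes 10 megapixels at 60 frames per second come to about 600 MB/s. The function and variable names are illustrative only.

```python
def stream_rate_mb_per_s(pixels, bytes_per_pixel, frames_per_second):
    """Return a continuous data rate in megabytes per second (1 MB = 1e6 bytes)."""
    return pixels * bytes_per_pixel * frames_per_second / 1e6

# Raw traffic-camera stream: 10-megapixel sensor at 60 fps.
# Assumption: 1 byte per pixel (for example, 8-bit raw output).
camera_rate = stream_rate_mb_per_s(10_000_000, 1, 60)

# Neural-network input: 224x224 frames, 3 channels, 1 byte per channel, 20 fps.
nn_frame_bytes = 224 * 224 * 3
nn_rate = stream_rate_mb_per_s(224 * 224, 3, 20)

print(f"camera stream: {camera_rate:.0f} MB/s")
print(f"NN frame size: {nn_frame_bytes / 1e3:.1f} kB")
print(f"NN throughput: {nn_rate:.2f} MB/s")
print(f"ratio:         {camera_rate / nn_rate:.0f}x")
```

Under these assumptions the ratio comes out just under 200, consistent with the rounded figures in the text.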

Intelligent transport solutions can combine video and thermal imaging with AI, video analysis, radar, and V2X integrated into traffic management and analytics software to help cities run safely and smoothly.

It is important to view output streams as information rather than raw data. From a 600 MB/s image stream, the system may perform tracking, reading, and processing to extract a few numeric results per scene. For example, the output might be a license plate string or, in a classification task, a boolean "yes or no," reducing a massive data stream to a single bit.

While this is challenging, achieving it is attractive for downstream data capture, processing, and storage. To address input data stream constraints, a combination of sensor engineering, advanced AI processors, and integrated algorithm solutions is needed.

Powerful (and Power-Hungry) Processors

Most cameras use conventional semiconductors such as central processing units (CPUs) or field-programmable gate arrays (FPGAs). Higher-performance units may use more capable FPGAs or graphics processing units (GPUs). To date, these processor types have largely followed Moore's Law, but past trends do not guarantee future progress.

Moreover, GPUs, CPUs, and FPGAs consume significant power and generate substantial heat. To some extent this is manageable through good design, but alternative processor architectures are required to address long-term challenges.

Quantum computing and integrated photonic/electronic processors are potential future options to meet the most demanding performance-per-power requirements for image processing. Before those technologies become commercially viable, newer architectures such as in-memory computing or integrated domain-specific accelerators will continue to extend possible performance boundaries.

When selecting processors, manufacturers should consider trillions of operations per second per watt (TOPS/W) as a useful figure of merit for raw power efficiency. However, the ultimate requirement is decisions per watt, a metric that does not yet exist in any standardized form.
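As a rough illustration of the two figures of merit, the sketch below computes TOPS/W from a processor's rated throughput and power, alongside a hypothetical decisions-per-watt value. The `ProcessorSpec` type, the example numbers, and the definition of decisions per watt used here (application-level decisions per second divided by watts) are all assumptions for illustration, since no standardized form of that metric exists.

```python
from dataclasses import dataclass

@dataclass
class ProcessorSpec:
    """Hypothetical processor description; the fields are illustrative."""
    name: str
    tera_ops_per_second: float  # rated throughput in TOPS
    power_watts: float          # power drawn at that throughput

def tops_per_watt(spec):
    """Raw power efficiency: rated TOPS divided by power in watts."""
    return spec.tera_ops_per_second / spec.power_watts

def decisions_per_watt(decisions_per_second, power_watts):
    """Assumed definition: application-level decisions per second per watt."""
    return decisions_per_second / power_watts

# Made-up numbers, not measurements of any real device.
candidates = [
    ProcessorSpec("accelerator_a", tera_ops_per_second=40.0, power_watts=10.0),
    ProcessorSpec("accelerator_b", tera_ops_per_second=100.0, power_watts=50.0),
]
for spec in candidates:
    print(f"{spec.name}: {tops_per_watt(spec):.1f} TOPS/W")

# Example: 60 decisions per second at 10 W.
print(decisions_per_watt(decisions_per_second=60.0, power_watts=10.0))
```

The point of separating the two functions is that TOPS/W depends only on the processor, whereas a decisions-based metric also depends on the sensor, the algorithm, and how much irrelevant data reaches the processor.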

Clever Processing

Algorithm advances in speed and capability, driven by major technology companies, are producing AI-based solutions that are lighter weight yet more capable. Traditional algorithmic approaches are also exploiting modern processor architectures more efficiently. Availability of commercial AI software tools that can be integrated into existing deployment pipelines is reducing power and cost while improving functionality.

Reducing Data

Progress is also being made on low-data solutions paired with advanced image sensor technologies, including event-based sensing at the spatial, temporal, or even photon level, and alternatives to intensified sensors such as photomultiplier tubes or electron-multiplying CCDs (EMCCD).

Some camera systems integrate EMCCD sensors designed for high quantum efficiency and low read noise, enabling single-molecule imaging and very low-light microscopy applications.

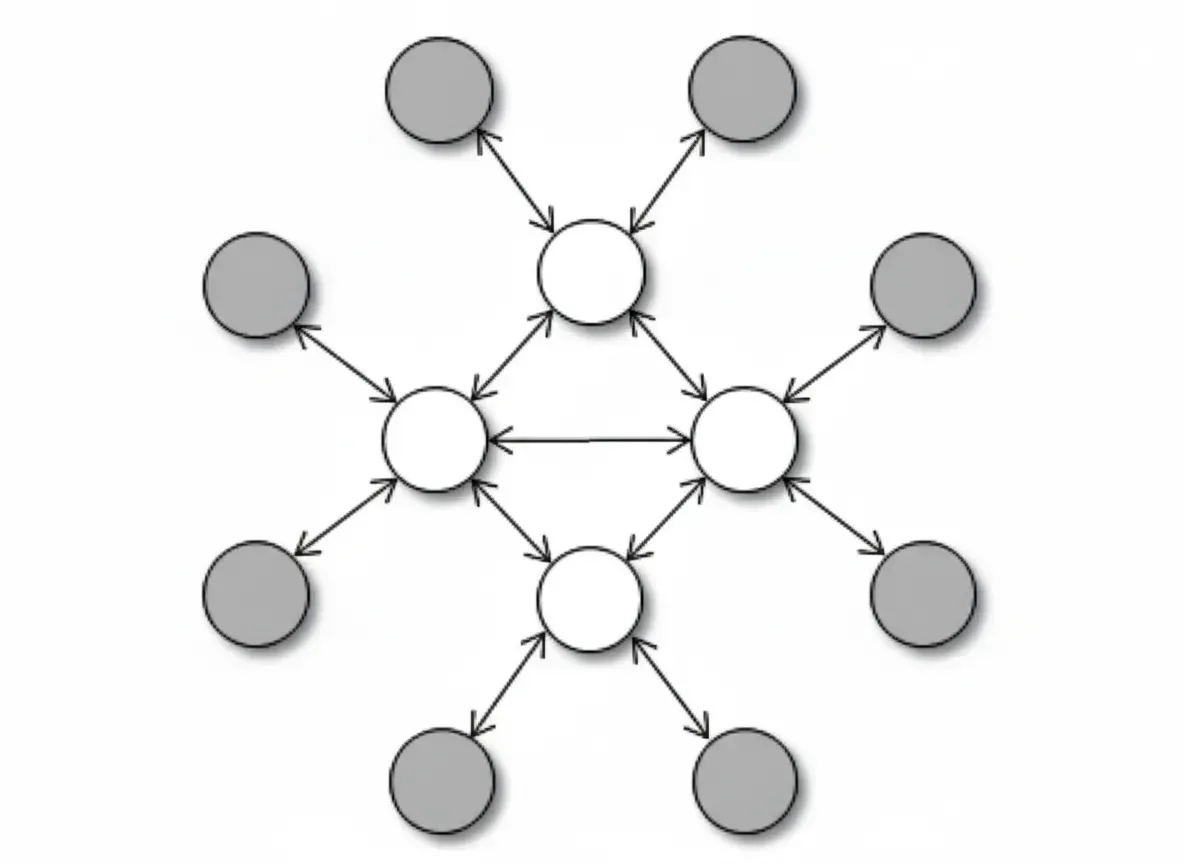

Event-based sensors respond to changes and filter irrelevant data directly on the sensor, sending only information from pixels that have changed to the processor. This contrasts with frame-based sensors, which record and transmit all pixels for processing and often overload system pipelines. This type of data flow is often referred to as neuromorphic processing because its data architecture mimics how the human brain processes information. While neuromorphic processing can be implemented on conventional processors, optimal data reduction requires combining event-based sensors with neuromorphic processors.
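The contrast between frame-based and event-based readout can be sketched in a few lines. The example below is a software emulation only, not how an event-based sensor works in hardware: it compares two small frames and emits an event only for pixels whose intensity changed by more than a threshold. The function name, the `(row, col, polarity)` event tuple, and the threshold value are assumptions made for illustration.

```python
def frame_to_events(previous, current, threshold=10):
    """Emit (row, col, polarity) for pixels whose change exceeds the threshold.

    polarity is +1 for a brightness increase and -1 for a decrease.
    """
    events = []
    for r, (prev_row, cur_row) in enumerate(zip(previous, current)):
        for c, (p, q) in enumerate(zip(prev_row, cur_row)):
            delta = q - p
            if abs(delta) > threshold:
                events.append((r, c, 1 if delta > 0 else -1))
    return events

previous = [
    [50, 50, 50, 50],
    [50, 50, 50, 50],
    [50, 50, 50, 50],
]
current = [
    [50, 50, 50, 50],
    [50, 90, 52, 50],  # one pixel brightens; its neighbor changes by only 2
    [50, 50, 50, 5],   # one pixel darkens
]

events = frame_to_events(previous, current)
total_pixels = len(current) * len(current[0])
print(events)
print(f"{len(events)} of {total_pixels} pixels transmitted")
```

A frame-based pipeline would transmit all 12 pixels here; the emulated event stream carries only the two that changed, and an unchanged scene produces no events at all.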

Other approaches to dynamically reduce data transfer include intelligent regions of interest (ROI) and adaptive data-reduction algorithms, which are beginning to appear in high-end sensors.
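A region of interest reduces transfer in proportion to the area read out. The sketch below crops a frame to a rectangular ROI and reports the fraction of pixels kept. The helper name and the way the ROI is specified (top, left, height, width) are assumptions for illustration; in practice ROI is usually configured on the sensor or camera itself rather than by cropping in software.

```python
def crop_roi(frame, top, left, height, width):
    """Return only the rows and columns inside the rectangular ROI."""
    return [row[left:left + width] for row in frame[top:top + height]]

# Synthetic 8x8 frame whose pixel value encodes its position (row * 8 + col).
frame = [[r * 8 + c for c in range(8)] for r in range(8)]

roi = crop_roi(frame, top=2, left=3, height=2, width=4)
kept = sum(len(row) for row in roi)
total = sum(len(row) for row in frame)

print(roi)
print(f"transferred {kept} of {total} pixels ({kept / total:.1%})")
```

An "intelligent" ROI would choose `top`, `left`, `height`, and `width` dynamically, for example from the previous frame's detections; that selection logic is outside the scope of this sketch.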

Integrating specialized data-capture behavior with high-performance processors and efficient lightweight algorithms provides the combination needed to make decisions at the edge where events occur and action is required. With this approach, even high-performance, information-rich optical inspection systems can operate autonomously without slow, costly PC links, enabling faster, lower-cost 100% inspection and response.

Overall, these advances will improve the safety and quality of various products, agricultural goods, and commodities while reducing manufacturing costs.

Searching for Molecules

Another level of inspection is possible that traditional methods cannot reach. In some use cases, users need to detect bacteria at very high resolution. High-magnification microscopy combined with optical, chemical, biological, and computational methods can reveal nanoscale structures in greater detail.

Image sensors enable detection of cancer cells in human tissue. Current tissue-cancer detection methods are relatively crude, often requiring surgical removal of tissue samples for laboratory analysis, but emerging techniques such as near-real-time cytometry may allow clinicians to determine malignancy while the patient is still on the operating table. As processing approaches the pixel level, it becomes possible to image cells and inspect molecular content in clinical laboratories. In the future, near-real-time cellular inspection could move from large labs to local labs and ultimately to the operating room.

Researchers have developed imaging flow cytometry techniques to reduce time and cost in blood screening. Using pulsed laser line-scan imaging and digitizers, they processed large volumes of data—up to 100,000 single-cell images per second and terabytes of image data—combined with deep-learning neural networks and automated big-data analysis.

Providing surgeons with diagnostic tools during procedures could enable real-time tumor classification and resection instead of subjecting patients to prolonged waits and potential second surgeries. That would represent a substantive improvement in quality of life.

Miniaturization

Size imposes another set of challenges. Novel high-performance solutions are often large and costly. Giving clinicians and patients smaller, easier-to-use solutions, such as genomics analyzers that fit on a desktop or in-body diagnostics during surgery, means overcoming these size and cost constraints.

Ultra-compact image sensors, light sources, and processors for miniature camera applications are becoming available. "Chip-on-tip" CMOS image sensors with very small pixel pitches offer enhanced vision needed for minimally invasive endoscopic and laparoscopic procedures. Robotic-guided surgery also benefits from compact sensors with small pixel pitch and image quality optimized for specific medical procedures.

Chip-on-tip CMOS image sensors designed for disposable and flexible endoscopes and laparoscopes require compact form factors, small pixel pitch, and image quality tuned to medical applications.

Powerful and efficient tools for clinicians will make procedures faster, less invasive, and more successful, benefiting patients, surgeons, and the broader medical community.

The Near Future

Advances in camera and image-sensor technologies will affect more than factory floors, warehouses, or intelligent transport systems. Cameras deployed on drones or embedded in handheld devices, using spectral techniques, could detect toxins in produce or drinking water, or environmental toxins in the air we breathe.

Even polymerase chain reaction (PCR) testing for pathogens such as SARS-CoV-2 may become easier and cheaper as lower-cost image sensors are applied to molecular diagnostic tools. DNA sequencing once required large, expensive machines for whole-genome analysis, but innovations in imaging and analysis are making these technologies increasingly accessible and affordable.

While exact outcomes cannot be predicted with certainty, current market needs and evolving technologies suggest continued progress. Expect more powerful AI processors that deliver greater compute at lower power and lower temperatures. Combined with advanced algorithmic solutions, these processors will let providers broaden the range of suitable applications.

Neuromorphic computing platforms will emulate the efficiency of human vision. More intuitive AI algorithms will train machine-vision models more effectively. Hyperspectral imaging will continue to deepen our exploration below the Earth's surface. Miniature image sensors could enable molecular diagnostics during surgery.

Since the first camera was invented in the early 19th century, camera technology has advanced extensively. With continued innovation in cameras and image sensors, our ability to understand the world around us—and the world within us—will expand dramatically.