Apple introduced 3D depth sensing beginning with the iPhone X. This technology is the basis for Face ID. The iPhone X 3D camera uses an infrared structured-light scheme consisting of an infrared light source, optical components, and infrared sensors. The infrared light source is the most critical part. Early 3D sensing systems generally used LEDs as the infrared source, but as VCSEL chip technology matured, VCSELs became advantageous in accuracy, miniaturization, power consumption, and reliability. As a result, many modern 3D camera systems now use VCSELs as the infrared source.

3D vision measurement principles

To discuss 3D vision solutions, it is necessary to clarify optical measurement categories and their principles. Optical measurement divides into active ranging methods and passive ranging methods.

Active ranging methods use a controlled light or sound source to illuminate the target and obtain 3D information based on the surface reflection characteristics and optical or acoustic properties. Active methods typically offer higher ranging accuracy, better anti-interference performance, and real-time capability. Representative active methods include structured light, Time of Flight, and triangulation.

1. Active ranging methods

Structured light

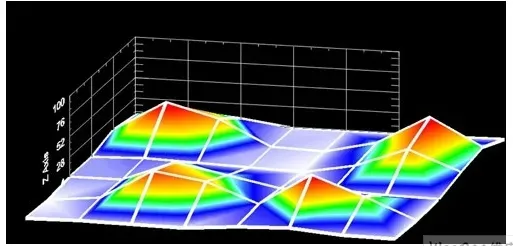

Structured light is subdivided by the projected pattern into point, stripe, and planar (area) approaches. Planar structured light is widely used and effective for depth measurement. It projects a two-dimensional pattern, for example a dense uniform grating, onto the target. Because the target surface has varying depth, the grating lines seen by the camera are deformed according to the local depth variations. This process can be viewed as modulation of the grating by the surface depth information. By analyzing the geometric relationship between the observed grating and a reference grating, the height difference and depth of each measured point can be obtained.

Structured light advantages include simple computation and high measurement accuracy; it can provide precise measurement for flat areas without strong texture or shape variation. Its disadvantages are high requirements for equipment and ambient light control, and relatively high cost. Structured light is mainly used in controlled indoor environments.

Time of Flight (ToF)

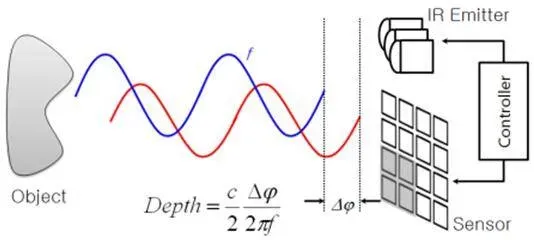

Time of Flight (ToF), the ranging principle used in pulsed LiDAR, projects pulsed laser signals onto a surface. The reflected signal returns to a receiver along an approximately opposite path. By measuring the time difference between emission and reception of the pulses, the distance to each pixel on the measured surface can be determined.

ToF directly exploits light propagation properties and does not require capturing or analyzing grayscale images. Distance measurement is therefore largely independent of surface texture, and the method can rapidly and accurately capture complete 3D information of a scene. The drawback is that ToF requires relatively complex optoelectronic hardware and tends to be more expensive.
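The pulsed ToF relation described above can be sketched in a few lines. This is a minimal illustration, assuming an ideal round-trip time measurement in vacuum or air; the function names are hypothetical and not taken from any particular sensor SDK.

```python
# Speed of light in vacuum, in metres per second (exact by SI definition).
C = 299_792_458.0


def pulse_tof_distance(round_trip_s: float) -> float:
    """Return the target distance in metres for a measured round-trip time."""
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    # The pulse travels to the target and back, hence the division by two.
    return C * round_trip_s / 2.0


def timing_resolution_for(depth_resolution_m: float) -> float:
    """Return the timing resolution in seconds needed for a given depth step."""
    return 2.0 * depth_resolution_m / C


if __name__ == "__main__":
    print(pulse_tof_distance(10e-9))      # a 10 ns round trip is roughly 1.5 m
    print(timing_resolution_for(0.001))   # 1 mm of depth needs picosecond timing
```

The second function hints at why the hardware is demanding: resolving millimetre depth steps directly requires timing on the order of picoseconds.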

Triangulation

Triangulation, also called active triangulation, is based on optical triangulation principles and uses the geometric imaging relationship among the light source, object, and detector to determine 3D coordinates. In practice, a laser source and a CCD camera are commonly used. This approach is widely applied in industrial inspection, surface roughness measurement, tire inspection, and aerospace and defense fields. It has seen limited use in consumer electronics to date.
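As a rough sketch of the geometry, assume a pinhole camera at the origin looking along the z axis, a laser offset from it by a baseline along the x axis, and a beam tilted toward the camera axis by a known angle. Under those assumptions (which are illustrative and not taken from a specific instrument), the depth of the laser spot follows from its image position. All names below are hypothetical.

```python
import math


def laser_triangulation_depth(
    spot_x_px: float,
    focal_px: float,
    baseline_m: float,
    laser_tilt_rad: float = 0.0,
) -> float:
    """Depth of a laser spot from its image x-coordinate.

    Assumed geometry: camera at the origin with its optical axis along z,
    laser at (baseline_m, 0) with its beam tilted by laser_tilt_rad toward
    the optical axis. A spot at depth z then sits at X = b - z*tan(theta)
    and images at x = f*X/z, which rearranges to z = f*b / (x + f*tan(theta)).
    """
    denom = spot_x_px + focal_px * math.tan(laser_tilt_rad)
    if denom <= 0:
        raise ValueError("spot position is inconsistent with the assumed geometry")
    return focal_px * baseline_m / denom


if __name__ == "__main__":
    # Beam parallel to the optical axis: depth is inversely proportional to x.
    print(laser_triangulation_depth(spot_x_px=40.0, focal_px=800.0, baseline_m=0.1))
```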

2. Passive ranging methods

Passive ranging techniques do not require an active radiation source; they reconstruct 3D information from 2D images captured under ambient illumination. These methods are flexible, adaptive, and low-cost, but they attempt to compute higher-dimensional signals from lower-dimensional inputs, which leads to complex algorithms. Passive methods are classified by the number of visual sensors used: monocular, stereo (binocular), and multi-view vision.

Monocular vision

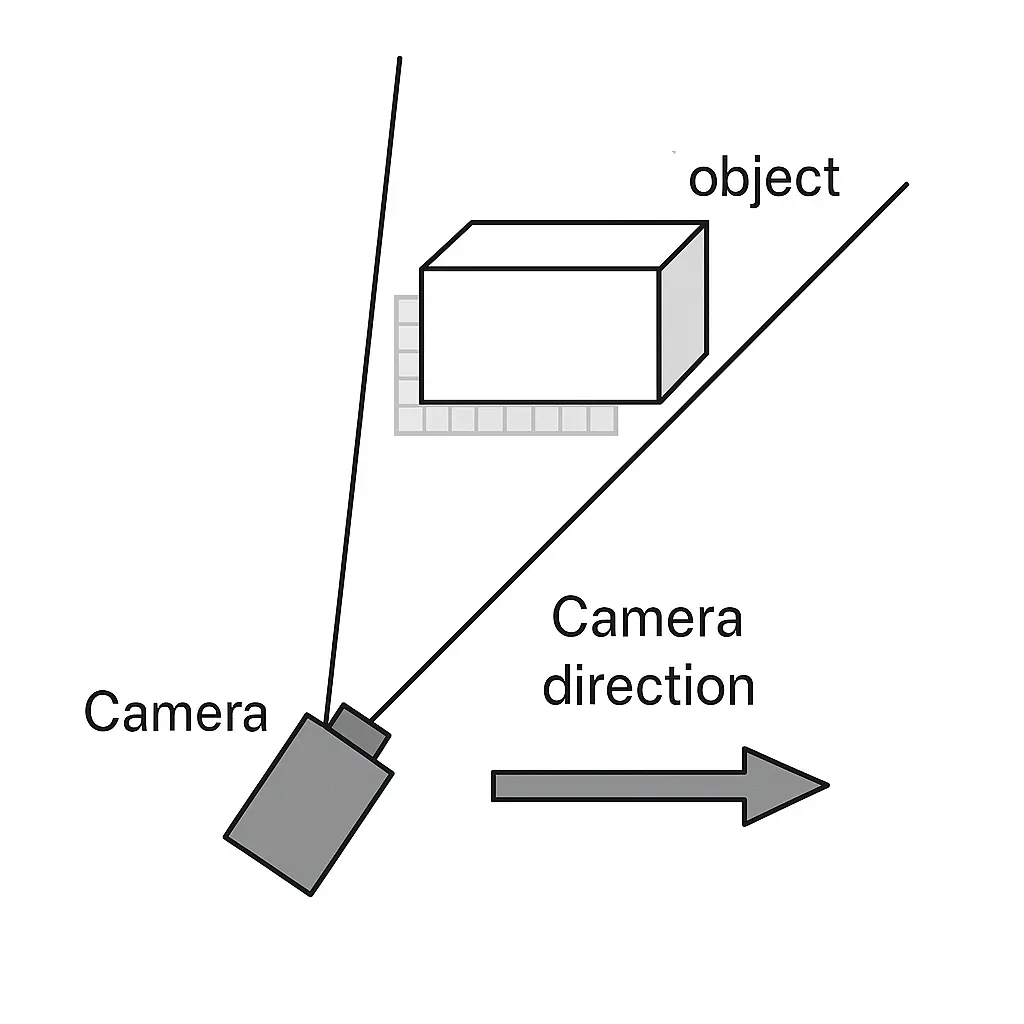

Monocular vision uses a single camera to capture one image for measurement. Its advantages are simplicity and easier camera calibration, and it avoids the narrow field-of-view and matching difficulties of stereo vision. Monocular methods include focus-based and defocus-based approaches. Focus-based methods place the camera at a focused position relative to the object and use lens imaging formulas to calculate distance; finding the precise focus is key and misfocus causes measurement error. Defocus-based methods do not require the camera to be focused on the object; instead they use a calibrated defocus model to compute object distance, which avoids focus-seeking delays but requires accurate defocus model calibration.
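For the focus-based case, the lens imaging formula referred to above is commonly taken to be the thin-lens equation, 1/f = 1/u + 1/v. The sketch below assumes an ideal thin lens and a known lens-to-sensor distance at best focus; the function name is hypothetical.

```python
def object_distance_from_focus(focal_length_mm: float, image_distance_mm: float) -> float:
    """Solve the thin-lens equation 1/f = 1/u + 1/v for the object distance u.

    image_distance_mm is the lens-to-sensor distance v at which the object
    appears sharpest; it must exceed the focal length for a real object.
    """
    if image_distance_mm <= focal_length_mm:
        raise ValueError("image distance must exceed the focal length")
    return focal_length_mm * image_distance_mm / (image_distance_mm - focal_length_mm)


if __name__ == "__main__":
    # Same lens, sensor position differing by 0.1 mm.
    print(object_distance_from_focus(50.0, 52.5))
    print(object_distance_from_focus(50.0, 52.6))
```

With a 50 mm lens, moving the assumed best-focus position from 52.5 mm to 52.6 mm shifts the computed object distance from about 1050 mm to about 1012 mm, which illustrates why locating the precise focus position matters.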

Stereo vision

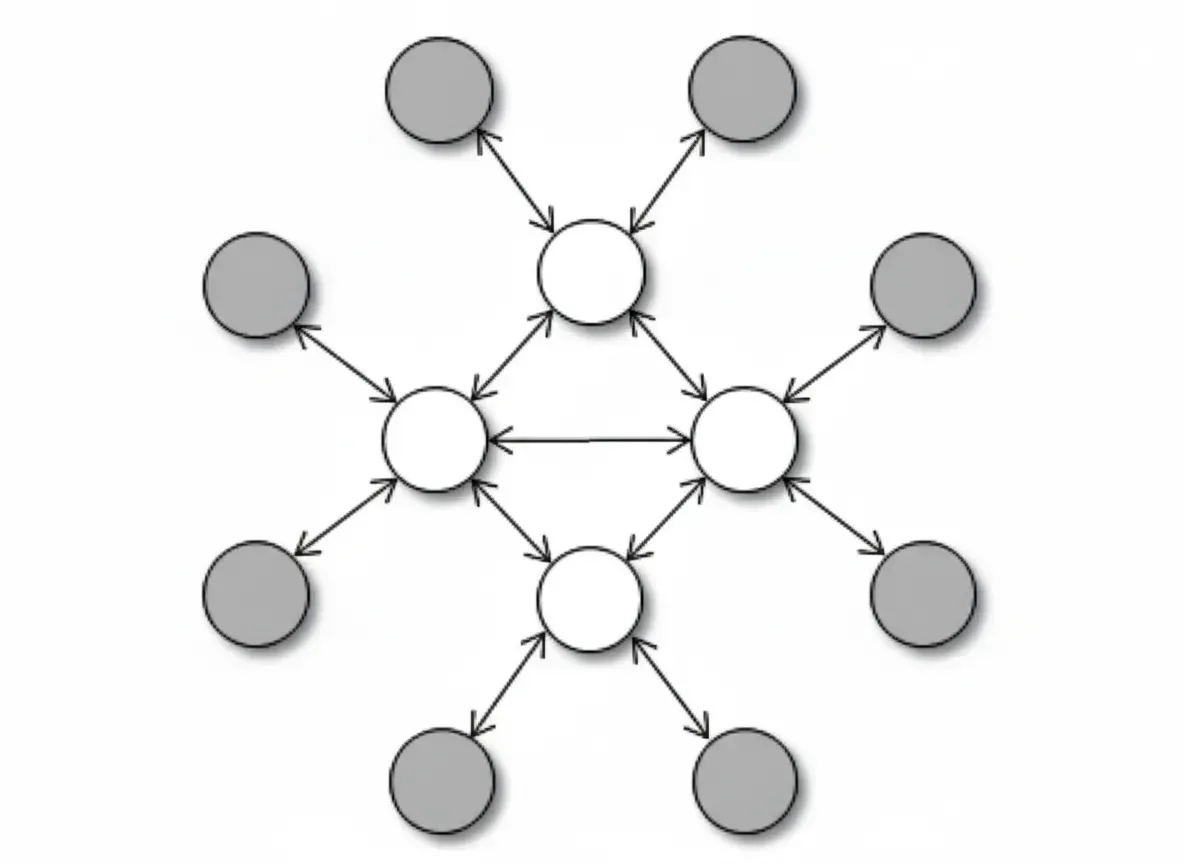

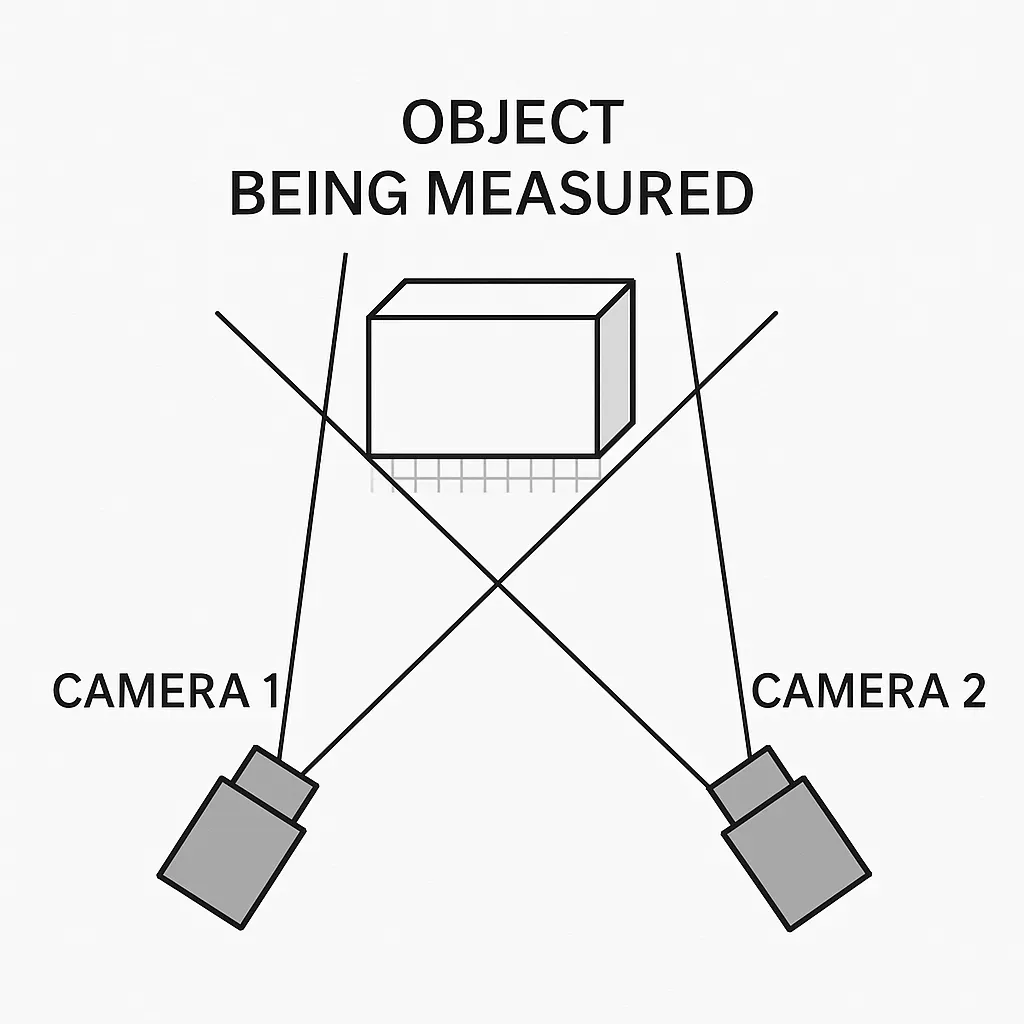

Stereo vision observes the same scene from two viewpoints to obtain images from different angles, then computes 3D information by measuring pixel position differences (disparity) and applying triangulation. This process is analogous to human binocular vision.
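For a rectified camera pair, the disparity-to-depth step reduces to the standard relation Z = f·B/d. The sketch below assumes rectified images, a focal length expressed in pixels, and a baseline in metres; the function name and example values are illustrative only.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth in metres of a point seen by a rectified stereo pair.

    focal_px     : focal length in pixels (assumed equal for both cameras)
    baseline_m   : distance between the two camera centres
    disparity_px : horizontal shift of the point between left and right images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point at finite depth")
    return focal_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical rig: 700 px focal length, 12 cm baseline, 42 px disparity.
    print(depth_from_disparity(700.0, 0.12, 42.0))
```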

Hardware typically uses two cameras connected via dual-input image capture to a computer. The analog signals from the cameras are sampled, filtered, amplified, and converted to digital form to provide image data. A complete stereo vision system usually comprises six parts: image capture, camera calibration, preprocessing and feature extraction, image rectification, stereo matching, and 3D reconstruction.

Multi-view vision

Multi-view vision extends stereo vision by using multiple cameras arranged at various viewpoints, or by moving a single camera to multiple viewpoints to observe a scene. The set of captured images is processed for perception, recognition, and understanding.

In stereo vision, for a given object distance, disparity is proportional to the baseline length; a longer baseline improves distance calculation accuracy. However, excessively long baselines increase the search range and computation. Multi-baseline matching, achieved by adding more cameras, helps eliminate mismatches and improve disparity accuracy.
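The baseline trade-off can be made concrete with the usual rectified-stereo relations: disparity d = f·B/Z, and a first-order depth error of roughly Z²·δd/(f·B) for a disparity error δd. The sketch below assumes those relations hold; the names and numbers are hypothetical.

```python
def disparity_px(focal_px: float, baseline_m: float, depth_m: float) -> float:
    """Disparity in pixels of a point at depth_m for a rectified stereo pair."""
    return focal_px * baseline_m / depth_m


def depth_error_m(
    focal_px: float,
    baseline_m: float,
    depth_m: float,
    disparity_error_px: float = 1.0,
) -> float:
    """First-order depth uncertainty caused by a given disparity error."""
    return depth_m ** 2 * disparity_error_px / (focal_px * baseline_m)


if __name__ == "__main__":
    for baseline in (0.06, 0.12, 0.24):
        d = disparity_px(700.0, baseline, 2.0)           # disparity at 2 m
        err = depth_error_m(700.0, baseline, 2.0)         # error per pixel of mismatch
        search = disparity_px(700.0, baseline, 0.5)       # range needed for 0.5 m objects
        print(f"B={baseline:.2f} m  d={d:.1f} px  error={err:.3f} m  search={search:.0f} px")
```

Doubling the baseline doubles the disparity and halves the depth error at a given distance, but it also doubles the disparity range that the matcher has to search for nearby objects.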

Optoelectronic 3D imaging techniques

Based on how image information is acquired, optoelectronic 3D imaging divides into passive and active techniques. Passive techniques accept object radiation or ambient emission; active techniques project modulated or unmodulated light onto the object and form 3D images by detecting the reflected light.

Historically, much research focused on passive 3D techniques using triangulation with two spatially separated cameras. Passive methods require objects with prominent contours such as edges and corners. Their advantages are no special hardware requirements and successful application in several fields. Drawbacks include the need for two or more high-quality cameras and associated image processing software; image quality, capture speed, and data transfer limit broader adoption.

Active 3D optoelectronic methods typically project structured light or a modulated beam to recover depth. A widely used active method is Time of Flight. In recent years, many commercial 3D cameras have been ToF-based, and they are mainly used in industrial control. For example, the SwissRanger 3000 camera implements ToF imaging by detecting phase. A near-infrared beam modulated at tens of megahertz is projected onto the scene, and the reflected light returning to the 3D camera has a phase delay that depends on distance. By measuring the phase delay between the emitted and reflected beams, scene depth is recovered. ZMD produced 3D image sensors optimized for high-speed operation, achieving response speeds above 100 MHz through process optimization for noise and speed.
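The phase-based measurement can be sketched with the standard continuous-wave ToF relation d = c·φ/(4π·f_mod). This is a minimal illustration, assuming a single modulation frequency and an already measured phase delay; it is not the SwissRanger's actual processing, and the function names are hypothetical.

```python
import math

# Speed of light in vacuum, in metres per second.
C = 299_792_458.0


def phase_to_distance(phase_rad: float, mod_freq_hz: float) -> float:
    """Distance in metres from the phase delay of a modulated beam.

    The light covers the distance twice, so a full 2*pi phase cycle
    corresponds to half a modulation wavelength.
    """
    phase = phase_rad % (2.0 * math.pi)   # the phase is only known modulo 2*pi
    return C * phase / (4.0 * math.pi * mod_freq_hz)


def unambiguous_range(mod_freq_hz: float) -> float:
    """Largest distance measurable before the phase wraps around."""
    return C / (2.0 * mod_freq_hz)


if __name__ == "__main__":
    f_mod = 20e6                                   # 20 MHz, an assumed example value
    print(unambiguous_range(f_mod))                # roughly 7.5 m
    print(phase_to_distance(math.pi / 2, f_mod))   # a quarter cycle, roughly 1.87 m
```

A higher modulation frequency gives finer distance resolution per unit of phase but shortens the range over which the measurement is unambiguous.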

3D vision image sensor technology

Whether using multiple cameras for passive 3D imaging or ToF for active 3D imaging, systems are often expensive, power-hungry, and require complex calibration software. Active methods can achieve high-precision 3D imaging but demand sensors with very high response speeds, which forces tradeoffs such as reduced image resolution. Current research largely focuses on 3D implementations based on CCD or CMOS image sensors, image processing, and display; fewer efforts address vision sensor design itself.

A newer 3D vision image sensor approach enables single-chip 3D photography while still outputting 2D images and high-resolution 3D images. This sensor does not require additional active illumination; it uses electronic shutter control to vary exposure time and capture high-speed video, then applies autofocus processing to produce depth images. This type of 3D sensor suits low-cost, compact vision applications such as mobile devices and other multimedia products.

The system comprises two parts: a 3D CMOS image sensor and a variable-focus liquid lens. The 3D CMOS sensor integrates photoconversion circuitry, low-noise readout, noise suppression, programmable gain, analog-to-digital conversion, exposure control, bad pixel correction, color space conversion, automatic white balance, and multimedia image signal processing.

A conventional 2D system has a 2D CMOS sensor and a fixed-focus lens; when capturing a scene, pixels map to a single plane, so the system produces a 2D image. The proposed system instead combines a 3D CMOS sensor with a variable-focus liquid lens. The 3D CMOS sensor features fast response, high dynamic range, focal-plane judgment, and control outputs for the liquid lens. The liquid lens, a recent development, changes its focal length when the voltage applied to the liquids is varied. To image different planes, the lens shape is changed accordingly; capturing multiple images across planes, then analyzing and fusing the image contours, yields a complete 3D image. The approach provides high resolution, and depth accuracy improves with the number of captured frames. Compared with conventional optical zoom mechanisms, liquid lenses respond faster and suit applications that need rapid focal-length changes.
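The idea of sweeping the focal plane and deciding, per pixel, which frame is sharpest can be sketched as a simple depth-from-focus routine. This is only an illustration under stated assumptions: it uses an absolute Laplacian as the sharpness measure, which is a common stand-in and not necessarily the sensor's on-chip focal-plane judgment, and every name below is hypothetical.

```python
def laplacian_abs(img, r, c):
    """Absolute 4-neighbour Laplacian at (r, c), used as a sharpness score."""
    return abs(
        4 * img[r][c] - img[r - 1][c] - img[r + 1][c] - img[r][c - 1] - img[r][c + 1]
    )


def depth_from_focus(stack, focus_distances_m):
    """Assign each interior pixel the focus distance of its sharpest frame.

    stack             : list of equally sized 2D grayscale frames (lists of rows),
                        one per lens setting
    focus_distances_m : in-focus distance associated with each frame
    Border pixels have no full neighbourhood and are left as None.
    """
    if len(stack) != len(focus_distances_m):
        raise ValueError("need one focus distance per frame")
    rows, cols = len(stack[0]), len(stack[0][0])
    depth = [[None] * cols for _ in range(rows)]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            scores = [laplacian_abs(frame, r, c) for frame in stack]
            best = max(range(len(stack)), key=scores.__getitem__)
            depth[r][c] = focus_distances_m[best]
    return depth


if __name__ == "__main__":
    sharp = [[0, 0, 0], [0, 9, 0], [0, 0, 0]]    # strong local contrast
    blurry = [[1, 1, 1], [1, 1, 1], [1, 1, 1]]   # same energy, no detail
    print(depth_from_focus([sharp, blurry], [0.3, 1.0])[1][1])
```

More frames across the focal sweep give finer depth steps, which matches the observation that accuracy depends on the number of captured frames.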

The 3D CMOS pixel circuitry offers high sensitivity, low dark current, and low noise. Row and column decoding circuits generate pixel array timing under control circuitry; noise suppression eliminates readout noise. The analog-to-digital converter converts analog signals to digital data for the digital signal processing logic, which generates exposure control and integrates functions such as white balance, demosaicing, focal-plane judgment, and edge extraction. The ADC is multi-resolution: it outputs 10-bit data in 2D imaging mode and binary image information in 3D imaging mode. The sensor can therefore operate in a high-speed capture mode to obtain high-frame-rate video.

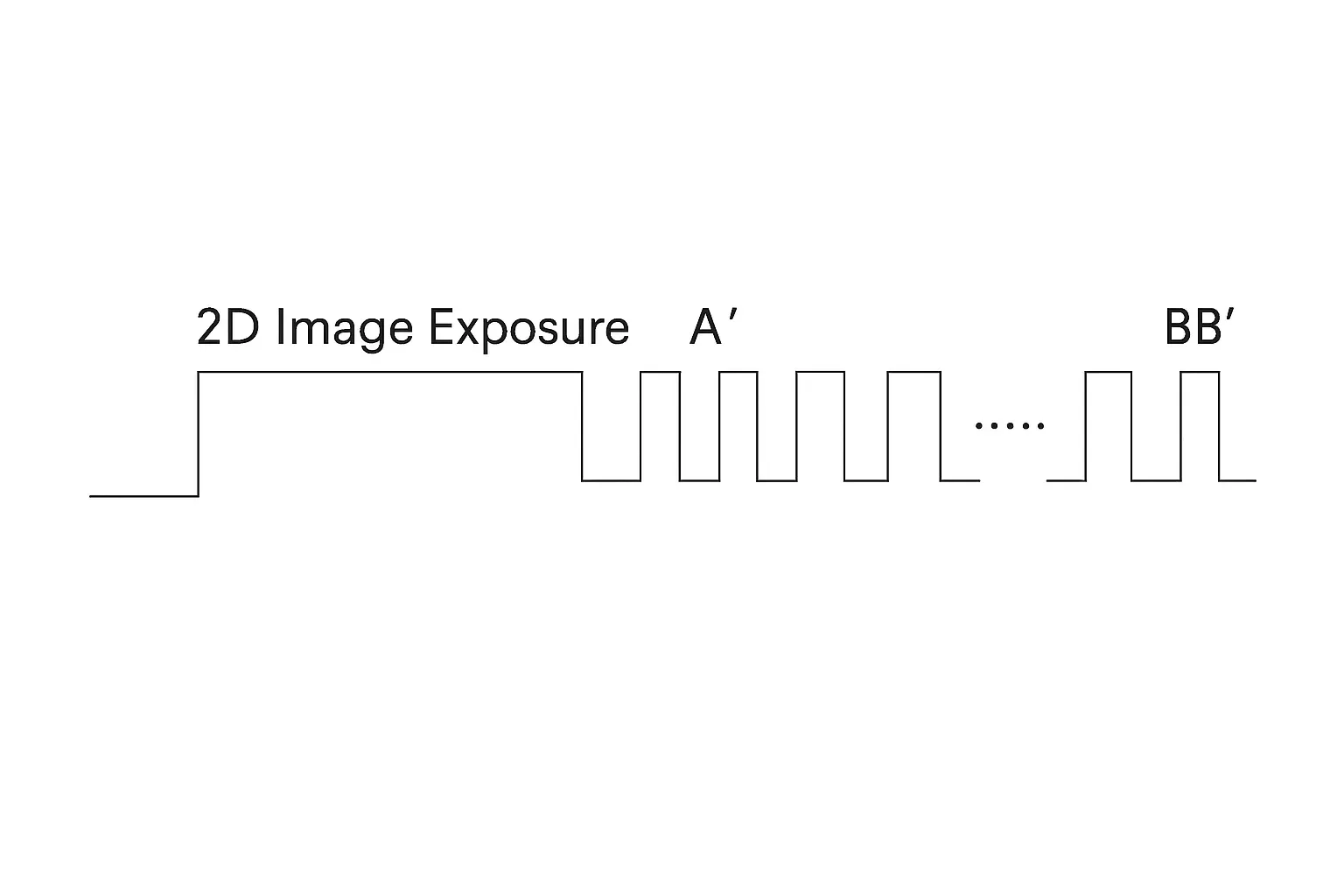

Figure 1 shows the timing: long exposures capture normal 2D images, followed by short exposures for depth images. The sensor can automatically capture consecutive frames across planes to produce depth maps for 3D image synthesis. When operating in 2D mode, the ADC outputs 10-bit data to the image processor; in 3D mode, the ADC outputs binarized image information, enabling high-speed capture.

The liquid lens contains two liquids, one of them conductive, in a cell fitted with electrodes. Changing the voltage on the electrodes causes polar molecules to move, altering the shape of the interface between the liquids and thus changing the focal length. The lens offers a wide effective zoom range, fast response, and excellent optical performance, making it suitable for camera phones, digital cameras, and PDAs. The control voltage for the liquid lens can be programmed or provided at fixed intervals by the 3D CMOS sensor.

One key technology for emerging 3D imaging is the image capture device. Compared with traditional 3D vision sensors, the proposed approach offers simpler structure, easier implementation, and lower cost, facilitating adoption in portable multimedia devices and addressing some limitations of existing 3D sensing systems.