Overview

From video doorbells and network cameras to smartphones, computers, and cars, image sensors are ubiquitous. High resolution and fine image detail are now basic expectations for consumers.

On these edge devices, images captured by a camera are typically processed in real time by an image signal processor (ISP) before being shown to users. Ensuring high image quality while efficiently processing large volumes of data is both a technical challenge and a market opportunity for chip makers.

What an image signal processor does

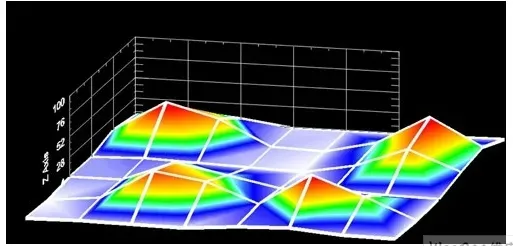

An image sensor is a rectangular semiconductor array made up of millions of pixels. Each pixel can be around 1 micrometer across, or even smaller in recent smartphone sensors, and is fitted with a tiny color filter. In the common Bayer filter array, each filter is red, green, or blue, and green filters occur twice as often as red or blue ones. When photons hit the semiconductor surface, they interact with silicon atoms to produce electron–hole pairs, generating a small, measurable electrical charge. Within a pixel's operating range, this charge is roughly proportional to the amount of light the pixel receives.
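
To make the Bayer layout concrete, here is a minimal Python sketch. It assumes an RGGB arrangement, which is only one of several common orderings (GRBG, GBRG, and BGGR also exist); `bayer_color` and `count_colors` are hypothetical helper names used for illustration.

```python
# Hypothetical helpers describing an RGGB Bayer mosaic.
# The 2x2 tile repeats across the whole sensor.
RGGB = (("R", "G"),
        ("G", "B"))


def bayer_color(row: int, col: int, pattern=RGGB) -> str:
    """Return 'R', 'G', or 'B' for the filter covering pixel (row, col)."""
    return pattern[row % 2][col % 2]


def count_colors(height: int, width: int) -> dict:
    """Count how many pixels of each color a height x width sensor has."""
    counts = {"R": 0, "G": 0, "B": 0}
    for r in range(height):
        for c in range(width):
            counts[bayer_color(r, c)] += 1
    return counts


if __name__ == "__main__":
    for r in range(4):
        print(" ".join(bayer_color(r, c) for c in range(4)))
    print(count_colors(4, 4))
```

Half of all sites are green, which is why each pixel records only one of the three colors and the missing two must later be interpolated.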

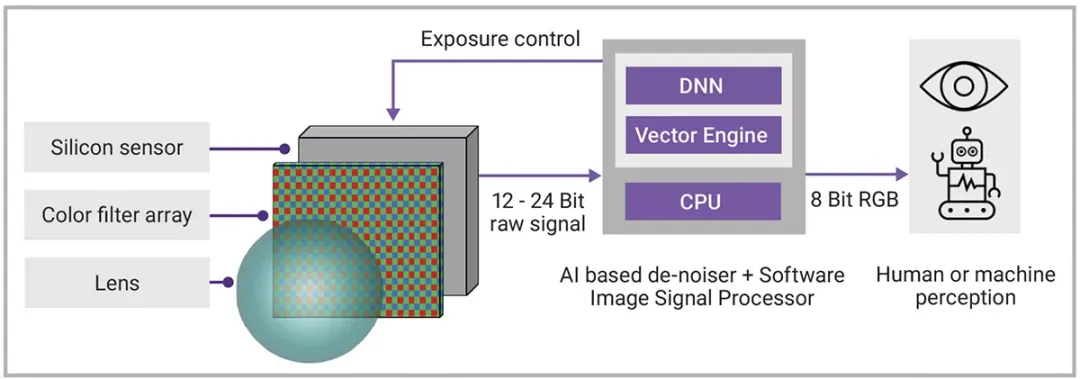

The ISP receives the raw red, green, and blue samples from the sensor and applies processing steps such as demosaicing (reconstructing full color at every pixel), color adjustment, lens distortion correction, and efficient data compression. Raw sensor data often has a bit depth between 12 and 24 bits, while the output is commonly an RGB signal with 8 bits per channel.
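
Two of these steps can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: an RGGB mosaic stored as nested lists, simple bilinear interpolation of the green channel (one basic demosaicing method, not what any particular ISP uses), and a plain power-law gamma for the 12-bit to 8-bit conversion. All function names are hypothetical.

```python
# Minimal sketch of two ISP steps on a tiny RGGB raw frame stored as
# nested lists. Real ISPs use edge-aware demosaicing and tuned tone curves.


def is_green(row: int, col: int) -> bool:
    """In an RGGB mosaic, green filters sit where row + col is odd."""
    return (row + col) % 2 == 1


def green_at(raw: list[list[int]], row: int, col: int) -> float:
    """Bilinear green estimate: keep measured green, otherwise average the
    in-bounds up/down/left/right neighbours (all green at R and B sites)."""
    if is_green(row, col):
        return float(raw[row][col])
    height, width = len(raw), len(raw[0])
    neighbours = [
        raw[r][c]
        for r, c in ((row - 1, col), (row + 1, col), (row, col - 1), (row, col + 1))
        if 0 <= r < height and 0 <= c < width
    ]
    return sum(neighbours) / len(neighbours)


def to_8bit(value: float, in_bits: int = 12, gamma: float = 1 / 2.2) -> int:
    """Normalize a linear raw value, apply a simple power-law gamma,
    and quantize the result to the 8-bit range 0..255."""
    max_in = (1 << in_bits) - 1
    x = min(max(value / max_in, 0.0), 1.0)
    return round(255 * x ** gamma)


if __name__ == "__main__":
    raw = [
        [800, 1600, 820, 1620],   # R G R G
        [1580, 400, 1600, 410],   # G B G B
        [810, 1610, 830, 1630],   # R G R G
        [1590, 405, 1605, 415],   # G B G B
    ]
    g = green_at(raw, 1, 1)       # green estimated at a blue site
    print(g, to_8bit(g))
```

The gamma curve spends more of the 256 output codes on dark tones, which is one reason the conversion to 8 bits is done late in the pipeline rather than on the raw data.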

Today’s ISPs are typically built from IP blocks provided by a few vendors. Algorithms are often hard-coded into highly parallel hardware, which limits flexibility in the finished product.

Two main challenges: noise and flexibility

A major issue in sensors and ISPs is noise, which often becomes the limiting factor in system design.

Noise originates in the sensor itself and is most severe in low light, when few photons are captured. Photon arrival is random, so the number of photons a pixel collects fluctuates, and these fluctuations (shot noise) are large relative to the signal when the count is small. Heat also generates electron–hole pairs in the silicon at random (dark current), and the sensor cannot distinguish these from pairs created by photons. Further noise arises when measuring and digitizing extremely small charges. Each of these contributions then propagates through the rest of the processing chain.
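
These noise sources can be combined in a simple per-pixel model. The Python sketch below assumes the standard textbook treatment (shot and dark-current noise are Poisson, read noise is an RMS value) and ignores fixed-pattern noise, quantization, and gain; `pixel_snr` and the example electron counts are hypothetical.

```python
import math


def pixel_snr(signal_e: float, dark_e: float = 0.0, read_noise_e: float = 0.0) -> float:
    """Signal-to-noise ratio of one pixel under a simple textbook model.

    All quantities are in electrons. Photon shot noise and dark-current noise
    follow Poisson statistics, so their variances equal their means; read
    noise is an RMS value, so it enters as a squared term. Fixed-pattern
    noise, quantization, and gain are ignored.
    """
    noise_e = math.sqrt(signal_e + dark_e + read_noise_e ** 2)
    return signal_e / noise_e


if __name__ == "__main__":
    # Hypothetical values chosen only to contrast bright and dim scenes.
    print(f"bright: {pixel_snr(10_000, dark_e=1, read_noise_e=2):.1f}")
    print(f"dim:    {pixel_snr(10, dark_e=1, read_noise_e=2):.2f}")
```

When shot noise dominates, SNR grows only as the square root of the collected signal: `pixel_snr(400)` is 20 while `pixel_snr(100)` is 10, so quadrupling the light gathered (for example by doubling pixel pitch) merely doubles SNR.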

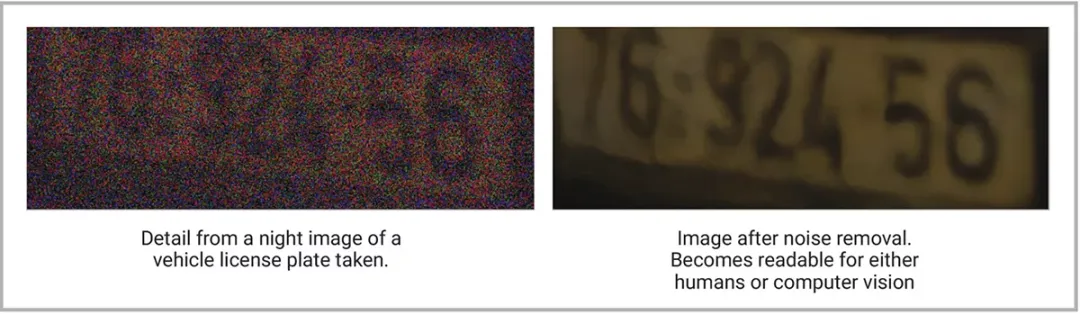

Noisy images look worse to viewers and make visual content harder to interpret. Noise likewise impairs machine vision, reducing the reliability of object detection algorithms. It also limits device performance in low light and reduces the ability to handle high dynamic range scenes that contain both very bright and very dark regions.
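
The link between noise and dynamic range can be made explicit. A common definition takes the ratio of the largest signal a pixel can hold (its full-well capacity) to the noise floor set by read noise. The Python sketch below uses that definition; `dynamic_range` and the example sensor figures are hypothetical.

```python
import math


def dynamic_range(full_well_e: float, read_noise_e: float) -> tuple[float, float]:
    """Return a sensor's dynamic range as (decibels, photographic stops).

    The largest usable signal is the full-well capacity; the smallest is
    taken to be the read-noise floor. Both are in electrons.
    """
    ratio = full_well_e / read_noise_e
    return 20 * math.log10(ratio), math.log2(ratio)


if __name__ == "__main__":
    # Hypothetical small-pixel sensor: 6000 e- full well, 2 e- read noise.
    db, stops = dynamic_range(6000, 2)
    print(f"{db:.1f} dB, {stops:.1f} stops")
```

In this model, halving read noise adds about 6 dB (one stop) at the dark end without changing the bright end, which is why lowering the noise floor directly widens the range of scene brightness a device can capture.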

Sensor design offers several ways to reduce noise, mostly by capturing more photons relative to the noise. Increasing pixel size helps, for example, but it requires a larger, more expensive sensor or reduces resolution. A larger sensor also needs a larger lens, producing devices that are less robust and harder to package. Increasing exposure time raises the risk of motion blur and lowers the achievable frame rate. On the processing side, existing ISP denoising algorithms trade detail preservation against smoothing: some denoisers reduce noise by smoothing the image, at the cost of fine detail and edge sharpness.
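
The smoothing trade-off shows up even in the simplest possible denoiser. The Python sketch below applies a one-dimensional moving-average (box) filter; it is a deliberately naive illustration, not the method used by any particular ISP, and `box_filter` is a hypothetical name.

```python
def box_filter(signal: list[float], radius: int = 1) -> list[float]:
    """Moving-average (box) denoiser: replace each sample with the mean of its
    neighbours within `radius`, shrinking the window at the borders."""
    n = len(signal)
    out = []
    for i in range(n):
        window = signal[max(0, i - radius): min(n, i + radius + 1)]
        out.append(sum(window) / len(window))
    return out


if __name__ == "__main__":
    flat_noise = [1, -1, 1, -1, 1, -1]          # zero-mean noise on a flat region
    sharp_edge = [0, 0, 0, 0, 10, 10, 10, 10]   # clean step edge

    # Interior noise amplitude drops from 1 to about 1/3 ...
    print([round(v, 2) for v in box_filter(flat_noise)])
    # ... but the one-sample jump now spreads over two intermediate values.
    print([round(v, 2) for v in box_filter(sharp_edge)])
```

A wider window suppresses more noise but blurs edges further. Better denoisers try to tell noise from structure instead of averaging everything uniformly.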

Another drawback of traditional ISPs is limited flexibility. Tuning an ISP for a specific sensor model can take weeks or months, which adds cost and extends the timelines of imaging-system engineering projects.

Software ISPs and AI-based denoising

Israeli startup Visionary.ai has developed a software-based ISP that uses AI to detect and remove more image noise than conventional algorithms. Many computer vision researchers focus on better detection and recognition methods, but Visionary.ai’s founders identified ISP optimization as a key route to improving image quality. A higher-quality ISP supplies better image data, improving downstream AI tasks such as object recognition and segmentation.

Improving the input data addresses the “garbage in, garbage out” problem and can raise accuracy and machine vision performance. For human-viewing applications such as smartphone or laptop video, Visionary.ai’s real-time denoiser produces clearer, brighter images and more accurate color rendering.

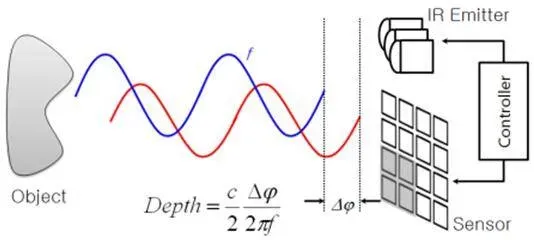

Unlike some denoisers, Visionary.ai’s AI-based method can remove noise in real time and achieve up to a 19 dB improvement in signal-to-noise ratio. To remove the maximum amount of noise, AI needs access to the sensor’s raw signal before it is modified and compressed by the ISP. Visionary.ai replaces traditional hardware ISPs entirely with a software ISP to meet this requirement.
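
To put a figure like 19 dB in perspective, decibels can be converted back to plain ratios. The article does not state how the improvement was measured (for example SNR versus PSNR, or under which test conditions), so this Python sketch simply assumes SNR defined from RMS amplitudes; the function names are hypothetical.

```python
import math


def snr_db(signal_rms: float, noise_rms: float) -> float:
    """SNR in decibels from RMS amplitudes (equivalently 10*log10 of power)."""
    return 20 * math.log10(signal_rms / noise_rms)


def db_gain_to_ratios(gain_db: float) -> tuple[float, float]:
    """Convert an SNR improvement in dB to (amplitude ratio, power ratio)."""
    return 10 ** (gain_db / 20), 10 ** (gain_db / 10)


if __name__ == "__main__":
    amplitude, power = db_gain_to_ratios(19)
    print(f"19 dB: noise RMS / {amplitude:.2f}, noise power / {power:.1f}")
    # Same signal, noise RMS cut by that factor: the SNR rises by 19 dB.
    before = snr_db(100, 10)
    after = snr_db(100, 10 / amplitude)
    print(f"{before:.1f} dB -> {after:.1f} dB")
```

Under this assumption, a 19 dB gain at constant signal corresponds to cutting RMS noise by roughly 8.9 times, or noise power by roughly 79 times.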

Figure 1: Software ISP removes the maximum amount of noise in real time

Because the ISP and denoising functions are implemented in software, the hardware design must provide appropriate compute resources.

Compute choices for AI denoising

The AI denoiser relies on neural networks, and its performance requirements vary with workload, frame rate, and resolution. In early development, Visionary.ai used Nvidia Jetson platforms for research and experimentation because of their ample performance. For commercial deployment, however, the goal is a solution that fits die-area and power budgets and so meets application and cost requirements.

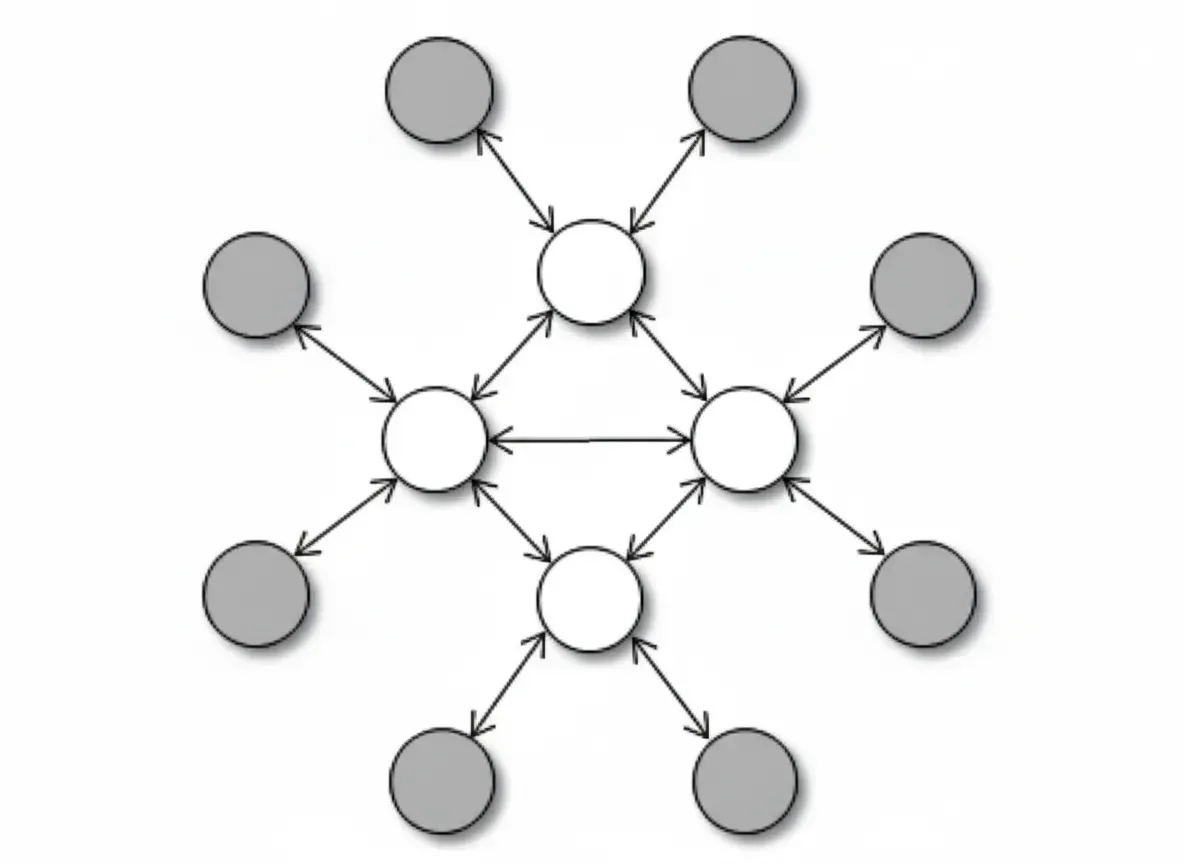

When people discuss edge AI, they often cite 10, 100, or 1000 TOPS designs for a wide range of inference tasks, but denoising does not need that scale. Synopsys' ARC EV7x family is a set of heterogeneous embedded vision processors that include scalable vector DSP cores and a neural network engine. Visionary.ai’s denoising algorithm runs effectively on the Synopsys ARC EV72 processor, and the team plans to run it on the newer ARC VPX vector DSPs and ARC NPX neural processing units.
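
Why denoising fits well below the 10 to 1000 TOPS class can be checked with back-of-envelope arithmetic: required throughput is pixels per second times operations per pixel. The Python sketch below does that calculation. The per-pixel cost is a purely hypothetical placeholder (1000 multiply-accumulates, counted as 2 operations each), not a published figure for Visionary.ai's network or any other model.

```python
def required_tops(width: int, height: int, fps: float, ops_per_pixel: float) -> float:
    """Sustained compute, in tera-operations per second, for a per-pixel
    workload at a given resolution and frame rate."""
    pixels_per_second = width * height * fps
    return pixels_per_second * ops_per_pixel / 1e12


if __name__ == "__main__":
    # HYPOTHETICAL cost: 1000 multiply-accumulates per pixel, counted as
    # 2 operations each. Not a published figure for any real denoiser.
    ops_per_pixel = 2 * 1000
    for name, w, h in (("1080p", 1920, 1080), ("4K", 3840, 2160)):
        print(f"{name} @ 30 fps: {required_tops(w, h, 30, ops_per_pixel):.2f} TOPS")
```

Even with a tenfold larger per-pixel budget, these totals stay in the range of roughly 1 to 5 TOPS. Real accelerators rarely sustain their peak rating, though, so a practical design needs headroom above the computed figure.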

Besides the ISP algorithms and denoising, the system requires an application processor to run control code. For these low-demand control workloads, a single-core 32-bit processor, such as one from the Synopsys ARC HS series, is sufficient.

Figure 2: AI denoiser and software ISP can optimize performance using raw sensor data

Flexibility and lifecycle updates

A software-defined ISP improves flexibility. Denoising and other AI functions can be tuned faster and updated throughout the product lifecycle to improve performance. If a supply-chain issue forces a switch to a different sensor model, adapting the system to the new component also becomes simpler.

With faster, lower-cost tuning, application-specific optimization becomes practical. For example, finer adjustments can tune a system for precise capture of green detail in agricultural imaging or for more accurate rendering of reds in medical imaging.
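
One way to picture application-specific tuning is a swappable 3x3 color correction matrix (CCM), a standard ISP stage that mixes the red, green, and blue channels. The Python sketch below stores tuning as data that software could reload at run time. It is an assumption for illustration: the article does not describe how Visionary.ai exposes tuning, and the profile names and coefficients are hypothetical.

```python
# HYPOTHETICAL tuning profiles; the coefficients are illustrative only and
# do not come from any real sensor or from Visionary.ai.
PROFILES: dict[str, tuple[tuple[float, float, float], ...]] = {
    "neutral": (
        (1.0, 0.0, 0.0),
        (0.0, 1.0, 0.0),
        (0.0, 0.0, 1.0),
    ),
    # Boosts green relative to red/blue; every row sums to 1.0, so neutral
    # grays are left unchanged.
    "green_emphasis": (
        (1.0, 0.0, 0.0),
        (-0.1, 1.2, -0.1),
        (0.0, 0.0, 1.0),
    ),
}


def apply_ccm(rgb, ccm):
    """Multiply a linear RGB triple (0..1) by a 3x3 matrix and clip to 0..1."""
    return tuple(
        min(max(sum(c * x for c, x in zip(row, rgb)), 0.0), 1.0) for row in ccm
    )


if __name__ == "__main__":
    leaf = (0.2, 0.6, 0.2)  # a greenish linear RGB value
    for name, ccm in PROFILES.items():
        print(name, tuple(round(v, 3) for v in apply_ccm(leaf, ccm)))
```

Keeping each row summing to 1 preserves white balance on gray surfaces while the off-diagonal terms change how strongly one color is separated from the others. In a software ISP, switching profiles is a data change rather than a hardware redesign.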

Conclusion

With its AI denoiser implemented on processors such as Synopsys ARC EV72, Visionary.ai’s software ISP has entered the market, creating new options for consumer electronics and security camera design. The team is also targeting automotive, drone, and medical markets.

The principle of software-defined systems is spreading across technology domains. Although software-based image processing is still developing, its advantages and flexibility, combined with advances in edge AI and AI imaging, are attracting growing attention from vendors.