Introduction

Machine vision sensors are increasingly widespread. Choosing the right sensor requires understanding accuracy, output, sensitivity, system cost, and the specific application requirements. A basic grasp of key sensor characteristics helps developers narrow their choices and find an appropriate sensor more quickly.

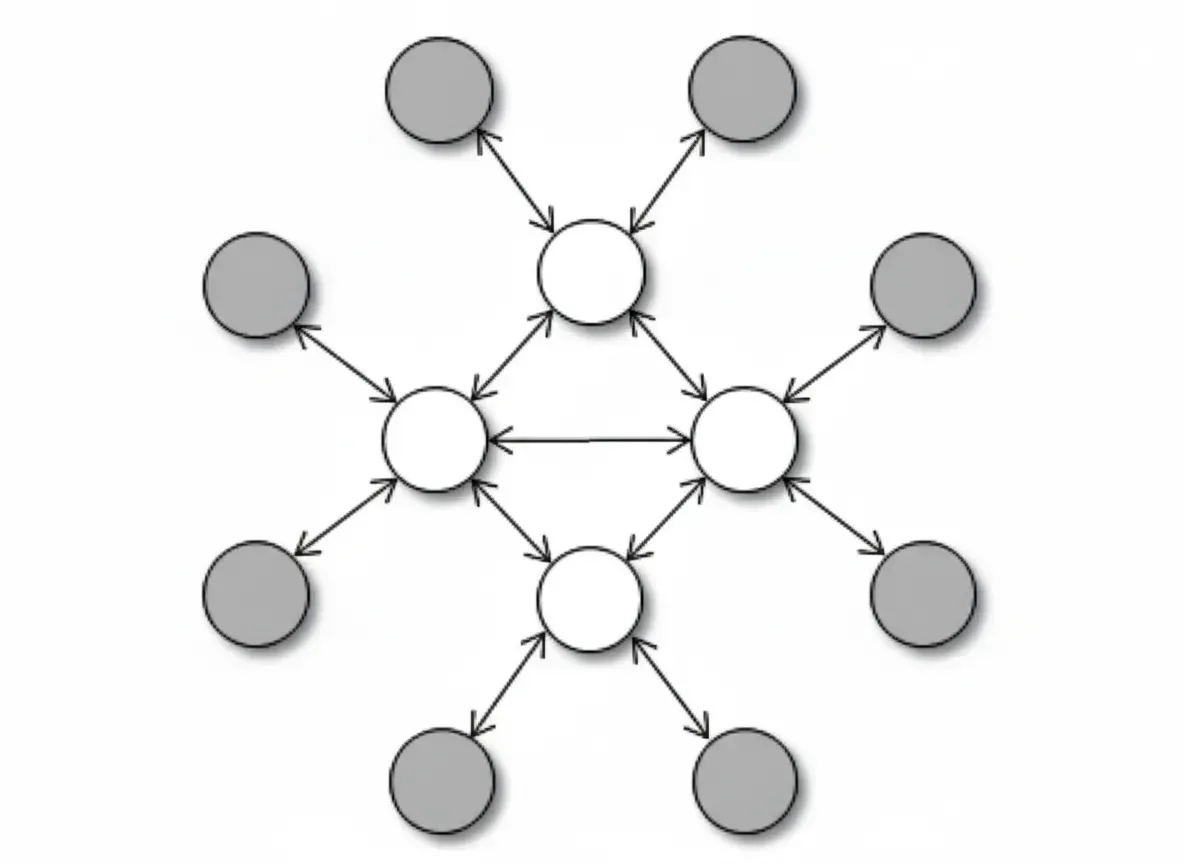

Camera and image sensor roles

The camera is the eye of a machine vision system, and its core is the image sensor. A camera is a complex assembly that includes the lens, signal processor, communication interface, and most critically, the device that converts photons into electrons: the image sensor. The lens and other components support camera function, but the sensor ultimately determines the camera's peak performance.

Three primary selection criteria

Many industry discussions focus on fabrication technology and the relative merits of CMOS versus CCD. Both approaches have advantages and trade-offs, and different fabrication processes produce sensors with different characteristics. End users care less about how a sensor is manufactured and more about how it performs in the intended application.

For a given application, three key factors determine sensor choice: dynamic range, speed, and responsivity. Dynamic range governs the quality of captured images and the level of detail. Speed refers to how many images the sensor can produce and how fast the system can receive them. Responsivity measures how efficiently the sensor converts photons into electrons, determining the required illumination level to capture useful images. Sensor technology and design together determine these traits, so system designers must evaluate them carefully.

Understanding dynamic range

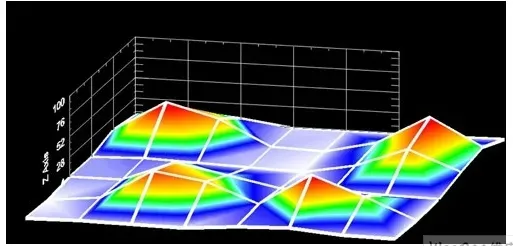

Dynamic range is often confusing because machine vision systems are digital. Image dynamic range has two parts: the sensor's usable exposure range (the ratio of brightest to darkest usable illumination) and the number of digital levels used to represent pixel signals, expressed in bits. These two parts are usually related.

The exposure dynamic range is the range of illumination levels over which the sensor produces usable signals. Photons striking an active pixel area generate electrons that the sensor captures and stores for readout. More incident photons produce more electrons, and a longer exposure accumulates more stored charge. The upper end of the exposure dynamic range is set by the full-well capacity of the pixel storage node, the maximum charge it can hold before saturating. Semiconductor processing and circuit design jointly determine the well capacity.

Electronic noise sets the sensor's minimum usable exposure. Even with no incident photons, the sensor generates electrons thermally. To produce a detectable signal, enough photons must strike the active pixel area so that the stored electrons exceed the electrons produced by dark current noise. The sensor's minimum usable exposure is the level that generates at least as many photoelectrons as are produced by noise. Only exposures above that noise-equivalent level yield useful information.

Exposure dynamic range is determined by physical and circuit design, whereas digital dynamic range is determined by the analog-to-digital representation. Digital dynamic range indicates how many distinct exposure levels the vision system can receive from the sensor. An 8-bit sensor provides 256 gray levels, a 10-bit sensor 1024 levels, and so on. Bit depth alone does not indicate the sensor's exposure range, but the two are often correlated.
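The relationship between bit depth and gray levels is simple arithmetic. The sketch below also estimates how much charge one digital step represents, assuming (as a simplification) a linear converter whose full scale maps exactly onto a hypothetical 10,000-electron full-well capacity; real sensors may map full scale differently.

```python
def gray_levels(bits: int) -> int:
    """Number of distinct digital values an N-bit converter can output."""
    return 2 ** bits


def electrons_per_step(full_well_e: float, bits: int) -> float:
    """Charge represented by one digital step.

    Assumes a linear ADC whose full-scale input corresponds exactly to the
    pixel's full-well capacity (an idealization for illustration).
    """
    return full_well_e / (gray_levels(bits) - 1)


if __name__ == "__main__":
    full_well = 10_000.0  # hypothetical full-well capacity, in electrons
    for bits in (8, 10, 12, 14):
        print(f"{bits:2d}-bit: {gray_levels(bits):5d} levels, "
              f"{electrons_per_step(full_well, bits):7.2f} e-/step")
```

Each added bit doubles the number of levels and halves the charge per step, which is why extra bits only pay off when the sensor's noise is small enough to make those finer steps meaningful.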

Signals smaller than the dark current noise level cannot provide useful information. Likewise, digital values above the sensor's maximum signal do not add information. In practice, a sensor should be designed so that the noise-equivalent exposure corresponds to the lowest digital level and there are sufficient digital steps up to the saturation level. In that sense, the sensor's digital dynamic range and exposure dynamic range both describe the ratio between saturation exposure and noise-equivalent exposure.
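The ratio described above can be expressed numerically. This sketch treats the noise-equivalent signal as a single hypothetical noise floor in electrons, reports the ratio in decibels (20·log10, a common convention for sensor dynamic range) and in bits, and finds the smallest bit depth whose step size does not exceed that noise floor. The 10,000 e- full well and 10 e- noise floor are illustrative values, not figures from any particular sensor.

```python
import math


def exposure_dynamic_range(full_well_e: float, noise_floor_e: float) -> dict:
    """Ratio of saturation signal to noise-equivalent signal, in several units."""
    ratio = full_well_e / noise_floor_e
    return {
        "ratio": ratio,
        "dB": 20.0 * math.log10(ratio),  # decibel convention for signal amplitudes
        "bits": math.log2(ratio),        # equivalent number of binary steps
    }


def min_bit_depth(full_well_e: float, noise_floor_e: float) -> int:
    """Smallest bit depth whose step, full_well / (2**b - 1), is <= the noise floor.

    Assumes a linear ADC spanning zero to full well.
    """
    return math.ceil(math.log2(full_well_e / noise_floor_e + 1))


if __name__ == "__main__":
    dr = exposure_dynamic_range(10_000.0, 10.0)  # hypothetical sensor values
    print(f"ratio {dr['ratio']:.0f}:1, {dr['dB']:.1f} dB, {dr['bits']:.2f} bits")
    print("minimum bit depth:", min_bit_depth(10_000.0, 10.0))
```

With these assumed numbers the exposure range is 1000:1 (60 dB), and 10 bits is the first depth whose step falls below the noise floor; more bits would mostly digitize noise.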

Trade-offs driven by interactions

Dynamic range partly determines image quality: higher bit depth allows finer detail discrimination. Lower dark current noise and higher precision typically increase sensor cost. However, not all applications require fine-grained imaging. For tasks such as parcel sorting or many electronic production inspections, an 8-bit dynamic range may be adequate. Applications like medical imaging or aerial reconnaissance often require 14-bit dynamic range.

Speed requirements

Speed is more intuitive than dynamic range: it measures how fast the sensor acquires and transfers images to the system. Speed involves both the frame rate, i.e., how many frames of pixel data per second the sensor can deliver to the system, and the exposure time needed to capture a useful image. Because the frame period can never be shorter than the exposure time, frame rate reflects both effects and is a common metric for sensor performance.
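The constraint that a frame cannot be shorter than its exposure can be sketched as a simple bound. The readout time and the two timing models below are assumptions for illustration: in the sequential model each frame is exposed and then read out; in the overlapped model the next exposure proceeds while the previous frame is read out, as some sensors allow.

```python
def max_frame_rate(exposure_s: float, readout_s: float, overlapped: bool = False) -> float:
    """Upper bound on frames per second for a given exposure and readout time.

    sequential: frame period = exposure + readout
    overlapped: frame period = max(exposure, readout)  (assumed pipelining)
    Either way, the period is never shorter than the exposure time.
    """
    if exposure_s <= 0 or readout_s < 0:
        raise ValueError("exposure must be positive and readout non-negative")
    period_s = max(exposure_s, readout_s) if overlapped else exposure_s + readout_s
    return 1.0 / period_s


if __name__ == "__main__":
    # Hypothetical timings: 2 ms exposure, 8 ms readout
    print(max_frame_rate(0.002, 0.008))                   # sequential model
    print(max_frame_rate(0.002, 0.008, overlapped=True))  # overlapped model
```

Under these assumed timings the sequential model allows about 100 fps and the overlapped model about 125 fps; once exposure exceeds readout, exposure alone sets the limit.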

In inspection applications, sensor speed determines throughput. If each image corresponds to one part under inspection, the inspection rate cannot exceed the sensor's frame rate. When imaging moving objects, short exposure times are needed to avoid motion blur. High-throughput inspection systems and imaging of fast-moving objects therefore require high-speed sensors.
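Both limits in this paragraph reduce to short calculations. The sketch assumes the object moves at constant speed across the field of view, that the field of view maps evenly onto the pixel columns, and that blur should stay within a chosen number of pixels (one pixel by default, an assumed tolerance). The conveyor speed and field of view in the usage lines are hypothetical.

```python
def mm_per_pixel(fov_mm: float, pixels_across: int) -> float:
    """Object-space size covered by one pixel."""
    return fov_mm / pixels_across


def motion_blur_px(speed_mm_s: float, exposure_s: float,
                   fov_mm: float, pixels_across: int) -> float:
    """Distance the object travels during the exposure, in pixels."""
    return speed_mm_s * exposure_s / mm_per_pixel(fov_mm, pixels_across)


def max_exposure_s(speed_mm_s: float, fov_mm: float, pixels_across: int,
                   max_blur_px: float = 1.0) -> float:
    """Longest exposure that keeps blur within max_blur_px."""
    return max_blur_px * mm_per_pixel(fov_mm, pixels_across) / speed_mm_s


def max_parts_per_minute(frames_per_s: float, images_per_part: int = 1) -> float:
    """Inspection throughput ceiling set by the sensor's frame rate."""
    return frames_per_s * 60.0 / images_per_part


if __name__ == "__main__":
    # Hypothetical line: 500 mm/s conveyor, 100 mm field of view over 1000 pixels
    print(f"max exposure: {max_exposure_s(500.0, 100.0, 1000) * 1e6:.0f} us")
    print(f"blur at 1 ms: {motion_blur_px(500.0, 0.001, 100.0, 1000):.1f} px")
    print(f"parts/min at 30 fps: {max_parts_per_minute(30.0):.0f}")
```

In this hypothetical setup each pixel covers 0.1 mm, so keeping blur under one pixel requires an exposure of about 200 microseconds or less.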

Speed and dynamic range are linked: to transfer images quickly, the sensor must digitize each pixel faster, which requires a fast, stable analog-to-digital converter. From a design and physical perspective, speed often trades off with dynamic range. Higher circuit speeds generate more heat, and dark current noise increases with temperature, so higher-speed sensors tend to have higher noise and lower dynamic range than lower-speed sensors.
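The temperature link can be illustrated with a rule of thumb often quoted for silicon sensors: dark current roughly doubles for every several degrees Celsius of temperature rise. The doubling interval (7 °C here), the reference rate, and the exposure time are assumed parameters; an actual sensor's datasheet should be consulted. The sketch also assumes dark charge follows Poisson statistics, so its noise is the square root of the mean dark electron count, and it ignores all other noise sources.

```python
import math


def dark_current_e_s(temp_c: float, ref_rate_e_s: float,
                     ref_temp_c: float = 25.0, doubling_c: float = 7.0) -> float:
    """Dark current scaled from a reference point, assuming it doubles
    every `doubling_c` degrees (a rule-of-thumb model, not a datasheet value)."""
    return ref_rate_e_s * 2.0 ** ((temp_c - ref_temp_c) / doubling_c)


def dark_shot_noise_e(rate_e_s: float, exposure_s: float) -> float:
    """RMS noise of the accumulated dark charge, assuming Poisson statistics."""
    return math.sqrt(rate_e_s * exposure_s)


if __name__ == "__main__":
    full_well_e = 10_000.0  # hypothetical full-well capacity
    exposure_s = 0.5        # hypothetical long exposure
    for temp in (25.0, 39.0, 53.0):
        rate = dark_current_e_s(temp, ref_rate_e_s=20.0)
        noise = dark_shot_noise_e(rate, exposure_s)
        print(f"{temp:4.0f} C: {rate:6.1f} e-/s, noise {noise:5.2f} e-, "
              f"DR {full_well_e / noise:7.0f}:1")
```

In this model every 14 °C rise quadruples dark current, doubles dark noise, and so halves the dark-noise-limited dynamic range.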

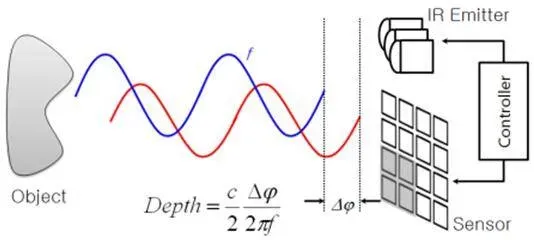

Responsivity and its relation to speed

Higher frame rates reduce available exposure time per frame. To maintain signal level with shorter exposures, designers can increase scene illumination or choose a sensor with higher responsivity.
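Under the simplifying assumption that, below saturation, the signal is proportional to scene illumination × exposure time × sensor responsivity, the required compensation can be computed directly. The function name and the exposure figures in the usage lines are hypothetical.

```python
def required_illumination_scale(old_exposure_s: float, new_exposure_s: float,
                                old_responsivity: float = 1.0,
                                new_responsivity: float = 1.0) -> float:
    """Factor by which illumination must change to keep the same signal level,
    assuming signal is proportional to illumination * exposure * responsivity."""
    return (old_exposure_s / new_exposure_s) * (old_responsivity / new_responsivity)


if __name__ == "__main__":
    # Shortening exposure from 10 ms to 2.5 ms, e.g. to raise the frame rate
    print(required_illumination_scale(0.010, 0.0025))  # same sensor
    print(required_illumination_scale(0.010, 0.0025,
                                      new_responsivity=2.0))  # twice as responsive
```

A fourfold shorter exposure needs four times the light with the same sensor, or only twice the light with a sensor twice as responsive.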

Responsivity is the signal amplitude (voltage) generated under given exposure conditions. Three factors control responsivity in an image sensor: quantum efficiency (electrons generated per photon), the capacitance of the sensor output stage that converts stored charge (q) to voltage (V = q/C), and the gain of the sensor output amplifier. Near the noise-equivalent exposure level, however, raising amplifier gain alone does not improve inherent responsivity, because the amplifier boosts the noise along with the signal.
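The three factors multiply along the signal chain. This sketch follows the text: electrons = quantum efficiency × photons, node voltage V = q/C with q the collected charge, then amplifier gain. The capacitance, quantum efficiency, and photon count in the usage lines are hypothetical. The last function illustrates the gain point under the assumption that the noise is already present at the amplifier input, so gain scales signal and noise by the same factor.

```python
ELEMENTARY_CHARGE_C = 1.602176634e-19  # charge of one electron, in coulombs


def conversion_gain_uv_per_e(node_capacitance_f: float) -> float:
    """Voltage produced per stored electron at the sense node, in microvolts."""
    return ELEMENTARY_CHARGE_C / node_capacitance_f * 1e6


def output_voltage(photons: float, quantum_efficiency: float,
                   node_capacitance_f: float, amp_gain: float = 1.0) -> float:
    """Sensor output voltage for a given number of incident photons."""
    electrons = photons * quantum_efficiency          # photon -> electron
    charge_c = electrons * ELEMENTARY_CHARGE_C        # stored charge q
    return charge_c / node_capacitance_f * amp_gain   # V = q / C, then gain


def output_snr(signal_e: float, noise_e: float, amp_gain: float) -> float:
    """SNR after the amplifier when noise is already present at its input."""
    return (signal_e * amp_gain) / (noise_e * amp_gain)


if __name__ == "__main__":
    c_node = 1.6e-15  # hypothetical 1.6 fF sense-node capacitance
    print(f"{conversion_gain_uv_per_e(c_node):.1f} uV/e-")
    print(f"{output_voltage(1000, 0.6, c_node, amp_gain=2.0) * 1e3:.1f} mV")
    # At the noise-equivalent level (signal == noise), gain leaves SNR unchanged
    print(output_snr(10.0, 10.0, 1.0), output_snr(10.0, 10.0, 8.0))
```

A smaller sense-node capacitance raises the voltage per electron, which is one reason capacitance appears alongside quantum efficiency as a responsivity lever.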

Designers must trade off dynamic range, speed, and responsivity. High speed and low illumination increase noise and reduce dynamic range. Where the sensor's dynamic range allows it, increasing illumination can compensate for lower responsivity and still deliver the required image detail. Every sensor's physical design inevitably strikes some balance among these three factors.

Resolution and pixel pitch

Beyond the three core factors, resolution and pixel pitch also affect image quality and interact with dynamic range, speed, and responsivity. Resolution refers to the number of pixels forming an image and reflects sensor size and pixel pitch. Required sensor resolution depends on field of view, working distance, sensor size, pixel pitch, and the spatial detail the system must capture. Higher resolution requires faster clocking to achieve the same frame rate, so resolution strongly impacts speed.
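A rough resolution estimate divides the field of view by the smallest feature to be detected and multiplies by the number of pixels each feature should span. The two-pixels-per-feature default below is an assumed minimum (a sampling-theorem lower bound; many applications use more). The second function shows why resolution drives speed: at a fixed frame rate, the pixel rate the sensor must clock out grows with the pixel count. All dimensions are hypothetical.

```python
import math


def required_pixels(fov_mm: float, smallest_feature_mm: float,
                    pixels_per_feature: float = 2.0) -> int:
    """Pixels needed along one axis to place `pixels_per_feature` pixels
    across the smallest feature (assumed sampling criterion)."""
    return math.ceil(fov_mm / smallest_feature_mm * pixels_per_feature)


def pixel_rate(width_px: int, height_px: int, frames_per_s: float) -> float:
    """Pixels per second the sensor must read out and digitize."""
    return width_px * height_px * frames_per_s


if __name__ == "__main__":
    # Hypothetical: 200 mm x 150 mm field of view, 0.25 mm smallest feature
    w = required_pixels(200.0, 0.25)
    h = required_pixels(150.0, 0.25)
    print(f"need at least {w} x {h} pixels")
    print(f"pixel rate at 60 fps: {pixel_rate(w, h, 60) / 1e6:.1f} Mpx/s")
```

Doubling the linear resolution quadruples the pixel count, and with it the pixel rate needed to hold the same frame rate.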

Pixel pitch defines the size of each pixel and, together with sensor size, determines resolution. For a fixed sensor size, a smaller pixel pitch yields higher resolution. Pixel pitch also affects responsivity: smaller pixels collect fewer photons because each pixel has a smaller active area.
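For a fixed sensor width, the pixel count along that axis is roughly the width divided by the pitch, while the light each pixel collects scales with its area. The sketch assumes square pixels with the same fill factor and quantum efficiency at both pitches, which real designs may not share; the sensor width is hypothetical.

```python
def pixels_across(sensor_width_um: float, pitch_um: float) -> int:
    """Whole pixels that fit across the sensor width."""
    return int(sensor_width_um // pitch_um)


def relative_light_per_pixel(new_pitch_um: float, old_pitch_um: float) -> float:
    """Photon collection at a new pitch relative to an old one, assuming square
    pixels and identical fill factor, so collection scales with pitch squared."""
    return (new_pitch_um / old_pitch_um) ** 2


if __name__ == "__main__":
    width_um = 10_240.0  # hypothetical 10.24 mm wide sensor
    for pitch in (5.0, 2.5):
        print(f"{pitch} um pitch: {pixels_across(width_um, pitch)} px across, "
              f"{relative_light_per_pixel(pitch, 5.0):.2f}x light per pixel")
```

Halving the pitch doubles the resolution along each axis but leaves each pixel about a quarter of the light, which is the responsivity cost described above.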

System-level matching

All sensor characteristics must be considered alongside other camera components. Lens resolution is characterized by the modulation transfer function (MTF) and must be matched to the sensor's pixel pitch to achieve optimal imaging. For example, a lens that resolves detail only down to about 5 micrometers, mounted on a sensor with a 3-micrometer pixel pitch, will not resolve the finest black-and-white line patterns the sensor could otherwise capture; those patterns may appear as shades of gray. When selecting a sensor, ensure that other system components are matched to the sensor's capabilities.
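One common way to check the match is to compare the sensor's Nyquist frequency, the finest line-pair pattern its pixel grid can sample (two pixels per line pair), with the lens's resolving limit in line pairs per millimeter at whatever MTF threshold the lens vendor specifies. The lens figure used here is hypothetical: a lens that resolves lines about 5 micrometers wide handles roughly 100 lp/mm (one 10-micrometer line pair), which falls short of a 3-micrometer-pitch sensor's Nyquist frequency.

```python
def sensor_nyquist_lp_mm(pitch_um: float) -> float:
    """Highest line-pair frequency the pixel grid can sample (2 pixels per line pair)."""
    return 1000.0 / (2.0 * pitch_um)


def lens_limits_system(lens_limit_lp_mm: float, pitch_um: float) -> bool:
    """True if the lens resolves less detail than the sensor can sample."""
    return lens_limit_lp_mm < sensor_nyquist_lp_mm(pitch_um)


if __name__ == "__main__":
    pitch = 3.0          # um, the pixel pitch from the example in the text
    lens_lp_mm = 100.0   # hypothetical lens limit (10 um per line pair)
    print(f"sensor Nyquist: {sensor_nyquist_lp_mm(pitch):.1f} lp/mm")
    print("lens is the bottleneck:", lens_limits_system(lens_lp_mm, pitch))
```

A 3-micrometer pitch gives a Nyquist frequency of about 167 lp/mm, so the assumed 100 lp/mm lens, not the sensor, caps the system's resolution.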

Conclusion

The most important task is to fully understand application requirements for dynamic range, speed, and responsivity. Those requirements determine acceptable performance ranges and ultimately define the needs for other system components.