Overview

To achieve a unified representation of multi-sensor data, conventional approaches include the following:

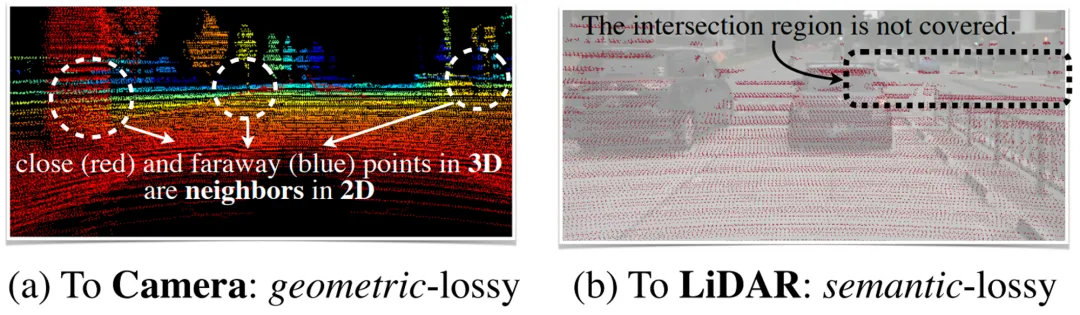

1) LiDAR-to-Camera

LiDAR point clouds are projected onto the image plane and processed with 2D CNNs. The projection introduces severe geometric distortion (see figure a): points that are far apart in 3D space can land on neighboring pixels. This degrades performance on 3D object recognition and other geometry-oriented tasks.
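The distortion can be illustrated with a minimal pinhole-projection sketch. It assumes the points are already expressed in the camera frame; the intrinsic matrix `K`, the helper `project_to_image`, and the two sample points are hypothetical values chosen for illustration, not taken from the paper.

```python
import numpy as np

def project_to_image(points_cam: np.ndarray, K: np.ndarray) -> np.ndarray:
    """Project (M, 3) points in the camera frame to (M, 2) pixel coordinates."""
    uvw = points_cam @ K.T            # (M, 3) homogeneous pixel coordinates
    return uvw[:, :2] / uvw[:, 2:3]   # perspective divide by depth

# Hypothetical intrinsics: focal length 1000 px, principal point (800, 450).
K = np.array([[1000.0, 0.0, 800.0],
              [0.0, 1000.0, 450.0],
              [0.0, 0.0, 1.0]])

# Two points about 30 m apart that lie on almost the same camera ray.
points = np.array([[1.0, 0.0, 10.0],
                   [4.04, 0.0, 40.0]])
pixels = project_to_image(points, K)

pixel_gap = float(np.linalg.norm(pixels[0] - pixels[1]))    # distance in the image
metric_gap = float(np.linalg.norm(points[0] - points[1]))   # distance in 3D
```

Here the two points end up one pixel apart in the image even though they are roughly 30 m apart in 3D, which is the kind of neighborhood a 2D convolution cannot tell apart.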

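2) Camera-to-LiDAR

Semantic labels or CNN features are used to augment the LiDAR points, and a LiDAR-based detector then predicts 3D bounding boxes. Because camera features survive only where a LiDAR point lands, this point-level fusion discards most of the image (the paper reports that only about 5% of camera features are matched to a point for a typical 32-beam LiDAR). It therefore loses semantic detail and performs poorly on semantic-oriented tasks (see figure b).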
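A minimal sketch of point-level fusion makes the loss visible. It assumes each LiDAR point has already been projected to an integer pixel; the function `paint_points`, the tiny 4 x 6 score map, and the pixel coordinates are hypothetical.

```python
import numpy as np

def paint_points(points: np.ndarray, pixels: np.ndarray, sem_map: np.ndarray) -> np.ndarray:
    """Append per-pixel semantic scores to each LiDAR point.

    points:  (M, 3) LiDAR points
    pixels:  (M, 2) integer (u, v) pixel coordinates of each point
    sem_map: (H, W, C) per-pixel semantic scores
    """
    u, v = pixels[:, 0], pixels[:, 1]
    return np.concatenate([points, sem_map[v, u]], axis=1)  # (M, 3 + C)

H, W, C = 4, 6, 2
sem_map = np.arange(H * W * C, dtype=np.float32).reshape(H, W, C)
points = np.array([[1.0, 0.0, 10.0], [2.0, 0.5, 20.0], [3.0, 1.0, 30.0]])
pixels = np.array([[0, 0], [5, 3], [2, 1]])  # hypothetical projections

painted = paint_points(points, pixels, sem_map)

# Fraction of image pixels whose semantics actually reach the detector.
used_fraction = len(np.unique(pixels, axis=0)) / (H * W)
```

Only the pixels hit by a point contribute; in this toy case that is 3 of 24 pixels, and every other image feature is dropped.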

BEV Fusion Method

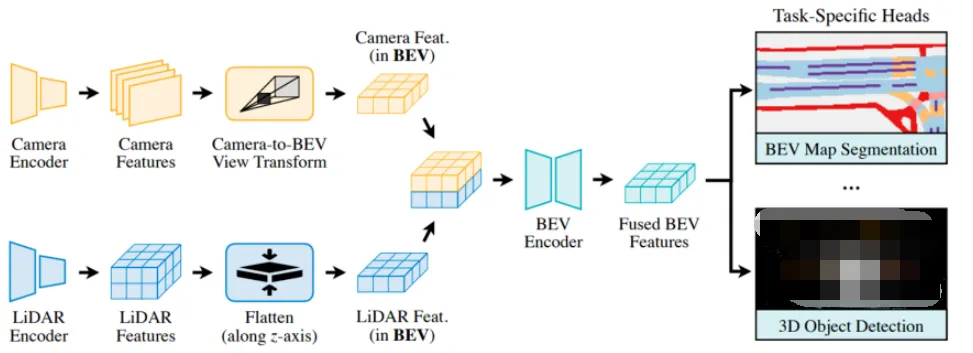

BEVFusion implements a unified multimodal feature representation in bird's-eye view (BEV) space while preserving geometric structure and semantic information.

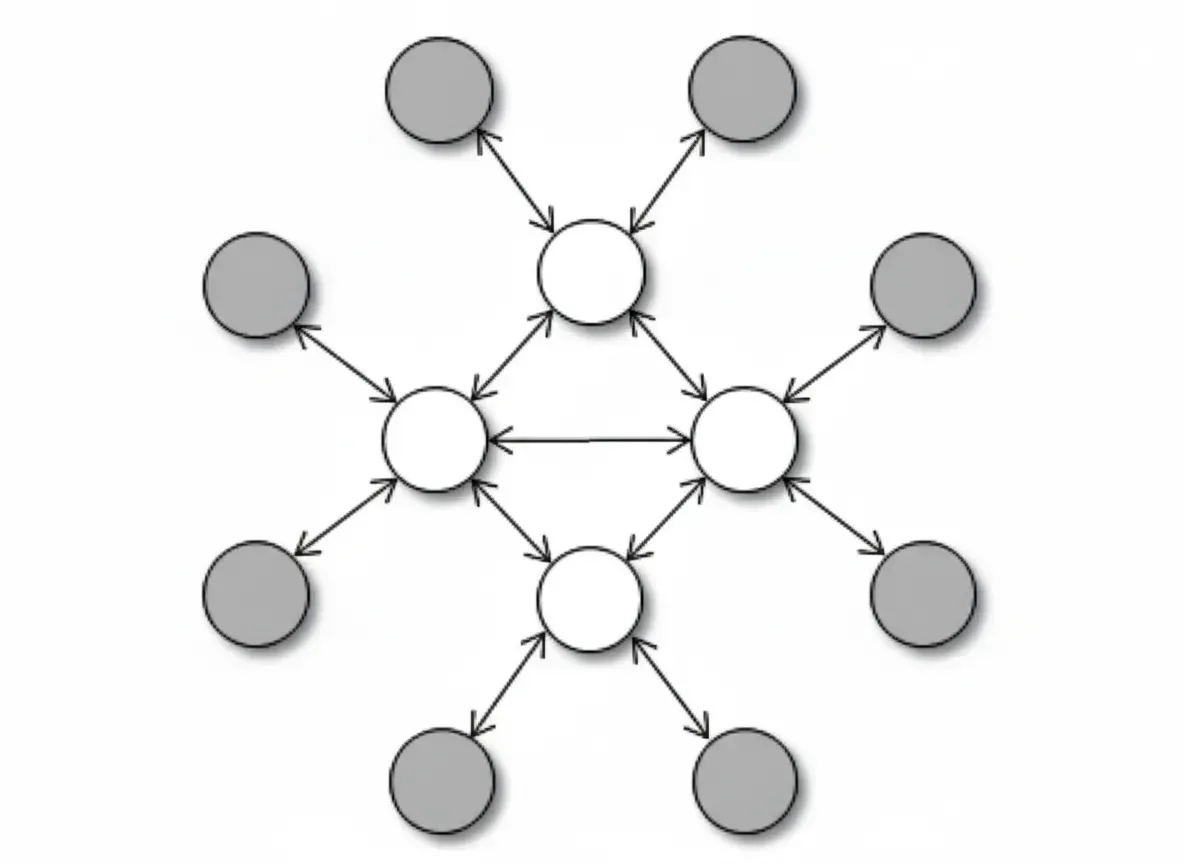

Modality-specific encoders extract features from each input, preserving both geometric and semantic content. The features are transformed into a shared BEV representation, and a fully-convolutional BEV encoder then fuses them and mitigates local misalignment between modalities. Task-specific heads are added on top to support various 3D scene understanding tasks.

With the optimized BEV pooling module, the camera-to-BEV transformation runs about 40x faster. On BEV map segmentation, the method reports a 6% mIoU improvement over camera-only models and a 13.6% mIoU improvement over LiDAR-only models.

Camera-to-BEV Transformation

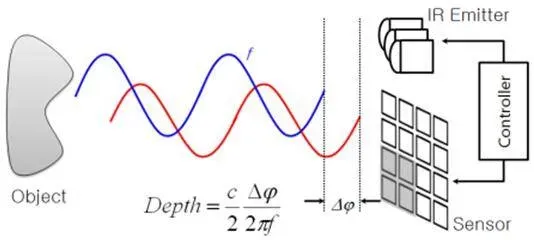

The camera-to-BEV transformation must first resolve the depth of each feature pixel, which a single image leaves ambiguous. The paper adopts the LSS (Lift, Splat, Shoot) approach and predicts a discrete depth distribution for every pixel.

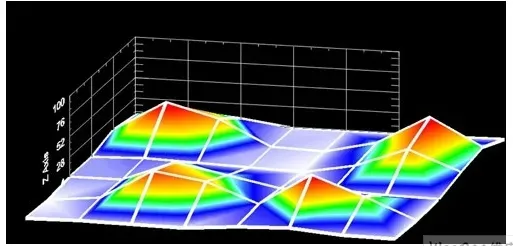

For each feature pixel Pi, D discrete points are placed along the camera ray, so each pixel maps to D spatial locations. The predicted depth distribution assigns each discrete point a normalized probability, which rescales the pixel's feature at that point. All camera features together form an N×H×W×D camera feature point cloud, where N is the number of cameras and H×W is the spatial size of each camera feature map. The point cloud is quantized along the x and y axes with a step size of r, and BEV pooling aggregates all features that fall into the same r×r BEV grid cell. Finally, the features are flattened along the z axis.
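The lift step can be sketched as an outer product between each pixel's depth distribution and its feature vector. This is a NumPy illustration under assumed shapes, not the paper's implementation; the function name `lift`, the random inputs, and the channel count C are hypothetical.

```python
import numpy as np

def lift(features: np.ndarray, depth_logits: np.ndarray) -> np.ndarray:
    """features: (N, H, W, C), depth_logits: (N, H, W, D) -> (N, H, W, D, C)."""
    # Softmax over the D depth bins gives a normalized distribution per pixel.
    shifted = depth_logits - depth_logits.max(axis=-1, keepdims=True)
    depth_prob = np.exp(shifted)
    depth_prob /= depth_prob.sum(axis=-1, keepdims=True)
    # Outer product: each of the D points on a ray carries the pixel feature
    # scaled by the probability of that depth.
    return depth_prob[..., :, None] * features[..., None, :]

rng = np.random.default_rng(0)
N, H, W, D, C = 2, 4, 5, 6, 3          # hypothetical sizes
feats = rng.normal(size=(N, H, W, C))
frustum = lift(feats, rng.normal(size=(N, H, W, D)))
```

Because the probabilities along each ray sum to one, summing `frustum` over the depth axis recovers the original pixel features.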

BEV pooling is computationally expensive because the camera feature point cloud is very large. The authors propose two optimizations to address this: precomputation and interval reduction.

Precomputation

Camera intrinsics and extrinsics are fixed after calibration, as is the sampling interval for the D discrete points along each ray. The coordinates of every point in the camera feature point cloud, and therefore the BEV grid index of every point, are constant. These values can be precomputed once and reused at runtime.
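A minimal sketch of the idea, assuming the BEV coordinates of all frustum points are known offline: grid indices and a sort order are computed once, and each frame only gathers features with the cached order. The function `precompute_ranks`, the 4 x 4 grid, and the sample coordinates are hypothetical.

```python
import numpy as np

def precompute_ranks(xy: np.ndarray, x_min: float, y_min: float, r: float, n_cells: int):
    """xy: (P, 2) fixed BEV coordinates of all frustum points.

    Returns the gather order of in-range points (sorted by grid index)
    and the flat grid index of each gathered point.
    """
    ij = np.floor((xy - np.array([x_min, y_min])) / r).astype(np.int64)
    keep = np.all((ij >= 0) & (ij < n_cells), axis=1)   # drop out-of-range points
    flat = ij[:, 0] * n_cells + ij[:, 1]
    order = np.flatnonzero(keep)
    order = order[np.argsort(flat[order], kind="stable")]
    return order, flat[order]

# Offline, once per calibration (hypothetical 4 x 4 grid with 0.5 m cells).
xy = np.array([[0.7, 0.2], [0.1, 0.1], [1.9, 1.9], [0.6, 0.3], [5.0, 0.0]])
order, sorted_idx = precompute_ranks(xy, x_min=0.0, y_min=0.0, r=0.5, n_cells=4)

# Online, every frame: only a gather with the cached order is needed.
feats = np.array([[1.0], [2.0], [3.0], [4.0], [5.0]])
sorted_feats = feats[order]
```

After this step, points that share a BEV cell are contiguous in `sorted_feats`, which is the layout the aggregation step relies on.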

Interval Reduction

BEV pooling must sum the features of all points that share a grid index. LSS does this with a prefix sum: after the points are sorted by grid index, a running sum is computed over all of them, and at each position where the index changes, the prefix-sum value at the previous boundary is subtracted to obtain the aggregated result for that grid.
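The prefix-sum scheme can be sketched in a few lines of NumPy. The inputs are assumed to be sorted by grid index already; the function name `pool_prefix_sum` and the sample values are hypothetical.

```python
import numpy as np

def pool_prefix_sum(sorted_feats: np.ndarray, sorted_idx: np.ndarray):
    """Sum features sharing a grid index; inputs must be sorted by index."""
    prefix = np.cumsum(sorted_feats, axis=0)
    # A point closes its cell when the next point has a different index.
    is_last = np.ones(len(sorted_idx), dtype=bool)
    is_last[:-1] = sorted_idx[1:] != sorted_idx[:-1]
    ends = prefix[is_last]
    # Subtract the prefix sum at the previous boundary to isolate each cell.
    pooled = ends - np.concatenate([np.zeros_like(ends[:1]), ends[:-1]])
    return sorted_idx[is_last], pooled

sorted_idx = np.array([0, 4, 4, 15])
sorted_feats = np.array([[2.0], [1.0], [4.0], [3.0]])
cells, pooled = pool_prefix_sum(sorted_feats, sorted_idx)
```

Note that the running sum is computed for every point, although only the values at the boundaries are used.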

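A prefix sum is a poor fit for the GPU: it requires a tree reduction and produces many partial sums that are never used, because only the values at index boundaries matter. The paper instead implements a specialized GPU kernel that parallelizes directly over BEV grids, with each thread computing the sum over one grid's interval and writing the result back. This avoids the overhead of computing and storing prefix sums and significantly accelerates the operation.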
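The per-grid computation can be sketched as follows. This is a serial NumPy analogue of the idea, not the paper's CUDA kernel: each loop iteration stands in for one GPU thread, and the function name `interval_reduce` and the sample data are hypothetical.

```python
import numpy as np

def interval_reduce(sorted_feats: np.ndarray, sorted_idx: np.ndarray, n_cells: int) -> np.ndarray:
    """One independent sum per BEV cell over its contiguous interval."""
    bev = np.zeros((n_cells, sorted_feats.shape[1]), dtype=sorted_feats.dtype)
    if len(sorted_idx) == 0:
        return bev
    # Interval starts are the positions where the sorted grid index changes.
    starts = np.flatnonzero(np.r_[True, sorted_idx[1:] != sorted_idx[:-1]])
    ends = np.r_[starts[1:], len(sorted_idx)]
    # Each iteration reads only its own interval and writes only its own cell,
    # so the iterations are independent and could run as separate GPU threads.
    for s, e in zip(starts, ends):
        bev[sorted_idx[s]] = sorted_feats[s:e].sum(axis=0)
    return bev

sorted_idx = np.array([0, 4, 4, 15])
sorted_feats = np.array([[2.0], [1.0], [4.0], [3.0]])
bev = interval_reduce(sorted_feats, sorted_idx, n_cells=16)
```

No intermediate running sum is stored; the only output is one aggregated feature per occupied cell.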

The optimized BEV pooling speeds up the camera-to-BEV transformation by 40x, reducing its latency from over 500 ms to about 12 ms.

Fully-Convolutional Fusion

Once both modalities are in BEV space, LiDAR and camera BEV features can be fused with an elementwise operator such as concatenation. However, because of depth estimation errors, the LiDAR and camera BEV features may be spatially misaligned. A convolution-based BEV encoder is therefore applied after concatenation to compensate for this local misalignment.

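```python
from typing import List

import torch
from torch import nn


class ConvFuser(nn.Sequential):
    """Concatenate per-modality BEV features and fuse them with a 3x3 conv block."""

    def __init__(self, in_channels: List[int], out_channels: int) -> None:
        # in_channels lists the channel count of each modality's BEV feature map.
        super().__init__(
            nn.Conv2d(sum(in_channels), out_channels, 3, padding=1, bias=False),
            nn.BatchNorm2d(out_channels),
            nn.ReLU(True),
        )
        self.in_channels = in_channels
        self.out_channels = out_channels

    def forward(self, inputs: List[torch.Tensor]) -> torch.Tensor:
        # Stack the modalities along the channel axis, then run the conv block.
        return super().forward(torch.cat(inputs, dim=1))
```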

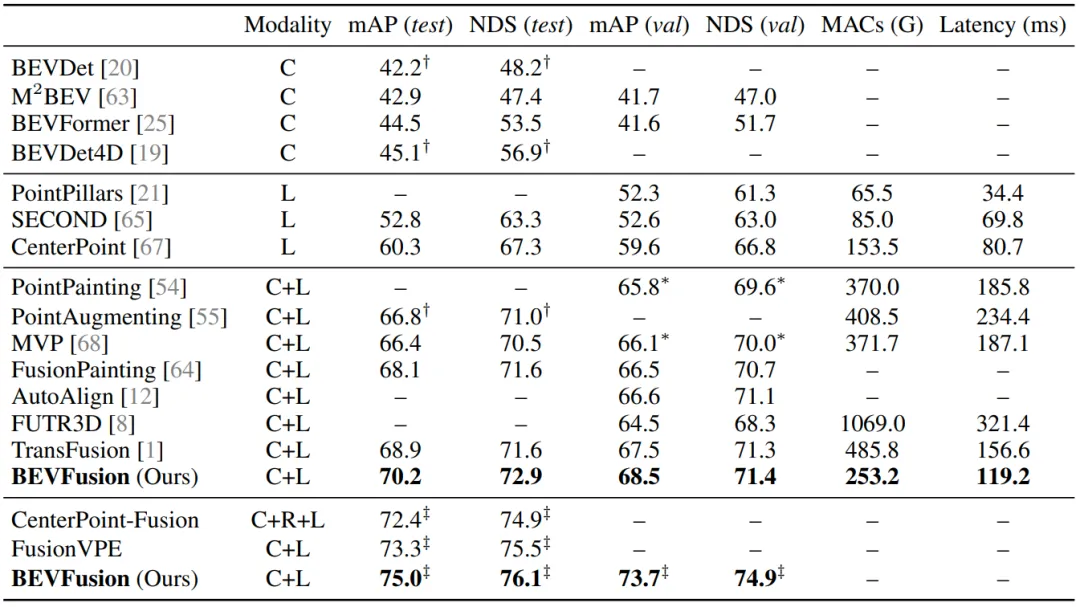

Evaluation

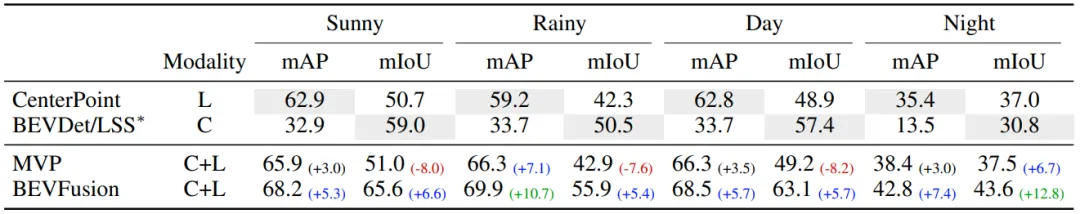

In the results table, C denotes the camera modality and L denotes the LiDAR modality. MACs (multiply-accumulate operations) measure computational cost, and latency is the inference time per sample. BEVFusion combines the camera and LiDAR modalities and achieves state-of-the-art performance while keeping computation and latency relatively low.
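As a rough guide to what a MAC count means, the cost of a dense 2D convolution can be computed by hand. The layer sizes below (80 camera plus 256 LiDAR channels fused to 256 channels on a 180 x 180 BEV map) are hypothetical values for illustration and are not figures from the results table.

```python
def conv2d_macs(c_in: int, c_out: int, kernel: int, h_out: int, w_out: int) -> int:
    """MACs of a dense 2D convolution (no bias, groups=1)."""
    # Every output element needs c_in * kernel * kernel multiply-accumulates.
    return c_in * c_out * kernel * kernel * h_out * w_out

# Hypothetical 3x3 fusion layer on a 180 x 180 BEV map.
macs = conv2d_macs(80 + 256, 256, 3, 180, 180)
gmacs = macs / 1e9
```

Under these assumptions the single layer costs about 25 GMACs, which shows why the resolution and channel width of the BEV map dominate the fusion cost.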

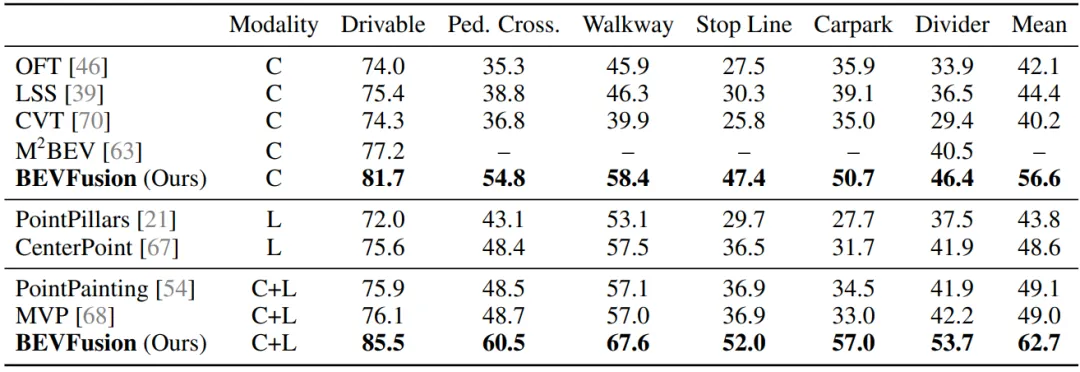

On the nuScenes BEV map segmentation task, BEVFusion achieves state-of-the-art results and improves segmentation performance across different map elements.
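For reference, mean IoU over map elements can be sketched as below. The sketch assumes one binary mask per map element; the function name `mean_iou` and the 2 x 2 toy masks are hypothetical, and the benchmark's exact evaluation protocol (for example, how predictions are thresholded) is not reproduced here.

```python
import numpy as np

def mean_iou(pred: np.ndarray, target: np.ndarray) -> float:
    """pred, target: (K, H, W) boolean masks, one channel per map element."""
    inter = np.logical_and(pred, target).sum(axis=(1, 2))
    union = np.logical_or(pred, target).sum(axis=(1, 2))
    # Guard against division by zero; a class absent from both masks scores 0.
    iou = inter / np.maximum(union, 1)
    return float(iou.mean())

pred = np.array([[[1, 1], [0, 0]],
                 [[0, 0], [1, 1]]], dtype=bool)
target = np.array([[[1, 0], [0, 0]],
                   [[0, 0], [1, 1]]], dtype=bool)
score = mean_iou(pred, target)
```

In this toy case the first element has IoU 0.5 and the second has IoU 1.0, so the mean is 0.75.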

BEVFusion performs robustly across weather and lighting conditions, including clear, rainy, daytime, and nighttime scenes.

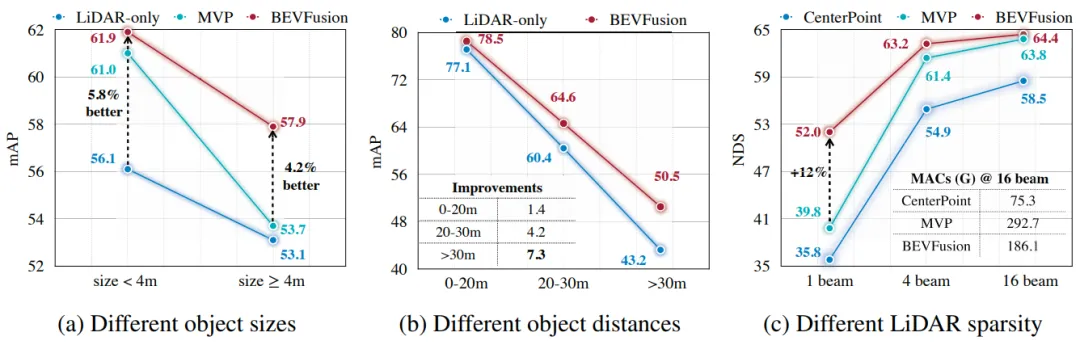

The method improves detection performance for large and small objects as well as for distant and nearby objects, and it maintains good performance even with sparse LiDAR beams.