1. Introduction

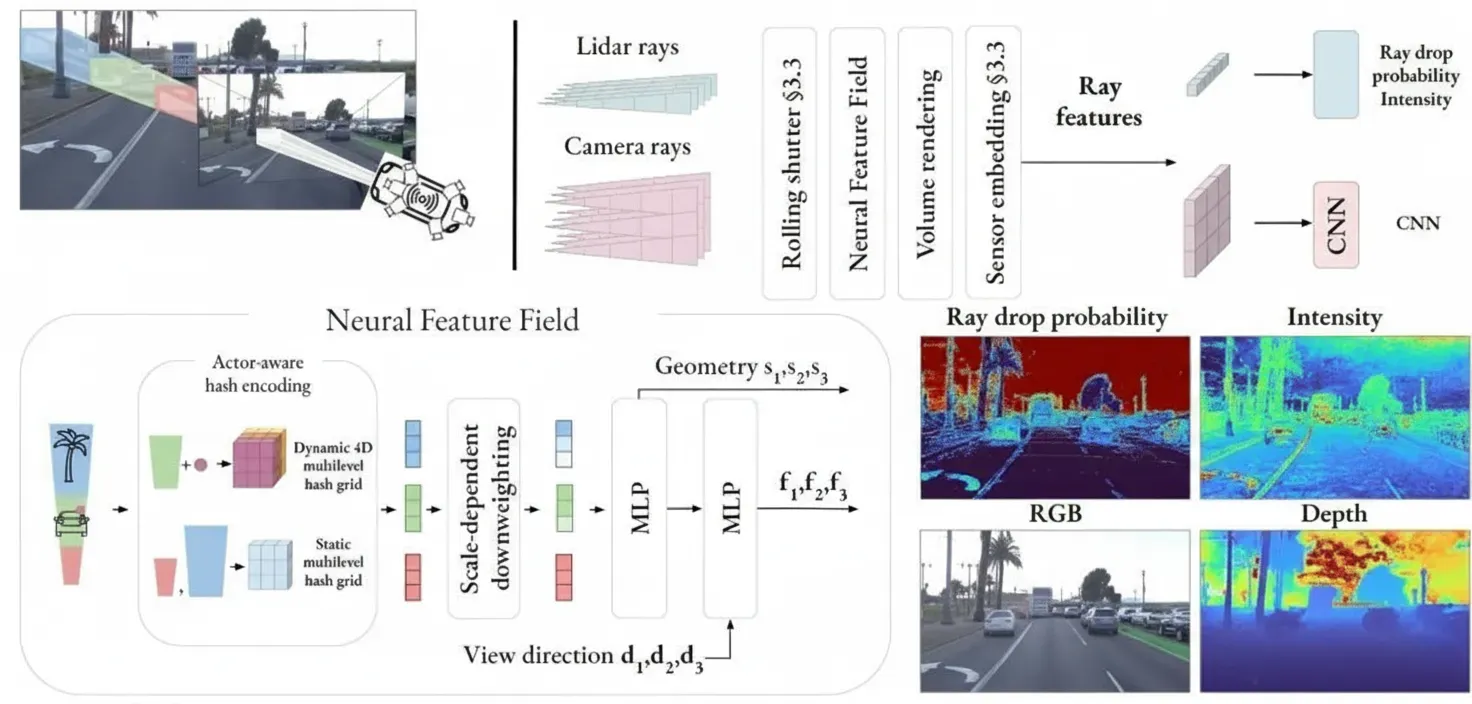

This article presents NeuRAD, a NeRF tailored for dynamic autonomous driving data. The method uses a simple network design and models a wide range of camera and lidar sensor phenomena.

2. Abstract

Neural Radiance Fields (NeRFs) have seen broad application in the autonomous driving (AD) community. Recent approaches have demonstrated NeRFs' potential for closed-loop simulation, AD system testing, and advanced training data augmentation. However, existing methods often require long training times or dense semantic supervision, or they lack generalizability, which hinders large-scale adoption in AD. In this work, we propose NeuRAD, a robust novel view synthesis method designed for dynamic AD data. Our approach uses a simple network architecture, models a wide range of camera and lidar sensor effects, including rolling shutter, beam divergence, and ray dropping, and works on multiple datasets out of the box. We validate performance on five popular AD datasets and achieve state-of-the-art results. To encourage further development, we will release the NeuRAD source code.

3. Results

NeuRAD is a neural rendering method tailored to dynamic driving scenes. It enables modification of the ego vehicle and other road users' poses, and allows adding or removing actors. These capabilities make NeuRAD suitable as a component in sensor-realistic closed-loop simulators or data augmentation engines.

4. Method

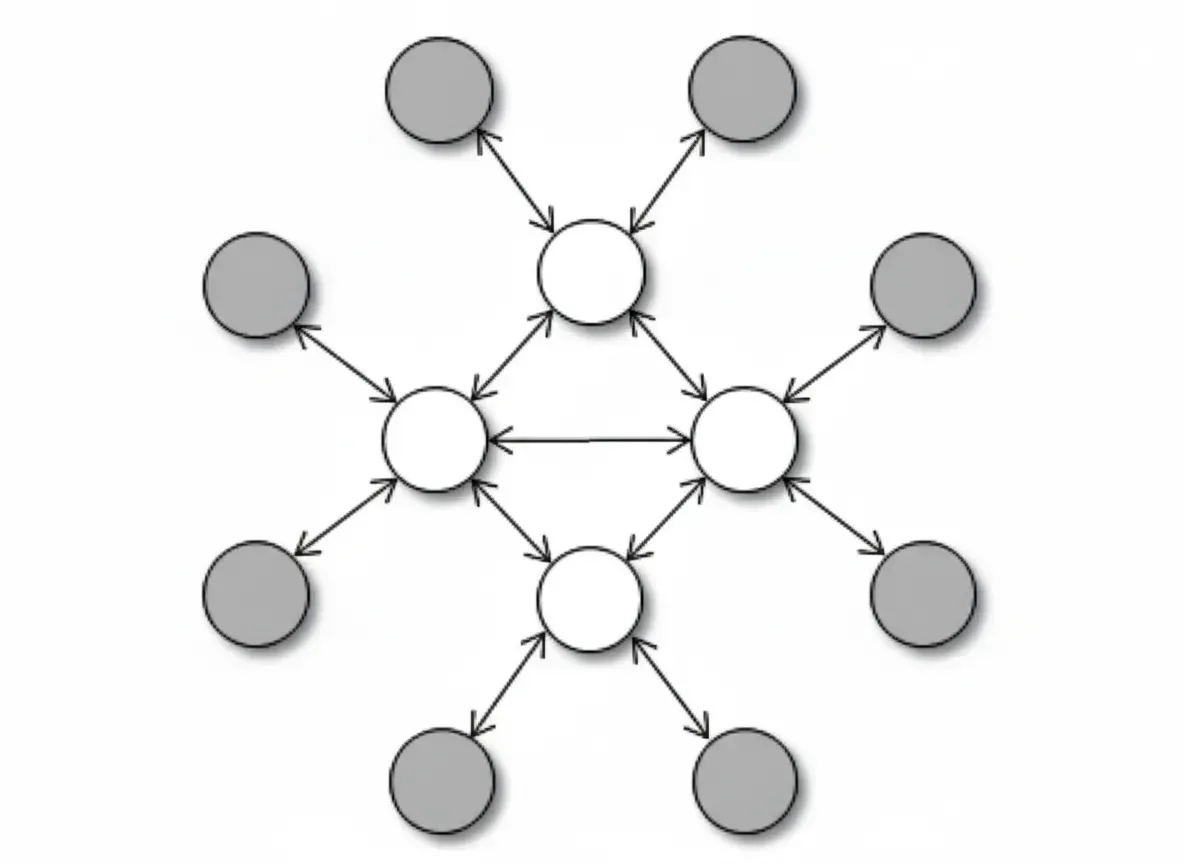

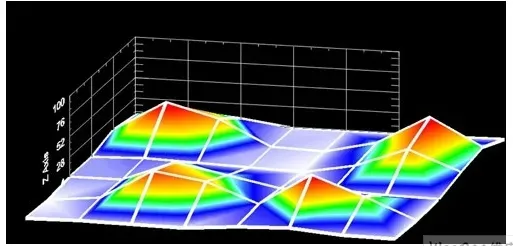

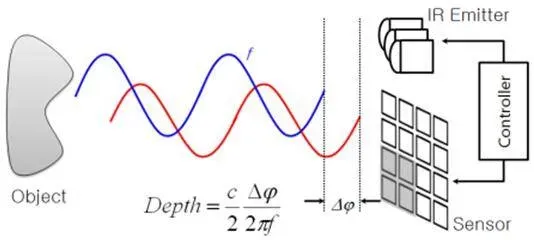

NeuRAD learns a joint neural feature field for the static and dynamic elements of driving scenes, where the two are distinguished solely by actor-specific hash encodings. Points that fall inside an actor bounding box are transformed into actor-local coordinates and, together with the actor index, used to query a 4D hash grid. An upsampling CNN decodes the volume-rendered ray-level features into RGB values, and MLPs decode them into lidar ray drop probability and intensity.
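The routing step described above (deciding whether a sample point belongs to an actor and, if so, expressing it in actor-local coordinates) can be illustrated with a minimal sketch. This is not the NeuRAD implementation: `ActorBox`, `to_actor_local`, and `route_point` are hypothetical names, and the sketch assumes yaw-only oriented boxes at a single fixed timestamp, whereas the actual method may use full 6-DoF, time-dependent actor poses.

```python
import math
from dataclasses import dataclass


@dataclass
class ActorBox:
    """Hypothetical yaw-only oriented 3D bounding box (an assumption for this sketch)."""
    index: int      # actor index, used alongside the local xyz as the 4th query dimension
    center: tuple   # (x, y, z) box center in world coordinates
    size: tuple     # (length, width, height) along the box's local x, y, z axes
    yaw: float      # heading: rotation about the world z axis, in radians


def to_actor_local(point, box):
    """Express a world-space point in the box's local frame: R(yaw)^T (p - center)."""
    dx = point[0] - box.center[0]
    dy = point[1] - box.center[1]
    dz = point[2] - box.center[2]
    c, s = math.cos(box.yaw), math.sin(box.yaw)
    # Inverse (transpose) of the yaw rotation applied to the offset from the center.
    return (c * dx + s * dy, -s * dx + c * dy, dz)


def route_point(point, boxes):
    """Return ("dynamic", actor_index, local_xyz) for the first box containing the
    point, or ("static", None, world_xyz) if no box contains it."""
    for box in boxes:
        local = to_actor_local(point, box)
        half_extents = (box.size[0] / 2, box.size[1] / 2, box.size[2] / 2)
        if all(abs(v) <= h for v, h in zip(local, half_extents)):
            return "dynamic", box.index, local
    return "static", None, tuple(point)


# Usage: one actor, 4 m long and 2 m wide, rotated 90 degrees about z.
boxes = [ActorBox(index=3, center=(10.0, 0.0, 0.0), size=(4.0, 2.0, 2.0), yaw=math.pi / 2)]
kind_a, idx_a, local_a = route_point((10.0, 1.5, 0.0), boxes)   # along the actor's length
kind_b, idx_b, local_b = route_point((11.5, 0.0, 0.0), boxes)   # beyond the actor's width
```

In this sketch, a point routed as "dynamic" would yield the query `(local_x, local_y, local_z, actor_index)` for the 4D hash grid, while a "static" point would query the static scene encoding with its world coordinates. How overlapping boxes are resolved is left open here; the loop simply takes the first match.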

5. Conclusion

This work proposes NeuRAD, a neural simulator specifically designed for dynamic autonomous driving data. The model jointly handles lidar and camera data in 360 degrees, decomposes the scene into static and dynamic elements, and enables the creation of editable digital clones of real driving scenarios. NeuRAD incorporates novel modeling of several sensor phenomena, including beam divergence, ray dropping, and rolling shutter, which improves novel view synthesis quality.