Introduction

Image Radar, also called 4D millimeter-wave radar, measures 3D position plus radial velocity. Conventional 3D radar measures only 2D position plus velocity, so the added dimension is height. Image Radar keeps the characteristics of traditional 3D radar while resolving the problems caused by missing height information. Tesla's integration of Image Radar into its next-generation V4 driving hardware drew industry attention. Image Radar is inexpensive and keeps working in adverse weather such as rain and snow, so perception and localization solutions built on it are likely to be an active research area in the coming years.

Hardware Principles and Signal Processing

Image Radar hardware

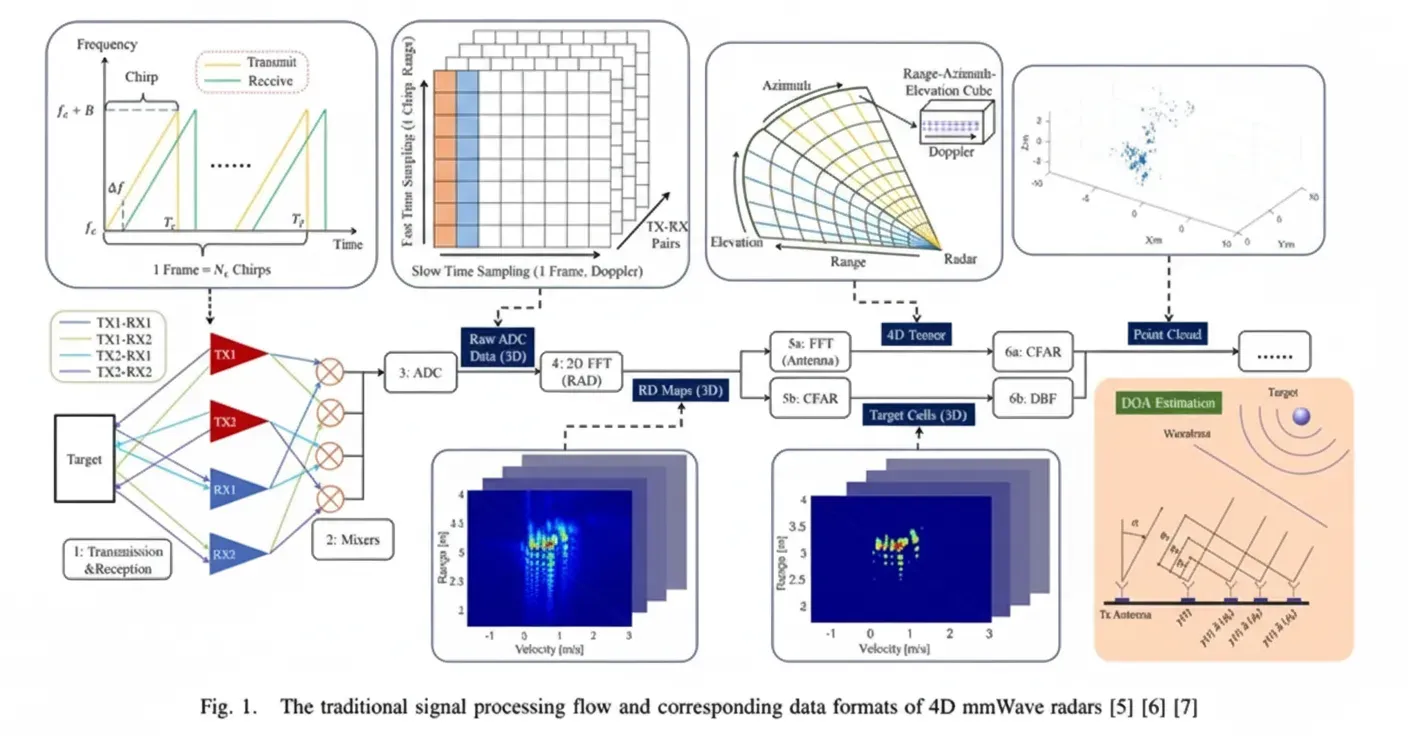

The main hardware difference between Image Radar and traditional 3D millimeter-wave radar is the additional vertical antenna arrangement, which enables elevation measurement and therefore height estimation, while introducing new challenges for signal processing.

Measurement principles

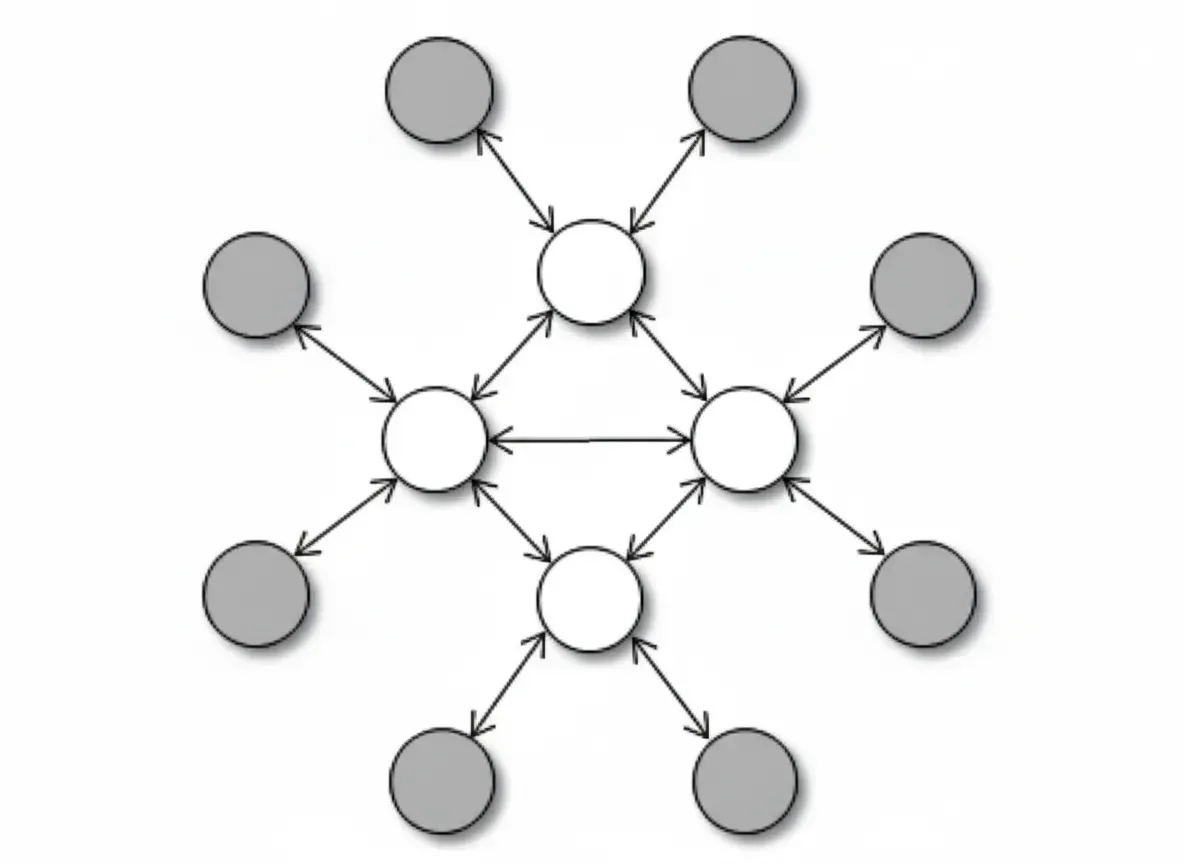

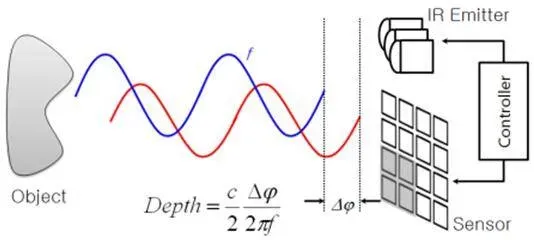

Speed is measured through the Doppler effect, and range is measured from the time delay between the transmitted and received signals. Azimuth estimation mainly uses multiple-input multiple-output (MIMO) techniques: n transmitters (TX) and m receivers (RX) form n × m TX-RX pairs. A signal from one transmitter is received by multiple receivers, producing phase differences among the TX-RX combinations. Each phase difference corresponds to a path-length difference; combined with the known relative positions of the TX-RX antenna pairs, this resolves the direction of a target. To distinguish signals from different transmitters, the transmitted waveforms are mutually orthogonal.
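As a rough illustration of the three relations above, the sketch below converts a round-trip delay to range, a Doppler shift to radial velocity, and a phase difference between two adjacent receive elements to an arrival angle. The function names, the 77 GHz carrier, and the half-wavelength element spacing are assumptions chosen for illustration, not parameters of the hardware described here.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def range_from_delay(tau_s):
    """Range from round-trip delay: the signal travels out and back."""
    return C * tau_s / 2.0


def radial_velocity_from_doppler(f_d_hz, carrier_hz):
    """Radial velocity from Doppler shift, v = lambda * f_d / 2.

    Sign convention (an assumption): positive f_d means the target approaches.
    """
    wavelength = C / carrier_hz
    return wavelength * f_d_hz / 2.0


def angle_from_phase(delta_phi_rad, spacing_m, carrier_hz):
    """Arrival angle from the phase difference between two adjacent elements.

    Path-length difference is d * sin(theta), so
    delta_phi = 2 * pi * d * sin(theta) / lambda.
    """
    wavelength = C / carrier_hz
    s = wavelength * delta_phi_rad / (2.0 * math.pi * spacing_m)
    s = max(-1.0, min(1.0, s))  # guard against rounding just outside [-1, 1]
    return math.asin(s)


if __name__ == "__main__":
    carrier = 77e9                      # assumed automotive band
    spacing = (C / carrier) / 2.0       # assumed half-wavelength spacing
    print(range_from_delay(1e-6))       # delay of one microsecond
    print(radial_velocity_from_doppler(5_000.0, carrier))
    print(math.degrees(angle_from_phase(math.pi / 2, spacing, carrier)))
```

With half-wavelength spacing, a phase difference of π/2 corresponds to sin θ = 0.5, that is, an arrival angle of about 30 degrees.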

Methods to improve measurement accuracy

Improvements fall into hardware and software approaches. On the hardware side, cascading multiple modules is the common way to increase the number of TX-RX pairs and thus the angular resolution; this is straightforward but increases size and power consumption. The hardware shown above is a 4-module cascade. Integrating more antennas on a single chip is another path that could replace cascading, but mutual interference between antennas grows with density, and this approach remains under active investigation.
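To make the link between TX-RX pair count and angular resolution concrete, here is a minimal sketch that builds the MIMO virtual array as the set of pairwise TX + RX positions and applies the rule of thumb θ ≈ λ / (N·d) at boresight. The antenna layouts (3 TX and 4 RX per chip, all elements on one line) are hypothetical; real imaging radars split their elements between azimuth and elevation, so the numbers are only indicative.

```python
import numpy as np


def virtual_array(tx_positions, rx_positions):
    """Virtual element positions: one per TX-RX pair, at tx + rx.

    Positions are 1D coordinates in units of wavelength.
    """
    tx = np.asarray(tx_positions, dtype=float)
    rx = np.asarray(rx_positions, dtype=float)
    return (tx[:, None] + rx[None, :]).ravel()


def approx_resolution_deg(n_elements, spacing_wavelengths=0.5):
    """Rule-of-thumb boresight resolution, theta ~ lambda / (N * d)."""
    return float(np.degrees(1.0 / (n_elements * spacing_wavelengths)))


if __name__ == "__main__":
    # Hypothetical single chip: 4 RX at half-wavelength pitch, 3 TX spaced
    # so the virtual elements tile a uniform 12-element line.
    single = virtual_array(tx_positions=[0.0, 2.0, 4.0],
                           rx_positions=[0.0, 0.5, 1.0, 1.5])
    # Hypothetical 4-chip cascade laid out on one line: 12 TX x 16 RX.
    cascade = virtual_array(tx_positions=np.arange(12) * 8.0,
                            rx_positions=np.arange(16) * 0.5)
    for name, arr in (("single chip", single), ("4-chip cascade", cascade)):
        n = np.unique(arr).size
        print(name, n, "virtual elements,",
              round(approx_resolution_deg(n), 2), "deg")
```

The element count grows as the product of TX and RX counts, which is why cascading is an effective, if bulky, way to sharpen angular resolution.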

On the software side, virtual aperture imaging enlarges the effective aperture computationally to improve angular resolution; it is often combined with hardware cascading to reduce interference. Super-resolution algorithms are also used to build new signal processing pipelines whose precision exceeds that of FFT-based methods.

Noise characteristics and filtering

Image Radar shares its main noise types and processing methods with traditional 3D millimeter-wave radar. The two primary noise sources are speckle noise and multipath reflections, and standard radar filtering techniques such as CFAR filtering and velocity filtering remain applicable.

Speckle noise: the transmitted electromagnetic pulse interacts with objects in the environment and corrupts the received signal. If left unfiltered, this produces speckle-like scatterings of spurious points.
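For readers unfamiliar with CFAR, the following is a minimal one-dimensional cell-averaging CFAR (CA-CFAR) sketch. It is one common CFAR variant, not necessarily the one used in any particular Image Radar; the window sizes and false-alarm probability are assumed values, and the threshold scaling assumes exponentially distributed noise power (square-law detector).

```python
import numpy as np


def ca_cfar_1d(power, num_train=8, num_guard=2, pfa=1e-3):
    """Cell-averaging CFAR over a 1D power profile.

    For each cell under test, the noise level is the mean of `num_train`
    training cells on each side, skipping `num_guard` guard cells next to
    the cell. Edge cells without a full window are never flagged.
    """
    power = np.asarray(power, dtype=float)
    n = power.size
    n_train_total = 2 * num_train
    # Threshold factor for CA-CFAR with exponentially distributed noise power.
    alpha = n_train_total * (pfa ** (-1.0 / n_train_total) - 1.0)
    half = num_train + num_guard
    detections = np.zeros(n, dtype=bool)
    for i in range(half, n - half):
        lead = power[i - half: i - num_guard]          # cells before the guard band
        lag = power[i + num_guard + 1: i + half + 1]   # cells after the guard band
        noise = (lead.sum() + lag.sum()) / n_train_total
        detections[i] = power[i] > alpha * noise
    return detections


if __name__ == "__main__":
    profile = np.ones(64)
    profile[32] = 100.0  # one strong synthetic target on a flat noise floor
    print(np.flatnonzero(ca_cfar_1d(profile)))
```

Because the threshold adapts to the local noise estimate, the same detector setting works across range bins with very different clutter levels, which is the reason CFAR is preferred over a fixed threshold.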

Multipath reflections: the same object can produce multiple return paths. Besides the direct return, signals reflected by walls or the ground appear as ghost objects located underground or behind walls, acting as static outliers.

Data Formats: 4D Tensor vs Point Cloud

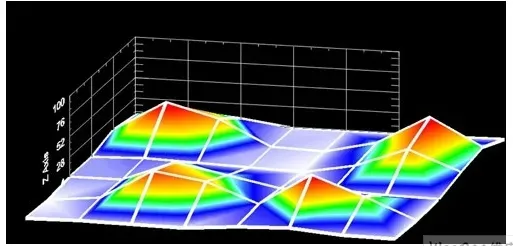

Radar outputs are typically sparse point clouds with velocity measurements. Upstream of the point cloud, the radar holds a denser 4D tensor representation that carries richer information about target characteristics. In perception research, several works discuss feeding 4D tensors directly to algorithms.
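The relationship between the two formats can be sketched as a thresholding step: cells of the tensor whose power exceeds a threshold become points. The axis order (range, azimuth, elevation, Doppler), the fixed threshold, and the function name below are assumptions for illustration; actual sensors differ in layout and usually apply CFAR rather than a fixed threshold.

```python
import numpy as np


def tensor_to_points(tensor, ranges, azimuths, elevations, dopplers, threshold):
    """Convert a dense 4D power tensor into a sparse point cloud.

    Assumed tensor layout: [range, azimuth, elevation, doppler].
    Returns an (N, 5) array of x, y, z, radial velocity, power.
    """
    tensor = np.asarray(tensor, dtype=float)
    idx = np.argwhere(tensor > threshold)        # (N, 4) bin indices
    r = np.asarray(ranges, dtype=float)[idx[:, 0]]
    az = np.asarray(azimuths, dtype=float)[idx[:, 1]]
    el = np.asarray(elevations, dtype=float)[idx[:, 2]]
    v = np.asarray(dopplers, dtype=float)[idx[:, 3]]
    # Spherical to Cartesian, x forward, y left, z up (assumed convention).
    x = r * np.cos(el) * np.cos(az)
    y = r * np.cos(el) * np.sin(az)
    z = r * np.sin(el)
    power = tensor[tuple(idx.T)]
    return np.column_stack([x, y, z, v, power])


if __name__ == "__main__":
    t = np.zeros((4, 3, 3, 5))
    t[2, 1, 1, 3] = 10.0  # one synthetic return
    pts = tensor_to_points(
        t,
        ranges=[10.0, 20.0, 30.0, 40.0],
        azimuths=[-0.1, 0.0, 0.1],
        elevations=[-0.1, 0.0, 0.1],
        dopplers=[-2.0, -1.0, 0.0, 1.0, 2.0],
        threshold=1.0,
    )
    print(pts)
```

Everything below the threshold is discarded in this step, which is why the tensor retains information that the point cloud loses.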

Algorithm Approaches for Image Radar SLAM

Recent Image Radar SLAM approaches focus on leveraging Doppler velocity measurements to compensate for the sparsity of radar point clouds. The core idea is that reflections from static scene points provide Doppler measurements that reflect the vehicle's motion; therefore each static point offers an observation of vehicle velocity, which is a strong and useful constraint.
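The idea that each static point observes the vehicle velocity can be sketched as a linear least-squares problem. For a static point with unit direction d seen from the sensor, the measured radial velocity is v_r = -d · v, where v is the sensor velocity in the sensor frame. The sign convention (positive v_r means the point is receding) and the function name are assumptions; this is an illustrative sketch, not the estimator of any specific paper.

```python
import numpy as np


def estimate_ego_velocity(points, radial_velocities):
    """Least-squares sensor velocity from Doppler readings of static points.

    points: (N, 3) positions in the sensor frame, N >= 3, not all
            lying in directions that span fewer than three dimensions.
    radial_velocities: (N,) measured radial velocities, positive = receding.
    """
    points = np.asarray(points, dtype=float)
    v_r = np.asarray(radial_velocities, dtype=float)
    directions = points / np.linalg.norm(points, axis=1, keepdims=True)
    # Each row gives one equation: directions[i] @ v = -v_r[i].
    v_ego, *_ = np.linalg.lstsq(directions, -v_r, rcond=None)
    return v_ego


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    pts = rng.uniform(-20.0, 20.0, size=(50, 3))
    true_v = np.array([5.0, 0.5, -0.1])
    dirs = pts / np.linalg.norm(pts, axis=1, keepdims=True)
    measured = -(dirs @ true_v) + rng.normal(0.0, 0.05, size=50)
    print(estimate_ego_velocity(pts, measured))
```

Note that the equations involve only point directions and Doppler values, not the scene geometry between frames, which is what makes the constraint useful when the point cloud is too sparse for reliable registration.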

For example, the DICP algorithm derives an observation equation based on Doppler velocity that depends only on the measured point velocity and not on the scene structure, mitigating ICP degeneracy in unstructured environments. The approach combines ICP constraints and Doppler velocity constraints for state estimation.

The 4D iRIOM approach fuses Image Radar with IMU information in a framework similar to lidar-inertial odometry (LIO). It estimates vehicle velocity from multiple static point measurements and uses that as an observation for the vehicle velocity state; the core insight is again that Doppler measurements of static points measure vehicle velocity.

Compared with lidar SLAM, Image Radar SLAM requires special handling of radar-specific noise and careful static-point selection. Using IMU or wheel odometry to help select static points is practical in engineering applications.
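One simple way to use IMU or wheel odometry for static-point selection is to predict the Doppler value each point would have if it were static, then keep the points whose measurement agrees with the prediction. The sketch below assumes a velocity prior expressed in the sensor frame, the convention v_r = -d · v (positive means receding), and a hypothetical 0.3 m/s gate; all three are illustrative choices.

```python
import numpy as np


def select_static_points(points, radial_velocities, v_prior, threshold=0.3):
    """Boolean mask of points whose Doppler matches a static-world prediction.

    points: (N, 3) positions in the sensor frame.
    radial_velocities: (N,) measured radial velocities, positive = receding.
    v_prior: (3,) sensor velocity predicted from IMU or wheel odometry.
    threshold: gate on the absolute Doppler residual in m/s (assumed value).
    """
    points = np.asarray(points, dtype=float)
    v_r = np.asarray(radial_velocities, dtype=float)
    v_prior = np.asarray(v_prior, dtype=float)
    directions = points / np.linalg.norm(points, axis=1, keepdims=True)
    expected = -(directions @ v_prior)   # Doppler a static point would show
    return np.abs(v_r - expected) < threshold


if __name__ == "__main__":
    pts = np.array([[10.0, 0.0, 0.0], [0.0, 10.0, 0.0],
                    [10.0, 10.0, 0.0], [5.0, -5.0, 1.0]])
    v = np.array([5.0, 0.0, 0.0])
    dirs = pts / np.linalg.norm(pts, axis=1, keepdims=True)
    v_r = -(dirs @ v)
    v_r[2] += 3.0                        # third point belongs to a moving object
    print(select_static_points(pts, v_r, v_prior=v))
```

Ghost points from multipath and returns from moving objects both tend to fail this gate, so the same check doubles as an outlier filter before velocity estimation.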

Image Radar reflection characteristics differ from most lidars, presenting additional challenges. Metallic objects often produce strong radar returns while plastics and concrete reflect poorly; ground returns are frequently treated as clutter and filtered out. These differences lead to point cloud distributions that differ significantly from lidar. Given this, some solutions discard point cloud geometry and rely only on Doppler velocity measurements combined with IMU and wheel odometry to implement an enhanced dead-reckoning approach, which can be practical and computationally efficient.
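A minimal planar sketch of such a dead-reckoning step is shown below: the body-frame velocity is taken from the radar Doppler estimate, the yaw rate from a gyroscope, and the pose is integrated with a midpoint heading. The 2D simplification, the function name, and the integration scheme are assumptions for illustration; a practical system would typically wrap this in a filter that also fuses wheel odometry.

```python
import math


def dead_reckon(pose, v_body, yaw_rate, dt):
    """Advance a planar pose (x, y, yaw) by one time step.

    v_body: (vx, vy) velocity in the body frame, e.g. from radar Doppler.
    yaw_rate: angular rate in rad/s, e.g. from an IMU gyroscope.
    """
    x, y, yaw = pose
    vx, vy = v_body
    yaw_mid = yaw + 0.5 * yaw_rate * dt   # heading at the middle of the step
    x += (vx * math.cos(yaw_mid) - vy * math.sin(yaw_mid)) * dt
    y += (vx * math.sin(yaw_mid) + vy * math.cos(yaw_mid)) * dt
    yaw += yaw_rate * dt
    return (x, y, yaw)


if __name__ == "__main__":
    pose = (0.0, 0.0, 0.0)
    steps = 1000
    dt = 2.0 * math.pi / steps
    # Constant 1 m/s forward speed and 1 rad/s yaw rate: one full circle.
    for _ in range(steps):
        pose = dead_reckon(pose, v_body=(1.0, 0.0), yaw_rate=1.0, dt=dt)
    print(pose)
```

Because no point cloud registration is involved, the per-frame cost is a handful of arithmetic operations, at the price of drift that grows with distance travelled.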

Despite the challenges, Image Radar is a low-cost sensor that provides depth and velocity measurements and remains a viable candidate for SLAM research and application.