Overview

Applications such as AR, VR, robotics, drones, and autonomous vehicles all require accurate spatial awareness. SLAM is one of the core techniques that lets a device localize itself and build a map of an unknown environment. A common analogy: an autonomous vehicle or robot without SLAM is like a smartphone without Wi-Fi or mobile data.

What is SLAM

SLAM stands for Simultaneous Localization and Mapping. It addresses the problem of how a robot moving in an unknown environment can estimate its own trajectory from observations while building a map of that environment. SLAM encompasses several technical components, including feature extraction, data association, state estimation, state update, and landmark update.

A simplified explanation is: when you enter an unfamiliar environment and want to navigate quickly, you typically do the following steps simultaneously:

- Observe nearby landmarks such as buildings, trees, or signs and remember their features (feature extraction).

- Reconstruct those landmarks in a 3D mental map using binocular cues if available (3D reconstruction).

- While walking, continuously acquire new landmarks and correct the internal map model (bundle adjustment or EKF).

- Estimate your current position based on landmarks observed earlier (trajectory estimation).

- Optionally, when you return to a previously visited location, match current observations to past landmarks (loop-closure detection).

Because these steps run together, the approach is called simultaneous localization and mapping.

Sensors and the Visual SLAM Framework

SLAM implementation and difficulty are closely related to the sensors used and how they are mounted. Sensors generally fall into two broad categories: LiDAR and vision. Vision-based approaches further split into monocular, stereo (or multi-camera), and RGB-D. Below is a summary of common sensor types and their characteristics.

1. LiDAR

LiDAR is the longest-established and most extensively studied SLAM sensor. It measures the distance between the robot and surrounding obstacles. Units from manufacturers such as SICK and Velodyne, as well as lower-cost devices like the RPLIDAR, can be used for SLAM. LiDAR measures the angle and distance to obstacle points with high accuracy and at relatively low computational cost, which makes real-time SLAM and obstacle avoidance easier to implement.

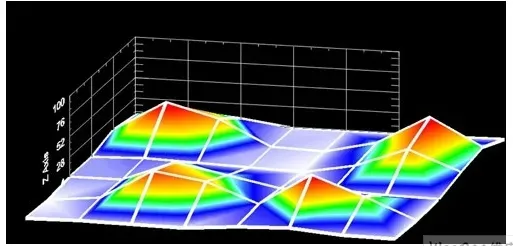

2D planar LiDAR sensors scan obstacles in a single plane and are suitable for robots constrained to planar motion, such as vacuum robots, producing 2D occupancy maps useful for navigation. Early SLAM work predominantly used LiDAR and filter-based methods such as Kalman filters and particle filters.
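To make the link between a planar scan and a 2D occupancy map concrete, here is a minimal sketch that marks the grid cell hit by each beam. The function name, the `(x, y, theta)` pose convention, and the parameter names are assumptions for illustration; a real mapper would also trace free space along each beam and accumulate probabilities over many scans.

```python
import math

def scan_to_occupied_cells(ranges, angle_min, angle_step, pose, resolution, max_range):
    """Convert one 2D LiDAR scan into the set of occupied grid cells.

    pose is the sensor pose (x, y, theta) in the map frame (metres, radians).
    resolution is the cell size in metres. Cells are (col, row) indices.
    """
    x, y, theta = pose
    cells = set()
    for i, r in enumerate(ranges):
        # Skip invalid or out-of-range returns: no obstacle was detected.
        if not (0.0 < r < max_range):
            continue
        beam_angle = theta + angle_min + i * angle_step
        # Beam endpoint expressed in the map frame.
        hit_x = x + r * math.cos(beam_angle)
        hit_y = y + r * math.sin(beam_angle)
        cells.add((math.floor(hit_x / resolution), math.floor(hit_y / resolution)))
    return cells

# Three beams at 0 and 90 degrees plus one with no return.
scan = [1.0, 2.0, float("inf")]
cells = scan_to_occupied_cells(scan, angle_min=0.0, angle_step=math.pi / 2,
                               pose=(0.05, 0.05, 0.0), resolution=0.1,
                               max_range=10.0)
```

The beam with no return is ignored, so only two cells are marked as occupied.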

Advantages of LiDAR include high accuracy, high speed, and a modest computational load for real-time operation. The main disadvantage is high sensor cost, which has driven research into cheaper units. From a theoretical perspective, EKF-SLAM research for LiDAR is mature, but EKF approaches have limitations: loop closures are difficult to represent, linearization introduces errors, and the landmark covariance matrix grows quadratically with the number of landmarks and is costly to maintain.

2. Visual SLAM

Visual SLAM has been a major research focus since the early 2000s. Vision is intuitive: humans navigate using sight, so applying cameras to robots seems natural. Improvements in CPU and GPU performance have enabled many visual algorithms to run in real time at frame rates above 10 Hz, which has accelerated visual SLAM development.

Visual SLAM can be categorized into monocular, stereo/multi-camera, and RGB-D approaches. Specialized cameras such as fisheye or omnidirectional cameras exist but are less common. Combining cameras with inertial measurement units (IMUs) is also an active research direction. In terms of implementation difficulty, monocular is generally harder than stereo, which is harder than RGB-D.

Monocular SLAM uses a single camera; MonoSLAM is a well-known early system of this kind. Its main advantage is sensor simplicity and low cost. The primary challenge is that absolute depth is not directly observable, so monocular SLAM recovers the map and trajectory only up to an unknown scale factor. Monocular methods therefore estimate relative depth and operate in the similarity transform space Sim(3) rather than the rigid-motion space SE(3). An external sensor such as GPS or an IMU is needed to fix the metric scale.
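The scale ambiguity can be checked numerically. The sketch below assumes a simple pinhole camera with no rotation and a focal length of 500 pixels (both illustrative choices): scaling the scene and the camera translation by the same factor leaves every projected pixel unchanged, so images alone cannot reveal the factor.

```python
def project(point, cam_position, focal_px=500.0):
    """Pinhole projection of a 3D point for a camera at cam_position.

    The camera is not rotated (an assumption to keep the sketch short).
    Returns pixel coordinates relative to the principal point.
    """
    x = point[0] - cam_position[0]
    y = point[1] - cam_position[1]
    z = point[2] - cam_position[2]
    return (focal_px * x / z, focal_px * y / z)

def scaled(vector, s):
    """Multiply every component of a 3D vector by s."""
    return tuple(s * c for c in vector)

point = (0.5, -0.2, 4.0)   # one landmark
cam1 = (0.0, 0.0, 0.0)     # first camera position
cam2 = (0.3, 0.0, 0.0)     # second camera position after moving sideways
s = 7.0                    # arbitrary global scale factor

original = [project(point, cam1), project(point, cam2)]
scaled_world = [project(scaled(point, s), scaled(cam1, s)),
                project(scaled(point, s), scaled(cam2, s))]

# Largest pixel difference between the two worlds (zero up to rounding).
max_pixel_diff = max(abs(a - b)
                     for p, q in zip(original, scaled_world)
                     for a, b in zip(p, q))
```

Both worlds produce the same two images, which is why a metric reference from another sensor is needed.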

Monocular SLAM relies on motion-induced triangulation to estimate depth, so the camera must translate before the map and trajectory can converge. Pure rotation provides no parallax for depth estimation, which can cause monocular initialization and tracking to fail. On the positive side, a monocular camera has no fixed baseline or depth range, so the same setup works at any scene scale, both indoors and outdoors.

Stereo cameras triangulate 3D points from the disparity between two views separated by a known baseline. Stereo can estimate depth both while moving and while stationary, avoiding many monocular limitations. Stereo setup and calibration are more complex, and the usable depth range depends on baseline and image resolution. Disparity computation is computationally intensive and is often offloaded to dedicated hardware such as FPGAs.
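For a rectified stereo pair, depth follows from disparity as Z = f * B / d, where f is the focal length in pixels, B the baseline in metres, and d the disparity in pixels. The sketch below uses assumed values (f = 700 px, B = 0.12 m) and also shows the first-order depth error for a one-pixel disparity error, which illustrates why range depends on baseline and resolution.

```python
def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Depth Z = f * B / d for a rectified stereo pair."""
    if disparity_px <= 0:
        return float("inf")  # zero disparity: point at infinity or no match
    return focal_px * baseline_m / disparity_px

def depth_error(disparity_px, focal_px, baseline_m, disparity_err_px=1.0):
    """First-order depth error: dZ ~ Z^2 / (f * B) * dd."""
    z = depth_from_disparity(disparity_px, focal_px, baseline_m)
    return z * z / (focal_px * baseline_m) * disparity_err_px

FOCAL_PX = 700.0    # assumed focal length in pixels
BASELINE_M = 0.12   # assumed baseline in metres

near = depth_from_disparity(42.0, FOCAL_PX, BASELINE_M)   # about 2 m
far = depth_from_disparity(4.2, FOCAL_PX, BASELINE_M)     # about 20 m
near_err = depth_error(42.0, FOCAL_PX, BASELINE_M)
far_err = depth_error(4.2, FOCAL_PX, BASELINE_M)
```

The error grows with the square of depth, so a point ten times farther away is about a hundred times less certain for the same disparity error.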

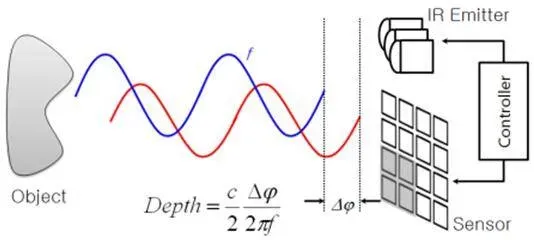

RGB-D cameras emerged around 2010 and directly provide per-pixel depth using structured infrared light or time-of-flight principles. RGB-D sensors like Kinect and Xtion supply richer information without expensive depth computation. However, they typically have limited range, higher noise, and narrower field of view, so RGB-D SLAM is mainly used indoors.

Visual Odometry and SLAM Framework Components

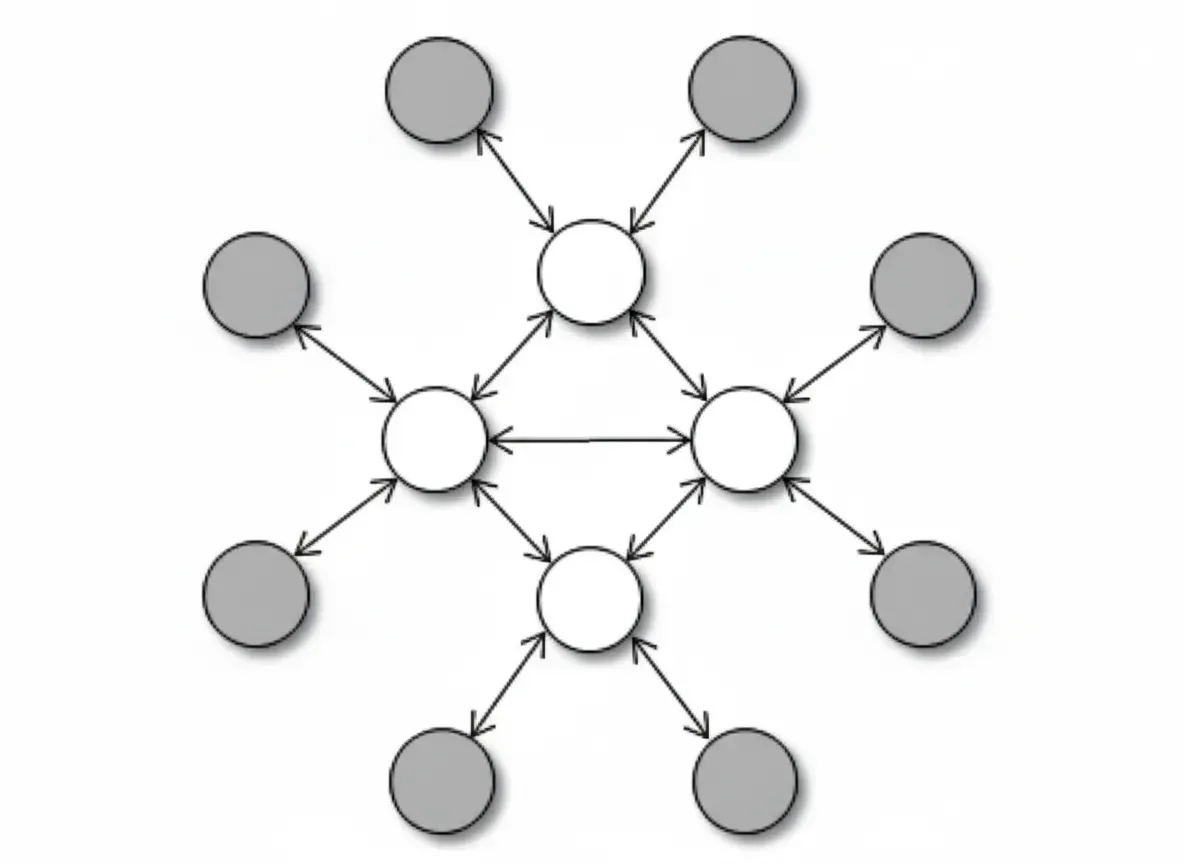

Most visual SLAM systems share a common framework. Excluding sensor input, a SLAM system often comprises four modules: visual odometry (VO), the back end, mapping, and loop-closure detection.

Visual Odometry

Visual odometry estimates the relative motion, or ego-motion, between frames. For LiDAR, one can match the current scan to a global map and solve for relative motion using ICP. For cameras, motion is represented by a 3D transform in SE(3), or in Sim(3) for monocular systems, and computing this transform is the core VO problem. VO approaches divide into feature-based methods and direct methods, which work on pixel intensities without extracting features.
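ICP alternates between associating points and solving for the rigid transform that best aligns the associated pairs. The sketch below shows only the alignment step in 2D, under the assumption that correspondences are already known; the function name and the sample points are illustrative.

```python
import math

def align_2d(src, dst):
    """Least-squares rigid transform (theta, tx, ty) mapping src onto dst.

    src and dst are equal-length lists of (x, y) points, with src[i]
    assumed to correspond to dst[i].
    """
    n = len(src)
    src_cx = sum(p[0] for p in src) / n
    src_cy = sum(p[1] for p in src) / n
    dst_cx = sum(q[0] for q in dst) / n
    dst_cy = sum(q[1] for q in dst) / n

    cos_sum = 0.0
    sin_sum = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        ax, ay = px - src_cx, py - src_cy   # centred source point
        bx, by = qx - dst_cx, qy - dst_cy   # centred target point
        cos_sum += ax * bx + ay * by        # dot product
        sin_sum += ax * by - ay * bx        # 2D cross product
    theta = math.atan2(sin_sum, cos_sum)

    # Translation maps the rotated source centroid onto the target centroid.
    c, s = math.cos(theta), math.sin(theta)
    tx = dst_cx - (c * src_cx - s * src_cy)
    ty = dst_cy - (s * src_cx + c * src_cy)
    return theta, tx, ty

# Build a target scan by moving a source scan with a known motion.
true_theta, true_tx, true_ty = 0.1, 0.5, -0.2
source = [(1.0, 0.0), (2.0, 1.0), (0.0, 3.0), (-1.0, 1.5)]
target = [(math.cos(true_theta) * x - math.sin(true_theta) * y + true_tx,
           math.sin(true_theta) * x + math.cos(true_theta) * y + true_ty)
          for x, y in source]

theta, tx, ty = align_2d(source, target)
```

A full ICP loop would re-associate each point with its nearest neighbour after every alignment and repeat until the transform stops changing.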

Feature Matching

Feature-based VO is the mainstream approach. Feature points are extracted from two images and matched, and the matches are used to compute the camera transform. Common point features include Harris corners, SIFT, SURF, and ORB. With RGB-D cameras, the known depth at each feature lets motion be estimated from 3D correspondences without triangulation. Given matched 3D-2D correspondences, pose estimation reduces to the Perspective-n-Point (PnP) problem, which is typically solved or refined by nonlinear optimization of the reprojection error to obtain inter-frame poses.
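The matching step can be sketched for binary descriptors such as ORB, which are compared with the Hamming distance. The example below uses toy 8-bit integers in place of real 256-bit descriptors, and the `max_distance` threshold is an assumed value; it keeps only mutual nearest neighbours (a cross-check) that are close enough.

```python
def hamming(a, b):
    """Number of differing bits between two binary descriptors."""
    return bin(a ^ b).count("1")

def nearest(descriptor, candidates):
    """Index of the candidate with the smallest Hamming distance."""
    return min(range(len(candidates)),
               key=lambda j: hamming(descriptor, candidates[j]))

def match_descriptors(desc_a, desc_b, max_distance=2):
    """Brute-force matching with cross-check and a distance threshold.

    Returns a list of (index_in_a, index_in_b, distance) tuples.
    """
    matches = []
    for i, d in enumerate(desc_a):
        j = nearest(d, desc_b)
        distance = hamming(d, desc_b[j])
        # Keep the pair only if it is mutual and sufficiently similar.
        if nearest(desc_b[j], desc_a) == i and distance <= max_distance:
            matches.append((i, j, distance))
    return matches

# Toy descriptors for two frames (real ORB descriptors have 256 bits).
frame1 = [0b10110010, 0b01001101, 0b11110000]
frame2 = [0b11110001, 0b10110011, 0b00000111]

matches = match_descriptors(frame1, frame2)
```

The second descriptor of `frame1` has a mutual nearest neighbour, but the pair differs in three bits and is rejected by the threshold. The surviving matches would then feed a pose solver such as PnP.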

Direct Methods

Direct methods incorporate image pixel intensities directly into the pose estimation objective, minimizing photometric error instead of matching features. For RGB-D, the depth data also allows point clouds to be aligned with ICP to solve for the transform. For monocular systems, pixels can be matched between frames or against a global model. Representative systems include SVO, which is semi-direct, and LSD-SLAM. Dense direct methods can be computationally heavy, and because direct methods rely on small inter-frame motion they generally need higher frame rates than feature-based VO.
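The idea of optimizing over pixel intensities can be shown with a deliberately reduced sketch: a single image row and a 1D pixel shift stand in for the full image and the 6-DoF pose. The intensities and the exhaustive search are assumptions for illustration; real systems warp the image with a pose and depth and minimize the error with gradient-based optimization.

```python
def photometric_error(ref, cur, shift):
    """Mean squared intensity difference between ref[i] and cur[i + shift].

    Only pixels that land inside the current row are counted.
    """
    total = 0.0
    count = 0
    for i, value in enumerate(ref):
        j = i + shift
        if 0 <= j < len(cur):
            diff = cur[j] - value
            total += diff * diff
            count += 1
    return total / count if count else float("inf")

def best_shift(ref, cur, max_shift):
    """Search all integer shifts and return the one with the lowest error."""
    return min(range(-max_shift, max_shift + 1),
               key=lambda s: photometric_error(ref, cur, s))

# One image row before and after the camera moved: the bright blob
# has moved two pixels to the right.
reference_row = [10, 10, 50, 200, 50, 10, 10, 10]
current_row = [10, 10, 10, 10, 50, 200, 50, 10]

shift = best_shift(reference_row, current_row, max_shift=3)
error_at_best = photometric_error(reference_row, current_row, shift)
```

No features are extracted at any point; the motion estimate comes entirely from comparing intensities.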

Back End

Visual odometry accumulates inter-frame estimates, but like other odometry systems, it suffers from drift. Small frame-to-frame errors accumulate, causing significant global deviation over time. The back end refines odometry by processing relative motion estimates to reduce drift.
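Drift is easy to reproduce by chaining relative motions. The sketch below composes planar poses `(x, y, theta)` and assumes a heading error of 0.001 rad per frame (an illustrative value) on a robot that actually drives straight for 10 m.

```python
import math

def compose(pose, delta):
    """Apply a relative motion, given in the robot frame, to a planar pose."""
    x, y, theta = pose
    dx, dy, dtheta = delta
    return (x + dx * math.cos(theta) - dy * math.sin(theta),
            y + dx * math.sin(theta) + dy * math.cos(theta),
            theta + dtheta)

def integrate(deltas):
    """Chain relative motions starting from the origin."""
    pose = (0.0, 0.0, 0.0)
    for delta in deltas:
        pose = compose(pose, delta)
    return pose

STEPS = 100
true_motion = [(0.1, 0.0, 0.0)] * STEPS       # 0.1 m forward per frame
estimated = [(0.1, 0.0, 0.001)] * STEPS       # same, plus a tiny heading bias

true_end = integrate(true_motion)
estimated_end = integrate(estimated)
drift = math.hypot(estimated_end[0] - true_end[0],
                   estimated_end[1] - true_end[1])
```

After 100 frames the estimated end point is off by roughly half a metre, even though each individual frame-to-frame error is far too small to notice.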

Early SLAM back ends used filter-based approaches. The original SLAM formulation by R. Smith and colleagues cast SLAM as an Extended Kalman Filter (EKF) problem with motion and observation models. Incoming frame motion provided a prediction step with noise, while landmark observations provided update steps, leading to iterative prediction and correction.
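The predict and update cycle can be sketched with a one-dimensional Kalman filter. This is a linear, scalar simplification with assumed noise values; an EKF applies the same two steps to nonlinear motion and observation models by linearizing them with Jacobians.

```python
def predict(mean, var, motion, motion_var):
    """Prediction step: apply the motion and add its noise."""
    return mean + motion, var + motion_var

def update(mean, var, measurement, meas_var):
    """Update step: blend the prediction with a position measurement."""
    gain = var / (var + meas_var)          # Kalman gain
    new_mean = mean + gain * (measurement - mean)
    new_var = (1.0 - gain) * var
    return new_mean, new_var

# Robot position on a line, initially uncertain.
mean, var = 0.0, 1.0

# Odometry reports a 1.0 m move; uncertainty grows.
pred_mean, pred_var = predict(mean, var, motion=1.0, motion_var=0.5)

# An observation places the robot at 1.2 m; uncertainty shrinks.
post_mean, post_var = update(pred_mean, pred_var, measurement=1.2, meas_var=0.5)
```

The variance rises from 1.0 to 1.5 after prediction and falls to 0.375 after the update, which is the iterative prediction and correction pattern described above.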

Later work adopted bundle adjustment from Structure-from-Motion, replacing recursive filtering with batch or incremental optimization. Bundle adjustment considers multiple frames jointly and distributes errors across observations. Represented as graph optimization, this approach leverages sparse linear algebra for efficient solutions and handles loop closures naturally, making it the dominant back-end method in modern visual SLAM.
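How optimization distributes error can be seen in a tiny one-dimensional pose graph. The sketch below assumes four poses on a line, three odometry edges that each overestimate the step by 0.1 m, and one loop-closure edge, all with equal weight. It builds the normal equations and solves them with plain Gaussian elimination; real back ends use sparse solvers and nonlinear iterations.

```python
def solve_linear(a, b):
    """Solve a small dense system with Gaussian elimination and pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x

def optimize_pose_graph(num_poses, edges):
    """Least-squares poses for edges (i, j, z) meaning x_j - x_i should be z.

    Pose 0 is fixed at 0 to anchor the graph.
    """
    n = num_poses - 1                     # unknowns x_1 .. x_n
    h = [[0.0] * n for _ in range(n)]     # J^T J
    g = [0.0] * n                         # J^T z
    for i, j, z in edges:
        # Residual x_j - x_i - z has Jacobian -1 for x_i and +1 for x_j.
        terms = [(i, -1.0), (j, 1.0)]
        for a_idx, ja in terms:
            if a_idx == 0:
                continue
            g[a_idx - 1] += ja * z
            for c_idx, jc in terms:
                if c_idx == 0:
                    continue
                h[a_idx - 1][c_idx - 1] += ja * jc
    return [0.0] + solve_linear(h, g)

odometry = [(0, 1, 1.1), (1, 2, 1.1), (2, 3, 1.1)]   # drifting estimates
loop_closure = [(0, 3, 3.0)]                          # direct measurement
poses = optimize_pose_graph(4, odometry + loop_closure)
```

Chaining odometry alone would put the last pose at 3.3 m. With the loop-closure edge, the 0.3 m disagreement is spread evenly over all four constraints instead of piling up at the end of the trajectory.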

Loop-Closure Detection

Loop-closure detection identifies when the robot revisits the same place, enabling large reductions in accumulated error. Loop detection is essentially a similarity-detection problem for sensor observations. Visual SLAM commonly uses Bag-of-Words (BoW) models that cluster visual features (SIFT, SURF, etc.) into a dictionary and represent images as word histograms. Some approaches formulate loop detection as a classification problem with trained classifiers.
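A Bag-of-Words comparison can be sketched as follows. The word IDs are assumed to come from quantizing each feature descriptor to its nearest dictionary entry, a step omitted here; the six-word vocabulary and the cosine score are illustrative choices, and practical systems often add TF-IDF weighting.

```python
import math
from collections import Counter

def bow_histogram(word_ids, vocab_size):
    """Count how often each visual word occurs in one image."""
    counts = Counter(word_ids)
    return [counts.get(word, 0) for word in range(vocab_size)]

def cosine_similarity(h1, h2):
    """Similarity in [0, 1] between two word histograms."""
    dot = sum(a * b for a, b in zip(h1, h2))
    norm1 = math.sqrt(sum(a * a for a in h1))
    norm2 = math.sqrt(sum(b * b for b in h2))
    return dot / (norm1 * norm2) if norm1 and norm2 else 0.0

VOCAB_SIZE = 6  # toy dictionary; real vocabularies are far larger

current = bow_histogram([0, 0, 3, 5, 5, 5], VOCAB_SIZE)
revisit = bow_histogram([5, 0, 5, 3, 0, 5], VOCAB_SIZE)    # same words, new order
elsewhere = bow_histogram([1, 1, 2, 4, 4, 2], VOCAB_SIZE)  # different words

score_revisit = cosine_similarity(current, revisit)
score_elsewhere = cosine_similarity(current, elsewhere)
```

Because the histogram ignores where features appear in the image, the revisited view scores near 1 and the unrelated view scores 0. A loop candidate would normally still be verified geometrically before being accepted.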

Loop detection errors can severely degrade maps. The two main error types are false positives (perceptual aliasing), where different places are mistaken for the same place, and false negatives (perceptual variability), where the same place is not recognized. A good loop detector aims to maximize true positives while minimizing false positives. Precision-recall curves are commonly used to assess performance.
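Precision and recall follow directly from these error counts. The sketch below assumes loop closures are represented as `(query_frame, matched_frame)` pairs and uses made-up frame numbers; sweeping the detector's acceptance threshold and recomputing both values would trace out a precision-recall curve.

```python
def precision_recall(detections, ground_truth):
    """Precision and recall for sets of (query_frame, matched_frame) pairs."""
    true_pos = len(detections & ground_truth)
    false_pos = len(detections - ground_truth)   # perceptual aliasing
    false_neg = len(ground_truth - detections)   # missed revisits
    reported = true_pos + false_pos
    actual = true_pos + false_neg
    precision = true_pos / reported if reported else 1.0
    recall = true_pos / actual if actual else 1.0
    return precision, recall

# Illustrative data: four real loop closures, three reported by the detector.
ground_truth = {(100, 5), (101, 6), (250, 40), (251, 41)}
detections = {(100, 5), (101, 6), (180, 20)}

precision, recall = precision_recall(detections, ground_truth)
```

Here two of three reported loops are correct (precision 2/3) and two of four real loops are found (recall 1/2). Since a single false positive can corrupt the map, detectors are usually tuned for high precision even at the cost of recall.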

Primary Application Areas for SLAM

SLAM is widely used in drones, autonomous driving, robotics, AR, and smart home devices, among other domains.

Robotics

LiDAR combined with SLAM is the mainstream technology for autonomous robot localization and navigation. Compared with vision and ultrasonic range sensors, LiDAR offers strong directionality and focused measurements, making it reliable and stable for localization. LiDAR sensors gather map data, build maps, and enable path planning and navigation.

Autonomous Driving

Autonomous driving has been a major focus for companies worldwide. Autonomous vehicles use higher-end LiDAR sensors such as Velodyne and IBEO to obtain map data, build detailed maps, detect obstacles, and plan routes. Compared with robotics applications, the sensor performance requirements and costs for autonomous driving are significantly higher.

Unmanned Aerial Vehicles (UAVs)

Drones need to identify obstacles and plan avoidance trajectories during flight, which is a direct application of SLAM. Because flight domains can be large, precision requirements may be lower, and complementary sensors such as optical flow and ultrasonic rangefinders are often used to assist localization.

Augmented Reality (AR)

AR overlays virtual information on the real world in real time. This requires accurate, low-drift pose estimates so virtual objects appear stably fused with the environment. SLAM provides the necessary real-time localization. Although alternative localization methods exist, SLAM is often the preferred approach for robust AR registration.

Key differences between AR SLAM and SLAM for robotics or autonomous driving include:

- Accuracy focus: AR emphasizes local, frame-to-frame accuracy to prevent visible jitter or drift in virtual overlays, while robotics and driving require global trajectory consistency to keep overall drift low and enable loop closure.

- Efficiency: AR must run at interactive frame rates with limited computational resources; a typical target is 30 FPS or higher. Robots often move more slowly, which allows lower frame rates and relaxed computational constraints.

- Hardware constraints: AR applications are more sensitive to size, power, and cost. Robot platforms can carry fisheye, stereo, or depth cameras and high-performance CPUs, while AR must rely on more efficient and robust algorithms to fit within mobile device constraints.

Future Directions

Future SLAM development will emphasize multi-sensor fusion, improved data association and loop detection, integration with heterogeneous front-end processors, and increased robustness and relocalization accuracy. These challenges are likely to be addressed gradually as consumer demand and the supply chain evolve, enabling broader adoption of SLAM in everyday devices, much as gyroscopes became common in smartphones.